Combinatory logic

General

Although combinatory logic has precursors, it was Moses Schönfinkel who first explored combinatory logic as such. Later the work was continued by Haskell B. Curry. Combinatory logic was developed as a theory for the foundation of mathematics [Bun:NatICL], and it has relevance in linguistics too.

Its goal was to understand paradoxes better, and to establish fundamental mathematical concepts on simpler and cleaner principles than the existing mathematical frameworks, especially to understand better the concept of substitution. Its “lack of (bound) variables” relates combinatory logic to the pointfree style of programming. (For contrast, see a very different approach which also enables full elimination of variables: recursive function theory)

General materials:

- Jonathan P. Seldin: Curry’s anticipation of the types used in programming languages (it is also an introduction to illative combinatory logic)

- Jonathan P. Seldin: The Logic of Curry and Church (it is also an introduction to illative combinatory logic)

- Henk Barendregt: The Impact of the Lambda-Calculus in Logic and Computer Science (The Bulletin of Symbolic Logic Volume 3, Number 2, June 1997). Besides theoretical relevance, the article provides implementations of recursive datatypes in CL

- To Dissect a Mockingbird and also Re: category theory <-> lambda calculus?, found on a Lambda the Ultimate site. The links to the To Dissect a Mockingbird site given by these pages seem to be broken, but I found a new one (so the link given here is correct).

Portals and other large-scale resources

Applications

Of course combinatory logic has significance in the foundations of mathematics, or in functional programming, computer science. For example, see Chaitin's construction.

It is interesting that it can be important also in some linguistical theories. See especially the theory of applicative universal grammar, it uses many important thoughts from combinatory logic.

Lojban is an artificial language (and, unlike the more a posteriori Esperanto, it is rather of an a priori taste). It is a human language, capable of expressing everything. Its grammar uses (among others) things taken from mathematical logic, e.g. predicate-like structures. Although its does not make use combinatory logic directly (even, from a category logic / functional programming point of view, it uses also rather imperative ideas), but it may give hints and analogies, how combinatry logic can be useful in linguistics.

Implementing CL

- Talks about it at haskell-cafe haskell-cafe

- Lot of interpreters at John's Lambda Calculus and Combinatory Logic Playground.

- Unlambda resources concerning David Madore's combinatory logic programming language Unlambda

- an implementation of Unlambda in Haskell

- another implementation of Unlambda in Haskell, for use by Lambdabot

- an Unlambda metacircular interpeter

- CL++, a lazy-evaluating combinatory logic interpreter with some computer algebra service: e.g. it can reply the question with instead of a huge amount of parantheses and , combinators. Unfortunately I have not written it directly in English, so all documentations, source code and libraries are in Hungarian. I want to rewrite it using more advanced Haskell programming concepts (e.g. monads or attribute grammars) and directly in English.

Base

Some thoughts on base combinators and on the relatedness of their rules to other topics

- Conal Elliott's reply to thread zips and maps

- Intuitionistic fragment of propositional logic

- Records in function: in set theory and database theory, we regard functions as consisting of more elementary parts, records: a function can be regarded as the set of all its records. A record is a pair of a key and its value, and for functions we expect unicity (and sometimes stress this requirement by writing instead of ).Sometimes I think of as having a taste of record selection: selects a record determinated by key in function (as in a database), and returns the found record (i.e. corresponding key and value) contained in the container (continuation). Is this thought just a toy or can it be brought further? Does it explain why and can constitute a base?

- Also bracket abstraction gives us a natural way to understand the seemingly rather unintuitive and artificial combinator better

Programming in CL

I think many thoughts from John Hughes' Why Functional Programming Matters can be applied to programming in Combinatory Logic. And almost all concepts used in the Haskell world (catamorphisms etc.) help us a lot here too. Combinatory logic is a powerful and concise programming language. I wonder how functional logic programming could be done by using the concepts of Illative combinatory logic, too.

Datatypes

Continuation passing for polynomial datatypes

Direct product

Let us begin with a notion of the ordered pair and denote it by . We know this construct well when defining operations for booleans

and Church numbers. I think, in generally, when defining datatypes in a continuation-passing way (e.g. Maybe or direct sum), then operations on so-defined datatypes often turn to be well-definable by some .

We define it with

in -calculus and

in combinatory logic.

A nice generalization scheme:

- as the construct can be generalized to any natural number (the concept of -tuple, see Barendregt's Calculus)

- and in this generalized scheme corresponds to the 0 case, to the 1 case, and the ordered pair construct to the 2 case, as though defining

so we can write definition

or the same

in a more interesting way:

Is this generalizable? I do not know. I know an analogy in the case of , , , .

Direct sum

The notion of ordered pair mentioned above really enables us to deal with direct products. What about it dual concept? How to make direct sums in Combinatory Logic? And after we have implemented it, how can we see that it is really a dual concept of direct product?

A nice argument described in David Madore's Unlambda page gives us a continuation-passig style like solution. We expect reductions like

so we define

now we translate it from -calculus into combinatory logic:

Of course, we can recognize Haskell's direct sum construct

Either (Left, Right)

implemented in an analogous way.

Maybe

Let us remember Haskell's maybe:

maybe :: a' -> (a -> a') -> Maybe a -> a'

maybe n j Nothing = n

maybe n j (Just x) = j x

thinking of

- n as nothing-continuation

- j as just-continuation

In a continuation passing style approach, if we want to implement something like the Maybe constuct in -calculus, then we may expect the following reductions:

we know both of them well, one is just , and we remember the other too from the direct sum:

thus their definition is

where both and have a common definition.

Maybe2

Haskell:

module Maybe2 (Maybe2, maybe2, nothing2, just2) where

data Maybe2 a b = Nothing2 | Just2 a b

maybe2 :: maybe2ab' -> (a -> b -> maybe2ab') -> Maybe2 a b -> maybe2ab'

maybe2 nothing2Cont _ Nothing2 = nothing2Cont

maybe2 _ just2Cont (Just2 a b) = just2Cont a b

nothing2 :: Maybe2 a b

nothing2 = Nothing2

just2 :: a -> b -> Maybe2 a b

just2 = Just2

Expected reductions:

Other argument orders are possible, too, but they lead to more complicated solutions than the following:

Combinators:

Conjecture for generalisation:

with straightforward generalisations, e.g.

Catamorphisms for recursive datatypes

List

Let us define the concept of list by its catamorphism (see Haskell's foldrfunction):

a list (each concrete list) is a function taking two arguments

- a two-parameter function argument (cons-continuation)

- a zero-parameter function argument (nil-continuation)

and returns a value coming from a term consisting of applying cons-continuations and nil-continuations in the same shape as the correspondig list. E. g. in case of having defined

the expression

reduces to

But how to define and ? In -calculus, we should like to see the following reductions:

Let us think of the variables as denoting head, denoting tail, denoting cons-continuation, and denoting nil-continuation.

Thus, we could achieve this goal with the following definitions:

Using the formulating combinators described in Haskell B. Curry's Combinatory Logic I, we can translate these definitions into combinatory logic without any pain:

Of course we could use the two parameters in the opposite order, but I am not sure yet that it would provide a more easy way.

A little practice: let us define concat. In Haskell, we can do that by

concat = foldr (++) []

which corresponds in cominatory logic to reducing

Let us use the ordered pair (direct product) construct:

and if I use that nasty (see later)

Monads in Combinatory Logic?

Concrete monads

Maybe as a monad

return

Implementing the return monadic method for the Maybe monad is rather straightforward, both in Haskell and CL:

instance Monad Maybe

return = Just

...

in Haskell and

in combinatory logic.

map

Haskell:

instance Functor Maybe where

map f = maybe Nothing (Just . f)

-calculus: Expected reductions:

Definition:

Combinatory logic: we expect the same reduction here too

let us get rid of one parameter:

now we have the definition:

bind

Haskell:

instance Monad Maybe (>>=) where

(>>=) f p = maybe Nothing f

-calculus: we expect

achieved by defintion

In combinatory logic the above expected reduction

getting rid of the outest parameter

yielding definition

and of course

But the other way (starting with a better chosen parameter order) is much better:

yielding the much simplier and more efficient definition:

We know already that can be seen as as a member of the scheme of tuples: for case. As the tupe construction is a usual guest at things like this (we shall meet it at list and other maybe-operations like ), so us express the above definition with denoted as :

hoping that this will enable us some interesting generalization in the future.

But why we have not made a more brave generalization, and express monadic bind from monadic join and map? Later in the list monad, we shall see that it may be better to avoid this for sake of deforestation. Here a maybe similar problem will appear: the problem of superfluous .

join

We should think of changing the architecture if we suspect that we could avoid and solve the problem with a more simple construct.

The list as a monad

Let us think of our list-operations as implementing monadic methods of the list monad. We can express this by definitions too, e.g.

we could name

Now let us see mapping a list, concatenating a list, binding a list. Mapping and binding have a common property: yielding nil for nil. I shall say these operations are centred: their definition would contain a subexpression. Thus I shall give a name to this subexpression:

Now let us define map and bind for lists:

now we see it was worth of defining a common . But to tell the truth, it may be a trap. breaks a symmetry: we should always define the cons and nil part of the foldr construct on the same level, always together. Modularization should be pointed towards this direction, and not to run forward into the T-street of .

Another remark: of course we can get the monadic bind for lists

But we used here. How do we define it? It is surprisingly simple. Let us think how we would define it in Haskell by foldr function, if it was not defined already as ++ defined in Prelude:

In defining

(++) list1 list2

we can do it by foldr:

(++) [] list2 = list2

(++) (a : as) list2 = a : (++) as list2

thus

(++) list1 list2 = foldr (:) list2 list1

let us se how we should reduce its corresponding expression in Combinatory Logic:

thus

Thus, we have defined monadic bind for lists. I shall call this the deforested bind for lists. Of course, we could define it another way too: by concat and map, which corresponds to defining monadic bind from monadic map and monadic join. But I think this way forces my CL-interpreter to manage temporary lists, so I gave rather the deforested definition.

Defining the other monadic operation: return for lists is easy:

instance Monad [] where

return = (: [])

in Haskell -- we know,

(: [])

translates to

return = flip (:) []

so we can see how to do it in combinatory logic:

How to AOP with monads in Combinatory Logic?

We have defined monadic list in CL. Of course we can make monadic Maybe, binary tree, Error monad with direct sum constructs...

But separation of concerns by monads is more than having a bunch of special monads. It requires other possibilities too: e.g. being able to use monads generally, which can become any concrete mondads.

Of course my simple CL interpreter does not know anything on type classes, overloading. But there is a rather restricted andstatic possibility provided by the concept of definition itself:

and later we can change the binding mode named A e.g. from a failure-handling Maybe-like one to a more general indeterminism-handling list-like one, then we can do that simply by replacing definition

with definition

Self-replication, quines, reflective programming

Background

David Madore's Quines (self-replicating programs) and Shin-Cheng Mu's many writings, including a Haskell quine give us wonderful insights on mathematical logic, programming, self-reference. Wikipedia's quine page and John Bethencourt's quine quine. See also the writings of Raymond Smullyan, Hofstadter, also his current research project on a self-watching cognitive architecture, Manfred Eigen and Ruthild Winkler: Laws of the Game / How the Principles of Nature Govern Chance, and Karl Sigmund's Games of Life, and Reflective programming (see Reflection '96 and P. Maes & D. Nardi: Meta-Level Architectures and Reflection). G.J. Chaitin especially his Understandable Papers on Incompleteness, especially The Unknowable (the book is available on this page, just roll the page bellow that big colored photos). The book begins with the limits of mathematics: Gödel's undecidable, Turing's uncompatiblity, Chaitin's randomness); but (or exactly that's why?) it ends with writing on the future and beuty of science.

I must read Autopoesis and The Tree of Knowledge carefully from Maturana and Varela to say if their topics are releted to here. See

- table of contents in English

- annotations

- on Autopoesis (in English)

- on The Tree of Knowledge (in Hungarian). To summarize this annotation by citing its main thought: The intelligence is not a mere map from the outer world: but it is a continuous world-creating, and there are as many worlds as many minds.

Self-replication

Quines: the idea of self-replication can be conveyed by the concept of a program, which is able to print its own list. But pure -calculus and combinatory logic does not know any notion of printing! We should like the capture the essence of self-replication, without resorting to the imperative world.

Representation, qoutation -- the DNA

Let us introduce the concept of representing combinatory logic terms. How could we do that? For example, by binary trees. The leaves should represent the base combinators, and the branches mean application.

And how to represent combintory logic terms -- in combinatory logic itself? The first thought could be, that it is not a problem. Each combinatory logic term could be represented by itself.

Sometimes this idea works. The huge power of higher order functions is exactly in being able to treat datas programs and vice versa. Sometimes we are enabled to do things, which could be done in other languages only by carefully designing a representation, a specific language.

But sometimes, representing CL terms by themselves is not enough. Let us imagine a tutoring program! Let the topic be combinatory logic, the language of implementation -- combinatory logic, too. How should the tutoring program ask the pupil questions like:

- Tell me if the following two expresions have the same normal form:

The problem is that our program is simply unable to distinguish between CL terms which have the same normal form (in fact, equivalence cannot be defined generally either). If we represent CL terms by themselves, we simply loose a lot of information, including loosing any possibility to make distinctions between equivalent terms.

We see that there is something that relates to make a distinction between target language and metalanguage (See Imre Ruzsa, or Haskell B. Curry)

In this example, the distinction is:

- We deal with combinatory logic expressions because our program has to teach them: it is related to it just like a vocabulary program is related to English.

- But we deal with programming logic expressions because our program is implemented in them. Just like VIM is related to C++.

We said CL terms are eventually trees. Let us represent them with trees then -- now let us think of trees not as of term trees, but as datatypes which we must construct by hand, in a similar way as we defined Maybes, direct sums, direct products, lists.

where let denote the representation of and that of

Let us make a distinction between term trees and datatype trees. A Haskell example:

- many Haskell expressions can be regarded as term trees

- but only special Haskell expressions can be seen as datatype trees: those who are constructed from

BranchandLeafin an appropriate way

Similarly,

- all CL expressions can be regarded as term trees.

- but CL expressions which can be revered as datatype trees must obey a huge amount of constraints: they may consist only of subexpressions , , , subexpressions in an approporiate way.

(In fact, all CL expressions can be regarded as datatype trees too: CL is a total thing, we can us each CL expression in a same way as a datatype tree: we can apply it leaf- and branch-continuation arguments. Something will always happen. At worst it will diverge -- but lazy trees can diverge too, amd they are inarguably datatype trees. But now let us ignore all these facts, and let us define the notion of quotations in the restictive way: let the definition require to be structured in a predefined way.)

We use datatype trees for representing other expressions. Let us call CL expressions which can represent (another CL expreesion) quotations. Quotations are exactly the datatype trees, but

- the world refers to their function,

- the world datatype tree refers to their implemetation, structure

This means a datatype tree

- is not only a tree regarded only as a term tree,

- but on a higher level: itself a recursive datatype implemented in CL, it is appropiately consisting of , and , subexpressions so that we can reason about it in CL itself

How do quotations relate to all CL expressions?

- In one direction, informally, we could say, quotations make a very proper subset of all CL expressions (attention: cardinality is the same!). Not every CL expressions are datatype trees.

- But the reverse is not true: all CL expressions can be quoted! Foreach CL expressionther is a (unique) CL expression who quotes it!

We can define a quote function on the set of all CL expressions. But of it is an conceptually outside function, not a CL combinator itself! (that is why I do not typest it boldface. Is it an example of what Curry called epitheory?).

After having solved the representation (quoting) problem, we can do many things. We can define meta-concepts, e.g.

- (the notion of same terms)

- by bool tree equality

- (equivalence made by reduction)

- by building a metacircular interpreter

We can write our tutor program too. But let us discuss more clean and theoretical questions.

Concept of self-replication generalized -- pure functional quines

How can be the concept of quine transferred to combinatory logic? In the bellow definition, let us think of

- 's as actions, programs

- and 's as quotations, representations

| A quine is a CL term | this means quines are pure CL concepts, no imperative compromises |

| for whose normal form | this means quines are |

| there exists an equivalent CL-term where | datatypes in CL arealmost never defined in their normal form (not even ordered pairs are!). They save us from loosing information, but they almost never do that literary. I faced this as problems in nice rewritings when I wanted to implement CL with computer algebra services |

| is a quotation, | which manifests itself in the fact that is a datatype tree (not only term tree) with boolean leafs, |

| quotes | and is exactly the representation of |

So a quine is a program which is run, then rewrited as a quotation and so we get the representation of the original program.

Of course the first three requirements can be contracted in two. Thus, a quine is a CL-term which is equivalent to its own representation (if we mean representation as treated here).

A metacircular interpeter

We have seen that we can represent CL expressions in CL itself, which enables us to do some meta things (see the into of this section, especially Reflective programming, e.g. Reflection '96). The first idea could be: to implement CL in itself!

Implementing lazy evaluation

The most important subtask to achieve this goal is to implement the algorithm of lazy evaluation. I confess I simply lack almost any knowledge on algorithms for implementing lazy evaluation. In my Haskell programs, when they must implement lazy evaluation, I use the following hand-made algorithm.

Functions of increasing number of arguments pass the term tree to each other during analyzing it deaper and deaper. The functions are eval, apply, curry and lazy, but I renamed curry, because there is also a Prelude function (and a whole concept behind it) with the same name. So I chose Schönfinkel's name for naming the third function in this scheme -- it can be justified by the fact that Curry himself attributed the idea of currying to Moses Schönfinkel (but the idea is anticipated by Frege too).

module Reduce where

import Term

import Tree

import BaseSym

eval :: Term -> Term

eval (Branch function argument) = apply function argument

eval atom = atom

apply :: Term -> Term -> Term

apply (Branch f a) b = schonfinkel f a b

apply atom argument = strictApply atom argument

schonfinkel :: Term -> Term -> Term -> Term

schonfinkel (Leaf K) f x = eval f

schonfinkel (Branch f a) b c = lazy f a b c

schonfinkel s a b = strictSchonfinkel s a b

lazy :: Term -> Term -> Term -> Term -> Term

lazy (Leaf S) c f x = schonfinkel c x (Branch f x)

lazy k_or_compound x y z = schonfinkel k_or_compound x y `apply` z

strictApply :: Term -> Term -> Term

strictApply f a = f `Branch` eval a

strictSchonfinkel :: Term -> Term -> Term -> Term

strictSchonfinkel f a b = strictApply f a `strictApply` b

module Term where

import BaseSym

import Tree

type Term = Tree BaseSym

type TermV = Tree (Either BaseSym Var)

module Tree where

data Tree a = Leaf a | Branch (Tree a) (Tree a)

module BaseSym where

data BaseSym = K | S

type Var = String

and it seems hard to me hard to implement in CL. Almost all of these functions are mutual recursive definitions, and it looks hard for me to formulate the fixpont. Of coure I could find another algorithm. The main problem is that reducing CL trees is not so simple: the rule requires lookahead in 2 levels. Maybe once I find another one with monads, arrows, or attribute grammars...

A lightweight CL implementation

I mean

- instead of writing a CL interpreter (or compiler) with a huge amount of command-line arguments and colon-escaped prompt commands,

- why not to write a lightweight library? (Lightweight in the sense as Parsec is lightweight in comparison to parser generators, or QuickCheck is lightweight).

This latter framework can contain the previous one e.g. by implementing

interpreter :: IO ()

compiler :: Term -> Haskell

where Haskell can be a string, an appropriately designed abstract datatype, a rose tree or a Template Haskell term representation of Haskell expressions, thus any way used in Haskell metaprogramming (in the picture below, does it correspond to ?).

So, the latter can contain the former, but what are the new advantages?

abstract :: Var -> TermV -> Maybe Term

abstractV :: Var -> TermV -> TermV

rewrite :: Term -> Reader Definitions (Tree (Either NonVar Definiendum))

where type Definitions = Map Definiendum Term.

So we can get compiler algebra and other useful services in a modular way.

Of course also an interpreter can yield useful services, but as the user interface grows, it develops to have a command language, which is in most cases imperative having less gluing possibilities than being able to use Haskell itself by this lightweight approach.

In fact, it the was the quines (mentioned above) that forced me to think of a lightweight CL library instead of an interpreter. Writing a CL quine (in the way I can do) requires a lot of : quoting CL terms in CL itself. But quoting CL cannot be done in CL (only : quoting-the-quotation-of-CL-further can be done in CL), so my CL quine plans needed a lot of work quoting CL terms by hand. A lightweight CL library could do this job by using the power of Haskell (quoting CL terms can be done in Haskell, or more generally said: in the ultimate implementating language of this CL-project).

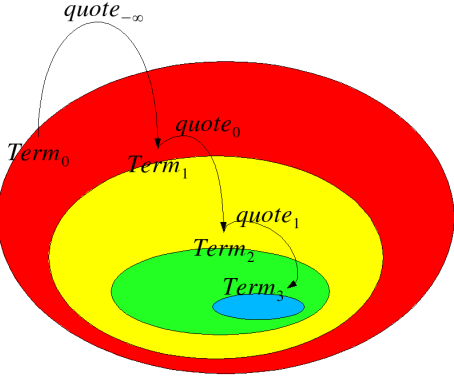

The quotation stratification of subsequent series of meta- and object languages:

- the ultimate implementing language of thus CL project (here Haskell)

- combinatory logic, its terms being represented by Haskell binary tree abstract datatype.

- combinatory logic, its terms being represented by combinatory logic binary tree abstract datatype. We have already seen that we can define lists in combinatory logic by catamorhisms, so we can define binary trees too. And we can represent the base combinators by booleans which can be defined too in CL. So we can quote each CL term in CL itself.

- ...

Quoting:

Invoking:

-- Yet we do not require type safety in quotation stratification of CL terms:

type Term1 = Term

type Term2 = Term

type Term3 = Term

-- ...

quote'0 :: Term1 -> Term2

quote'1_0 :: Term2 -> Term3

-- ...

-- At or beyond the depth of quote'1

-- these functions can be implemented in CL too

-- more generally: everyhing can be made at a higher level too,

-- but not vice versa. So everyhing has a grad

-- We index things by this grad

-- Ultimate implementing language (here:Haskell) is designed by grad 0

-- Unavailable grad is designed by minus infinity

-- (see the analogy of grad of polinomials)

quote'1 :: Term1

quote'2_1 :: Term1

quote'2 :: Term2

Illative combinatory logic

- Jonathan P. Seldin: Curry’s anticipation of the types used in programming languages

- Jonathan P. Seldin: The Logic of Curry and Church (it is also an introduction to illative combinatory logic)

- Henk Barendregt, Martin Bunder, Wil Dekkers: Systems of Illative Combinatory Logic complete for first-order propositional and predicate calculus.

I think combinator can be thought of as something analogous to Dependent type: it seems to me that the dependent type construct of Epigram corresponds to in Illative Combinatory Logic. I think e.g. the followings should correspond to each other:

My dream is making something in Illative Combinatory Logic. Maybe it could be theoretical base for a functional logic language?

References

- [Bun:NatICL] Martin W. Bunder: The naturalness of Illative Combinatory Logic as a Basis for Mathematics, see in Dedicated to H.B.Curry on the occasion of his 80th birthday.