Index

Haskell Communities and Activities Report

Twelfth edition – May 30, 2007

Andres Löh (ed.)

Lloyd Allison

Tiago Miguel Laureano Alves

Krasimir Angelov

Carlos Areces

Alistair Bayley

Jean-Philippe Bernardy

Clifford Beshers

Chris Brown

Bjorn Buckwalter

Andrew Butterfield

Manuel Chakravarty

Olaf Chitil

Duncan Coutts

Jacome Cunha

Atze Dijkstra

Frederik Eaton

Martin Erwig

Jeroen Fokker

Richard A. Frost

Clemens Fruhwirth

Andy Gill

Dimitry Golubovsky

Daniel Gorin

Martin Grabmüller

Murray Gross

Walter Gussmann

Kevin Hammond

Christopher Lane Hinson

Guillaume Hoffmann

Paul Hudak

Liyang Hu

Graham Hutton

S. Alexander Jacobson

Wolfgang Jeltsch

Antti-Juhani Kaijanaho

Jeremy O’Donoghue

Oleg Kiselyov

Dirk Kleeblatt

Lennart Kolmodin

Slawomir Kolodynski

Eric Kow

Huiqing Li

Andres Löh

Rita Loogen

Salvador Lucas

Ian Lynagh

Ketil Malde

Christian Maeder

Simon Marlow

Conor McBride

Arie Middelkoop

Neil Mitchell

William Garret Mitchener

Andy Adams-Moran

Dino Morelli

Yann Morvan

Diego Navarro

Rishiyur Nikhil

Stefan O’Rear

Sven Panne

Ross Paterson

Simon Peyton-Jones

Claus Reinke

Colin Runciman

Alberto Ruiz

David Sabel

Uwe Schmidt

Alexandra Silva

Ganesh Sittampalam

Anthony Sloane

Dominic Steinitz

Donald Bruce Stewart

Jennifer Streb

Glenn Strong

Martin Sulzmann

Doaitse Swierstra

Wouter Swierstra

Hans van Thiel

Henning Thielemann

Peter Thiemann

Simon Thompson

Phil Trinder

Miguel Vilaca

Joost Visser

Edsko de Vries

Malcolm Wallace

Mark Wassell

Stefan Wehr

Ashley Yakeley

Bulat Ziganshin

Preface

You are reading the twelfth edition of the Haskell Communities and Activities

Report – as always, containing entries from enthusiastic Haskellers all over

the world.

This edition has 138 entries, 33 of them are completely new (and therefore

highlighted with a blue background), and 54 have had updates since the previous

edition (and have a header with a blue background). All entries that have not

been updated for a year or longer have been removed to make sure that your are

reading information that is as up-to-date as possible.

I want to use the opportunity to thank all the contributors. This report has 90

authors, but the number of total contributors to all the projects reported on

is much, much greater. I find it wonderful that the Haskell communities continue

to be so diverse and open at the same time.

As always, I want to encourage you to watch out for projects that are missing

from this report, and to make their authors aware of the report so that they can

contribute to the November edition (deadline probably around the end of October).

Feedback is very welcome at <hcar at haskell.org>. Pleasant reading!

Andres Löh, University of Bonn, Germany

1 General

1.1 HaskellWiki and haskell.org

HaskellWiki is a MediaWiki installation running on haskell.org,

including the haskell.org “front page”. Anyone can create an account

and edit and create pages. Examples of content include:

- Documentation of the language and libraries

- Explanation of common idioms

- Suggestions and proposals for improvement of the language and

libraries

- Description of Haskell-related projects

- News and notices of upcoming events

We encourage people to create pages to describe and advertise their

own Haskell projects, as well as add to and improve the existing

content. All content is submitted and available under a “simple

permissive” license (except for a few legacy pages).

In addition to HaskellWiki, the haskell.org website hosts some

ordinary HTTP directories. The machine also hosts mailing lists.

There is plenty of space and processing power for just about anything

that people would want to do there: if you have an idea for which

HaskellWiki is insufficient, contact the maintainers, John Peterson

and Olaf Chitil, to get access to this machine.

Further reading

The #haskell IRC channel is a real-time text chat where anyone

can join to discuss Haskell. The channel has grown dramatically in

users over the last 6 months, and now #haskell averages over

300 concurrent users (with a high water mark of 340 users), and is one

of the biggest channels on freenode. The irc channel is home to hpaste

and lambdabot, two useful Haskell bots. Point your IRC client to

irc.freenode.net and join the #haskell conversation!

For non-English conversations about Haskell there is now:

- #haskell.de – German speakers

- #haskell.dut – Dutch speakers

- #haskell.es – Spanish speakers

- #haskell.fi – Finnish speakers

- #haskell.fr – French speakers

- #haskell.hr – Croatian speakers

- #haskell.it – Italian speakers

- #haskell.jp – Japenese speakers

- #haskell.no – Norwegian speakers

- #haskell_ru – Russian speakers

- #haskell.se – Swedish speakers

Related Haskell channels are now emerging, including:

- #haskell-overflow – Overflow conversations

- #haskell-blah – Haskell people talking about anything except Haskell itself

- #gentoo-haskell – Gentoo/Linux specific Haskell conversations (→7.4.2)

- #darcs – Darcs revision control channel (written in Haskell) (→6.10)

- #ghc – GHC developer discussion (→2.1)

- #happs – HAppS Haskell Application Server channel (→4.10.1)

- #xmonad – Xmonad a tiling window manager written in Haskell (→6.1)

Further reading

More details at the #haskell home page:

http://haskell.org/haskellwiki/IRC_channel

Planet Haskell is an aggregator of Haskell people’s blogs and other

Haskell-related news sites. As of mid-October content from 29 blogs

and other sites is being republished in a common format.

A common misunderstanding about Planet Haskell is that it republishes

only Haskell content. That is not its mission. A Planet shows

what is happening in the community, what people are thinking about or

doing. Thus Planets tend to contain a fair bit of “off-topic”

material. Think of it as a feature, not a bug.

A blog is eligible to Planet if it is being written by somebody who is

active in the Haskell community, or by a Haskell celebrity; also

eligible are blogs that discuss Haskell-related matters frequently,

and blogs that are dedicated to a Haskell topic (such as a software

project written in Haskell). Note that at least one of these

conditions must apply, and virtually no blog satisfies them all.

However, blogs will not be added to Planet without the blog author’s

consent.

To get a blog added, email Antti-Juhani Kaijanaho

<antti-juhani at kaijanaho.fi> and provide evidence that the blog

author consents to this (easiest is to get the author send the email,

but any credible method suffices).

Planet is hosted by Galois Connections, Inc. (→7.1.3) as a

service to the community. The Planet maintainer is not affiliated with

them.

Further reading

http://planet.haskell.org/

The Haskell Weekly News (HWN) is a weekly newsletter covering

developments in Haskell. Content includes announcements of new projects,

jobs, discussions from the various Haskell communities, notable project

commit messages, Haskell in the blogspace, and more.

It is published in html form on The Haskell Sequence,

via mail on the Haskell mailing list, on Planet

Haskell (→1.3), and via RSS. Headlines are published on

haskell.org (→1.1).

Further reading

There are plenty of academic papers about Haskell and plenty of

informative pages on the Haskell Wiki. Unfortunately, there’s not

much between the two extremes. That’s where The Monad.Reader tries

to fit in: more formal than a Wiki page, but more casual than a

journal article.

There are plenty of interesting ideas that maybe don’t warrant an

academic publication – but that doesn’t mean these ideas aren’t

worth writing about! Communicating ideas to a wide audience is much

more important than concealing them in some esoteric journal. Even

if its all been done before in the Journal of Impossibly

Complicated Theoretical Stuff, explaining a neat idea

about ‘warm fuzzy things’ to the rest of us can still be plain fun.

The Monad.Reader is also a great place to write about a tool or

application that deserves more attention. Most programmers don’t

enjoy writing manuals; writing a tutorial for The Monad.Reader,

however, is an excellent way to put your code in the limelight and

reach hundreds of potential users.

I do try to publish a new issue quarterly, but I’m completely

reliant on your submissions. So please consider contributing to the

functional programming community by writing something for The

Monad.Reader!

Further reading

All the recent issues and the information you need

to start writing an article are available from:

http://www.haskell.org/haskellwiki/The_Monad.Reader.

1.6 Books and tutorials

1.6.1 New textbook – Programming in Haskell

Haskell is one of the leading languages for teaching functional

programming, enabling students to write simpler and cleaner code,

and to learn how to structure and reason about programs. This

introduction is ideal for beginners: it requires no previous

programming experience and all concepts are explained from first

principles via carefully chosen examples. Each chapter includes

exercises that range from the straightforward to extended projects,

plus suggestions for further reading on more advanced topics. The

presentation is clear and simple, and benefits from having been

refined and class-tested over several years.

Features:

- Powerpoint slides for each chapter freely available for instructors

and students from the book’s website;

- Solutions to exercises and examination questions (with solutions)

available to instructors;

- All the code in the book is fully compliant with the latest release

of Haskell, and can be downloaded from the web;

- Can be used with courses, or as a stand-along text for self-learning.

Publication details:

- Published by Cambridge University Press, January 2007.

Paperback: ISBN 0521692695; Hardback: ISBN: 0521871727.

Further information:

1.6.2 Haskell Wikibook (was: Haskell Tutorial Wikibook)

The Haskell wikibook is an attempt to build a community textbook that is

at once free (in cost and remixability), comprehensive and cohesive.

Since the last report, we have added some original content, giving a

friendly introduction to advanced topics: Category Theory, Denotational

Semantics, The Curry-Howard isomorphism and Zippers. Thanks to David

House and Apfelmus for their hard work and to the Haskell community for

your helpful comments! (Of course, one of our greatest dreams is a

module by one of the very founders of the Haskell programming language.)

The wikibook is starting to be recognised as a useful resource for

beginners in Haskell, and has been receiving some positive comments from

the blogosphere. The wikibook has even selected for inclusion into the

list of “featured books” on the English wikibooks project. It will

now be prominently displayed on the wikibooks front page in rotation

with other featured books.

Our community has been starting to grow, in the meantime. For example,

the Polish and Russian Haskell wikibooks have been rather active in the

last six months. Want to see a Haskell wikibook in your language? Be

bold and get started! While you’re at it, you might even consider

participating in our new mailing list, <wikibook at haskell.org>.

Further reading

http://en.wikibooks.org/wiki/Haskell

1.6.3 Haskell Tutorials in Portuguese

1.6.3.1 Two weights, two measures

“Two weights, two measures” is a Haskell tutorial focusing on the

construction of a very simple DSEL for a fictional prison system

exploiting the structure of the Either type (with a few proposed

extensions). Its target audience is beginning programmers. The

tutorial aims to explore the first steps of how

closures/combinators/higher-order functions can be used to define

domain specific languages for simple algebraic structures. It’s

currently available only in portuguese, but it should be translated at

some point.

The full text can be found at the URL below.

An introduction to Haskell with autophagic snakes

“An introduction to Haskell with autophagic snakes” is a Haskell

tutorial focusing on the exploration of co-recursive sequences using

infinite lists in Haskell. Its target audience is beginning

programmers. The tutorial aims to exempllify lazy evaluation and

simple combinators to abstract repetitive structures in the

corecursive definitions of sequences. It’s currently available only in

portuguese, but it should be translated at some point.

The full text can be found at the URL below.

Further reading

1.7 A Survey on the Use of Haskell in Natural-Language Processing

The survey "Realization of Natural-Language Interfaces Using Lazy

Functional Programming" is scheduled to be published in ACM Computing

Surveys in December 2006. If I have missed any relevant publications,

please contact me at rfrost@cogeco.ca. It may be possible to add references

before the survey goes to print. If not, I shall put new references on a

web page which I am creating to keep the survey up-to-date with future

work.

Further reading

A draft of the survey is available at:

http://cs.uwindsor.ca/~richard/PUBLICATIONS/NLI_LFP_SURVEY_DRAFT.pdf

2 Implementations

2.1 The Glasgow Haskell Compiler

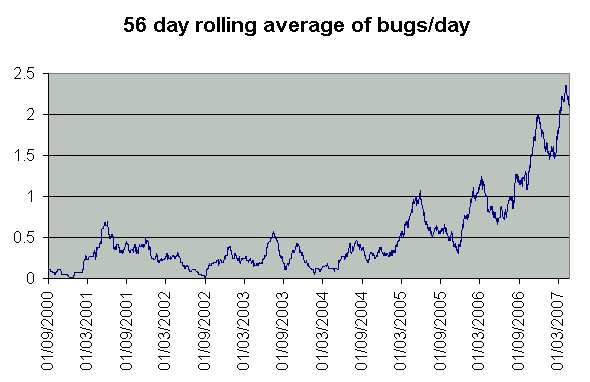

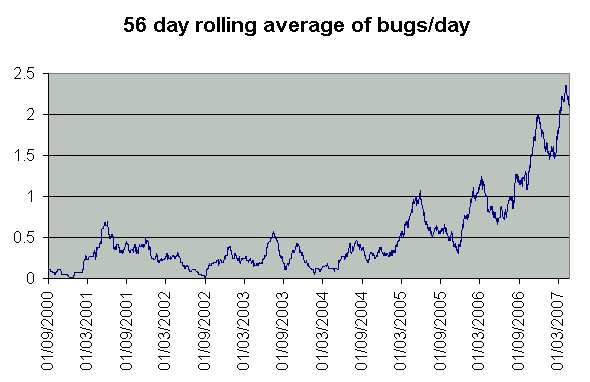

GHC continues to thrive. One indicator of how widely GHC is used is the

number of bug reports we get. Here is a graph showing how the number of

bug reports filed has varied with time:

You could interpret these figures as saying that GHC is getting steadily

more unreliable! But we don’t think so …we believe that it’s

mostly a result of more people using GHC, for more applications, on more

platforms.

As well as more bug reports, we are getting more help from the community, too.

Some people regularly commit patches, and we get a steady trickle of patches

emailed in from folk who (mostly) do not have commit rights, but who have built GHC,

debugged a problem, sent us the patch. Our thanks go out to

Aaron Tomb, Alec Berryman, Alexey Rodriguez, Andrew Pimlott,

Andy Gill, Bas van Dijk, Bernie Pope, Bjorn Bringert,

Brian Alliet, Brian Smith, Chris Rodrigues, Claus Reinke,

David Himmelstrup, David Waern, Judah Jacobson, Isaac Jones,

Lennart Augustsson, Lennart Kolmodin, Manuel M T Chakravarty,

Pepe Iborra, Ravi Nanavati, Samuel Bronson, Sigbjorn Finne,

Spencer Janssen, Sven Panne, Tim Chevalier, Tim Harris,

Tyson Whitehead, Wolfgang Thaller, and anyone else who has contributed

but we have accidentally omitted.

As a result of this heavy usage, it has taken us nearly six months to

stabilise GHC 6.6.1, fixing over 100 reported bugs or infelicities in

the already-fairly-solid GHC 6.6.

The HEAD (which will become GHC 6.8) embodies nine months

of development work since we forked the tree for GHC 6.6. We are now

aiming to get a stable set of features implemented in the HEAD, with

a view to forking off the GHC 6.8 branch in the early summer. As

our last HCAR report indicated, there will be lots of new stuff in

GHC 6.8. The rest of this entry describes the features that

are likely to end up in 6.8.

You can find binary snapshots at

the download page http://www.haskell.org/ghc/dist/current/dist/

or build from sources available via the darcs

repository (http://darcs.haskell.org/ghc/).

Simon Peyton Jones, Simon Marlow, Ian Lynagh

Type system and front end

-

We have completely replaced GHC’s intermediate language with

System FC(X), an extension of System F with explicit equality

witnesses. This enables GHC to support GADTs and associated types,

with two new simple but powerful mechanisms. The paper is

“System F with Type Equality Coercions”

(http://research.microsoft.com/~simonpj/papers/ext-f/)

Much of the conversion work was done by Kevin Donnelly, while he

was on an internship at Microsoft.

-

Manuel Chakravarty has implemented “data-type families” (aka indexed data types),

a modest generalisation of the “associated data types”

of our POPL’05 paper “Associated types with class”

(http://research.microsoft.com/~simonpj/papers/assoc-types/)

This part is done. Now we are working on “type-synonym families”

(aka type functions or associated type synonyms (ICFP’05)

(http://research.microsoft.com/~simonpj/papers/assoc-types),

which are considerably trickier that

data type families, at least so far as type inference is concerned.

Tom Schrijvers is in Cambridge for three months to help us use ides

from Constraint Handling Rules to solve the inference problem.

Type synonym families will almost completely fill the spot occupied

by the always-troublesome functional dependencies, so we are quite

excited about this.

Details are at http://haskell.org/haskellwiki/GHC/Indexed_types.

-

Simon PJ finally implemented “implication constraints”, which are

the key to fixing the interaction between

GADTs and type classes. GHC’s users have been very polite about

this collection of bugs, but they are now finally fixed.

Implication constraints are described by Martin Sulzmann in

“A framework for Extended Algebraic Data Types”

(http://www.comp.nus.edu.sg/~sulzmann/publications/tr-eadt.ps.gz).

-

Björn Bringert (a GHC Hackathon graduate) implemented

“standalone deriving”, which allows you to write a ‘deriving’

declaration anywhere, rather than only where the data type is

declared. Details of the syntax have not yet quite settled. See

also http://haskell.org/haskellwiki/GHC/StandAloneDeriving.

-

Lennart Augustsson implemented overloaded string literals. So now

just as a numeric literal has type forall a. Num a => a,

so a string literal has type forall a. IsString a => a,

The documentation is here:

http://www.haskell.org/ghc/dist/current/docs/users_guide/other-type-extensions.html#overloaded-strings.

A less successful feature of the last year has been the

story on impredicative instantiation

(see the paper “Boxy types: type inference for higher-rank types and impredicativity”

(http://research.microsoft.com/~simonpj/papers/boxy).

The feature is implemented, but the implementation is significantly

more complicated than we expected; and it delivers fewer benefits than

we hoped. For example, the system described in the paper does not

type-check (runST $ foo) and everyone complains. So Simon PJ added

an even more ad-hoc extension that does left-to-right instantiation.

The power-to-weight ratio is not good. We’re still hoping that

Dimitrios Vytiniotis and Stephanie Weirich will come out with a simpler

system, even if it’s a bit less powerful. So don’t get too used to

impredicative instantiation as it now stands; it might change!

Optimisations

-

Simon PJ rewrote the Simplifier (again). It isn’t clear whether it was that alone, or

whether something else happened too, but performance has improved quite significantly;

on the order of 12%.

-

Roman Leshchinskiy, Don Stewart, and Duncan Coutts did some beautiful

work on “fusion”; see their paper “Rewriting Haskell strings”

(http://www.cse.unsw.edu.au/~dons/papers/CSL06.html).

This fusion work is already being heavily used in the parallel array library

(see below), and they are also working on replacing foldr/build fusion with

stream fusion in the main base library (→4.6.2) (→4.6.3).

Their work highlighted the importance of the SpecConstr transformation, which Simon PJ

implemented several years ago. Of course, they suggested many enhancements, many of

which Simon PJ duly implemented; see the new paper

“Constructor specialisation for Haskell programs”

(http://research.microsoft.com/~simonpj/papers/spec-constr/).

-

Alexey Rodriguez visited us for three months from Utrecht, and implemented

a new back-end optimisation called “dynamic pointer tagging”. We have wanted

to do this for ages, but it needed a skilled and insightful hacker to make it all

happen, and Alexey is just that. This optimisation alone buys us another 15%

performance for compiled programs: see the paper

“Dynamic pointer tagging”

(http://research.microsoft.com/~simonpj/papers/ptr-tag/index.htm).

Concurrency

-

Gabriele Keller, Manuel Chakravarty, and Roman Leshchinskiy, at the

University of New South Wales, are collaborating with us on support

for “nested data-parallel computation” in GHC.

We presented a paper “Data parallel Haskell: a status report”

(http://research.microsoft.com/~simonpj/papers/ndp)

at the Declarative Aspects of Multicore Programing

workshop in January 2007, and made a first release of the

library in March.

It’s a pretty ambitious project, and we have quite a way to go.

You can peek at the current status on the project home page:

http://haskell.org/haskellwiki/GHC/Data_Parallel_Haskell.

-

Tim Harris added support for ”invariants” to GHC’s Software

Transactional Memory (STM) implementation. Paper is

“Transactional memory with data invariants”

(http://research.microsoft.com/~simonpj/papers/stm/).

-

At the moment GHC’s “garbage collector” is single-threaded,

even when GHC is running on a multiprocessor. Roshan James spent

the summer at Microsoft on an internship, implementing a multi-threaded

GC (http://hackage.haskell.org/trac/ghc/wiki/MotivationForParallelization).

It works! But alas, doing GC with two processors runs no faster than

with one! (We do plan to investigate this further and find the source of

the bottleneck.)

Peng Li, from the University of Pennsylvania, spent an exciting

three months at Cambridge, working on a whole new architecture for

concurrency in GHC. (If you don’t know Peng you should read his

wonderful paper

“Combining Events And Threads For Scalable Network Services”

(http://www.seas.upenn.edu/~lipeng/homepage/papers/lz07pldi.pdf)

on implementing a network protocol stack in

Haskell.) At the moment GHC’s has threads, scheduling, forkIO,

MVars, transactional memory, and more besides, all “baked

into” the run-time system and implemented in C. If you want to

change this implementation you have to either be Simon Marlow, or else

very brave indeed. With Peng (and help from Andrew Tolmach, Olin

Shivers, Norman Ramsey) we designed a new, much lower-level set of

primitives, that should allow us to implement all of the above

“in Haskell”. If you want a different scheduler, just code it up

in Haskell, and plug it in.

Peng has a prototype running, but it has to jump the “Marlow barrier”

of being virtually as fast as the existing C runtime; so far we have

not committed to including this in GHC, and it certainly won’t be in

GHC 6.8. No paper yet, but look out for a Haskell Workshop 2007 submission.

Programming environment

There have been some big developments in the programming

environment:

-

Andy Gill implemented the Haskell Program Coverage

(http://haskell.org/haskellwiki/GHC/HPC)

option (-fhpc) for GHC, which is solid enough to be used to

test coverage in GHC itself. (It turns out that the GHC testsuite

gives remarkably good coverage over GHC already.)

-

Pepe Iborra, Bernie Pope, and Simon Marlow have leveraged the same

“tick” points used in the Haskell Program Coverage work to implement

a breakpoint debugger in GHCi http://hackage.haskell.org/trac/ghc/wiki/NewGhciDebugger.

Unlike HAT, which transforms the whole

program into a new program that generates its own (massive) trace,

this is a cheap-and-cheerful debugger. It simply lets you set

breakpoints and look around to see what is in the heap, more in the

manner of a conventional debugger. No need to recompile your program: it

“just works”.

-

Aaron Tomb and Tim Chevalier are working on resurrecting External

Core, whose implementation was not only bit-rotted, but also poorly

designed (by Simon PJ). By GHC 6.8 we hope to be able to spit out External

Core for any program, perhaps transform it in some external program,

and read it in again, surviving the round trip unscathed.

-

It is now possible to compile to object code instead of bytecode inside GHCi,

simply by setting a flag

(-fobject-code).

-

The GHC API has seen some cleanup, and it should now be both more complete

and slightly easier to use. There is still plenty of work to do here,

though.

-

David Waern has been working on integrating Haddock and GHC during his

Google Summer of Code project last year. The parts of this project that

involved modifying GHC are done and integrated into the GHC tree. The new

version of Haddock based on GHC is usable but still experimental; the darcs

repository is http://darcs.haskell.org/SoC/haddock.ghc.

Libraries

-

The set of “corelibs” has been further streamlined, with parsec,

regex-base, regex-compat, regex-posix and stm

moved to extralibs in the HEAD. This disentangles

releases of these packages from the GHC release process, and also means that development

builds of GHC are quicker as they don’t need to build those libraries.

-

We plan to extract parts of the base package into separate smaller packages;

see http://www.haskell.org/pipermail/libraries/2007-April/007342.html

on the libraries mailing list.

The September 2006 release of Hugs fixes a few bugs found in the

previous release, and updates the libraries to approximately match

those of GHC 6.6, which was about to release at the time. The

Windows build is now largely automated, thanks to Neil Mitchell,

so it is easier to produce more frequent releases.

As with the previous release, the source distribution is available

in two forms: a huge omnibus bundle containing the Hugs programs

and lots of useful libraries, or a minimal bundle, with most of the

libraries hived off as separate Cabal packages. We hope that more

library packages will be released independently, so that Hugs will

become less reliant on development snapshots.

Obsolete non-hierarchical libraries will be removed in the next

major release.

As ever, volunteers are welcome.

nhc98 is a small, easy to install, compiler for Haskell’98. Despite

rumours to the contrary, nhc98 is still very much alive and working,

although it does not see much new development these days.

The current public release is version 1.18, with a new release expected

soon for compatibility with ghc-6.6 and the re-arranged hierarchical

libraries. We recently moved over to a darcs repo for maintenance.

The Yhc (→2.4) fork of nhc98 is also making good progress.

Further reading

The York Haskell Compiler (yhc) is a fork of the nhc98 (→2.3)

compiler, with goals such as increased portability, platform

independent bytecode, integrated Hat support and generally being a

cleaner code base to work with. Yhc now compiles and runs almost all

Haskell 98 programs, has basic FFI support – the main thing missing is

haskell.org base libraries, which is being worked on.

Since that last HCAR we have focused on integrating the standard

haskell.org libraries (we have gained Data.Map and others) – but still have

some way to go. We have also enhanced our Yhc.Core library, gaining many new

users, and have produced an article for The Monad.Reader (→1.5)

on the applications of Yhc.Core.

Further reading

3 Language

3.1 Variations of Haskell

When Haskell consists of Haskell semantics plus Haskell syntax, then

Liskell consists of Haskell semantics plus Lisp syntax. Liskell is

Haskell on the inside but looks like Lisp on the outside, as in its

source code it uses the typical Lisp syntax forms, namely symbol

expressions, that are distinguished by their fully parenthesized

prefix notation form. Liskell captures the most Haskell syntax forms

in this prefix notation form, for instance:

if x then y else z becomes (if x y z), while

a + b becomes (+ a b).

Except for aesthetics, there is another argument for Lisp syntax:

meta-programming becomes easy. Liskell features a different

meta-programming facility than the one found in Haskell with Template

Haskell. Before turning the stream of lexed tokens into an abstract

Haskell syntax tree, Liskell adds an intermediate processing data

structure: the parse tree. The parse tree is essentially is a string

tree capturing the nesting of lists with their enclosed symbols stored

as the string leaves. The programmer can implement arbitrary code

expansion and transformation strategies before the parse tree is seen

by the compilation stage.

After the meta-programming stage, Liskell turns the parse tree into a

Haskell syntax tree before it sent to the compilation

stage. Thereafter the compiler treats it as regular Haskell code and

produces a Haskell calling convention compatible output. You can use

Haskell libraries from Liskell code and vice versa.

Liskell is implemented as an extension to GHC and its darcs branch is

freely available from the project’s website. The Liskell Prelude

features a set of these parse tree transformations that enables

traditional Lisp-styled meta-programming as with defmacro and

backquoting. The project’s website demonstrates meta-programming

application such as proof-of-concept versions of embedding Prolog

inference, a minimalistic Scheme compiler and type-inference in

meta-programming.

The future development roadmap includes stabilization of its design,

improving the user experience for daily programming – especially

error reporting – and improving interaction with Emacs.

Further reading

http://liskell.org

3.1.2 Haskell on handheld devices

The project at Macquarie University (→7.3.5) to run Haskell on handheld devices

based on Palm OS has a running implementation for small tests but, like most ports of

languages to Palm OS, we are dealing with memory allocation issues. Also, other higher

priority projects have now intervened so this project is going into the background for a

while.

The Camila project explores how concepts from the VDM++ specification language

and the functional programming language Haskell can be combined. On one

hand, it includes experiments of expressing VDM’s data types (e.g. maps, sets,

sequences), data type invariants, pre- and post-conditions, and such within

the Haskell language. On the other hand, it includes the translation of VDM

specifications into Haskell programs. Moreover, the use of the

OOHaskell library (→4.6.6) allows the definition of classes and objects and

enables important features such as inheritance. In the near

future, support for parallelism and automatic translation of VDM++ specifications

into Haskell will be added to the libraries.

Camila goes beyond VDM++ and has support for modelling software

components. The work done until now in this field is concerned with

rendering and prototyping (coalgebraic models of) software components

in Camila. To encourage the use of this technology we have developed a

tool to generate components from Camila specifications. The advantage

of component based development is that it makes possible to construct

complex software from simple pre-existing building blocks. So we have

also animated an algebra of components to compose them in several

ways. Finally a way to animate components was also implemented.

Two implementation strategies were devised: one in terms of a direct

encoding in “plain” Haskell, another resorting to type-level

programming techniques, the latter offered interesting

particularities.

Further reading

The web site of Camila

(http://wiki.di.uminho.pt/wiki/bin/view/PURe/Camila) provides

documentation. Both library and tool are distributed as part of the UMinho

Haskell Libraries and Tools.

3.2 Non-sequential Programming

3.2.1 GpH – Glasgow Parallel Haskell

Status

A complete, GHC-based implementation of the parallel Haskell extension

GpH and of

evaluation

strategies is available. Extensions of the runtime-system and language to

improve performance and support new platforms are under development.

System Evaluation and Enhancement

- A major revision of the parallel runtime environment for GHC 6.5

is currently under development. Support for the parallel language

Eden (→3.2.2) exists and is currently being tested. Support for the

parallel language GpH is currently being added to this version

of the runtime environment.

- We have developed an adaptive runtime environment (GRID-GUM) for

GpH on computational grids. GRID-GUM incorporates new load

management mechanisms that cheaply and effectively combine static

and dynamic information to adapt to the heterogeneous and

high-latency environment of a multi-cluster computational grid. We

have made comparative measures of GRID-GUM’s performance on

high/low latency grids and heterogeneous/homogeneous grids using

clusters located in Edinburgh, Munich and Galashiels. Results are

published in:

Al Zain A. Implementing High-Level Parallelism on Computational

Grids, PhD Thesis, Heriot-Watt University, 2006.

Al Zain A. Trinder P.W. Loidl H.W. Michaelson G.J. Managing

Heterogeneity in a Grid Parallel Haskell, Journal of Scalable

Computing: Practice and Experience 7(3), (September 2006).

- SMP-GHC, an implementation of GpH for multi-core machines has been

developed by Tim Harris, Simon Marlow and Simon Peyton

Jones.

- At St Andrews GpH is being used as a vehicle for investigating

scheduling on the GRID.

- We are teaching parallelism to undergraduates using GpH at

Heriot-Watt and

Phillips

Universitat Marburg.

GpH Applications

- GpH is being used to parallelise the GAP mathematical library in an EPSRC

project (GR/R91298).

- As part of the SCIEnce EU FP6 I3 project (026133) (→7.3.9) that started in

April 2006 we will use GpH and Java to provide access to Grid

services from Computer Algebra(CA) systems, including GAP and

Maple. We will both produce Grid-parallel implementations of

common CA library functions, and also wrap CA systems as Grid

services.

Implementations

The GUM implementation of GpH is available in three development branches.

- The focus of the development has switched to the version based on

GHC 6.5, and we plan to make an early prototype available from the

GpH web site later

this year.

- The stable branch (GUM-4.06, based on GHC-4.06) is available for

RedHat-based Linux machines.

The stable branch is available from the GHC CVS repository

via tag gum-4-06.

’item A current unstable branch (GUM-5.02, based on GHC-5.02) is

available on request.

Our main hardware platform are Intel-based Beowulf clusters. Work on ports to

other architectures is also moving on (and available on request):

- A port to a Mosix cluster has been built in the

Metis project at

Brooklyn College, with a first version available on request from Murray

Gross.

Further reading

Contact

<gph at macs.hw.ac.uk>, <mgross at dorsai.org>

Description

Eden has been jointly developed by two groups at Philipps

Universität Marburg, Germany and Universidad Complutense de

Madrid, Spain. The project has been ongoing since 1996. Currently,

the team consists of the following people:

-

in Madrid:

Ricardo Peña, Yolanda Ortega-Mallén,

Mercedes Hidalgo, Fernando Rubio, Clara Segura, Alberto Verdejo

-

in Marburg:

Rita Loogen, Jost Berthold, Steffen Priebe, Mischa Dieterle

Eden extends Haskell with a small set of syntactic constructs for

explicit process specification and creation. While providing

enough control to implement parallel algorithms efficiently, it

frees the programmer from the tedious task of managing low-level

details by introducing automatic communication (via head-strict

lazy lists), synchronisation, and process handling.

Eden’s main constructs are process abstractions and process

instantiations. The function process :: (a -> b) -> Process a

b embeds a function of type (a -> b) into a process

abstraction of type Process a b which, when instantiated,

will be executed in parallel. Process instantiation is

expressed by the predefined infix operator ( # ) :: Process

a b -> a -> b. Higher-level coordination is achieved by defining

skeletons, ranging from a simple parallel map to

sophisticated replicated-worker schemes. They have been used to

parallelise a set of non-trivial benchmark programs.

Survey and standard reference

Rita Loogen, Yolanda Ortega-Mallén and Ricardo Peña:

Parallel Functional Programming in Eden, Journal of

Functional Programming 15(3), 2005, pages 431–475.

Implementation

A major revision of the parallel Eden runtime environment for GHC

6.7 is available on request. Support for Glasgow parallel Haskell

(GpH) is currently being added to this version of the runtime

environment. It is planned for the future to maintain a common

parallel runtime environment for Eden, GpH and other parallel

Haskells.

Recent and Forthcoming Publications

- Steffen Priebe: Structured Generic Programming in

Eden, Department of Mathematics and Computer Science

Philipps-Universitaet Marburg, February 2007.

- Jost Berthold and Rita Loogen: Visualising Parallel

Functional Program Runs - Case Studies with the Eden Trace

Viewer, Parallel Computing (ParCo) 2007, September 2007.

- Jost Berthold, Mischa Dieterle, Rita Loogen, Steffen Priebe:

Hierarchical Master-Worker Skeletons, Symposium on Trends

in Functional Programming (TFP), New York, April 2007.

- Jost Berthold, Abyd Al-Zain, and Hans-Wolfgang Loidl:

Adaptive High-Level Scheduling in a Generic Parallel Runtime

Environment, Symposium on Trends in Functional Programming (TFP),

New York, April 2007.

- Jost Berthold, Rita Loogen: Parallel Coordination Made

Explicit in a Functional Setting. In Zolton Horath and

Viktoria Zsok, editors, 18th Intl. Symposium on the

Implementation of Functional Languages (IFL 2006), LNCS 4449, pp

73–90, Springer 2007. Awarded best paper of IFL 2006 (Peter

Landin-Prize 2006).

- Mercedes Hidalgo-Herrero, Yolanda Ortega-Mallen,

Fernando Rubio: Comparing Alternative Evaluation Strategies

for Stream-based Parallel Functional Languages. In Zolton

Horath and Viktoria Zsok, editors, 18th Intl.

Symposium on the Implementation of Functional Languages (IFL

2006), LNCS 4449, Springer 2007.

- Mercedes Hidalgo-Herrero, Alberto Verdejo, Yolanda

Ortega-Mallen: Using Maude and its strategies for

defining a framework for analyzing Eden semantics, WRS 06 (6th

International Workshop on Reduction Strategies in Rewriting and

Programming), Aachen 2006, Electronic Notes in Theoretical

Computer Science, to appear.

Further reading

http://www.mathematik.uni-marburg.de/~eden

3.3 Type System/Program Analysis

Epigram is a prototype dependently typed functional programming language,

equipped with an interactive editing and typechecking environment.

High-level Epigram source code elaborates into a dependent type theory

based on Zhaohui Luo’s UTT. The definition of Epigram, together with its

elaboration rules, may be found in ‘The view from the left’ by Conor

McBride and James McKinna (JFP 14 (1)).

Motivation

Simply typed languages have the property that any subexpression of a well

typed program may be replaced by another of the same type. Such type systems

may guarantee that your program won’t crash your computer, but the simple fact

that True and False are always interchangeable inhibits the expression of

stronger guarantees. Epigram is an experiment in freedom from this compulsory

ignorance.

Specifically, Epigram is designed to support programming with inductive

datatype families indexed by data. Examples include matrices indexed by their

dimensions, expressions indexed by their types, search trees indexed by their

bounds. In many ways, these datatype families are the progenitors of

Haskell’s GADTs, but indexing by data provides both a conceptual

simplification – the dimensions of a matrix are numbers – and a new

way to allow data to stand as evidence for the properties of other

data. It is no good representing sorted lists if comparison does not produce

evidence of ordering. It is no good writing a type-safe interpreter if one’s

typechecking algorithm cannot produce well-typed terms.

Programming with evidence lies at the heart of Epigram’s design. Epigram

generalises constructor pattern matching by allowing types resembling

induction principles to express as how the inspection of data may affect both

the flow of control at run time and the text and type of the program in the

editor. Epigram extracts patterns from induction principles and induction

principles from inductive datatype families.

Current Status

Whilst at Durham, Conor McBride developed the Epigram prototype in

Haskell, interfacing with the xemacs editor. Nowadays, a team of

willing workers at the University of Nottingham are developing a new

version of Epigram, incorporating both significant improvements over

the previous version and experimental features subject to active

research.

The Epigram system is also being used successfully by Thorsten

Altenkirch, and more recently Conor McBride, in an undergraduate

course on Computer Aided Formal Reasoning for two years

http://www.e-pig.org/darcs/g5bcfr/. Several final year students

have successfully completed projects that involved both new

applications of and useful contributions to Epigram.

Peter Morris is working on how to build the datatype system of Epigram

from a universe of containers. This technology would enable datatype

generic programming from the ground up. Central to these ideas is the

concept of indexed container that has been developed

recently. There are ongoing efforts to elaborate the ideas in Edwin

Brady’s PhD thesis about efficiently compiling dependently typed

programming languages.

We have started writing a stand-alone editor for Epigram using

Gtk2Hs (→4.8.3). Thanks to a most helpful visit from Duncan

Coutts and Axel Simon, two leading Gtk2Hs developers, we now have the

beginnings of a structure editor for Epigram 2. For the moment, we are

also looking into a cheap terminal front-end.

There has also been steady progress on Epigram 2 itself. Most of the

recent progress has been on the type theoretic basis underpinning

Epigram. A new representation of the core syntax has been designed to

facilitate bidirectional type checking. The semantics of individual

terms are glued to their syntactical representation. We have started

implementing observational equality, combining the benefits of

both intensional and extensional notions of equality. The lion’s share

of the core theory has already been implemented, but there is still

plenty of work to do.

Whilst Epigram seeks to open new possibilities for the future of

strongly typed functional programming, its implementation benefits

considerably from the present state of the art. Our implementation

makes considerable use of applicative functors, higher-kind

polymorphism and type classes. Moreover, its denotational approach

translates Epigram’s lambda-calculus directly into Haskell’s. On a

more practical note, we have recently shifted to the darcs version

control system and cabal framework.

Epigram source code and related research papers can be found on the web at

http://www.e-pig.org and its community of experimental users communicate

via the mailing list <epigram at durham.ac.uk>. The current

implementation is naive in design and slow in practice, but it is

adequate to exhibit small examples of Epigram’s possibilities. The new

implementation will be much less rudimentary. At the moment, there is

direct low-level interface to the state of the proof state called

Ecce. Its documentation, together with other Epigram 2 design

documents, can be found at http://www.e-pig.org/epilogue/.

Chameleon is a Haskell style language which integrates sophisticated

reasoning capabilities into a programming language via its CHR

programmable type system. Thus, we can program novel type system

applications in terms of CHRs which previously required

special-purpose systems.

Chameleon including examples and documentation

is available via

http://taichi.ddns.comp.nus.edu.sg/taichiwiki/ChameleonHomePage

Latest developments

The latest developments mostly concern the transfer of ideas/methods

found in Chameleon to other systems. For example,

implication constraints as pioneered in Chameleon have found their

way into GHC 6.6. We also plan to integrate some of Chameleon’s type

inference capabilities into Tim Sheard’s Omega.

XHaskell is an extension of Haskell with XDuce style regular

expression types and regular expression pattern matching.

We have much improved the implementation which can found

under the XHaskell home-page:

http://taichi.ddns.comp.nus.edu.sg/taichiwiki/XhaskellHomePage

Latest developments

We are currently working on the integration of type classes.

A new version is planned for June 2007.

3.3.4 ADOM: Agent Domain of Monads

ADOM is an agent-oriented extension of Haskell with a unique approach

to the implementation of cognitive Belief-Desire-Intention (BDI)

agents. In ADOM, agent reasoning operations are viewed as monadic

computations. Agent reasoning operations can be stratified: Low-level

reasoning operations involve the agents beliefs and actions whereas

high-level reasoning operations involve the agents goals and

plans. Monads allow us to compose various levels of reasoning

together, while maintaining clear and distinct separation between the

different levels. ADOM can be used directly as an agent-oriented

domain specific language, or used to build more higher level BDI agent

abstractions on top of it (eg. AgentSpeak, 3APL). ADOM also introduces

the use of Constraint Handling Rules (CHR), embedded with Haskell, to

directly model the agent’s belief of its dynamically changing domain

(world) and it’s actions which invoke change to it’s domain. The key

advantage of our approach are:

- CHRs provides a clear and concise representation and

implementation of dynamically changing agent beliefs and actions.

- Stratifying the various levels of agent cognitive reasoning by

monads, maintains a distinct separation between different reasoning

computations and their responsibilities. We can also preserve

certain desirable properties possessed by each level of

computations. For example, CHR notion of observable confluence.

- Monadic computations can be composed to form more complex

computations, hence ADOM can be easily extended with more complex

functionalities. For example, we can build higher level monadic

computations that implements other BDI frameworks, like agentspeak

or 3APL.

Further reading

More information on ADOM can be found here

http://taichi.ddns.comp.nus.edu.sg/taichiwiki/ADOMHomePage

Latest developments

We are working on a much improved version which is scheduled for June 2007.

3.3.5 EHC, ‘Essential Haskell’ Compiler

The purpose of the EHC project is to provide a description of a Haskell

compiler which is as understandable as possible so it can be used for

education as well as research.

For its description an Attribute Grammar system (AG) (→4.3.3) is

used as well as other formalisms allowing compact notation like parser

combinators. For the description of type rules, and the generation of

an AG implementation for those type rules, we use the Ruler

system (→5.5.3) (included in the EHC project).

The EHC project also tackles other issues:

-

In order to avoid overwhelming the innocent reader,

the description of the compiler is organised as a series of

increasingly complex steps.

Each step corresponds to a Haskell subset which itself is an extension

of the previous step.

The first step starts with the essentials, namely typed lambda

calculus.

-

Each step corresponds to an actual, that is, an executable compiler.

Each of these compilers is a compiler in its own right so

experimenting can be done in isolation of additional complexity

introduced in later steps.

-

The description of the compiler uses code fragments which are

retrieved from the source code of the compilers.

In this way the description and source code are kept synchronized.

Currently EHC already incorporates more advanced features like

higher-ranked polymorphism, partial type signatures, class system,

explicit passing of implicit parameters (i.e. class instances),

extensible records, kind polymorphism.

Part of the description of the series of EH compilers is available

as a PhD thesis,

which incorporates previously published material on the EHC project.

The compiler is used for small student projects as well as larger

experiments such as the incorporation of an Attribute Grammar system.

Current activities

We are currently working on the following:

-

A Haskell98 frontend, supporting most of Haskell98, done by Atze Dijkstra.

-

A GRIN (Graph Reduction Intermediate Notation, see below) like backend,

which allows experimenting with global program optimization.

This is done by Jeroen Fokker.

-

Arie Middelkoop will continue with the development of the Ruler system (→5.5.3).

Further reading

An important feature of pure functional programming languages is referential

transparency. A consequence of referential transparency is that functions

cannot be allowed to modify their arguments, unless it can be

guaranteed that they have the sole reference to that argument. This is the

basis of uniqueness typing.

We have been developing a uniqueness type system based on that of the language

Clean but with various improvements: no subtyping is required, and the

type language does not include constraints (types in Clean often involve

implications between uniqueness attribute). This makes the type system

sufficiently similar to standard Hindley/Milner type systems that (1) standard

inference algorithms can be applied, and (2) that modern extensions such as

arbitrary rank types and generalized algebraic data types (GADTs) can easily be

incorporated.

Although our type system is developed in the context of the language Clean, it

is also relevant to Haskell because the core uniqueness type system we propose

is very similar to the Haskell’s core type system. Moreover, we are currently

working on defining syntactic conventions, which programmers can use to write

type annotations, and compilers can use to report types, without mentioning

uniqueness at all.

Further reading

- Edsko de Vries, Rinus Plasmeijer and David Abrahamson,

“Equality-Based Uniqueness Typing”. Presented at TFP 2007, submitted for

post-proceedings.

- Edsko de Vries, Rinus Plasmeijer and David Abrahamson,

“Uniqueness Typing Redefined”, in Z. Horvath, V. Zsok, and

Andrew Butterfield (Eds.): IFL 2006, LNCS 4449 (to appear).

3.3.7 Uniqueness Typing in EHC

Uniqueness typing is a type system feature of the functional

programming language Clean to

identify unique values. The space these values occupy can be recycled

directly after their only use,

thus enabling a form of static garbage collection that greatly

improves the efficiency of functional

programs. Our goal is to take this idea, and use it to produce more

efficient Haskell code.

This project consists of two parts: an analysis to determine which

values are unique (front-end), and a

code specializer that uses the analysis results to optimize memory

management (back-end). We did

focus on the front-end part and implemented a prototype using the

Essential Haskell (→3.3.5) project

as a research vehicle. Code generation is ongoing work of the

Essential Haskell project, and we intent

to integrate the results of the uniqueness analysis in a later phase.

Our uniqueness analyzer works as follows. Each type constructor of a

well-typed program is annotated

with a fresh identifier called the uniqueness annotation. From the

structure of the AST, we generate

a bunch of constraints between these annotations. Solving the

constraints gives a local reference

count (taking the current slice of the program into account) and

global reference count (taking the whole

program into account) of each annotation. The global reference count

is constructed from the local

reference counts and serves as an approximation of an upper bound to

the actual usage of a value.

(Sub)values that end up with an upper bound are considered unique,

others are shared.

Further reading

3.3.8 Object-Oriented Haskell

A set of type and other extensions to a Haskell-derived language to

support the general notion of Object-Oriented programming. An

interpreter is under construction to provide a programming

environment. No public release is currently available as the system

is not yet usable.

3.4 IO

3.4.1 Formal Aspects of Pure Functional I/O

We are particularly interested in formal models of the external effects

of I/O in pure lazy functional languages.

The emphasis is on reasoning about how programs affect their environment,

rather than the issue of which programs have identical I/O behaviour.

Further reading

-

BS01

Andrew Butterfield and Glenn Strong,

“Proving correctness of programs with I/O

— a paradigm comparison”,

in Thomas Arts and Markus Mohnen, editors,

Proceedings of the 13th International Workshop,

IFL2001, LNCS 2312, pages 72–87, 2001.

-

BDS02

Malcolm Dowse, Glenn Strong, and Andrew Butterfield,

“Proving make correct — I/O proofs in Haskell and Clean”,

in Ricardo Peña and Thomas Arts, editors,

Proceedings of IFL 2002, LNCS 2670, pages 68–83, 2002

-

BDE04

Malcolm Dowse, Andrew Butterfield, and Marko van Eekelen,

“Reasoning about deterministic concurrent functional i/o”,

in Clemens Grelck, Frank Huch, and Phil Trinder, editors,

IFL’04 - Revised Papers, LNCS 3474, 2005.

-

BD06

Malcolm Dowse, Andrew Butterfield,

“Modelling Deterministic Concurrent I/O”,

in Julia Lawall, editor,

ICFP 2006, Portland, September 18–20, 2006.

4 Libraries

4.1 Packaging and Distribution

Thanks to Cabal, we can now easily upgrade any installed library

to a new version. There is only one exception: the Base library is closely

tied to compiler internals, so you cannot use the Base library shipped with

GHC 6.4 in GHC 6.6 and vice versa.

The Core library is a project of dividing the Base library into two

parts – a small compiler-specific one (the Core library proper) and

the rest – a new, compiler-independent Base library that uses only

services provided by the Core lib.

Then, any version of the Base library can be used with any version of

the Core library, i.e. with any compiler. Moreover, it means that the

Base library will become available for the new compilers, like

yhc (→2.4) and jhc – this will require adding to the

Core lib only a small amount of code implementing low-level

compiler-specific functionality.

The Core library consists of directories GhcCore, HugsCore …

implementing compiler-specific functionality and Core directory

providing common interface to this functionality, so that external

libs should import only Core.* modules in order to be

compiler-independent.

In practice, the implementation of the Core lib became a refactoring

of the GHC.* modules by splitting them into GHC-specific and

compiler-independent parts. Adding implementations of

compiler-specific parts for other compilers will allow us to compile

the refactored Base library with any compiler, including old versions

of GHC. At this moment, the following modules were succesfully refactored:

GHC.Arr, GHC.Base, GHC.Enum, GHC.Float, GHC.List, GHC.Num, GHC.Real,

GHC.Show, GHC.ST, GHC.STRef; the next step is to refactor IO

functionality.

Further reading

Contact

<Bulat.Ziganshin at gmail.com>

4.2 General libraries

The Test.IOSpec library provides a pure specification of several

functions in the IO monad. This may be of interest to anyone who

wants to debug, reason about, analyse, or test impure code.

The Test.IOSpec library is essentially a drop-in replacement for

several other modules, most notably Data.IORef and

Control.Concurrent. Once you’re satisfied that your functions are

reasonably well-behaved with respect to the pure specification, you

can drop the Test.IOSpec import in favour of the “real” IO

modules.

There’s still quite some work to be done. First and foremost, I’d

like to make it easier to combine different modules. Furthermore,

I’d also like to add new modules providing specifications of other

parts of the IO monad: Control.Concurrent.STM and Control.Exception

are two prime candidates.

If you use Test.IOSpec for anything useful at all, I’d love to hear

from you.

Further reading

http://www.cs.nott.ac.uk/~wss/repos/IOSpec/

4.2.2 PFP – Probabilistic Functional Programming Library for Haskell

The PFP library is a collection of modules for Haskell that

facilitates probabilistic functional programming, that is, programming

with stochastic values. The probabilistic functional programming

approach is based on a data type for representing distributions. A

distribution represent the outcome of a probabilistic event as a

collection of all possible values, tagged with their likelihood.

A nice aspect of this system is that simulations can be specified

independently from their method of execution. That is, we can either

fully simulate or randomize any simulation without altering the code

which defines it.

The library was developed as part of a simulation project with

biologists and genome researchers. We originally had planned to apply

the library to more examples in this area, however, the student

working in this area has left, so this project is currently in limbo.

No changes since the last report. For the next version, some refactorings

are planned. Several variations of this library seem to have evolved.

Somebody is also working on a documentation. Maybe all the different

threads should be brought together on one web page?

Further reading

http://eecs.oregonstate.edu/~erwig/pfp/

GSLHaskell is a simple library for linear algebra and numerical computation,

internally implemented using GSL, BLAS and LAPACK. The goal is to achieve the

functionality and performance of GNU-Octave and similar systems. Recent dev

elopments include important bugfixes and the interface to additional LAPACK

functions. A brief manual is available at the URL below.

This library is used in the easyVision project (→6.19).

Further reading

http://dis.um.es/~alberto/GSLHaskell

4.2.4 An Index Aware Linear Algebra Library

The index aware linear algebra library is a Haskell interface to a set

of common vector and matrix operations. The interface exposes index

types to the type system so that operand conformability can be

statically guaranteed. For instance, an attempt to add or multiply two

incompatibly sized matrices is a static error.

The library should still be considered alpha quality. A backend for

sparse vector types is near completion, which allows low-overhead

“views” of tensors as arbitrarily nested vectors. For instance, a

matrix, which we represent as a tuple-indexed vector, could also be

seen as a (rank 1) vector of (rank 1) vectors. These different views

usually produce different behaviours under common vector operations,

thus increasing the expressive power of the interface.

Further reading

4.2.5 Haskell Rules: Embedding Rule Systems in Haskell

Haskell Rules is a domain-specific embedded language that allows

semantic rules to be expressed as Haskell functions. This DSEL

provides logical variables, unification, substitution,

non-determinism, and backtracking. It also allows Haskell functions to

be lifted to operate on logical variables. These functions are

automatically delayed so that the substitutions can be applied. The

rule DSEL allows various kinds of logical embedding, for example,

including logical variables within a data structure or wrapping a data

structure with a logical wrapper.

No changes since last report. No plans for future versions.

Further reading

http://eecs.oregonstate.edu/~erwig/HaskellRules/

4.3 Parsing and transforming

The InterpreterLib library is a collection of modules for constructing

composable, monadic interpreters in Haskell. The library provides a

collection of functions and type classes that implement semantic

algebras in the style of Hutton and Duponcheel. Datatypes for related

language constructs are defined as non-recursive functors and composed

using a higher-order sum functor. The full AST for a language is the

least fixed point of the sum of its constructs’ functors. To denote a

term in the language, a sum algebra combinator composes algebras for

each construct functor into a semantic algebra suitable for the full

language and the catamorphism introduces recursion. Another piece of

InterpreterLib is a novel suite of algebra combinators conducive to

monadic encapsulation and semantic re-use. The Algebra Compiler, an

ancillary preprocessor derived from polytypic programming principles,

generates functorial boilerplate Haskell code from minimal

specifications of language constructs. As a whole, the InterpreterLib

library enables rapid prototyping and simplified maintenance of

language processors.

InterpreterLib is available for download at the link provided below.

Version 1.0 of InterpreterLib was released in April 2007.

Further reading

http://www.ittc.ku.edu/Projects/SLDG/projects/project-InterpreterLib.htm

Contact

<nfrisby at ittc.ku.edu>

HsColour is a small command-line tool (and Haskell library) that

syntax-colorises Haskell source code for multiple output formats. It

consists of a token lexer, classification engine, and multiple separate

pretty-printers for the different formats. Current supported output

formats are ANSI terminal codes, HTML (with or without CSS), and LaTeX.

In all cases, the colours and highlight styles (bold, underline, etc)

are configurable. It can additionally place HTML anchors in front of

declarations, to be used as the target of links you generate in

Haddock documentation.

HsColour is widely used to make source code in blog entries look more

pretty, to generate library documentation on the web, and to improve the

readability of ghc’s intermediate-code debugging output.

Further reading

4.3.3 Utrecht Parsing Library and Attribute Grammar System

The Utrecht attribute grammar system has been extended:

- the attribute flow analysis has been completely implemented by

Joost Verhoog, and it is now possible to generate visit-function

based evaluators, which are much faster and use less space. We assume

that such functions are strict in all their arguments, and generate

the appropriate `seq` calls to make the GHC aware of this. As a

result also case’s are generated instead on let’s wherever possible.

Since the last report several improvements were made: better error

reporting of cyclic dependencies, and a large speed improvements in

the overall flow analysis have been made. The first versions of the

EHC now compile without circularities, nor direct nor induced by

fixing the attribute evaluation orders

- we are adding better support for higher order attribute

grammars and forwarding rules

- Tthe error correcting strategies of the parser combinators are

now being used as a base for providing automatic feedback in systems

for training strategies (Johan Jeuring, Arthur van Leeuwen)

- a start has been made with providing Haddock information with

the code of the parser combinators

- we plan to enhance the parser combinators with a second basic

parsing engine, in order to support monadic uses of the combinators

while keeping the error correcting capabilities

The software is again available through the Haskell Utrecht Tools page.

(

http://www.cs.uu.nl/wiki/HUT/WebHome).

4.3.4 Left-Recursive Parser Combinators

Existing parser combinators cannot accommodate left-recursive

grammars. In some applications, this shortcoming requires grammars

to be rewritten to non-left-recursive form which may hinder

definition of the associated semantic functions. In applications

that involve ambiguous pattern-matching, such as NLP, the

rewriting to non-left-recursive form may result in loss of parses.

In our project, we have developed combinators which

accommodate ambiguity and left-recursion (both direct

and indirect) in polynomial time, and which generate

polynomial-sized representations of the exponential

number of parse trees corresponding to highly-ambiguous

input. The compact representations are similar to those

generated by Tomita’s algorithm.

Polynomial complexity for ambiguous grammars is achieved through

memoization of fully-backtracking combinators. Systematic

memoization is implemented using monads. Direct left-recursion

is accommodated by storing additional data in the memotable which

is used to curtail recursive descent when no parse is possible.

Indirect left recursion is accommodated by use of the context in

which results are created and the context in which they are

subsequently considered for re-use.

We have implemented our approach in Haskell, and are in the

process of optimizing the code and preparing it for release in

December of 2006.

Further reading

A technical report with definitions, proofs of termination and

complexity, and reference to publications, is available at:

http://cs.uwindsor.ca/~richard/GPC/TECH_REPORT_06_022.pdf

4.3.5 RecLib – A Recursion and Traversal Library for Haskell

The Recursion Library for Haskell provides a rich set of generic

traversal strategies to facilitate the flexible specification of

generic term traversals. The underlying mechanism is the Scrap Your

Boilerplate (SYB) approach. Most of the strategies that are used to

implement recursion operators are taken from Stratego.

The library is divided into two layers. The high-level layer defines a

universal traverse function that can be parameterized by five aspects

of a traversal.

The low-level layer provides a set of primitives that can be used for

defining more traversal strategies not covered in the library. Two

fixpoint strategies inntermost and outermost are defined to

demonstrate the usage of the primitives. The design and implementation

of the library is explained in a paper listed on the project web page.

No changes since last report. No plans for future versions.

Further reading

http://eecs.oregonstate.edu/~erwig/reclib/

4.4 System

Harpy is a library for run-time code generation of IA-32 machine code. It

provides not only a low level interface to code generation operations, but

also a convenient domain specific language for machine code fragments, a

collection of code generation combinators and a disassembler. We use it in two

independent (unpublished) projects: On the one hand, we are implementing a

just-in-time compiler for functional programs, on the other hand, we use it to

implement an efficient type checker for a dependently typed language. It might

be useful in other domains, where specialised code generated at run-time can

improve performance.

Harpy’s implementation makes use of the foreign function interface, but only

contains functions written in Haskell. Moreover, it has some uses of other

interesting Haskell extensions as for example multi-parameter type classes to

provide an in-line assembly language, and Template Haskell to generate stub

functions to call run-time generated code. The disassembler uses Parsec to

parse the instruction stream.

We intend to implement supporting operations for garbage collectors

cooperating with run-time generated code.

We made an initial public release, and are now looking forward to ideas from

the community to show some further uses of run-time code generation in

Haskell.

Further reading

http://uebb.cs.tu-berlin.de/harpy/

hs-plugins is a library for dynamic loading and runtime compilation of

Haskell modules, for Haskell and foreign language applications. It can

be used to implement application plugins, hot swapping of modules in

running applications, runtime evaluation of Haskell, and enables the use

of Haskell as an application extension language.

hs-plugins has been ported to GHC 6.6.

Further reading

4.4.3 The libpcap Binding

Nicholas Burlett has now created a cabalized version and made it

available on hackage. However, beware that this doesn’t use

autoconf to check your system supports sa_len and it doesn’t

check which version of libpcap is installed. It will probably

work but may not. If it doesn’t then try this:

-

Install libpcap. I used 0.9.4.

-

autoheader

-

autoconf

-

./configure

-

hsc2hs Pcap.hsc

-

ghc -o test test.hs --make -lpcap -fglasgow-exts

All contributions are welcome especially if you know how to get

cabal to run autoconf and check for versions of non-Haskell

libraries.

Streams is the new I/O library developed to extend existing

Haskell’s Handle-based I/O features. It includes:

- Hugs (→2.2) and GHC (→2.1) compatibility

- Lightning speed (up to 100 times faster than Handle-based I/O)

- UTF-8 and other Char encodings for text I/O

- Various stream types (files, memory-mapped files, memory and string

buffers, pipes)

- Binary I/O and serialization facilities (see AltBinary lib (→4.7.1))

- Support for streams working in IO, ST and other monads

The main idea of the library is its clear class-based design that allows

to split all functionality into a set of small maintainable modules, each of

which supports one type of streams (file, memory buffer …) or one

feature (locking, buffering, Char encoding …). The interface of each such

module is fully defined by some type class (Stream, MemoryStream,

TextStream), so the library can be easily extended by third party

modules that implement additional stream types (network sockets,

array buffers …) and features (overlapped I/O …).

The new version 0.2 adds support for memory-mapped files, files >4GB on Windows,

ByteString I/O, full backward compatibility with the NewBinary library

(both byte-aligned and bit-aligned modes), more orthogonal serialization API,

serialization from/to memory buffer, and even better speed.

Sorry, it was never documented

The upcoming version 0.3 will provide automatic buffer deallocation using ForeignPtrs,

serialization from/to ByteStrings, full backward compatibility with Handle-base I/O

and, hopefully, full documentation for all its features.

Further reading

Contact

<Bulat.Ziganshin at gmail.com>

System.FilePath is a library for manipulating FilePath’s in Haskell programs.

This library is Posix (Linux) and Windows capable – just import

System.FilePath and it will pick the right one. It is written in Haskell 98 +

Hierarchical Modules. There are features to manipulate the extension,

filename, directory structure etc. of a FilePath.

This library has now been incorporated into the set of standard libraries

distributed with all Haskell compilers. From GHC 6.6.1 onwards, the filepath

library is always available. Version 1.0 of this library has been released,

and no further changes are envisaged.

Further reading

http://www-users.cs.york.ac.uk/~ndm/filepath/

hinotify is a simple Haskell wrapper for the Linux kernel’s inotify

mechanism. inotify allows applications to watch file changes since

Linux kernel 2.6.13. You can for example use it to do a proper

locking procedure on a set of files, or keep your application up do

date on a directory of files in a fast and clean way.

hinotify is still a very young library and might still be a bit rough around

the edges. Next updates will include non-threading support and perhaps a

little reworked API.

Further reading

4.5 Databases and data storage

The CoddFish library provides a strongly typed model

of relational databases and operations on them, which allows for

static checking of errors and integrity at compile time. Apart from

the standard relational database operations, it allows the definition

of functional dependencies and, therefore, provides normal form

verification and database transformation operations.

The library makes essential use of the HList library (→4.6.6), which

provides arbitrary-length tuples (or heterogeneous lists), and makes extensive

use of type-level programming with multi-parameter type classes.

CoddFish lends itself as a sandbox for the design of typed languages

for modeling, programming, and transforming relational databases.

Currently, a reimplementation of CoddFish based on GADTs is underway.

Further reading

Takusen is a library for accessing DBMS’s. Like HSQL, we support

arbitrary SQL statements (currently strings, extensible to anything

that can be converted to a string).

Takusen’s ‘unique-selling-point’ is safety and

efficiency. We statically ensure all acquired database resources such

as cursors, connection and statement handles are released, exactly

once, at predictable times. Takusen can avoid loading the whole result

set in memory and so can handle queries returning millions of rows, in

constant space. Takusen also supports automatic marshalling and