Haskell Communities and Activities Report

Sixteenth edition – May 2009

Janis Voigtländer (ed.)

Peter Achten

Andy Adams-Moran

Lloyd Allison

Tiago Miguel Laureano Alves

Krasimir Angelov

Heinrich Apfelmus

Emil Axelsson

Arthur Baars

Justin Bailey

Alistair Bayley

Jean-Philippe Bernardy

Clifford Beshers

Gwern Branwen

Joachim Breitner

Niklas Broberg

Björn Buckwalter

Denis Bueno

Andrew Butterfield

Roman Cheplyaka

Olaf Chitil

Jan Christiansen

Sterling Clover

Alberto Gomez Corona

Duncan Coutts

Jacome Cunha

Nils Anders Danielsson

Atze Dijkstra

Robert Dockins

Chris Eidhof

Conal Elliott

Henrique Ferreiro Garcia

Marc Fontaine

Nicolas Frisby

Peter Gavin

Patai Gergely

Brett G. Giles

Andy Gill

George Giorgidze

Dimitry Golubovsky

Daniel Gorin

Jurriaan Hage

Bastiaan Heeren

Claude Heiland-Allen

Aycan Irican

Judah Jacobson

Jan Martin Jansen

Wolfgang Jeltsch

Florian Haftmann

Kevin Hammond

Enzo Haussecker

Christopher Lane Hinson

Guillaume Hoffmann

Martin Hofmann

Creighton Hogg

Csaba Hruska

Liyang HU

Paul Hudak

Graham Hutton

Farid Karimipour

Garrin Kimmell

Oleg Kiselyov

Lennart Kolmodin

Slawomir Kolodynski

Michal Konecny

Eric Kow

Stephen Lavelle

Sean Leather

Huiqing Li

Bas Lijnse

Ben Lippmeier

Andres Löh

Rita Loogen

Ian Lynagh

John MacFarlane

Christian Maeder

Jose Pedro Magalhães

Ketil Malde

Blazevic Mario

Simon Marlow

Michael Marte

Bart Massey

Simon Michael

Arie Middelkoop

Ivan Lazar Miljenovic

Neil Mitchell

Maarten de Mol

Dino Morelli

Matthew Naylor

Rishiyur Nikhil

Thomas van Noort

Johan Nordlander

Jeremy O’Donoghue

Patrick O. Perry

Jens Petersen

Simon Peyton Jones

Dan Popa

Fabian Reck

Claus Reinke

Alexey Rodriguez

Alberto Ruiz

David Sabel

Ingo Sander

Uwe Schmidt

Martijn Schrage

Tom Schrijvers

Paulo Silva

Ben Sinclair

Ganesh Sittampalam

Martijn van Steenbergen

Dominic Steinitz

Don Stewart

Jon Strait

Martin Sulzmann

Doaitse Swierstra

Wouter Swierstra

Hans van Thiel

Henning Thielemann

Wren Ng Thornton

Phil Trinder

Jared Updike

Marcos Viera

Miguel Vilaca

David Waern

Malcolm Wallace

Jinjing Wang

Kim-Ee Yeoh

Brent Yorgey

Preface

This is the 16th edition of the Haskell Communities and Activities Report.

There are a number of completely new entries and many small updates.

As usual, fresh entries are formatted using a blue background, while updated entries have a header with a blue background.

Entries on which no activity has been reported for a year or longer have been dropped.

Please do revive them next time if you have news on them.

My aim for October is to significantly increase the percentage of blue throughout the pages of the report.

Clearly, there are two ways to achieve this: have more blue or have less white.

My preference is the former option, and I count on the community to make it feasible.

In any case, I will tend to be less conservative than this time around: I plan to drop, or simply replace with pointers to previous versions, entries that see no update between now and then.

A call for new entries and updates to existing ones will be issued on the usual mailing lists.

Now enjoy the current report and see what other Haskellers have been up to in the last half year.

Any kind of feedback is of course very welcome <hcar at haskell.org>, as are thoughts on the plan outlined above.

Janis Voigtländer, Technische Universität Dresden, Germany

1 Information Sources

1.1 Book: Programming in Haskell

Haskell is one of the leading languages for teaching functional

programming, enabling students to write simpler and cleaner code,

and to learn how to structure and reason about programs. This

introduction is ideal for beginners: it requires no previous

programming experience and all concepts are explained from first

principles via carefully chosen examples. Each chapter includes

exercises that range from the straightforward to extended projects,

plus suggestions for further reading on more advanced topics. The

presentation is clear and simple, and benefits from having been

refined and class-tested over several years.

Features include: freely accessible PowerPoint slides for each

chapter; solutions to exercises, and examination questions (with

solutions) available to instructors; downloadable code that is

compliant with the latest Haskell release.

Publication details:

- Published by Cambridge University Press, 2007. Paperback:

ISBN 0521692695; Hardback: ISBN 0521871727; eBook: ISBN 051129218X;

Kindle: ASIN B001FSKE6Q.

In-depth review:

Further reading

http://www.cs.nott.ac.uk/~gmh/book.html

1.2 The Monad.Reader

There are plenty of academic papers about Haskell and plenty of

informative pages on the

HaskellWiki. Unfortunately, there is not much

between the two extremes. That is where The Monad.Reader tries to

fit in: more formal than a Wiki page, but more casual than a journal

article.

There are plenty of interesting ideas that maybe do not warrant an

academic publication — but that does not mean these ideas are not

worth writing about! Communicating ideas to a wide audience is much

more important than concealing them in some esoteric journal. Even

if it has all been done before in the Journal of Impossibly

Complicated Theoretical Stuff, explaining a neat idea

about “warm fuzzy things” to the rest of us can still be plain fun.

The Monad.Reader is also a great place to write about a tool or

application that deserves more attention. Most programmers do not

enjoy writing manuals; writing a tutorial for The Monad.Reader,

however, is an excellent way to put your code in the limelight and

reach hundreds of potential users.

Since the last HCAR there have been two new issues, including

another “Summer of Code” special. With your submissions, I expect

we can keep publishing quarterly issues of the Monad.Reader. Check

out the Monad.Reader homepage for all the information you need to

start writing your article.

Further reading

http://www.haskell.org/haskellwiki/The_Monad.Reader

1.3 Haskell Wikibook

The goal of the Haskell wikibook project is to build a community textbook

about Haskell that is at once free (as in freedom and in beer), gentle, and

comprehensive. We think that the many marvelous ideas of lazy functional

programming can and thus should be accessible to everyone in a central

place.

Currently, the wikibook is slowly advancing in breadth rather than depth, with a new sketch of a chapter on polymorphism and higher-rank types, and a new page on Monoids. Additional authors and contributors who help write new content, or who simply spot mistakes and ask those questions we had never thought of, are more than welcome!

Further reading

1.4 Monad Tutorial

The “Greenhorn’s Guide to becoming a Monad Cowboy” is yet another monad tutorial.

It covers the basics, with simple examples, and includes monad transformers and monadic functions. The didactic style is a variation on the “for dummies” format. Estimated learning time, for a monad novice, is 2–3 days.

It is available at http://www.muitovar.com/monad/moncow.xhtml

Further reading

http://www.muitovar.com/

1.5 Oleg’s Mini tutorials and assorted small projects

The collection of various Haskell mini tutorials and assorted

small projects

(http://okmij.org/ftp/Haskell/) has received two additions:

Monadic Regions

Monadic Regions is a technique for managing resources such as memory

areas, file handles, database connections, etc. Regions offer an

attractive alternative to both manual allocation of resources and

garbage collection. Unlike the latter, region-based resource

management makes resource disposal and finalization predictable. We

can precisely identify the program points where allocated resources

are freed and finalization actions are run. Like other automatic

resource management schemes, regions statically assure us that no

resource is used after it is disposed of, no resource is freed twice,

and all resources are eventually deallocated.

We first describe a very simple implementation of Monadic Regions for

the particular case of file IO, statically ensuring that there are no

attempts to access an already closed file handle, and all open file

handles are eventually closed. Many handles can be open

simultaneously; the type system enforces the proper nesting of their

regions. The technique has no run-time overhead and induces no

run-time errors, requiring only the rank-2–type extension to

Haskell 98. The technique underlies safe database interfaces of

Takusen.
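
The rank-2 idea behind the simple file-IO case can be sketched roughly as follows. This is only an illustration under stated assumptions, not the code from the web page: the names Region, RHandle, runRegion, openR, getLineR, and putLineR are hypothetical, the sketch covers a single non-nested region, and it keeps a run-time list of open handles for simplicity.

```haskell
{-# LANGUAGE RankNTypes #-}

import Control.Exception (finally)
import Data.IORef (IORef, modifyIORef, newIORef, readIORef)
import System.IO (Handle, IOMode (..), hClose, hGetLine, hPutStrLn, openFile)

-- | A computation running inside region @s@ (hypothetical name). It is
-- passed the list of handles opened so far, so they can be closed on exit.
newtype Region s a = Region { unRegion :: IORef [Handle] -> IO a }

instance Functor (Region s) where
  fmap f (Region g) = Region (fmap f . g)

instance Applicative (Region s) where
  pure x = Region (\_ -> pure x)
  Region f <*> Region g = Region (\r -> f r <*> g r)

instance Monad (Region s) where
  Region g >>= k = Region (\r -> g r >>= \a -> unRegion (k a) r)

-- | A file handle labelled with the region that owns it.
newtype RHandle s = RHandle Handle

-- | Run a region and close every handle opened inside it, even if an
-- exception is raised. Because @s@ is universally quantified, the result
-- type @a@ cannot mention it, so no 'RHandle s' can leak out.
runRegion :: (forall s. Region s a) -> IO a
runRegion body = do
  ref <- newIORef []
  unRegion body ref `finally` (readIORef ref >>= mapM_ hClose)

-- | Open a file and register its handle with the current region.
openR :: FilePath -> IOMode -> Region s (RHandle s)
openR path mode = Region $ \ref -> do
  h <- openFile path mode
  modifyIORef ref (h :)
  pure (RHandle h)

-- | Read one line; only usable inside the region that owns the handle.
getLineR :: RHandle s -> Region s String
getLineR (RHandle h) = Region (\_ -> hGetLine h)

-- | Write one line; only usable inside the region that owns the handle.
putLineR :: RHandle s -> String -> Region s ()
putLineR (RHandle h) str = Region (\_ -> hPutStrLn h str)
```

With these definitions, an expression such as runRegion (openR "f.txt" ReadMode) is rejected by the type checker, because the handle's region label would escape, whereas runRegion (openR "f.txt" ReadMode >>= getLineR) is accepted. In real code the Region and RHandle constructors would be kept out of the module's export list.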

The simplicity of the implementation comes with a limitation: it is

impossible to store file handles in reference cells and return them

from an inner region, even when it is safe to do so. We show that

practice often requires more flexible region policies.

With Chung-chieh Shan, we developed a novel, improved, and extended

implementation of Fluet and Morrisett’s calculus of nested regions

in Haskell, with file handles as resources. Our library supports

region polymorphism and implicit region subtyping, along with

higher-order functions, mutable state, recursion, and run-time

exceptions. A program may allocate arbitrarily many resources and

dispose of them in any order, not necessarily LIFO. Region annotations

are part of an expression’s inferred type. Our implementation assures

timely deallocation even when resources have markedly different

lifetimes and the identity of the longest-living resource is

determined only dynamically.

For contrast, we also implement a Haskell library for manual resource

management, where deallocation is explicit and safety is assured by a

form of linear types. We implement the linear typing in Haskell with

the help of phantom types and a parameterized monad to statically

track the type-state of resources.

http://okmij.org/ftp/Haskell/regions.html

Variable (type)state “monad”

The familiar State monad lets us represent computations with a state

that can be queried and updated. The state must have the same type

during the entire computation, however. One sometimes wants to express

a computation where not only the value but also the type of the state

can be updated – while maintaining static typing. We wish for a

parameterized “monad” that indexes each monadic type by an initial

(type)state and a final (type)state. The effect of an effectful

computation thus becomes apparent in the type of the computation, and

so can be statically reasoned about.

In a parameterized monad m p q a, m is a type

constructor of three type arguments. The argument a is the

type of values produced by the monadic computation. The two other

arguments describe the type of the computation state before and after

the computation is executed. As in an ordinary monad, bind is

required to be associative and ret to be the left and the

right unit of bind. All ordinary monads are particular cases

of parameterized monads, when p and q are the same

and constant throughout the computation. Like the ordinary class

monad, parameterized monads are implementable in Haskell 98.
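
As a minimal sketch of the idea, the class below follows the description above, with ret and bind as its operations. The class name PMonad and the VState example (with vget, vput, and example) are assumptions made for illustration and are not taken from the web page.

```haskell
-- | A parameterized monad: @m p q a@ is a computation that starts in
-- (type)state @p@, finishes in (type)state @q@, and produces an @a@.
class PMonad m where
  ret  :: a -> m p p a
  bind :: m p q a -> (a -> m q r b) -> m p r b

-- | A state "monad" whose state may change its type along the way.
newtype VState p q a = VState { runVState :: p -> (a, q) }

instance PMonad VState where
  ret x = VState (\s -> (x, s))
  bind (VState m) k = VState (\s -> let (a, s') = m s in runVState (k a) s')

-- | Read the current state; the state type is unchanged.
vget :: VState s s s
vget = VState (\s -> (s, s))

-- | Replace the state, possibly with a value of a different type.
vput :: q -> VState p q ()
vput s = VState (\_ -> ((), s))

-- | Starts with an Int state and finishes with a String state; the
-- change of state type is visible in the type of the computation.
example :: VState Int String Bool
example =
  vget `bind` \n ->
  vput (show (n + 1)) `bind` \_ ->
  vget `bind` \str ->
  ret (length str > 1)
```

For instance, runVState example 9 evaluates to (True, "10"): the initial Int state 9 is incremented, stored as the string "10", and the result reports whether that string has more than one character.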

The web page describes various applications of the type-state monad:

- IO-like monad that statically enforces a locking protocol;

- statically tracking ticks so as to enforce timing and protocol

restrictions when writing device drivers and their generators;

- implementing a form of linear types for resource management.
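
The first application in this list, a statically enforced locking protocol, might look roughly like the sketch below. All names here (LIO, Locked, Unlocked, acquire, release, writeShared, runLIO, safeUpdate, demo) are hypothetical, and the sketch is an assumption about the general shape of such an interface rather than the code from the web page.

```haskell
import Control.Concurrent.MVar (MVar, newMVar, putMVar, takeMVar)
import Data.IORef (IORef, newIORef, readIORef, writeIORef)

-- Phantom types naming the two lock states.
data Locked
data Unlocked

-- | An IO-like action indexed by the lock state before (@p@) and after
-- (@q@) it runs.
newtype LIO p q a = LIO { unLIO :: IO a }

lret :: a -> LIO p p a
lret = LIO . pure

lbind :: LIO p q a -> (a -> LIO q r b) -> LIO p r b
lbind (LIO m) k = LIO (m >>= unLIO . k)

-- | Taking the lock is only allowed when it is not already held.
acquire :: MVar () -> LIO Unlocked Locked ()
acquire lock = LIO (takeMVar lock)

-- | Releasing the lock is only allowed while it is held.
release :: MVar () -> LIO Locked Unlocked ()
release lock = LIO (putMVar lock ())

-- | The shared cell may only be written while the lock is held.
writeShared :: IORef Int -> Int -> LIO Locked Locked ()
writeShared ref v = LIO (writeIORef ref v)

-- | Only balanced protocols, which start and end unlocked, can be run.
runLIO :: LIO Unlocked Unlocked a -> IO a
runLIO = unLIO

-- | A well-typed protocol: acquire, write, release.
safeUpdate :: MVar () -> IORef Int -> LIO Unlocked Unlocked ()
safeUpdate lock ref =
  acquire lock `lbind` \_ ->
  writeShared ref 42 `lbind` \_ ->
  release lock

-- | Small driver that runs the protocol and returns the stored value.
demo :: IO Int
demo = do
  lock <- newMVar ()
  ref  <- newIORef 0
  runLIO (safeUpdate lock ref)
  readIORef ref
```

Dropping the acquire step, or calling release twice, makes safeUpdate fail to type check, since the lock states no longer line up. The guarantee relies on the LIO constructor being hidden from client modules.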

http://okmij.org/ftp/Computation/monads.html#param-monad

1.6 Haskell Cheat Sheet

The “Haskell Cheat Sheet” covers the syntax, keywords, and other

language elements of Haskell 98. It is intended for beginning to

intermediate Haskell programmers and can even serve as a memory aid to

experts.

The cheat sheet is distributed as a PDF and executable source file in

one package. It is available from Hackage and can be installed with

cabal.

Further reading

http://cheatsheet.codeslower.com

1.7 The Happstack Tutorial

The Happstack Tutorial aims to be a definitive, up-to-date resource on how to use the Happstack libraries. I have recently taken over the project from Thomas Hartman. An instance of the Happstack Tutorial is running as a stand-alone website, but in order to truly dig into writing Happstack applications you can cabal install it from Hackage and experiment with it locally.

Happstack Tutorial is updated along with the Happstack Hackage releases, but the darcs head is generally compatible with the darcs head of Happstack.

I am adding a few small tutorials to the package with every release and am always looking for more feedback from beginning Happstack users.

Further reading

2 Implementations

2.1 The Glasgow Haskell Compiler

The last six months have been busy ones for GHC.

The GHC 6.10 branch

We finally released GHC 6.10.1 on 4 November 2008, with a raft of new

features we discussed in the October 2008 status report.

A little over five months later we released GHC 6.10.2, with more than 200 new

patches fixing more than 100 tickets raised against 6.10.1. We hoped that would be

it for the 6.10 branch, but we slipped up and 6.10.2 contained a couple

of annoying regressions (concerning Control-C and editline).

By the time you read this, GHC 6.10.3 (fixing these regressions) should

be out, after which we hope to shift all our attention to the 6.12 branch.

The new build system

Our old multi-makefile build system had grown old, crufty, and hard to

understand. And it did not even work very well. So we embarked

on a plan to re-implement the build system.

Rather than impose the new system on everyone immediately, Ian and Simon (Marlow)

did all the development on a branch, and invited others to give it a whirl.

Finally, on 25 April 2009, we went “live” on the HEAD.

The new design is described on the Wiki. It still

uses make, but it is now based on a non-recursive make strategy. This means that dependency tracking is vastly more accurate than before,

so that if something “should” be built it “will” be built.

The new build system is also much less dependent on Cabal than it was before.

We now use Cabal only to read package meta-data from the <pkg>.cabal file,

and emit a bunch of Makefile bindings. Everything else is done in make.

You can read more about the design rationale on the Wiki.

We also advertised our intent to switch to Git as our

version control system (VCS). We always planned to change the build system first, and only

then tackle the VCS. Since then, there has been lots of activity on the Darcs front, so

it is not clear how high a priority this change is. We would welcome your opinion (<cvs-ghc at haskell.org>).

The GHC 6.12 branch

The main list of new features in GHC 6.12 remains much the same as it was in our last status report. Happily, there has been progress on all fronts.

Parallel performance

Simon Marlow has been working on improving performance for parallel programs, and there will be significant improvements to be had in 6.12 compared to 6.10. In particular:

- There is an implementation of lock-free work-stealing queues, used for load-balancing of sparks and also in the parallel GC. Initial work on this was done by Jost Berthold.

- The parallel GC itself has been tuned to retain locality in parallel programs. Some speedups are dramatic.

- The overhead for running a spark is much lower, as sparks are now run in batches rather than creating a new thread for each one. This makes it possible to take advantage of parallelism at a much finer granularity than before.

- There is optional “eager-blackholing”, with the new -feager-blackholing flag, which can help eliminate duplicate computation in parallel programs.
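
For readers who have not met sparks before, the following small sketch shows how a program creates them with par and pseq. It is an illustration only and is not taken from the GHC sources or the paper; the functions fib and parFib and the threshold of 20 are assumptions chosen for the example. The combinators are imported here from GHC.Conc in base; they are more commonly imported from Control.Parallel in the parallel package.

```haskell
import GHC.Conc (par, pseq)

-- | Naive Fibonacci, used only as a source of CPU work.
fib :: Int -> Integer
fib n
  | n < 2     = toInteger n
  | otherwise = fib (n - 1) + fib (n - 2)

-- | Fibonacci with sparks. @x `par` e@ records @x@ as a spark that an
-- idle core may evaluate, and @y `pseq` e@ evaluates @y@ on the current
-- thread before continuing with @e@.
parFib :: Int -> Integer
parFib n
  | n < 20    = fib n  -- below the (arbitrary) threshold, stay sequential
  | otherwise = x `par` (y `pseq` (x + y))
  where
    x = parFib (n - 1)
    y = parFib (n - 2)
```

To actually run sparks on several cores, the program has to be compiled with -threaded and started with the +RTS -N option. The threshold trades spark overhead against available parallelism, which is exactly the cost that cheaper sparks reduce.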

Our recent ICFP submission Runtime Support for Multicore Haskell describes all these in more detail, and gives extensive measurements.

Things are not in their final state yet: for example, we still need to work on tuning the default flag settings to get good performance for more programs without any manual tweaking. There are some larger possibilities on the horizon too, such as redesigning the garbage collector to support per-CPU independent GC, which will reduce the synchronization overheads of the current stop-the-world strategy.

Parallel profiling

GHC 6.12 will feature parallel profiling in the form of ThreadScope, under development by Satnam Singh, Donnie Jones and Simon Marlow. Support has been added to GHC for lightweight runtime tracing (work originally done by Donnie Jones), which is used by ThreadScope to generate profiles of the program’s real-time execution behavior. This work is still in the very early stages, and there are many interesting directions we could take this in.

Data Parallel Haskell

Data Parallel Haskell remains under very active development by Manuel Chakravarty, Gabriele Keller, Roman Leshchinskiy, and Simon Peyton Jones. The current state of play is documented on the Wiki. We also wrote a substantial paper Harnessing the multicores: nested data parallelism in Haskell for FSTTCS 2008; you may find this paper a useful tutorial on the whole idea of nested data parallelism.

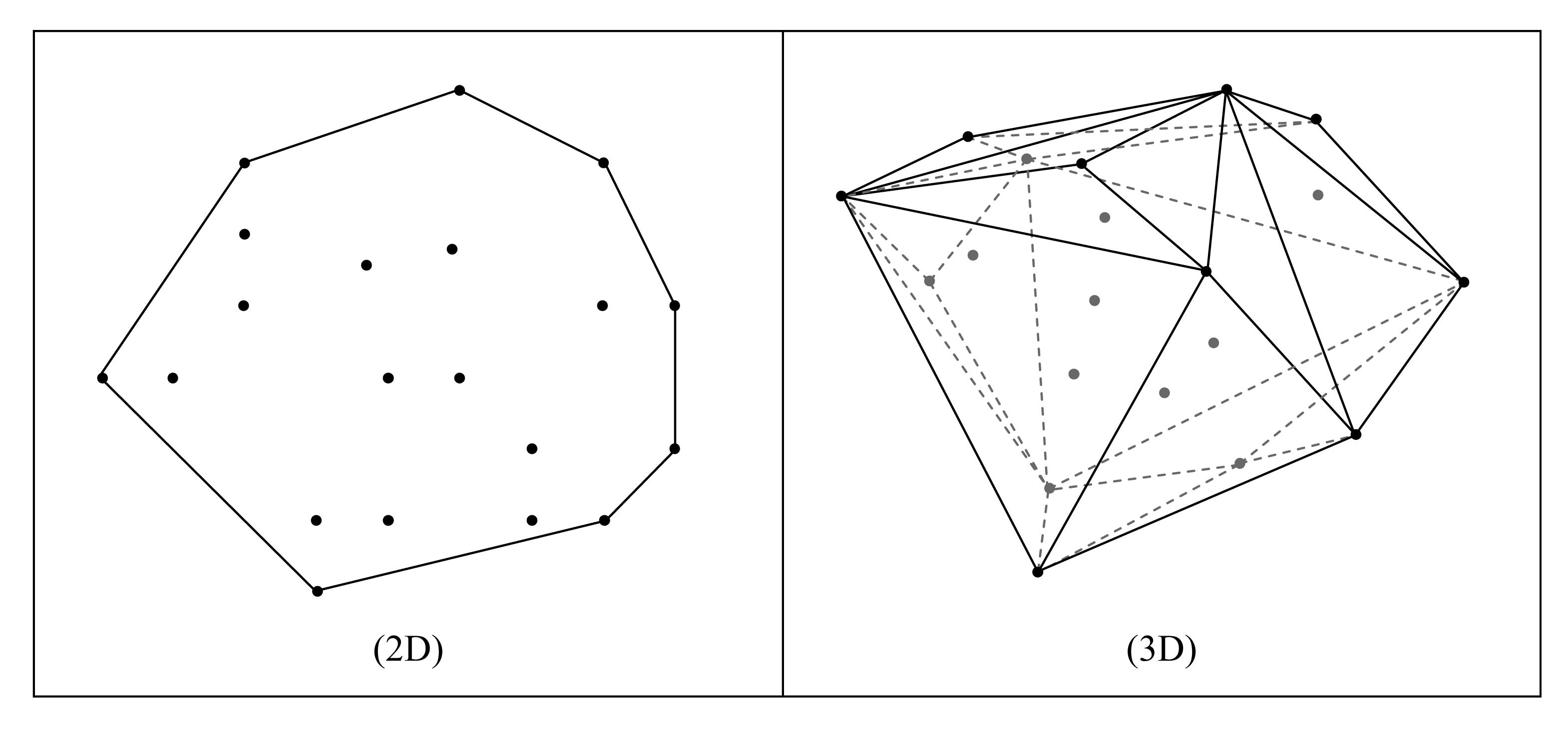

The system currently works well for small programs, such as computing a dot product or the product of a sparse matrix with a dense vector. For such applications, the generated code is as close to hand written C code as GHC’s current code generator enables us to be (i.e., within a factor of 2 or 3). We ran three small benchmarks on an 8-core x86 server and on an 8-core UltraSPARC T2 server, from which we derived two comparative figures: a comparison between x86 and T2 on a memory-intensive benchmark (dot product) and a summary of the speedup of three benchmarks on x86 and T2. Overall, we achieved good absolute performance and good scalability on the hardware we tested.
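
To make the two benchmark kernels concrete, here is a sketch of them written with ordinary Haskell lists. This is sequential code and does not use Data Parallel Haskell's parallel arrays or its syntax; it only shows the shape of the computations, including the nested structure of the sparse matrix. The type and function names are assumptions made for this example.

```haskell
-- | A dense vector.
type Vector = [Double]

-- | One row of a sparse matrix: (column index, value) pairs for the
-- non-zero entries only. Rows may have very different lengths.
type SparseRow = [(Int, Double)]

-- | A sparse matrix as a nested collection of rows.
type SparseMatrix = [SparseRow]

-- | Dot product of two dense vectors.
dotp :: Vector -> Vector -> Double
dotp xs ys = sum (zipWith (*) xs ys)

-- | Product of a sparse matrix with a dense vector. The inner
-- comprehension works on one irregular row and the outer one ranges
-- over all rows, which is the nesting that nested data parallelism
-- is designed to handle.
smvm :: SparseMatrix -> Vector -> Vector
smvm m v = [ sum [ x * (v !! i) | (i, x) <- row ] | row <- m ]
```

For example, smvm [[(0,2),(2,1)],[],[(1,3)]] [1,2,3] multiplies a 3×3 matrix with three non-zero entries by a dense vector and yields [5.0,0.0,6.0].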

Our next step is to scale the implementation up to properly handle larger programs. In particular, we are currently working on improving the interaction between vectorized code, the aggressively-inlined array library, and GHC’s standard optimization phases. The current main obstacle is excessively long compile times, due to a temporary code explosion during optimization. Moreover, Gabriele started to work on integrating specialized support for regular multi-dimensional arrays into the existing framework for nested data parallelism.

Type system improvements

The whole area of GADTs, indexed type families, and associated types remains in a ferment of development. It is clear that type families are jolly useful: many people are using them even though they are only partially supported by GHC 6.10. (You might enjoy a programmers-eye-view tutorial Fun with type functions that Oleg, Ken, and Simon wrote in April 2009.)
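
As a small taste of what type families look like in practice, the sketch below uses an associated type synonym to compute the element type of a container. The class Collection, the SmallSet instance, and the restriction of SmallSet to elements between 0 and 63 are assumptions made for this example; it is not taken from the tutorial mentioned above.

```haskell
{-# LANGUAGE TypeFamilies #-}

import Data.Bits (setBit, testBit)

-- | Containers whose element type is computed from the container type
-- by the associated type function 'Elem'.
class Collection c where
  type Elem c
  empty  :: c
  insert :: Elem c -> c -> c
  toList :: c -> [Elem c]

-- | Lists can hold elements of any type.
instance Collection [a] where
  type Elem [a] = a
  empty  = []
  insert = (:)
  toList = id

-- | A set of small integers stored as a bit mask. This sketch assumes
-- all elements lie between 0 and 63.
newtype SmallSet = SmallSet Integer

instance Collection SmallSet where
  type Elem SmallSet = Int
  empty = SmallSet 0
  insert i (SmallSet bits) = SmallSet (setBit bits i)
  toList (SmallSet bits) = [ i | i <- [0 .. 63], testBit bits i ]

-- | Generic code can mention 'Elem c' without knowing the container.
fromList :: Collection c => [Elem c] -> c
fromList = foldr insert empty
```

Here toList (fromList [3, 1, 3] :: SmallSet) gives [1,3], while the same fromList at type String simply rebuilds the list of characters.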

But these new features have made the type inference engine pretty complicated, and Simon PJ, Manuel Chakravarty, Tom Schrijvers, Dimitrios Vytiniotis, and Martin Sulzmann have been busy thinking about ways to make type inference simpler and more uniform. Our ICFP’08 paper Type checking with open type functions was a first stab (which we subsequently managed to simplify quite a bit). Our new paper (to be presented at ICFP’09) Complete and decidable type inference for GADTs tackles a different part of the problem. And we are not done yet; for example, our new inference framework is designed to smoothly accommodate Dimitrios’ work on FPH: First class polymorphism for Haskell (ICFP’08).

Other developments

- Max Bolingbroke has revised and simplified his Dynamically Loaded Plugins summer of code project, and we (continue to) plan to merge it into 6.12. Part of this is already merged: a new, modular system for annotations, rather like Java or C# attributes. These attributes are persisted into interface files, and can be examined and created by plugins or by GHC API clients.

- John Dias has continued work on rewriting GHC’s backend. You can find an overview of the new architecture on the Wiki. He and Norman and Simon wrote Dataflow optimisation made simple, a paper about the dataflow optimization framework that the new back end embodies. Needless to say, the act of writing the paper has made us re-design the framework, so at the time of writing it still is not on GHC’s main compilation path. But it will be.

- Shared Libraries are inching ever closer to being completed. Duncan Coutts has taken up the reins and is pushing our shared library support towards a fully working state. This project is supported by the Industrial Haskell Group.

- Unicode text I/O support is at the testing stage, and should be merged in time for 6.12.1.

2.2 nhc98

nhc98 is a small, easy-to-install compiler for Haskell 98.

nhc98 is still very much alive and working,

although it does not see many new features these days.

We expect a new public release (1.22) soon, to coincide with

the release of ghc-6.10.x, in particular to ensure that the

included libraries are compatible across compilers.

Further reading

2.3 Helium

Helium is a compiler that supports a substantial subset of Haskell 98 (but, e.g.,

n+k patterns are missing). Type classes are restricted to a number of

built-in type classes and all instances are derived. The advantage of Helium is

that it generates novice friendly error feedback. The latest versions of the

Helium compiler are available for download from the new website located at

http://www.cs.uu.nl/wiki/Helium. This website also explains in detail

what Helium is about, what it offers, and what we plan to do in the near and far

future.

We are still working on making version 1.7 available, mainly a matter of updating

the documentation and testing the system. Internally little has changed, but

the interface to the system has been standardized, and the functionality of the

interpreters has been improved and made consistent. We have made new options

available (such as those that govern where programs are logged to). The use

of Helium from the interpreters is now governed by a configuration file, which

makes the use of Helium from the interpreters quite transparent for the programmer.

It is also possible to use different versions of Helium side by side

(motivated by the development of Neon (→5.3.3)).

A student has added parsing and static checking for type class and instance

definitions to the language, but type inference and code generation still

need to be added. The work on the documentation has progressed quite a bit,

but there has been little testing thus far, especially on a platform

such as Windows.

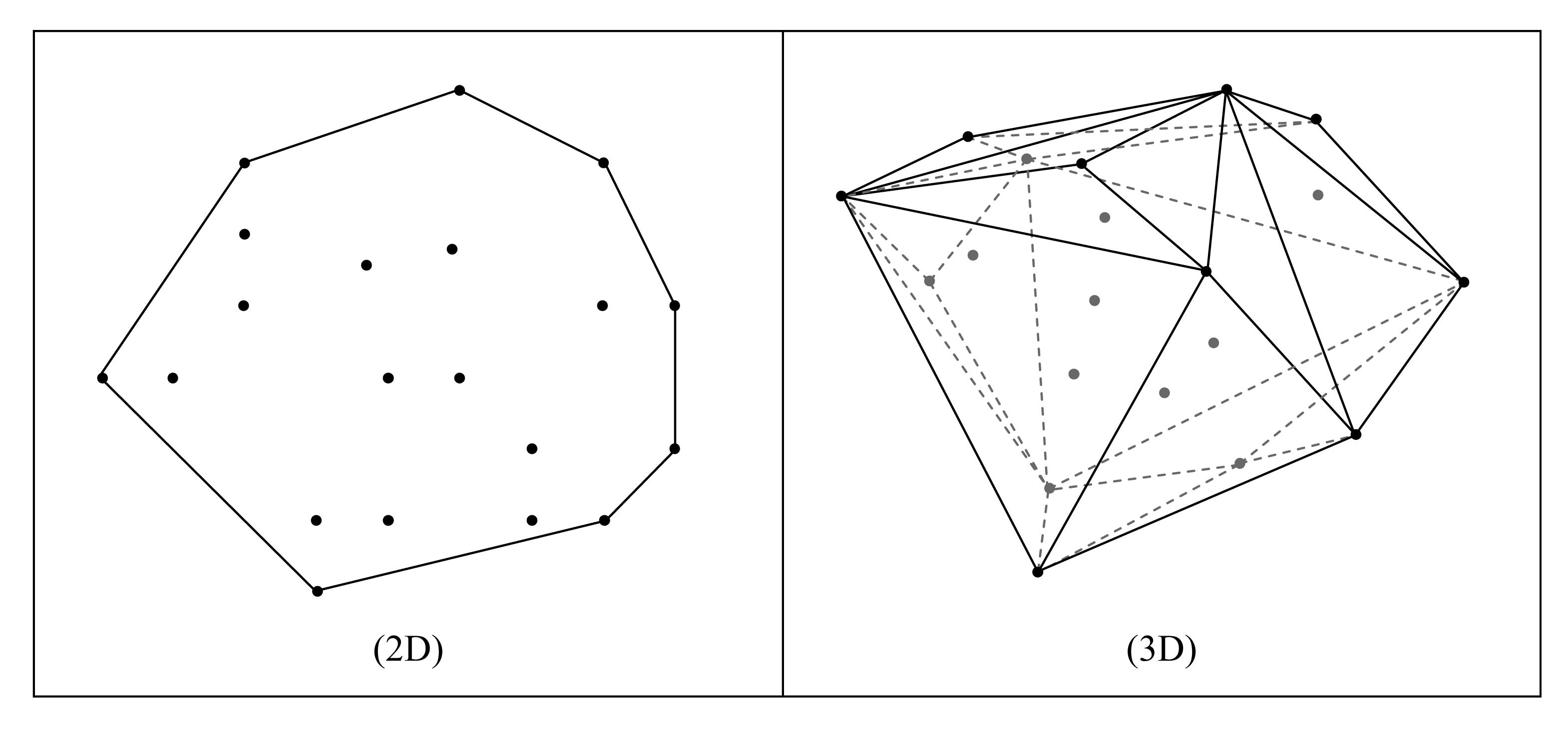

2.4 UHC, Utrecht Haskell Compiler (previously: EHC, “Essential Haskell” compiler)

What is UHC? And EHC?

UHC is the Utrecht Haskell Compiler, supporting almost all Haskell 98 features plus many

experimental extensions.

The first release of UHC was announced on April 18 at the 5th Haskell Hackathon, held in Utrecht.

EHC is the Essential Haskell Compiler project, a series of compilers of which the last is UHC, plus

an aspectwise organized infrastructure for facilitating experimentation and extension.

The end-user will probably only be aware of UHC as a Haskell compiler,

whereas compiler writers will be more

aware of the internals known as EHC.

The name EHC, however, will disappear over time; both EHC and UHC together will be branded as UHC.

UHC in its current state is still very much a work in progress.

Although we feel it is stable enough to offer the public,

much work needs to be done to make it usable for serious development work.

By design its strong point is the internal aspectwise organization which we started as EHC.

UHC also offers more advanced and experimental features like higher-ranked polymorphism, partial type signatures,

and local instances.

Under the hood

For the description of UHC an Attribute Grammar system (AG) is used as well as other

formalisms allowing compact notation like parser combinators. For the

description of type rules, and the generation of an AG implementation for

those type rules, we use the Ruler system.

For source code management we use Shuffle, which allows partitioning the system into a sequence of steps and aspects.

(Both Ruler and Shuffle are included in UHC).

The implementation of UHC also tackles other issues:

- To deal with the inherent complexity of a compiler the implementation of UHC is organized as a series of increasingly complex steps. Each step corresponds to a Haskell subset which itself is an extension of the previous step. The first step starts with the essentials, namely typed lambda calculus; the last step corresponds to UHC.

- Independent of each step the implementation is organized into a set of aspects. Currently the type system and code generation are defined as aspects, which can then be left out so the remaining part can be used as a barebones starting point.

- Each combination of step + aspects corresponds to an actual, that is, an executable compiler. Each of these compilers is a compiler in its own right.

- The description of the compiler uses code fragments which are retrieved from the source code of the compilers. In this way the description and source code are kept synchronized.

Part of the description of the series of EH compilers is available

as a PhD thesis.

What is UHC’s status, what is new?

- UHC has seen daylight as a first release. At the time of this writing the current release is 1.0.1, fixing reported installation problems.

- Previously started work is still continuing: GRIN backend, full program analysis (Jeroen Fokker), type system formalization and automatic generation from type rules (Lucilia Camarão de Figueiredo, Arie Middelkoop).

What will happen with UHC in the near future?

We plan to do the following:

- Improving installation of UHC and its use as a Haskell compiler: use of Cabal, adding missing Haskell 98 features.

- Work on adding static analyses (such as strictness analysis), to enable optimizations.

Further reading

2.5 Hugs as Yhc Core Producer

Background

Hugs is one of the oldest implementations of Haskell known, an interactive compiler

and bytecode interpreter. Yhc is a fork of nhc98 (→2.2). Yhc Core is an

intermediate form Yhc uses to represent a compiled Haskell program.

Yhc converts each Haskell module to a binary Yhc Core file. Core modules are linked together, and all redundant (unreachable) code is removed. The Linked Core is ready for further conversions by backends.

Hugs loads Haskell modules into memory and stores them in a way to some degree similar to Yhc Core.

Hugs is capable of dumping its internal storage structure in textual form (let us call it Hugs Core). The output looks similar to pretty-printed Yhc Core. This was initially intended for debugging purposes; however, several Hugs CVS (now darcs) log records related such output to some “Snowball Haskell compiler” ca. 2001.

The experiment

The goal of the experiment described here was to convert Hugs Core into Yhc Core, so Hugs might become a frontend for existing and future Yhc Core optimizers and backends. At least one benefit is clear: Hugs is well maintained to be compatible with recent versions of Haskell libraries and supports many Haskell language extensions that Yhc does not yet support.

The necessary patches were pushed to the main Hugs repository in June 2008, thanks to Ross Paterson for reviewing them. The following changes were made:

- A configuration option was added to enable the generation of Hugs Core.

- The toplevel Makefile was modified to build an additional executable, corehugs.

- Consistency of Hugs Core output in terms of naming of modules and functions was improved.

The corehugs program converts Haskell source files into Hugs Core files, one for one. All functions and data constructors are preserved in the output, whether reachable or not. Unreachable items will be removed later using Yhc Core tools.

The conversion of Hugs Core to Yhc Core is performed outside of Hugs using the hugs2yc package. The package provides a parser for the syntax of Hugs Core and an interface to the Yhc Core Linker. All Hugs Core files written by corehugs are read in and parsed, resulting in the set of Yhc Core modules in memory. The modules are linked together using the Yhc Core Linker, and all unreachable items are removed at this point. A “driver” program that uses the package may save the linked Yhc Core in a file, or pass it on to a backend.
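
The link-then-prune step can be pictured with the toy model below. It is only a sketch of the general technique of removing unreachable definitions: the Def type, the representation of a module as a list of definitions, and the link function are assumptions made for illustration and do not reflect the real Yhc Core data types or the Yhc Core Linker interface.

```haskell
-- | A toy "Core" definition: its name and the names it refers to.
data Def = Def { defName :: String, defRefs :: [String] }
  deriving (Eq, Show)

-- | A toy module is just a list of definitions.
type Module = [Def]

-- | Link modules by concatenating them, then keep only the definitions
-- reachable from the given root name.
link :: String -> [Module] -> [Def]
link root mods = [ d | d <- allDefs, defName d `elem` reachable ]
  where
    allDefs = concat mods

    -- Names reachable from the root, found by a simple worklist search.
    reachable = go [root] []

    go [] seen = seen
    go (n : todo) seen
      | n `elem` seen = go todo seen
      | otherwise     = go (refsOf n ++ todo) (n : seen)

    -- All names referred to by the definition(s) called @n@.
    refsOf n = concat [ defRefs d | d <- allDefs, defName d == n ]
```

Given one module defining main (which uses f) and unused, and another defining f (which uses g), g, and h, linking from main keeps main, f, and g and drops unused and h.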

The code of the hugs2yc package is compatible with both Hugs and GHC.

Availability

In order to use the new Hugs functionality, obtain Hugs from the “HEAD” darcs repo, see http://hackage.haskell.org/trac/hugs/wiki/GettingTheSource. However, Hugs obtained in such a way may not always compile. This Google Code project: http://code.google.com/p/corehugs/ hosts specialized snapshots of Hugs that are more likely to build on a random computer and also include additional packages necessary to work with Yhc Core.

Future plans

Further effort will be taken to standardize various aspects of Yhc Core, especially the specification of primitives, because all backends must implement them uniformly. This Google spreadsheet: http://tinyurl.com/prim-normal-set contains the proposal for a unified set of Yhc Core primitives.

Work is in progress on various backends for Yhc Core, including JavaScript, Erlang, Python, JVM, .NET, and others. This Wiki page: http://tinyurl.com/ycore-conv-infra summarizes their development status.

There were no significant changes, additions, or improvements made to the project since November 2008. After more testing and bugfixing, the Cabal package with the Hugs front-end to Yhc Core was released on Hackage. Also, the corehugs project page was updated with a new custom Hugs snapshot (as of February 16, 2009); this snapshot is recommended for experiments with Yhc Core.

Further reading

2.6 Haskell frontend for the Clean compiler

We are currently working on a frontend for the Clean compiler (→3.2.3)

that supports a subset of Haskell 98. This will allow Clean modules to import

Haskell modules, and vice versa. Furthermore, we will be able to use some of

Clean’s features in Haskell code, and vice versa. For example, we could define

a Haskell module which uses Clean’s uniqueness typing, or a Clean module which

uses Haskell’s newtypes. The possibilities are endless!

Future plans

Although a beta version of the new Clean compiler was released last year to the

institution in Nijmegen, there is still a lot of work to do before we are able

to release it to the outside world. So we cannot make any promises regarding

the release date. Just keep an eye on the Clean mailing lists for any important

announcements!

Further reading

http://wiki.clean.cs.ru.nl/Mailing_lists

2.7 SAPL, Simple Application Programming Language

SAPL is an experimental interpreter for a lazy functional intermediate language.

The language is more or less equivalent to the core language of Clean (→3.2.3).

SAPL implementations in C and Java exist. It is possible to write SAPL programs directly,

but the preferred use is to generate SAPL. We already implemented an experimental

version of the Clean compiler that generates SAPL as well. The Java version of the

SAPL interpreter can be loaded as a PlugIn in web applications. Currently we use it

to evaluate tasks from the iTask (→6.8.3) system at the client side and to handle

(mouse) events generated by a drawing canvas PlugIn.

Future plans

For the near future we have planned to make the Clean to SAPL compiler available in

the standard Clean distribution. Also some further performance improvements of SAPL

are planned.

Further reading

2.8 The Reduceron

Since the last HCAR, we have started a 15-month project to further

explore the potential of the Reduceron. I have begun implementation of

a new design, and I presented initial results in talks at HFL’09 and

at Birmingham University. Slides of both talks are available online.

Once complete, we will have a rather nippy sequential reducer, and we

plan to see if special-purpose architectural features can also aid

garbage collection and parallel reduction.

There have also been some other developments in the project. I have

made a new Lava (→6.9.2) clone supporting multi-output primitives,

RAMs, easy creation of new primitives and back-ends, behavioral

description, and sized-vectors. And Jason Reich is working on a

supercompiler for the Reduceron’s core language, exploring some of

Neil Mitchell’s ideas from Supero.

Further reading

http://www.cs.york.ac.uk/fp/reduceron/

2.9 Platforms

2.9.1 Haskell in Gentoo Linux

Gentoo Linux is working towards supporting GHC 6.10.3 and the Haskell

Platform (→5.2), and at the time of writing both are available hard masked in portage.

For previous GHC versions we have binaries available for alpha, amd64, hppa,

ia64, sparc, and x86.

Browse the packages in portage at

http://packages.gentoo.org/category/dev-haskell?full_cat.

The GHC architecture/version matrix is available at

http://packages.gentoo.org/package/dev-lang/ghc.

Please report problems in the normal Gentoo bug tracker

at bugs.gentoo.org.

There is also a Haskell overlay providing another 300 packages. Thanks to

the Haskell developers using Cabal and Hackage (→5.1), we have been

able to write a tool called “hackport” (initiated by Henning Günther) to

generate Gentoo packages that rarely need much tweaking.

The overlay is available at

http://haskell.org/haskellwiki/Gentoo. Using

Darcs (→6.1.1), it is easy to keep updated and send patches.

It is also available via the Gentoo overlay manager “layman”.

If you choose to use the overlay, then problems should be

reported on

IRC (#gentoo-haskell on freenode), where we coordinate

development, or via email <haskell at gentoo.org>.

Lately several of our developers have shifted focus, and only a few

remain. If you would like to help with the Gentoo Haskell framework,

hackport, ebuilds, or user support, please contact us on IRC or by

email as noted above.

The Fedora Haskell SIG is an effort to provide good support for Haskell in Fedora.

A new cabal2spec package now generates rpm-spec files for Fedora’s Haskell Packaging Guidelines from Cabal packages, making it easier than ever to package Haskell for Fedora. cabal2spec has been ported to Haskell by Konrad and Yaakov.

Fedora 11 will ship with ghc-6.10.2, cabal-install and an improved set of rpm macros at the end of May 2009.

For Fedora 12 we are planning to include xmonad and hopefully haskell-platform. The latest ghc.spec in development already supports building ghc-6.11 with shared libraries.

Further reading

http://fedoraproject.org/wiki/SIGs/Haskell

Through January–April this year I repaired GHC’s back end support for the SPARC architecture, and benchmarked its performance on haskell.org’s shiny new SPARC T2 server. I also spent time refactoring GHC’s native code generator to make it easier to understand and maintain, and thus less likely for pieces to suffer bit-rot in the future.

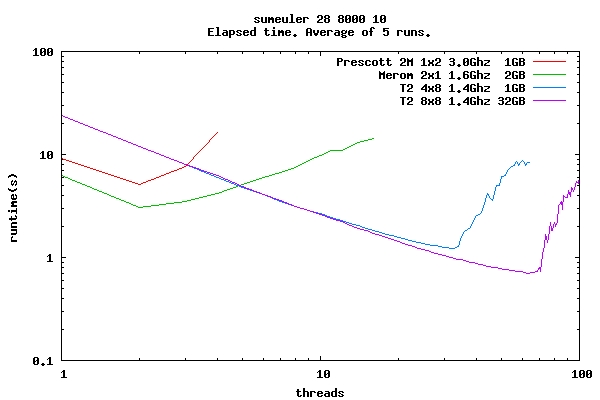

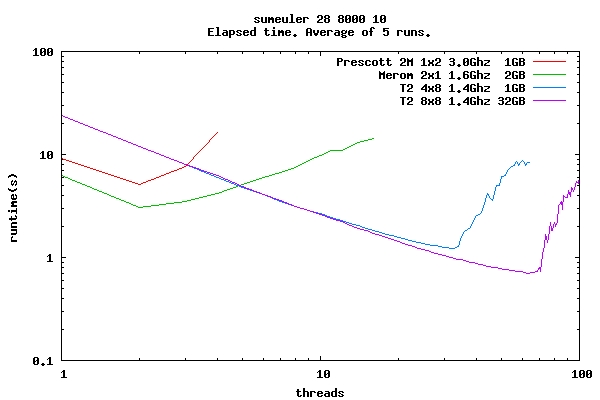

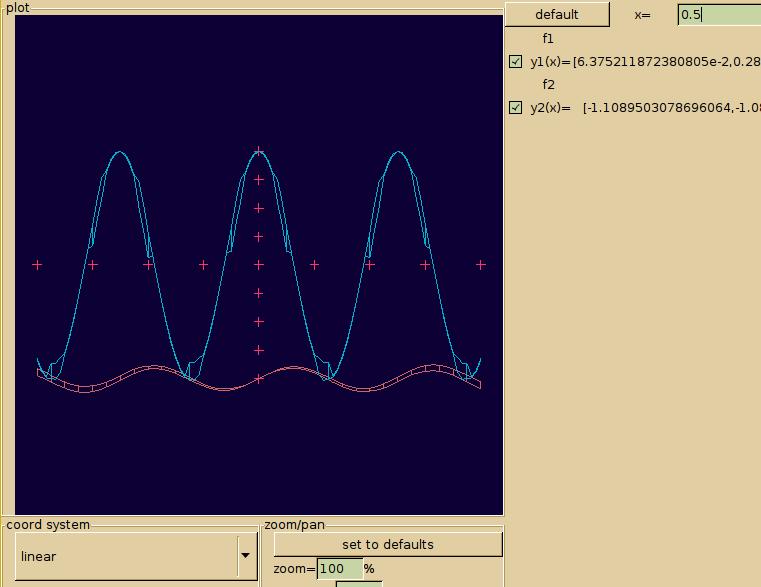

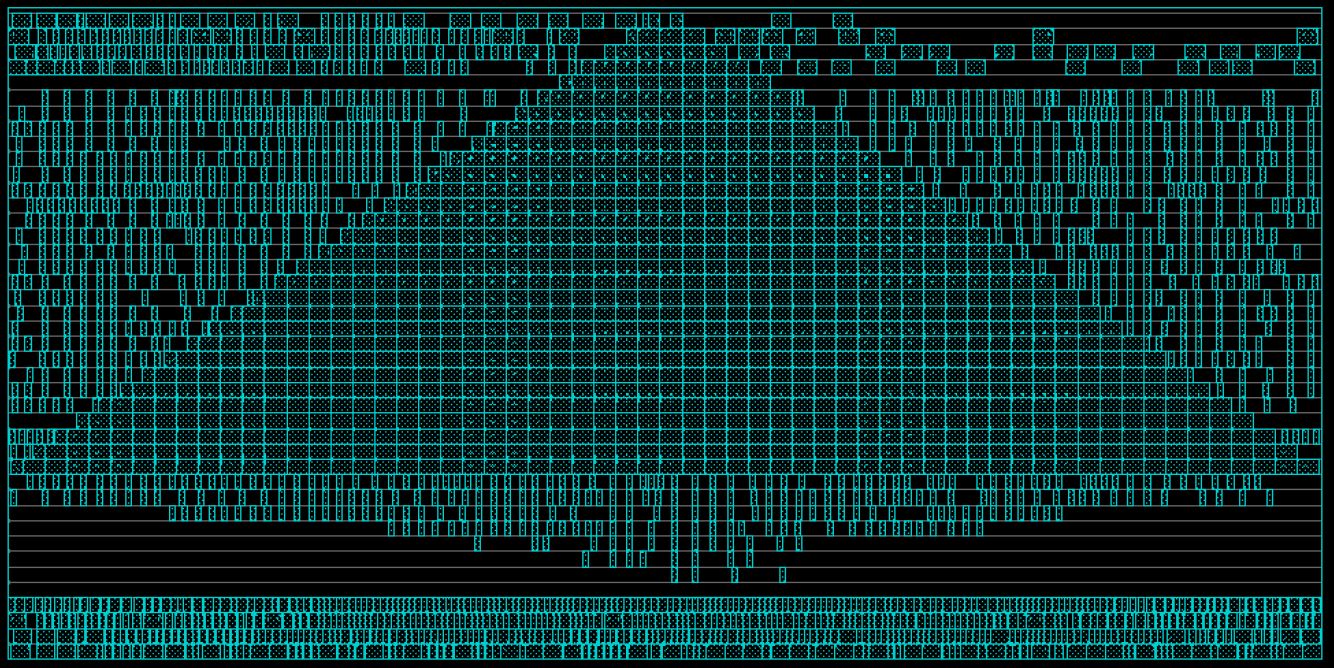

The T2 architecture is interesting to functional programmers because of its highly multi-threaded nature. The T2 has eight cores with eight hardware threads each, for a total of 64 threads per processor. When one of the threads suffers a cache miss, another can continue on with little context switching overhead. All threads on a particular core also share the same L1 cache, which supports fast thread synchronization. This is a perfect fit for parallel lazy functional programs, where memory traffic is high, but new threads are only a par away. The following graph shows the performance of the sumeuler benchmark from the nofib suite when running on the T2. Note that the performance scales almost linearly (perfectly) right up to the point where it runs out of hardware threads.
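
To make "only a par away" concrete, here is a minimal sketch of a sumeuler-style computation. It is not the nofib source: the names `totient`, `chunk`, `parSum`, and `sumEulerPar`, and the chunking scheme, are illustrative assumptions. `par` and `pseq` are taken from `GHC.Conc` in GHC's base library.

```haskell
import GHC.Conc (par, pseq)

-- Euler's totient: how many k in [1..n] are coprime to n (naive on purpose).
totient :: Int -> Int
totient n = length [k | k <- [1 .. n], gcd n k == 1]

-- Sequential reference: sum of totients over a list of inputs.
sumTotient :: [Int] -> Int
sumTotient = sum . map totient

-- Split a list into pieces of at most k elements (hypothetical helper).
chunk :: Int -> [a] -> [[a]]
chunk _ [] = []
chunk k xs = let (h, t) = splitAt (max 1 k) xs in h : chunk k t

-- Spark each partial sum with `par`, then combine the results.
parSum :: [Int] -> Int
parSum [] = 0
parSum (x : xs) = x `par` (rest `pseq` (x + rest))
  where
    rest = parSum xs

-- Sum of totients of [1..n], with one spark per chunk of k inputs.
sumEulerPar :: Int -> Int -> Int
sumEulerPar k n = parSum (map sumTotient (chunk k [1 .. n]))
```

Sparks only run in parallel when the program is built with `-threaded` and started with `+RTS -N`; otherwise the sketch computes the same result sequentially.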

The project is nearing completion, pending tying up some loose ends, but the port is fully functional and available in the current head branch. More information, including benchmarking results, is obtainable from the link below. The GHC on OpenSPARC project was generously funded by Sun Microsystems.

Further reading

http://ghcsparc.blogspot.com

3 Language

3.1 Extensions of Haskell

3.1.1 Haskell Server Pages (HSP)

Haskell Server Pages (HSP) is an extension of Haskell targeted at

writing dynamic web pages.

Key features and selling points include:

- Use literal XML syntax in your Haskell code for creating values of appropriate datatypes. (Note though that writing literal XML is quite optional, if you, like me, do not really enjoy that language.)

- Guarantees that XML output is well-formed (and an HTML output mode if that is what you need).

- A model that gives easy access to necessary environment variables.

- Simple programming model that is easy to use even for non-experienced Haskell programmers, in particular with a very simple transition from static XML pages to dynamic HSP pages.

- Easy integration with a DSL called HJScript that makes it easy to write client-side (JavaScript) scripts.

- An extension of HAppS that can serve HSP pages on the fly, making deployment of pages really simple.

HSP is continuously released onto Hackage. It consists of

a series of interdependent packages with package hsp as the main

top-level starting point, and package happs-hsp for integration with

HAppS. The best way to keep up with development is to grab the darcs

repositories, all located under

http://code.haskell.org/HSP.

Further reading

http://haskell.org/haskellwiki/HSP

3.1.2 GpH — Glasgow Parallel Haskell

Status

A complete, GHC-based implementation of the parallel Haskell extension GpH and of evaluation strategies is available. Extensions of the runtime-system and language to improve performance and support new platforms are under development.

System Evaluation and Enhancement

- Both GpH and Eden parallel Haskells are being used for parallel language research and in the SCIEnce project (see below).

- We are making comparative evaluations of a range of GpH implementations and other parallel functional languages (Eden and Feedback Directed Implicit Parallelism (FDIP)) on multicore architectures.

- We are teaching parallelism to undergraduates using GpH at Heriot-Watt and Philipps-Universität Marburg.

- We are developing a big step operational semantics for seq and using it to prove identities.

GpH Applications

As part of the SCIEnce EU FP6 I3 project (026133) (→8.6)

(April 2006 – April 2011) we use GpH and Eden as middleware to provide access to

computational grids from Computer Algebra (CA) systems, including

GAP, Maple, MuPad and KANT. We have designed, implemented and are evaluating the SymGrid-Par interface

that facilitates the orchestration of computational algebra components into

high-performance parallel applications.

In recent work we have demonstrated that SymGrid-Par is capable of exploiting

a variety of modern parallel/multicore architectures without any

change to the underlying CA components; and that SymGrid-Par is capable of orchestrating

heterogeneous computations across a high-performance computational

Grid.

Implementations

The GUM implementation of GpH is available in two main development branches.

- The focus of the development has switched to versions tracking GHC releases, currently

GHC 6.8, and the development version is available upon request to the GpH mailing list (see the

GpH web site).

- The stable branch (GUM-4.06, based on GHC-4.06) is available for

RedHat-based Linux machines.

The stable branch is available from the GHC CVS repository

via tag gum-4-06.

We are exploring new, prescient scheduling mechanisms for GpH.

Our main hardware platforms are Intel-based Beowulf clusters and multicores. Work on ports to

other architectures is also moving on (and available on request):

- A port to a Mosix cluster has been built in the

Metis project at

Brooklyn College, with a first version available on request from Murray

Gross.

Further reading

Contact

<gph at macs.hw.ac.uk>, <mgross at dorsai.org>

Eden extends Haskell with a small set of syntactic constructs for

explicit process specification and creation. While providing

enough control to implement parallel algorithms efficiently, it

frees the programmer from the tedious task of managing low-level

details by introducing automatic communication (via head-strict

lazy lists), synchronization, and process handling.

Eden’s main constructs are process abstractions and process

instantiations. The function process :: (a -> b) ->

Process a b embeds a function of type (a -> b) into a

process abstraction of type Process a b which,

when instantiated, will be executed in parallel. Process

instantiation is expressed by the predefined infix operator

( # ) :: Process a b -> a -> b. Higher-level

coordination is achieved by defining skeletons, ranging

from a simple parallel map to sophisticated replicated-worker

schemes. They have been used to parallelize a set of non-trivial

benchmark programs.
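
The two constructs can be modelled in plain Haskell to show how they fit together. The sketch below is a sequential stand-in written for this illustration, not Eden's implementation: the `Process` newtype and `parMapSketch` are assumptions, and nothing here runs in parallel. Only the types of `process` and `( # )` are taken from the text above.

```haskell
-- Sequential model of a process abstraction (hypothetical representation).
newtype Process a b = Process (a -> b)

-- Embed a function into a process abstraction.
process :: (a -> b) -> Process a b
process = Process

-- Process instantiation. In Eden this would create a remote process;
-- in this model it is ordinary function application.
(#) :: Process a b -> a -> b
Process f # x = f x

-- The simplest skeleton: one process instantiation per list element.
parMapSketch :: (a -> b) -> [a] -> [b]
parMapSketch f xs = [process f # x | x <- xs]
```

In this model `parMapSketch (* 2) [1, 2, 3]` behaves exactly like `map`; the point is only that a skeleton is an ordinary higher-order function built from `process` and `( # )`.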

Survey and standard reference

Rita Loogen, Yolanda Ortega-Mallén, and Ricardo Peña:

Parallel Functional Programming in Eden, Journal of

Functional Programming 15(3), 2005, pages 431–475.

Implementation

A major revision of the parallel Eden runtime environment for GHC

6.8.1 is available from the Marburg group on request. Support for

Glasgow parallel Haskell (→3.1.2) is currently being added to

this version of the runtime environment. It is planned for the

future to maintain a common parallel runtime environment for Eden,

GpH, and other parallel Haskells. Program executions can be

visualized using the Eden trace viewer tool EdenTV. Recent results

show that the system behaves equally well on workstation clusters

and on multi-core machines.

Recent and Forthcoming Publications

- Jost Berthold, Mischa Dieterle, Oleg Lobachev, Rita Loogen: Distributed Memory Programming on Many-Cores - A Case Study Using Eden Divide-&-Conquer Skeletons, in Workshop on Many-Cores at ARCS ’09, Delft, NL, March 2009, VDE Verlag 2009.

- Mischa Dieterle, Jost Berthold, and Rita Loogen: Implementing Parallel Google Map-Reduce in Eden, in EuroPar 2009, Delft, NL, August 2009, to appear in LNCS.

- Jost Berthold, Mischa Dieterle, Oleg Lobachev, Rita Loogen:

Parallel FFT With Eden Skeletons, in PaCT 2009, Novosibirsk,

Russia, September 2009, to appear in LNCS.

- Thomas Horstmeyer, Rita Loogen: Grace — Graph-based Communication in Eden,

in Draft Proceedings of TFP, Komárno, June 2009.

- Oleg Lobachev, Rita Loogen: Benchmarking Parallel Haskells

Using Scientific Computing Algorithms, in Draft Proceedings of TFP,

Komárno, June 2009.

- Lidia Sanchez-Gil, Mercedes Hidalgo-Herrero, Yolanda Ortega-Mallen:

An Operational Semantics for Distributed Lazy Evaluation, in Draft Proceedings of TFP,

Komárno, June 2009.

Further reading

http://www.mathematik.uni-marburg.de/~eden

XHaskell is an extension of Haskell

which combines parametric polymorphism, algebraic data types, and type

classes with XDuce style regular expression types, subtyping, and

regular expression pattern matching.

The latest version can be downloaded via

http://code.google.com/p/xhaskell/

Latest developments

We are still in the process of turning XHaskell into a library (rather than stand-alone compiler).

We expect results (a Hackage package) by August’09.

The focus of the HaskellActor project is on Erlang-style concurrency abstractions.

For details, see

http://sulzmann.blogspot.com/2008/10/actors-with-multi-headed-receive.html.

Novel features of HaskellActor include

- Multi-headed receive clauses, with support for

- guards, and

- propagation

The HaskellActor implementation (as a library extension to Haskell) is available

via

http://hackage.haskell.org/cgi-bin/hackage-scripts/package/actor.

The implementation is stable, but there is plenty of room for optimizations and extensions (e.g. regular expressions in patterns). If this sounds interesting to anybody (students!), please contact me.

Latest developments

We are currently collecting “real-world” examples where the

HaskellActor extension (multi-headed receive clauses) proves to be useful.

HaskellJoin is a (library) extension of Haskell to support join patterns. Novelties are

- guards and propagation in join patterns,

- efficient parallel execution model which exploits multiple processor cores.

Latest developments

Olivier Pernet (a student of Susan Eisenbach) is currently working on a nicer monadic interface to

the HaskellJoin library.

Further reading

3.2 Related Languages

3.2.1 Curry

Curry is a functional logic programming language with Haskell

syntax.

In addition to the standard features of functional programming like

higher-order functions and lazy evaluation, Curry supports features

known from logic programming.

This includes programming with non-determinism, free variables,

constraints, declarative concurrency, and the search for solutions.

Although Haskell and Curry share the same syntax, there is one main

difference with respect to how function declarations are

interpreted.

In Haskell the order in which different rules are given in the source

program has an effect on their meaning.

In Curry, in contrast, the rules are interpreted as equations,

and overlapping rules induce a non-deterministic choice and a

search over the resulting alternatives.

Furthermore, Curry allows functions to be called with free variables

as arguments; these variables are bound to the values

that are demanded for evaluation, which provides a form of

function inversion.
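
For readers who know Haskell but not Curry, the effect of overlapping rules and free variables can be approximated with lists of alternatives. The sketch below is a Haskell model written for this illustration, not Curry code and not how any Curry implementation works. In Curry one could write the two overlapping rules `insert x ys = x : ys` and `insert x (y:ys) = y : insert x ys`; here every rule that applies contributes its results to a list. All function names are assumptions.

```haskell
import Data.List (inits, tails)

-- Both "rules" of the non-deterministic insert contribute their results.
insertND :: a -> [a] -> [[a]]
insertND x ys = (x : ys) : case ys of
  [] -> []
  (y : rest) -> map (y :) (insertND x rest)

-- Permutations fall out of repeated non-deterministic insertion.
permND :: [a] -> [[a]]
permND [] = [[]]
permND (x : xs) = concatMap (insertND x) (permND xs)

-- "Function inversion": find e such that  xs ++ [e] == zs  for some xs.
-- A free-variable search is modelled by enumerating all splits of zs.
lastND :: [a] -> [a]
lastND zs = [e | (_, [e]) <- zip (inits zs) (tails zs)]
```

The list model fixes one enumeration order, whereas a Curry system is free to choose its own search strategy.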

There are three major implementations of Curry.

While the original implementation PAKCS (Portland Aachen Kiel Curry

System) compiles to Prolog, MCC (Münster Curry Compiler) generates

native code via a standard C compiler.

The Kiel Curry System (KiCS) compiles Curry to Haskell aiming to

provide nearly as good performance for the purely functional part as

modern compilers for Haskell do.

Of these implementations only MCC will provide type classes in the

near future.

Type classes are not part of the current definition of Curry, though

there is no conceptual conflict with the logic extensions.

Recently, new compilation schemes for translating Curry to Haskell

have been developed that promise significant speedups compared to both

the former KiCS implementation and other existing implementations of

Curry.

There have been research activities in the area of functional logic

programming languages for more than a decade.

Nevertheless, there are still a lot of interesting research topics

regarding more efficient compilation techniques and even semantic

questions in the area of language extensions like encapsulation and

function patterns.

Besides activities regarding the language itself, there is also an

active development of tools concerning Curry (e.g., the documentation

tool CurryDoc, the analysis environment CurryBrowser, the observation

debuggers COOSy and iCODE, the debugger B.I.O. (http://www-ps.informatik.uni-kiel.de/currywiki/tools/oracle_debugger), EasyCheck (→4.3.2), and CyCoTest).

Because Curry has a functional subset, these tools can canonically be

transferred to the functional world.

Further reading

3.2.2 Agda

Do you crave highly expressive types, but do not want to resort to

type-class hackery? Then Agda might provide a view of what the future

has in store for you.

Agda is a dependently typed functional programming language (developed

using Haskell). The language has inductive families, i.e. GADTs which

can be indexed by values and not just types. Other goodies

include coinductive types, parameterized modules, mixfix operators,

and an interactive Emacs interface (the type checker can assist

you in the development of your code).
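
To give Haskell readers a feel for inductive families, the sketch below shows the closest GHC approximation: a GADT indexed by a promoted natural number. This is Haskell with the `DataKinds` and `GADTs` extensions, not Agda, and it only imitates value indexing at the type level; the names `Nat`, `Vec`, `vhead`, and `toList` are chosen for this example.

```haskell
{-# LANGUAGE DataKinds #-}
{-# LANGUAGE GADTs #-}
{-# LANGUAGE KindSignatures #-}

-- Natural numbers, promoted to the type level by DataKinds.
data Nat = Z | S Nat

-- Lists whose length is tracked in the type.
data Vec (n :: Nat) a where
  Nil  :: Vec 'Z a
  Cons :: a -> Vec n a -> Vec ('S n) a

-- Total head: the type rules out the empty vector, so no Nil case is needed.
vhead :: Vec ('S n) a -> a
vhead (Cons x _) = x

-- Forget the length index.
toList :: Vec n a -> [a]
toList Nil = []
toList (Cons x xs) = x : toList xs
```

An expression such as `vhead Nil` is rejected by the type checker rather than failing at run time, which is the kind of guarantee the paragraph above refers to.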

A lot of work remains in order for Agda to become a full-fledged

programming language (good libraries, mature compilers, documentation,

etc.), but already in its current state it can provide lots of fun as

a platform for experiments in dependently typed programming.

New since last time:

- Versions 2.2.0 and 2.2.2 have been released. The previous

release was in 2007, so the new versions include lots of changes.

- Agda is now available on Hackage (cabal install

Agda-executable).

- Highlighted, hyperlinked HTML can be generated from Agda source

code using agda --html.

- The Agda Wiki is better organized, so it should be easier for a

newcomer to find relevant information.

Further reading

The Agda Wiki: http://wiki.portal.chalmers.se/agda/

3.2.3 Clean

Clean is a general purpose, state-of-the-art, pure and lazy functional

programming language designed for making real-world applications. Clean is the

only functional language in the world which offers uniqueness typing.

This type system makes it possible in a pure functional language to incorporate

destructive updates of arbitrary data structures (including arrays) and to make

direct interfaces to the outside imperative world.

Here is a short list with notable features:

- Clean is a lazy, pure, and higher-order functional programming language with

explicit graph rewriting semantics.

- Although Clean is by default a lazy language, one can smoothly turn it into

a strict language to obtain optimal time/space behavior: functions can be

defined lazy as well as (partially) strict in their arguments; any (recursive)

data structure can be defined lazy as well as (partially) strict in any of its

arguments.

- Clean is a strongly typed language based on an extension of the well-known

Milner/Hindley/Mycroft type inferencing/checking scheme including the common

higher-order types, polymorphic types, abstract types, algebraic types, type

synonyms, and existentially quantified types.

- The uniqueness type system in Clean makes it possible to develop efficient

applications. In particular, it allows a refined control over the single

threaded use of objects which can influence the time and space behavior of

programs. The uniqueness type system can be also used to incorporate destructive

updates of objects within a pure functional framework. It allows destructive

transformation of state information and enables efficient interfacing to the

non-functional world (to C but also to I/O systems like X-Windows) offering

direct access to file systems and operating systems.

- Clean supports type classes and type constructor classes to make overloaded

use of functions and operators possible.

- Clean offers records and (destructively updateable) arrays and files.

- Clean has pattern matching, guards, list comprehensions, array

comprehensions and a lay-out sensitive mode.

- Clean offers a sophisticated I/O library with which window based

interactive applications (and the handling of menus, dialogs, windows, mouse,

keyboard, timers, and events raised by sub-applications) can be specified

compactly and elegantly on a very high level of abstraction.

- There is a Clean IDE and there are many libraries available offering

additional functionality.

Future plans

Please see the entry on a Haskell frontend for the Clean

compiler (→2.6) for the future plans.

Further reading

Timber is a general programming language derived from Haskell, with the specific aim of supporting development of complex event-driven systems. It allows programs to be conveniently structured in terms of objects and reactions, and the real-time behavior of reactions can furthermore be precisely controlled via platform-independent timing constraints. This property makes Timber particularly suited to both the specification and the implementation of real-time embedded systems.

Timber shares most of Haskell’s syntax but introduces new primitive constructs for defining classes of reactive objects and their methods. These constructs live in the Cmd monad, which is a replacement of Haskell’s top-level monad offering mutable encapsulated state, implicit concurrency with automatic mutual exclusion, synchronous as well as asynchronous communication, and deadline-based scheduling. In addition, the Timber type system supports nominal subtyping between records as well as datatypes, in the style of its precursor O’Haskell.

A particularly notable difference between Haskell and Timber is that Timber uses a strict evaluation order. This choice has primarily been motivated by a desire to facilitate more predictable execution times, but it also brings Timber closer to the efficiency of traditional execution models. Still, Timber retains the purely functional characteristic of Haskell, and also supports construction of recursive structures of arbitrary type in a declarative way.

The first public release of the Timber compiler was announced in December 2008. It uses the Gnu C compiler as its back-end and targets POSIX-based operating systems. Binary installers for Linux and MacOS X can be downloaded from the Timber web site timber-lang.org.

The current source code repository (also available on-line) contains numerous bug-fixes since the release, but also initial cross-compilation support for ARM7-equipped embedded systems. A proper release of the current version is in preparation.

Apart from this release, ongoing work on Timber is concerned with extending the compiler with a supercompilation pass, adding the iPhone as a cross-compilation target, and fundamentally reworking the generation of type error messages. The latter work will be based on the principles developed in the context of the Helium compiler.

Further reading

http://timber-lang.org

3.3 Type System / Program Analysis

3.3.1 Free Theorems for Haskell

Free theorems are statements about program behavior derived from (polymorphic)

types. Their origin is the polymorphic lambda-calculus, but they have also

been applied to programs in more realistic languages like Haskell. Since there

is a semantic gap between the original calculus and modern functional

languages, the underlying theory (of relational parametricity) needs to be

refined and extended. We aim to provide such new theoretical foundations, as

well as to apply the theoretical results to practical problems.
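
A standard example may help: for any function `f :: [a] -> [a]`, the type alone yields the free theorem `map g . f = f . map g` for every `g`. The sketch below spot-checks that equation on a few inputs. It is an illustration written for this report, not output of the free-theorems library, and the helper name `commutesWithMap` is an assumption.

```haskell
{-# LANGUAGE RankNTypes #-}

-- For every f :: [a] -> [a] and every g, the free theorem states
--   map g . f == f . map g
-- This helper checks one instance of that equation on a given input.
commutesWithMap :: Eq b => (forall x. [x] -> [x]) -> (a -> b) -> [a] -> Bool
commutesWithMap f g xs = map g (f xs) == f (map g xs)

-- A few polymorphic list functions to try (arbitrary choices).
checks :: [Bool]
checks =
  [ commutesWithMap reverse (+ 1) [1, 2, 3 :: Int]
  , commutesWithMap (take 2) show [10, 20, 30 :: Int]
  , commutesWithMap (\xs -> xs ++ xs) not [True, False]
  ]
```

Testing a handful of cases proves nothing, of course; the value of a free theorem is that the equation holds for all such `f` without inspecting their definitions.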

Recent publications are “Parametricity for Haskell with Imprecise Error Semantics” (TLCA’09) and “Free Theorems Involving Type Constructor Classes” (ICFP’09).

On the practical side, we maintain a library and tools for generating free theorems from Haskell types, originally implemented by Sascha Böhme and with contributions from Joachim Breitner. Both the library and a shell-based tool are available from Hackage (as free-theorems and ftshell, respectively). There is also a web-based tool at http://linux.tcs.inf.tu-dresden.de/~voigt/ft.

General features include:

- three different language subsets to choose from

- equational as well as inequational free theorems

- relational free theorems as well as specializations down to function level

- support for algebraic data types, type synonyms and renamings, type classes

While the web-based tool is restricted to algebraic data types, type synonyms,

and type classes from Haskell standard libraries, the shell-based tool also enables the user to declare their own algebraic data types and so on, and then to derive free theorems from types

involving those. A distinctive feature of the web-based tool is to export the generated theorems in PDF format.

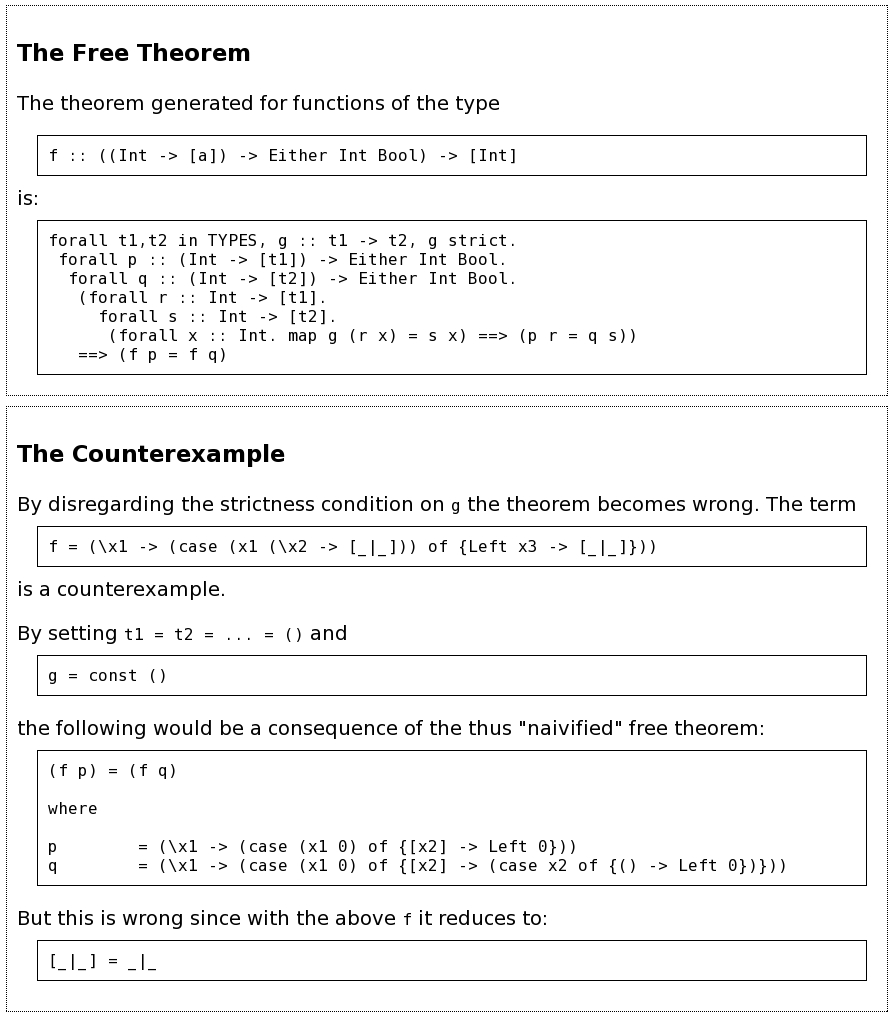

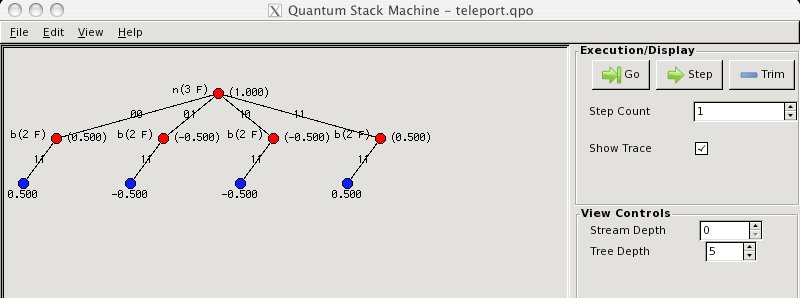

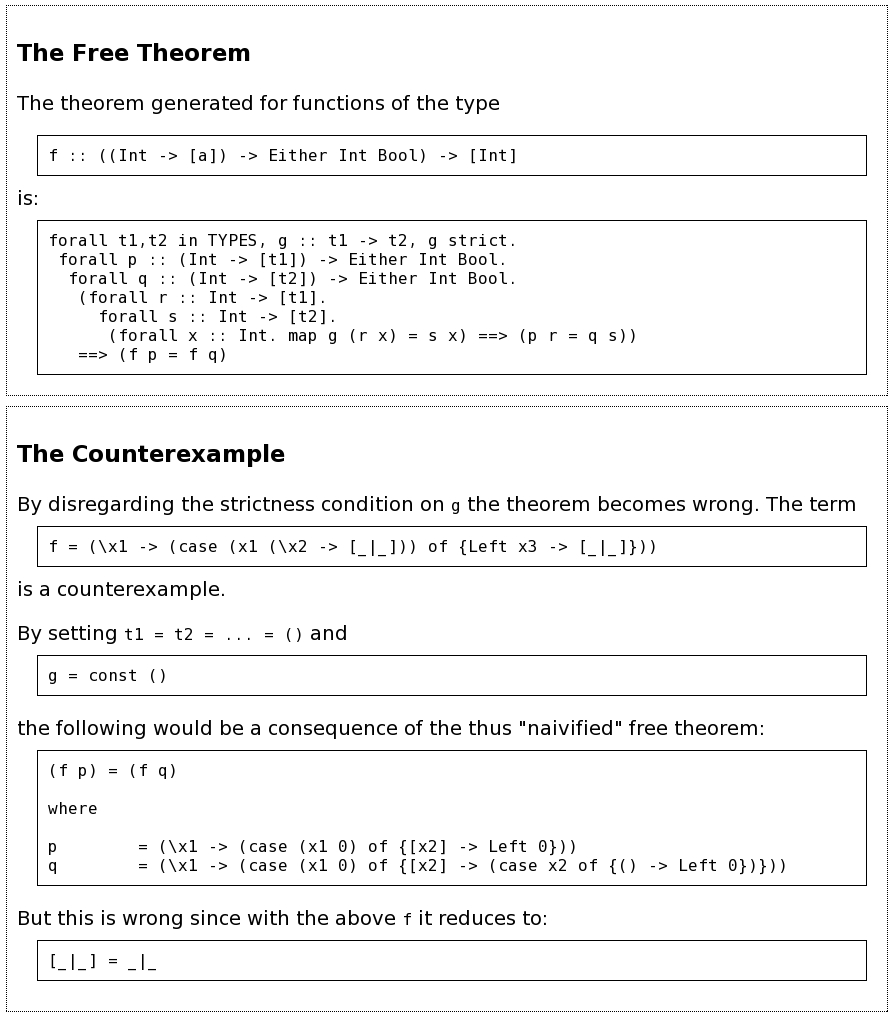

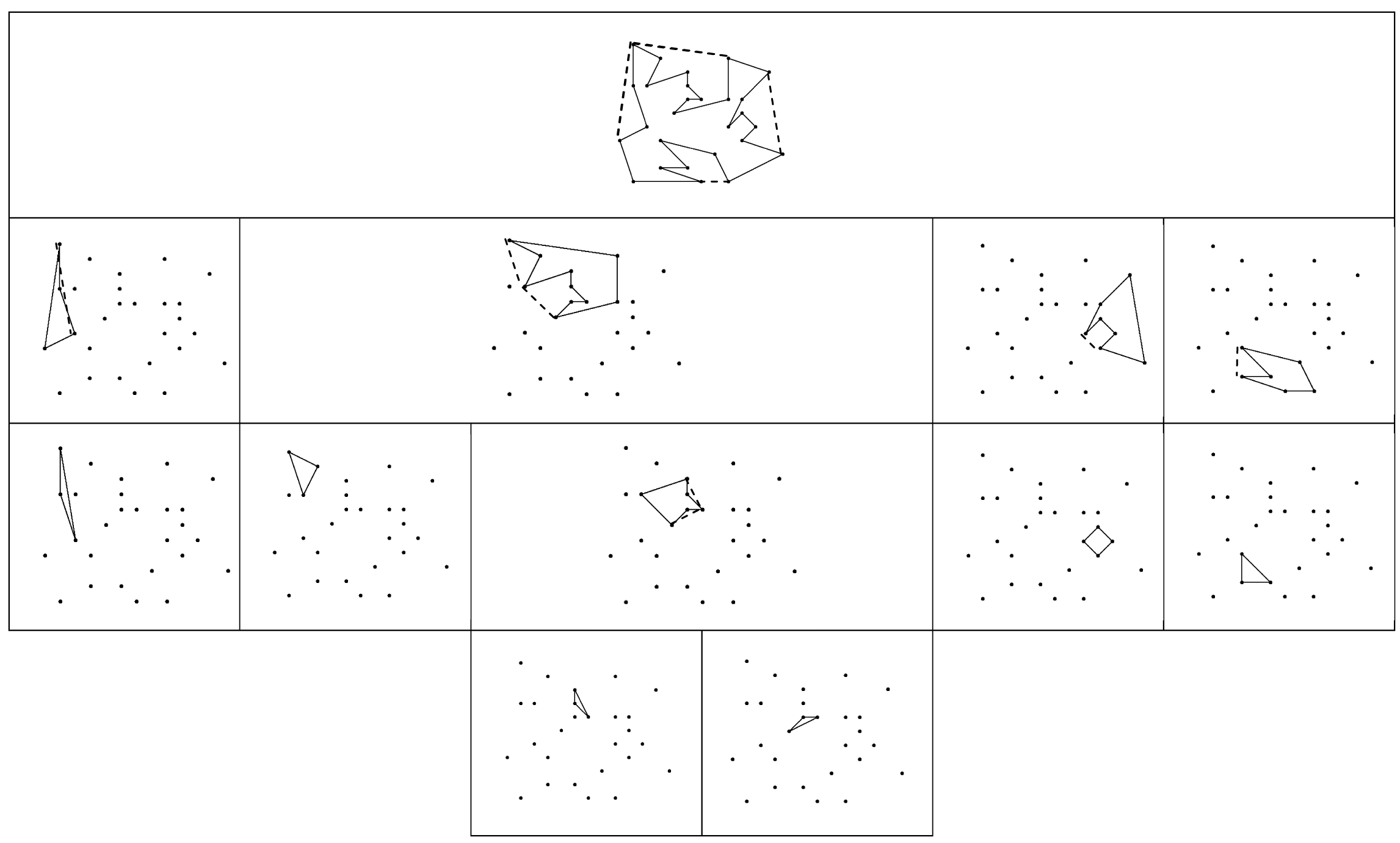

Recent work with Daniel Seidel involves automatically generating counterexamples to naive free theorems (“naive” meaning that the theorems ignore the presence of general recursion and ⊥ in Haskell; see screenshot below), and investigating how selective strictness a la seq has to be put under control to tame its impact on free theorems.

Further reading

http://wwwtcs.inf.tu-dresden.de/~voigt/project/

3.3.2 The Disciplined Disciple Compiler (DDC)

Disciple is an explicitly lazy dialect of Haskell which is being developed as part of my PhD project into program optimization in the presence of side effects. Disciple’s type system is based on Haskell 98, but extends it with region, effect and closure information. This extra information models the potential aliasing of data, interference of computations, and the data sharing properties of functions.

Effect typing is offered as a practical alternative to source level state monads, and allows the programmer to offload the task of maintaining the intended sequence of computations onto the compiler. Disciple can also directly express the “flat” T-monads discussed in Wadler’s “The marriage of effects and monads”, if one were that way inclined.

Disciple uses strict evaluation as the default, but the programmer can suspend arbitrary function applications if desired. As the type system detects when the combination of side effects and call-by-need evaluation would yield a result different from the call-by-value case, we argue that Disciple is still a purely functional language by Sabry’s definition.

Future plans

DDC is currently a research prototype, but will compile programs if you are nice to it. Work over the last six months has consisted of cleaning up the theory, finishing my thesis, and fixing bugs. Immediate plans consist of fixing more bugs, doing a point release in July, and writing a paper on it. DDC is open source, available from the URL below, and comes with some cute graphical demos.

Further reading

http://www.haskell.org/haskellwiki/DDC

4 Tools

4.1 Scanning, Parsing, Transformations

Alex is a lexical analyzer generator for Haskell, similar to the tool

lex for C. Alex takes a specification of a lexical syntax written in

terms of regular expressions, and emits code in Haskell to parse that

syntax. A lexical analyzer generator is often used in conjunction

with a parser generator, such as Happy (→4.1.2), to build a complete parser.

The latest release is version 2.3, released October 2008. Alex is in

maintenance mode; we do not anticipate any major changes in the near

future.

Changes in version 2.3 vs. 2.2:

- Works with GHC 6.10.1 and Cabal 1.6.

- Support for efficient lexing of strict bytestrings, by Don Stewart.

- The monadUserState wrapper type was added by Alain Cremieux.

Further reading

http://www.haskell.org/alex/

4.1.2 Happy

Happy is a tool for generating Haskell parser code from a BNF

specification, similar to the tool Yacc for C. Happy also includes

the ability to generate a GLR parser (arbitrary LR for ambiguous

grammars).

The latest release is 1.18.2, released 5 November 2008.

Changes in version 1.18.2 vs. 1.17:

- Macro-like parameterized rules were added by Iavor Diatchki.

- Works with GHC 6.10.1 and Cabal 1.6.

- A couple of minor bugfixes: Happy does not get confused by

Template Haskell quoted names in code, and a multi-word token type

is allowed.

Further reading

Happy’s web page is at http://www.haskell.org/happy/.

Further information on the GLR extension can be found at

http://www.dur.ac.uk/p.c.callaghan/happy-glr/.

UUAG is the Utrecht University Attribute Grammar system. It is a preprocessor for Haskell which makes it easy to write catamorphisms (that is, functions that do to any datatype what foldr does to lists). You can define tree walks using the intuitive concepts of inherited and synthesized attributes, while keeping the full expressive power of Haskell. The generated tree walks are efficient in both space and time.
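
For readers unfamiliar with the term, the sketch below writes a catamorphism by hand in plain Haskell, which is roughly the kind of code an attribute grammar system saves you from writing. It is not UUAG input or UUAG output; the `Tree` type and all function names are assumptions made for this example. A synthesized attribute corresponds to the result of the fold, and an inherited attribute can be modelled by folding to a function that takes the inherited value as an argument.

```haskell
data Tree a = Leaf | Node (Tree a) a (Tree a)

-- The catamorphism for Tree: replace each constructor by a function.
foldTree :: r -> (r -> a -> r -> r) -> Tree a -> r
foldTree leaf node = go
  where
    go Leaf = leaf
    go (Node l x r) = node (go l) x (go r)

-- Synthesized attributes: computed bottom-up.
sumTree :: Num a => Tree a -> a
sumTree = foldTree 0 (\l x r -> l + x + r)

depth :: Tree a -> Int
depth = foldTree 0 (\l _ r -> 1 + max l r)

-- Inherited attribute: the current depth d is passed down to the children.
withDepth :: Tree a -> [(a, Int)]
withDepth t = foldTree (const []) step t 0
  where
    step l x r d = l (d + 1) ++ [(x, d)] ++ r (d + 1)
```

Each additional attribute written this way needs another fold or a wider result tuple, which is why a preprocessor that combines attribute definitions into one tree walk is attractive.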

New features are support for polymorphic abstract syntax and higher-order attributes. With polymorphic abstract syntax, the type of certain terminals can be parameterized. Higher-order attributes are useful to incorporate computed values as subtrees in the AST.

The system is in use by a variety of large and small projects, such as the Haskell compiler EHC, the editor Proxima for structured documents, the Helium compiler (→2.3), the Generic Haskell compiler, and UUAG itself. The current version is 0.9.10 (April 2009), is extensively tested, and is available on Hackage.

Over the last year there have been only bugfix releases, apart from one new feature: an alternative syntax that resembles Haskell syntax more closely. It can be enabled by means of a command-line flag; the old syntax is still the default.

Further reading

4.2 Documentation

Haddock is a widely used documentation-generation tool for Haskell

library code. Haddock generates documentation by parsing the Haskell

source code directly and including documentation supplied by the

programmer in the form of specially-formatted comments in the source

code itself. Haddock has direct support in Cabal (→5.1), and is used to

generate the documentation for the hierarchical libraries that come

with GHC, Hugs, and nhc98

(http://www.haskell.org/ghc/docs/latest/html/libraries).
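
As a small illustration of the specially-formatted comments, the sketch below documents a data type and a function using the `-- |` (documentation before a declaration) and `-- ^` (documentation after an item) forms. The `Interval` type and `clamp` function are invented for this example.

```haskell
-- | A closed interval with a lower and an upper bound.
data Interval = Interval
  { lower :: Int  -- ^ smallest value in the interval
  , upper :: Int  -- ^ largest value in the interval
  }

-- | Clamp a value to the given interval.
--
-- Values below 'lower' are mapped to 'lower', values above 'upper'
-- are mapped to 'upper', and everything else is returned unchanged.
clamp
  :: Interval  -- ^ the interval to clamp to
  -> Int       -- ^ the value to clamp
  -> Int
clamp (Interval lo hi) = max lo . min hi
```

Single quotes around an identifier, as in `'lower'`, ask Haddock to hyperlink it to that identifier's documentation.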

The latest release is version 2.2.2, released August 5 2008.

Recent changes:

- Support for GHC 6.8.3

- The Hoogle backend is back, thanks to Neil Mitchell.

- Show associated types in the documentation for class declarations

- Show type family declarations

- Show type equality predicates

- Major bug fixes (#1 and #44)

- It is no longer required to specify the path to GHC’s lib dir

- Remove unnecessary parentheses in type signatures

Future plans

Currently, Haddock ignores comments on some language constructs like GADTs and

Associated Type synonyms. Of course, the plan is to support comments

for these constructs in the future.

Haddock is also slightly pickier about where comments may be placed

than the 0.x series was. We want to fix this

as well. Both of these plans require changes to the GHC parser. We

want to investigate to what degree it is possible to

decouple comment parsing from GHC and move it into Haddock, so that it is not bound to

GHC releases.

Other things we plan to add in future releases:

- Support for GHC 6.10.1

- HTML frames (a la Javadoc)

- Support for documenting re-exports from other packages

Further reading

This tool by Ralf Hinze and Andres Löh

is a preprocessor that transforms literate Haskell code

into LaTeX documents. The output is highly customizable

by means of formatting directives that are interpreted

by lhs2TeX. Other directives allow the selective inclusion

of program fragments, so that multiple versions of a program

and/or document can be produced from a common source.

The input is parsed using a liberal parser that can interpret

many languages with a Haskell-like syntax, and does not restrict

the user to Haskell 98.

The program is stable and can take on large documents.

Since the last report, version 1.14 has been released.

This version is compatible with (and requires) Cabal 1.6.

Apart from minor bugfixes, experimental support for typesetting

Agda (→3.2.2) programs has been added.

Further reading

http://www.cs.uu.nl/~andres/lhs2tex

4.3 Testing, Debugging, and Analysis

4.3.1 SmallCheck and Lazy SmallCheck

SmallCheck is a one-module lightweight testing library. It adapts

QuickCheck’s ideas of type-based generators for test data and a class

of testable properties. But instead of testing a sample of randomly

generated values, it tests properties for all the finitely many values

up to some depth, progressively increasing the depth used. Among

other advantages, existential quantification is supported, and

generators for user-defined types can follow a simple pattern and are

automatically derivable.
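
The core idea, exhaustive testing up to a depth bound, can be sketched in a few lines of plain Haskell. The code below is a hand-rolled illustration for lists of Booleans only and does not use SmallCheck's API; `listsUpTo` and `counterexample` are hypothetical names, and "depth" is taken here to mean list length.

```haskell
import Data.Maybe (listToMaybe)

-- All lists over the given elements with length at most d.
listsUpTo :: Int -> [a] -> [[a]]
listsUpTo d xs
  | d <= 0 = [[]]
  | otherwise = [] : [x : rest | x <- xs, rest <- listsUpTo (d - 1) xs]

-- Try depths 0, 1, ..., maxDepth in turn and return the first input
-- that falsifies the property, if there is one.
counterexample :: Int -> ([Bool] -> Bool) -> Maybe [Bool]
counterexample maxDepth prop =
  listToMaybe
    [ v
    | d <- [0 .. maxDepth]
    , v <- listsUpTo d [False, True]
    , not (prop v)
    ]
```

Because depths are tried in increasing order, a reported counterexample is as short as possible; for instance, the false claim that every list equals its own reverse is refuted by a two-element list.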

Lazy SmallCheck is like SmallCheck, but generates partially-defined

inputs that are progressively refined as demanded by the property

under test. The key observation is that if a property evaluates to

True or False for a partially-defined input then it would also do so

for all refinements of that input. By not generating such refinements,

Lazy SmallCheck may test the same input-space as SmallCheck using

significantly fewer tests. Lazy SmallCheck’s interface is a subset of

SmallCheck’s, often allowing the two to be used interchangeably.
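The key observation can be illustrated without the library. The sketch below uses only the Prelude; the names `ordered`, `partialInput`, and `falsifiedEarly` are hypothetical and chosen for this example.

```haskell
-- A property over lists: is the list in non-decreasing order?
ordered :: [Int] -> Bool
ordered (x : y : rest) = x <= y && ordered (y : rest)
ordered _              = True

-- A partially-defined input: only the first two elements exist.
partialInput :: [Int]
partialInput = 2 : 1 : undefined

-- Laziness decides the property without ever forcing the undefined tail,
-- so every refinement of partialInput is falsified by this single test.
falsifiedEarly :: Bool
falsifiedEarly = not (ordered partialInput)
```

Since `2 <= 1` is already `False`, the conjunction never inspects the rest of the list, and no list starting with `2 : 1` needs to be generated.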

Since the last HCAR, we have not made any significant new

developments. We are still interested in improving and harmonizing

the two libraries and welcome comments and suggestions from users.

Further reading

http://www.cs.york.ac.uk/fp/smallcheck/

EasyCheck is an automatic test tool like QuickCheck or SmallCheck (→4.3.1). It is implemented in the functional logic programming language Curry (→3.2.1). Although simple test cases can be generated from nothing but type

information in all mentioned test tools, users have the possibility to

define custom test-case generators — and make frequent use of this

possibility. Nondeterminism — the main extension of functional-logic programming

over Haskell — is an elegant concept to describe such generators. Therefore it is easier to define custom test-case generators in

EasyCheck than in other test tools. If no custom generator is provided, test cases are generated by a free

variable which non-deterministically yields all values of a type. Moreover, in EasyCheck, the enumeration strategy is independent of the

definition of test-case generators. Unlike QuickCheck’s strategy, it is complete, i.e., every specified

value is eventually enumerated if enough test cases are processed, and

no value is enumerated twice. SmallCheck also uses a complete strategy (breadth-first search) which

EasyCheck improves w.r.t. the size of the generated test data. EasyCheck is distributed with the Kiel Curry System (KiCS).

Further reading

http://www-ps.informatik.uni-kiel.de/currywiki/tools/easycheck

Checkers is a library for reusable QuickCheck properties, particularly for standard type classes (class laws and class morphisms). For instance, much of Reactive (→6.5.2) can be specified and tested using just these properties. Checkers also has extensive support for randomly generating data values.
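What a reusable class-law property looks like can be sketched as follows. This is not the checkers API: `monoidLawsHold` and the type `Minus` are hypothetical, and the sketch checks a fixed list of samples rather than randomly generated values as QuickCheck would.

```haskell
-- Hypothetical helper: check the monoid laws on the given sample values.
monoidLawsHold :: (Eq a, Monoid a) => [a] -> Bool
monoidLawsHold xs = identityLaws && associativity
  where
    identityLaws  = and [ mempty <> x == x && x <> mempty == x | x <- xs ]
    associativity = and [ (x <> y) <> z == x <> (y <> z) | x <- xs, y <- xs, z <- xs ]

-- A deliberately broken instance: subtraction is not associative
-- and 0 is not a left identity for it.
newtype Minus = Minus Int deriving (Eq, Show)

instance Semigroup Minus where
  Minus a <> Minus b = Minus (a - b)

instance Monoid Minus where
  mempty = Minus 0
```

The same property can be applied to any type with `Eq` and `Monoid` instances, which is the sense in which such properties are reusable.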

For the past few months, this work has been graciously supported by Anygma.

Further reading

http://haskell.org/haskellwiki/checkers

Gast is a fully automatic test system, written in Clean (→3.2.3). Given a logical property, stated

as a function, it is able to generate appropriate test values, to execute tests with

these values, and to evaluate the results of these tests.

In this respect Gast is similar to Haskell’s QuickCheck.

Apart from testing logical properties, Gast is able

to test state-based systems.

In such tests, an extended state machine (esm) is used instead of logical properties.

This gives Gast the possibility to test

properties in a way that is somewhat similar to model checking and

allows you to test interactive systems, such as web pages or GUI programs.

In order to validate and test the quality of the

specifying extended state machine, the esmViz tool

simulates the state machine and tests properties of this esm on the fly.

Gast is based on the generic programming techniques of Clean

which are very similar to Generic Haskell.

Gast is distributed as a library in the standard

Clean distribution. This version is somewhat older than the

version described in recent papers.

Future plans

We would like to determine the quality of the tests, for instance by measuring

test coverage.

As a next step we would like to use techniques from

model checking to direct the testing based on esms in Gast.

Further reading

4.3.5 Concurrent Haskell Debugger

Programming concurrent systems is difficult and error prone.

The Concurrent Haskell Debugger is a tool for debugging and visualizing

Concurrent Haskell and STM programs.

By simply importing CHD.Control.Concurrent instead of Control.Concurrent and

CHD.Control.Concurrent.STM instead of Control.Concurrent.STM the forked threads

and their concurrent actions are visualized by a GUI.

Furthermore, when a thread performs a concurrent action like writing an

MVar or committing a transaction, it is stopped until the user grants

permission.

This way the user is able to determine the order of execution of concurrent actions.

Apart from that, the program behaves exactly like the original program.
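As an illustration, here is a small program of the kind the debugger targets. It is written against the standard Control.Concurrent module so that it runs without the debugger installed; according to the description above, changing the import to CHD.Control.Concurrent would be the only modification needed. The function `relay` is a hypothetical example, and it is an assumption that the CHD module exports the same names used here.

```haskell
-- Standard module; the text above describes replacing it with CHD.Control.Concurrent.
import Control.Concurrent (forkIO, newEmptyMVar, putMVar, takeMVar)

-- Fork a worker thread that writes one value into an MVar,
-- then block in the calling thread until that value arrives.
relay :: Int -> IO Int
relay n = do
  box <- newEmptyMVar
  _ <- forkIO (putMVar box (n + 1))
  takeMVar box
```

Both `putMVar` and `takeMVar` are concurrent actions of the kind described above, so under the debugger each would wait for the user's permission before proceeding.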

An extension of the debugger can automatically search for deadlocks and

uncaught exceptions in the background. The user is interactively led to a program state where a deadlock or an exception was encountered. This feature requires

a simple preprocessor that comes with the package, which is available at

http://www.informatik.uni-kiel.de/~fre/chd/.

Another purpose of the preprocessor is to enrich the source code with information for highlighting the next concurrent action in a source code view.

Future plans

- provide a more powerful preprocessor that is able to process imported modules

- add new views, like a visualization as a message sequence chart

- allow concurrent actions to be undone

Further reading

Haskell Program Coverage (HPC) is a set of tools for understanding

program coverage. It consists of a source-to-source translator, an

option (-fhpc) in ghc, a stand-alone post-processor (hpc), a

post-processor for reporting coverage, and an AJAX-based interactive

coverage viewer.

Hpc has been remarkably stable over the lifetime of ghc-6.8, with only

a couple of minor bug fixes, including better support for .hsc

files. The source-to-source translator is not under active

development, and is looking for a new home. The interactive coverage

viewer, which was under active development in 2007 at Galois, has now

been resurrected at Hpc’s new home in Kansas. Thank you, Galois, for

letting this work be released. The plan is to take the interactive

viewer and merge it with GHCi’s debugging API, giving an AJAX-based

debugging tool.

Contact

<andygill at ku.edu>

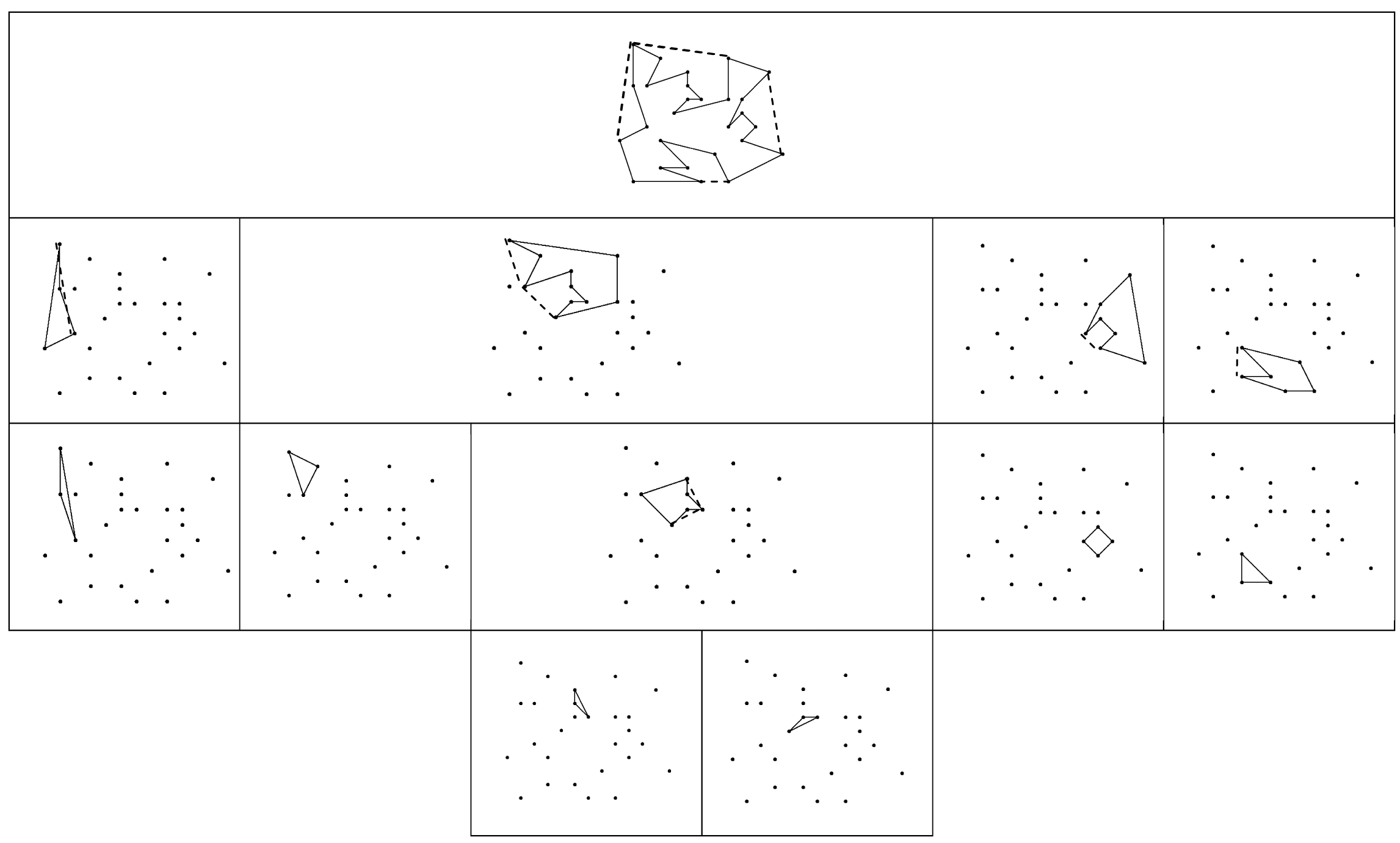

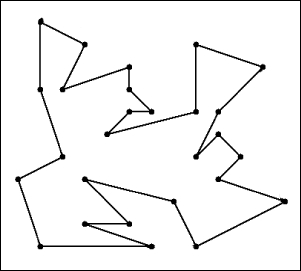

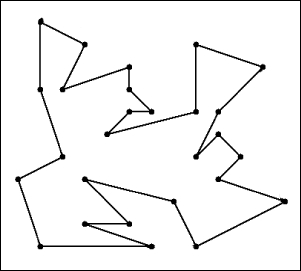

SourceGraph is a utility program aimed at helping Haskell programmers

visualize their code and perform simple graph-based analysis (representing

functions as nodes in the graphs and function calls as directed edges). It is a sample usage of the Graphalyze

library (→5.8.4), which is designed as a general-purpose

graph-theoretic analysis library. These two pieces of software

are the focus of Ivan’s mathematical honors thesis, “Graph-Theoretic Analysis

of the Relationships Within Discrete Data”.

Whilst fully usable, SourceGraph is currently limited in terms of input and

output. It takes in the Cabal file of the project, and then analyzes all .hs

and .lhs files recursively found in that directory. It utilizes Haskell-Src

with Extensions, and should thus parse all extensions (with the current

exceptions of Template Haskell, HaRP, and HSX); files requiring C Pre-Processing

are as yet unparseable, though this should be fixed in a future release.

However, all functions defined in class declarations and records are ignored

due to the difficulty of determining which actual instance is meant. The final

report is then created in HTML format in a “SourceGraph” subdirectory of the

project’s root directory. It is envisaged that

future versions will at least allow the user to choose which output

format to produce the report in, and even customize which analyses are

performed (e.g., just create the graph of the entire code-base, and not

perform any analysis).

Current analysis algorithms utilized include: alternative module

groupings, whether a module should be split up, root analysis, clique

and cycle detection as well as finding functions which can safely be

compressed down to a single function. Please note however that

SourceGraph is not a refactoring utility, and that its analyses

should be taken with a grain of salt: for example, it might recommend

that you split up a module, because there are several distinct

groupings of functions, when that module contains common utility

functions that are placed together to form a library module (e.g., the

Prelude).
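Two of the analyses named above, root analysis and cycle detection, can be sketched on a toy call graph. This is not SourceGraph's or Graphalyze's implementation: the association-list representation and the names `CallGraph`, `roots`, `reachableFrom`, `inCycle`, and `example` are assumptions made for illustration.

```haskell
import Data.Maybe (fromMaybe)

-- Functions are nodes; each entry lists the functions that a function calls.
type CallGraph = [(String, [String])]

callees :: CallGraph -> String -> [String]
callees g f = fromMaybe [] (lookup f g)

-- Root analysis: functions that no function in the graph calls.
roots :: CallGraph -> [String]
roots g = [ f | (f, _) <- g, f `notElem` concatMap snd g ]

-- All functions reachable from the start node through one or more calls.
reachableFrom :: CallGraph -> String -> [String]
reachableFrom g start = go [] (callees g start)
  where
    go seen [] = reverse seen
    go seen (f : fs)
      | f `elem` seen = go seen fs
      | otherwise     = go (f : seen) (callees g f ++ fs)

-- Cycle detection: a function lies on a cycle if it can reach itself.
inCycle :: CallGraph -> String -> Bool
inCycle g f = f `elem` reachableFrom g f

-- Hypothetical code base with mutual recursion between parse and token.
example :: CallGraph
example =
  [ ("main",   ["parse", "render"])
  , ("parse",  ["token"])
  , ("token",  ["parse"])
  , ("render", [])
  ]
```

Here `main` is the only root, and `parse` and `token` form a cycle. Whether such a cycle is a problem is a judgment the programmer still has to make.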

The output from running SourceGraph on itself can be found at

http://community.haskell.org/~ivanm/SourceGraph/SourceGraph.html.

Further reading

HLint is a tool that reads Haskell code and suggests changes to make it simpler.

For example, if you call maybe foo id, it will suggest using fromMaybe foo instead.

HLint is compatible with almost all Haskell extensions, and can be easily extended with additional hints.
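The suggestion mentioned above preserves behaviour, as the following pair of definitions shows. The names `orZeroBefore` and `orZeroAfter` are hypothetical, with 0 standing in for foo.

```haskell
import Data.Maybe (fromMaybe)

-- The form that would trigger the hint.
orZeroBefore :: Maybe Int -> Int
orZeroBefore = maybe 0 id

-- The simpler form that the hint proposes.
orZeroAfter :: Maybe Int -> Int
orZeroAfter = fromMaybe 0
```

Both functions return the wrapped value for a `Just` and fall back to 0 for `Nothing`.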

Further reading

http://community.haskell.org/~ndm/hlint/

hp2any is the codename for the 2009 Google Summer of Code

project titled “Improving space profiling experience”.

The aim of the project is to create a set of tools that make heap

profiling of Haskell programs easier. In particular, the following

components are planned:

- a library to process profiler output in an efficient way and

make it easily accessible for other tools;

- a real-time visualizer (most likely using OpenGL);

- some kind of history manager to keep track of profiling data and

make it possible to perform a comparative analysis of performance

between different versions of your program;

- a maintainable and extensible replacement for hp2ps.

Community input is greatly appreciated!

Further reading

4.4 Development

4.4.1 Hoogle — Haskell API Search

Hoogle is an online Haskell API search engine. It searches the functions in the

various libraries, both by name and by type signature. When searching by

name, the search just finds functions which contain that name as a

substring. However, when searching by types it attempts to find any

functions that might be appropriate, including argument reordering and

missing arguments. The tool is written in Haskell,

and the source code is available online. Hoogle is available as a web

interface, a command line tool, and a lambdabot plugin.
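The two search modes can be sketched on a toy index. This is not Hoogle's algorithm, which is considerably more sophisticated: the `Entry` type, the three-entry `index` with simplified signatures, and the functions `byName` and `byType` are assumptions made for illustration. The type search here only models argument reordering by comparing argument types as unordered collections.

```haskell
import Data.List (isInfixOf, sort)

-- A simplified index entry: name, argument types, and result type as strings.
data Entry = Entry
  { entryName  :: String
  , argTypes   :: [String]
  , resultType :: String
  }

-- Hypothetical index with simplified signatures (class constraints omitted).
index :: [Entry]
index =
  [ Entry "map"      ["a -> b", "[a]"]       "[b]"
  , Entry "mapMaybe" ["a -> Maybe b", "[a]"] "[b]"
  , Entry "elem"     ["a", "[a]"]            "Bool"
  ]

-- Name search: every function whose name contains the query as a substring.
byName :: String -> [String]
byName q = [ entryName e | e <- index, q `isInfixOf` entryName e ]

-- Type search that ignores the order of the arguments.
byType :: [String] -> String -> [String]
byType args res =
  [ entryName e
  | e <- index
  , sort (argTypes e) == sort args
  , resultType e == res
  ]
```

A query with the arguments swapped, such as `byType ["[a]", "a"] "Bool"`, still finds `elem`.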

Work is progressing to generate Hoogle search information for

all the libraries on Hackage.

Further reading

http://haskell.org/hoogle

4.4.2 HEAT: The Haskell Educational Advancement Tool

Heat is an interactive development environment (IDE) for learning and teaching Haskell. Heat was designed for novice students learning the functional programming language Haskell. Heat provides a small number of supporting features and is easy to use. Heat is portable, small and works on top of the Haskell interpreter Hugs.

Heat provides the following features:

- Editor for a single module with syntax-highlighting and matching brackets.

- Shows the status of compilation: non-compiled; compiled with or without error

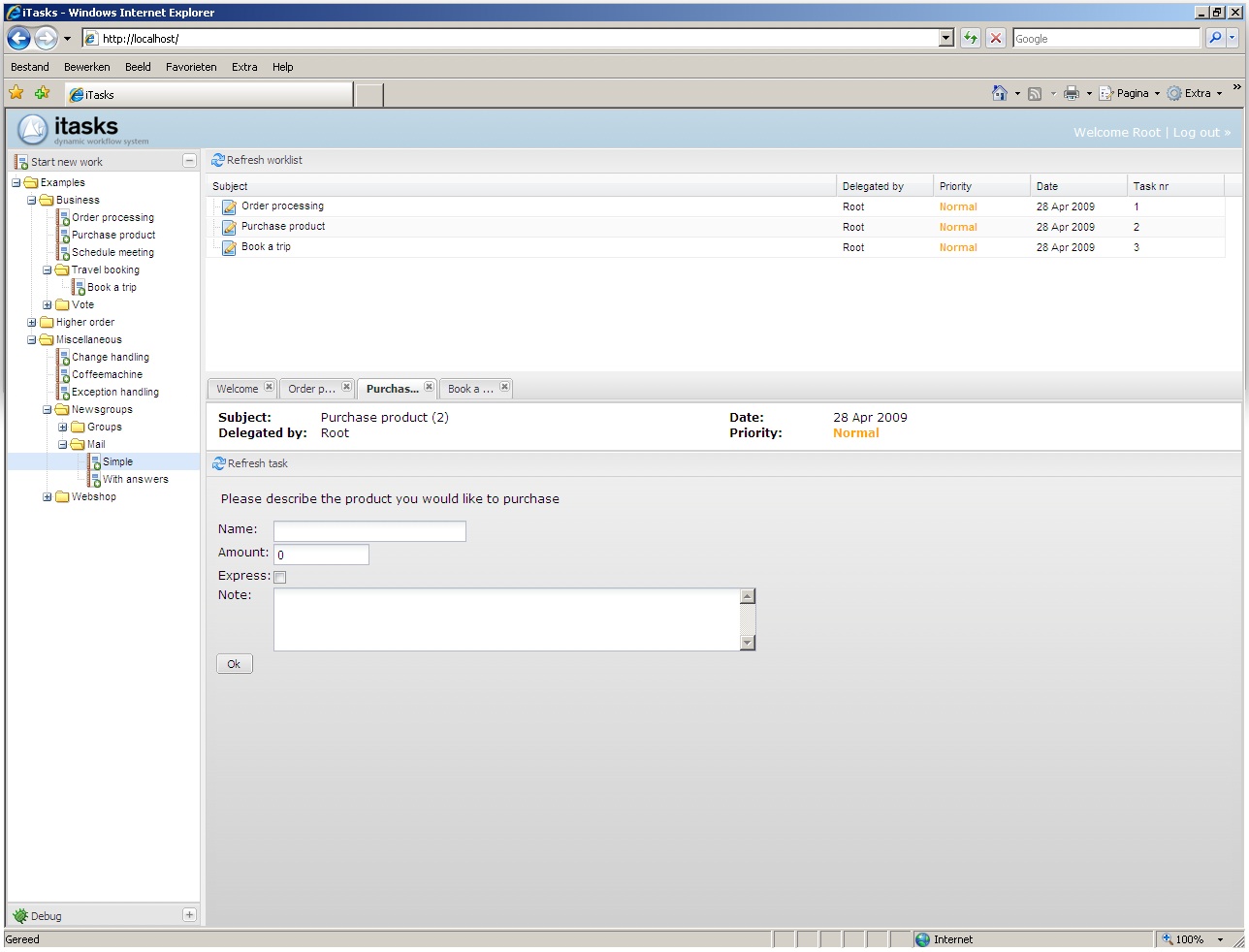

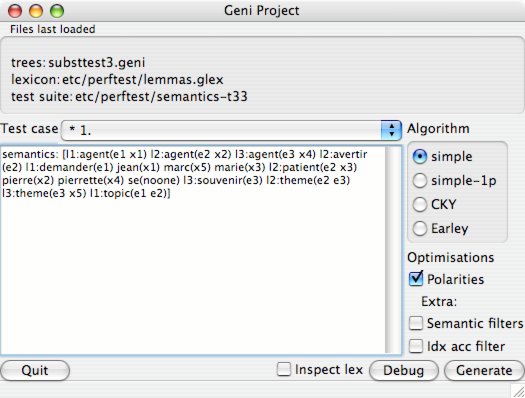

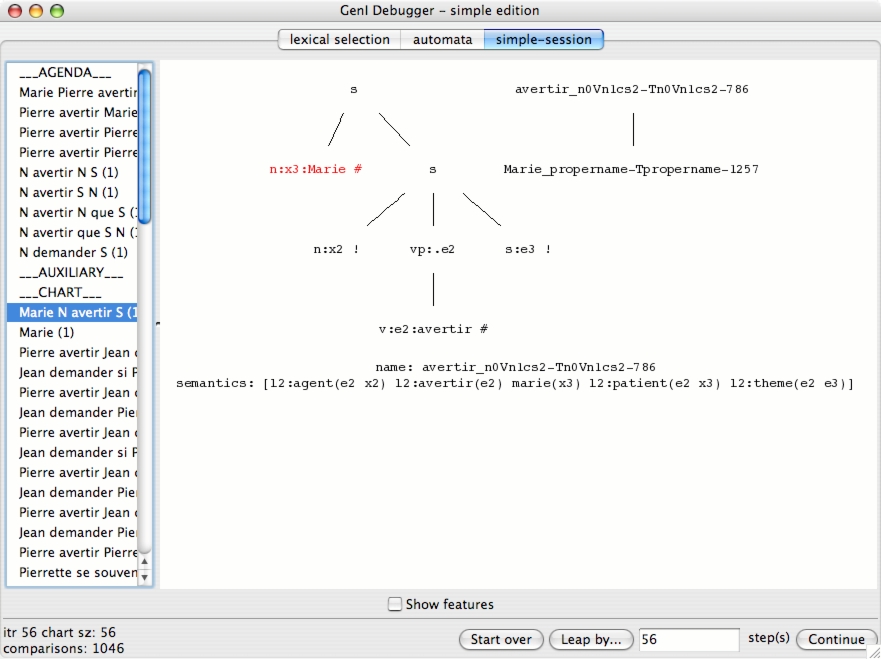

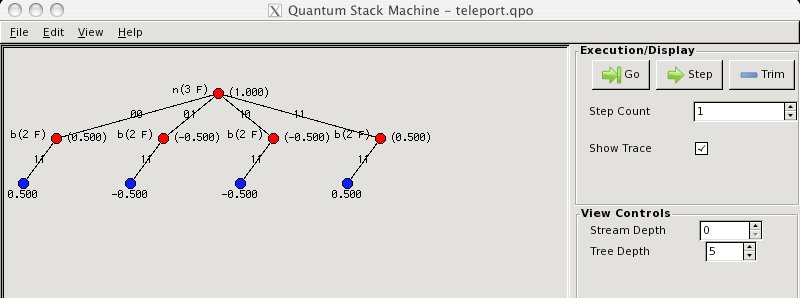

- Interpreter console that highlights the prompt and error messages.