    type family Equals (x :: *) (y :: *) :: Bool
    type instance where
      Equals x x = True
      Equals x y = False

Details can be found on the wiki page [OTF].
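For readers who want to experiment on a released compiler: GHC releases from 7.8 onwards provide this feature as closed type families, where the equations are written directly under the `type family ... where` declaration. The following is a minimal sketch in that later syntax, assuming a GHC recent enough to provide `Data.Kind`. The class `KnownBool` and the function `sameType` are hypothetical helpers, introduced here only so that the result of the type-level computation can be observed at run time.

```haskell
{-# LANGUAGE DataKinds, FlexibleContexts, KindSignatures,
             ScopedTypeVariables, TypeFamilies #-}

import Data.Kind (Type)
import Data.Proxy (Proxy (..))

-- Equations are tried top to bottom; a later equation is used only
-- once the earlier ones are known not to match.
type family Equals (x :: Type) (y :: Type) :: Bool where
  Equals x x = 'True
  Equals x y = 'False

-- Hypothetical helper class: reflects a type-level Bool to a value.
class KnownBool (b :: Bool) where
  boolVal :: Proxy b -> Bool

instance KnownBool 'True where
  boolVal _ = True

instance KnownBool 'False where
  boolVal _ = False

-- Hypothetical helper: asks the type checker whether x and y coincide.
sameType :: forall x y. KnownBool (Equals x y) => Proxy x -> Proxy y -> Bool
sameType _ _ = boolVal (Proxy :: Proxy (Equals x y))

main :: IO ()
main = do
  print (sameType (Proxy :: Proxy Int) (Proxy :: Proxy Int))
  print (sameType (Proxy :: Proxy Int) (Proxy :: Proxy Bool))
```

The first call reduces `Equals Int Int` with the first equation; the second falls through to the second equation because `Int` and `Bool` can never be equal.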

Back end and code generation:

- The new code generator.

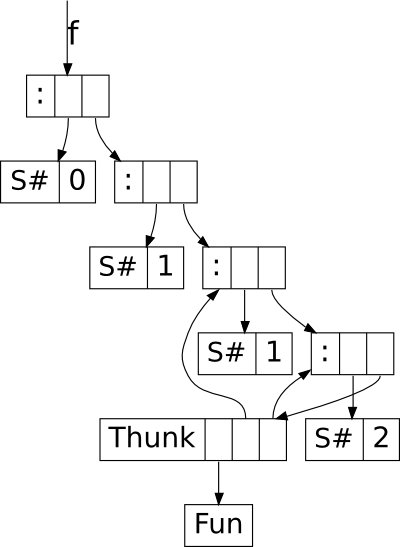

Several years after this project was started, the new code generator is finally working [CG], and is now switched on by default in master. It will be in GHC 7.8.1. From a user’s perspective there should be very little difference, though some programs will be faster.

There are three important improvements in the generated code. One is that let-no-escape functions are now compiled much more efficiently: a recursive let-no-escape now turns into a real loop in C--. The second improvement is that global registers (R1, R2, etc.) are now available for the register allocator to use within a function, provided they aren’t in use for argument passing. This means that there are more registers available for complex code sequences. The third improvement is that we have a new sinking pass that replaces the old “mini-inliner” from the native code generator, and is capable of optimisations that the old pass couldn’t do.
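To give a feel for the first improvement, here is a small source-level illustration of the kind of binding involved. It is only a sketch: whether GHC actually classifies the local function `go` as let-no-escape depends on the compiler version and optimisation settings, and the names `sumTo` and `go` are made up for this example.

```haskell
{-# LANGUAGE BangPatterns #-}

-- `go` is a local recursive function that is only ever tail-called
-- with all its arguments and is never passed around as a value.
-- Bindings of this shape are candidates for let-no-escape treatment,
-- i.e. for being compiled to a jump back to the top of a loop.
sumTo :: Int -> Int
sumTo n = go 0 1
  where
    go :: Int -> Int -> Int
    go !acc i
      | i > n     = acc
      | otherwise = go (acc + i) (i + 1)

main :: IO ()
main = print (sumTo 100)
```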

Hand-written C-- code can now be written in a higher-level style with real function calls, and most of the hand-written C-- code in the RTS has been converted into the new style. High-level C-- does not mention global registers such as R1 explicitly, nor does it manipulate the stack; all this is handled by the C-- code generator in GHC. This is more robust and simpler, and means that we no longer need a special calling-convention for primops — they now use the same calling convention as ordinary Haskell functions.

We’re interested in hearing about both performance improvements and regressions due to the new code generator.

- Support for vector (SSE/AVX) instructions.

- Support for SSE vector instructions, which permit 128-bit vectors, is now in HEAD. As part of this work, up to 6 arguments of type `Double`, `Float`, or vector can be passed in registers. Previously only 4 `Float` and 2 `Double` arguments could be passed in registers. AVX support will be added soon, pending a refactoring of the code that implements vector primops.

Data Parallel Haskell:

- Vectorisation Avoidance.

Gabriele Keller and Manuel Chakravarty have extended the DPH vectoriser with an analysis that determines when expressions cannot profitably be vectorised. Vectorisation avoidance improves compile times for DPH programs, as well as simplifying the handling of vectorised primitive operations. This work is now complete and will be in GHC 7.8.

- New Fusion Framework.

- Ben Lippmeier has been waging a protracted battle with the problem of array fusion. Absolute performance in DPH is critically dependent on a good array fusion system, but existing methods cannot properly fuse the code produced by the DPH vectoriser. An important case is when a produced array is consumed by multiple consumers. In vectorised code this is very common, but none of the “short cut” array fusion approaches can handle it (e.g. stream fusion used in `Data.Vector`, delayed array fusion in Repa, `foldr`/`build` fusion etc.). The good news is that we’ve found a solution that handles this case and others, based on Richard Waters’s series expressions, and are now working on an implementation. The new fusion system is embodied by a GHC plugin that performs a custom core-to-core transformation, and some added support to the existing Repa library. We’re pushing to get the first version working for a paper at the upcoming Haskell Symposium.
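The multiple-consumer problem can be seen in miniature with ordinary lists. The sketch below is not the DPH plugin and does not use Repa; it only contrasts a definition in which one produced sequence feeds two consumers with a hand-fused single-pass version of the kind a fusion system would ideally derive automatically. The function names are invented for the illustration.

```haskell
{-# LANGUAGE BangPatterns #-}

import Data.List (foldl')

-- One producer (`map`) feeds two consumers (`sum` and `length`), so
-- the intermediate list `ys` is built and then traversed twice.
meanTwoConsumers :: [Double] -> Double
meanTwoConsumers xs = sum ys / fromIntegral (length ys)
  where
    ys = map (* 2) xs

-- Hand-fused equivalent: the producer and both consumers run in a
-- single pass, and no intermediate list is built.
meanFused :: [Double] -> Double
meanFused xs = total / fromIntegral count
  where
    (total, count) = foldl' step (0, 0 :: Int) xs
    step (!s, !n) x = (s + 2 * x, n + 1)

main :: IO ()
main = do
  print (meanTwoConsumers [1, 2, 3, 4])
  print (meanFused [1, 2, 3, 4])
```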

A faster I/O manager:

Andreas Voellmy performed a significant reworking of the IO manager to improve multicore scaling and sequential speed. The most significant problems of the old IO manager were (1) severe contention (under some workloads) on a single `MVar` holding the table of callbacks, (2) the messaging across capabilities that invoking a callback typically requires, and (3) the OS context switch performed when polling for ready files, which causes excessive context switching. These problems contribute greatly to the response time of servers written in Haskell.

The redesigned IO manager addresses these problems in the following ways. We replace the single `MVar` for the callback table with a simple concurrent hash table, allowing for more concurrent registrations and callbacks. We use one IO manager service thread per capability, each with its own callback table and with the service thread for a given capability serving the waiting Haskell threads that were running (and will be woken up) on that capability. This further reduces contention on callback tables, ensures that notifying a thread is typically done without cross-capability messaging, and allows the work of polling and notifying threads to be parallelized across cores. To reduce context switching, we modify the service loops to first poll without waiting, which can be done without releasing the HEC (Haskell Execution Context), a step that would typically incur an OS context switch.

The new IO manager also takes advantage of the edge-triggered and one-shot modes of epoll on Linux to achieve further performance improvements on Linux.
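The idea of one callback table per capability can be pictured with the following toy model. It is a sketch only, not the code in GHC’s IO manager: the types `Fd`, `Callback` and `Tables` and the functions `newTables`, `register` and `fire` are hypothetical, an `IntMap` in an `IORef` stands in for the concurrent hash table, and `fire` removes the callback it runs, loosely mirroring one-shot registration. It assumes the `containers` package that ships with GHC.

```haskell
import Control.Concurrent (getNumCapabilities, myThreadId, threadCapability)
import Control.Monad (replicateM)
import Data.IORef (IORef, atomicModifyIORef', newIORef)
import qualified Data.IntMap.Strict as IM

-- Hypothetical stand-ins for the real IO manager types.
type Fd = Int
type Callback = IO ()

-- One independent table per capability, so registrations made on
-- different capabilities never contend on the same reference.
newtype Tables = Tables [IORef (IM.IntMap Callback)]

newTables :: Int -> IO Tables
newTables n = Tables <$> replicateM n (newIORef IM.empty)

shardFor :: Tables -> Int -> IORef (IM.IntMap Callback)
shardFor (Tables ts) cap = ts !! (cap `mod` length ts)

-- Register a callback for a descriptor in the table of capability `cap`.
register :: Tables -> Int -> Fd -> Callback -> IO ()
register t cap fd cb =
  atomicModifyIORef' (shardFor t cap) (\m -> (IM.insert fd cb m, ()))

-- Remove and run the callback for a descriptor, if there is one.
-- Returns whether a callback was run.
fire :: Tables -> Int -> Fd -> IO Bool
fire t cap fd = do
  mcb <- atomicModifyIORef' (shardFor t cap)
           (\m -> (IM.delete fd m, IM.lookup fd m))
  case mcb of
    Nothing -> pure False
    Just cb -> cb >> pure True

main :: IO ()
main = do
  n <- getNumCapabilities
  tables <- newTables n
  (cap, _) <- threadCapability =<< myThreadId
  register tables cap 7 (putStrLn "fd 7 is ready")
  first <- fire tables cap 7
  second <- fire tables cap 7
  print (first, second)
```

Because each thread registers in the table of the capability it runs on, the common case touches only local state, which is the property the redesign relies on to avoid cross-capability messaging.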

These changes result in substantial performance improvements in some applications. In particular, we implemented a minimal web server and found that performance with the new “parallel” IO manager improved by a factor of 19 versus the old IO manager: with the old IO manager, our server achieved a peak performance of roughly 45,000 HTTP requests per second using 8 cores (performance degraded after 8 cores), while the same server using the parallel IO manager serves 860,000 requests per second using 18 cores [PIO]. We have measured similar improvements in the response time of servers written in Haskell.

Kazu Yamamoto contributed greatly to the project by implementing the redesign for BSD-based systems using kqueue and by improving the code in order to bring it up to GHC’s standards. In addition, Bryan O’Sullivan and Johan Tibell provided critical guidance and reviews.

Dynamic linking:

Ian Lynagh has changed GHCi to use dynamic libraries rather than static libraries. This means that we are now able to use the system linker to load packages, rather than having to implement our own linker. From the user’s point of view, that means that a number of long-standing bugs in GHCi will be fixed, and it also reduces the amount of work needed to get a fully functional GHC port to a new platform. Currently, on Windows GHCi still uses static libraries, but we hope to have dynamic libraries working on Windows too by the time we release.

Cross compilation:

Three connected projects concerned cross-compilation:

- Registerised ARM support, added using David Terei’s LLVM compiler back end, with Stephen Blackheath doing an initial ARMv5 version and LLVM patch, and Karel Gardas working on floating point support, ARMv7 compatibility and LLVM headaches. Ben Gamari did work on the runtime linker for ARM.

- General cross-compiling, with much work by Stephen Blackheath and Gabor Greif (though many others have worked on this as well).

- A cross-compiler for Apple iOS.

- iOS-specific parts [IOS] were mostly done by Stephen Blackheath, with Luke Iannini working on the Cabal patch, testing and supporting infrastructure, with assistance and testing by Mietek Bak and Jonathan Fischoff, and with thanks to many others for testing. The iOS cross compiler was started back in 2009 by Stephen Blackheath with funding from Ryan Trinkle of iPwn Studios.

Links:

- [TYH], http://www.haskell.org/haskellwiki/GHC/TypeHoles

- [OL], http://hackage.haskell.org/trac/ghc/wiki/OverloadedLists

- [KD], http://hackage.haskell.org/trac/ghc/wiki/GhcKinds/KindsWithoutData

- [OTF], Overlapping type family instances, http://hackage.haskell.org/trac/ghc/wiki/NewAxioms

- [CG], The new codegen is nearly ready to go live, http://hackage.haskell.org/trac/ghc/blog/newcg-update

- [PIO], The results are amazing, https://twitter.com/bos31337/status/284701554458640384

- [IOS], Building for Apple iOS targets, http://hackage.haskell.org/trac/ghc/wiki/Building/CrossCompiling/iOS

3.3 UHC, Utrecht Haskell Compiler

| Report by: | Atze Dijkstra |

| Participants: | many others |

| Status: | active development |

What is new? UHC is the Utrecht Haskell Compiler, supporting almost all Haskell98 features and most of Haskell2010, plus experimental extensions. The current focus is on the Javascript backend.

What do we currently do and/or has recently been completed? As part of the UHC project, the following (student) projects and other activities are underway (in arbitrary order):

- (completed) Jurrien Stutterheim and others: building web applications with the Javascript backend. See the UHC Javascript backend URL below for more information.

- (ongoing) Jeroen Bransen (PhD): “Incremental Global Analysis”.

- (ongoing) Jan Rochel (PhD): “Realising Optimal Sharing”, based on work by Vincent van Oostrum and Clemens Grabmayer.

- (ongoing) Atze Dijkstra: overall architecture, type system, bytecode interpreter + java + javascript backend, garbage collector.

Background. UHC is actually a series of compilers, of which the last is UHC, plus infrastructure for facilitating experimentation and extension. The distinguishing features for dealing with the complexity of the compiler and for experimentation are (1) its stepwise organisation as a series of increasingly complex standalone compilers, and the use of DSLs and tools for its (2) aspectwise organisation (called Shuffle) and (3) tree-oriented programming (Attribute Grammars, by way of the Utrecht University Attribute Grammar (UUAG) system (→5.3.3)).

Further reading

- UHC Homepage: http://www.cs.uu.nl/wiki/UHC/WebHome

- UHC Github repository: https://github.com/UU-ComputerScience/uhc

- UHC Javascript backend: http://uu-computerscience.github.com/uhc-js/

- Attribute grammar system: http://www.cs.uu.nl/wiki/HUT/AttributeGrammarSystem

3.4 Specific Platforms

The FreeBSD Haskell Team is a small group of contributors who maintain Haskell software on all actively supported versions of FreeBSD. The primarily supported implementation is the Glasgow Haskell Compiler together with Haskell Cabal, although one may also find Hugs and NHC98 in the ports tree. FreeBSD is a Tier-1 platform for GHC (on both i386 and amd64) starting from GHC 6.12.1, hence one can always download vanilla binary distributions for each recent release.

We have a developer repository for Haskell ports that features around 460 ports of many popular Cabal packages. The updates committed to this repository are integrated into the official ports tree on a regular basis. However, the FreeBSD Ports Collection already includes much popular and important Haskell software: GHC 7.4.2, Haskell Platform 2012.4.0.0, Gtk2Hs, wxHaskell, XMonad, Pandoc, Gitit, Yesod, Happstack, Snap, Agda, git-annex, and so on – all of them will be available as part of the upcoming FreeBSD 8.4-RELEASE.

Note that Haskell ports are now built with dynamic linking on by default, and the GHC port uses the latest available version of GCC and binutils from the Ports Collection, as GCC in base is obsolete and will soon be replaced with Clang. We have just started the preparations for Haskell Platform 2013.2.0.0, which will bring GHC 7.6.3 to the Ports Collection soon. We also learned that our Haskell ports have been successfully imported to DPorts, an effort to use FreeBSD ports on DragonFly. In addition to this, some support for LLVM-based code generation was added in our development repository.

If you find yourself interested in helping us or simply want to use the latest versions of Haskell programs on FreeBSD, check out our page at the FreeBSD wiki (see below) where you can find all important pointers and information required for use, contact, or contribution.

Further reading

The Debian Haskell Group aims to provide an optimal Haskell experience to users of the Debian GNU/Linux distribution and derived distributions such as Ubuntu. We try to follow the Haskell Platform versions for the core package and package a wide range of other useful libraries and programs. At the time of writing, we maintain 628 source packages.

A system of virtual package names and dependencies, based on the ABI hashes, guarantees that a system upgrade will leave all installed libraries usable. Most libraries are also optionally available with profiling enabled and the documentation packages register with the system-wide index.

The recently released stable Debian release (“wheezy”) provides the Haskell Platform 2012.3.0.0 and GHC 7.4.1. We are already working on new features for the next release. Full support for running Hoogle to search all installed Haskell documentation is in the making, and Debian “experimental” already ships GHC 7.6.3 and up-to-date versions of the libraries.

Debian users benefit from the Haskell ecosystem on 13 architecture/kernel combinations, including the non-Linux ports kFreeBSD and Hurd.

Further reading

Gentoo Linux currently officially supports GHC 7.4.1, GHC 7.0.4 and GHC 6.12.3 on x86, amd64, sparc, alpha, ppc, ppc64 and some arm platforms.

The full list of packages available through the official repository can be viewed at http://packages.gentoo.org/category/dev-haskell?full_cat.

The GHC architecture/version matrix is available at http://packages.gentoo.org/package/dev-lang/ghc.

Please report problems in the normal Gentoo bug tracker at http://bugs.gentoo.org.

There is also an overlay which contains almost 800 extra unofficial and testing packages. Thanks to the Haskell developers using Cabal and Hackage (→6.3.1), we have been able to write a tool called “hackport” (initiated by Henning Günther) to generate Gentoo packages with minimal user intervention. Notable packages in the overlay include the latest version of the Haskell Platform (→3.1), the latest 7.4.1 release of GHC, and popular Haskell packages such as pandoc, gitit, yesod (→5.2.6) and others.

As usual, the GHC 7.4 branch required some packages to be patched. Over a six-month period we have accumulated about 150 patches waiting for upstream inclusion.

Over time, more and more people are getting involved in the gentoo-haskell project, which reflects positively on the health of the Haskell ecosystem.

More information about the Gentoo Haskell Overlay can be found at http://haskell.org/haskellwiki/Gentoo. It is available via the Gentoo overlay manager “layman”. If you choose to use the overlay, then any problems should be reported on IRC (#gentoo-haskell on freenode), where we coordinate development, or via email <haskell at gentoo.org> (as we have more people with the ability to fix the overlay packages that are contactable in the IRC channel than via the bug tracker).

As always we are more than happy for (and in fact encourage) Gentoo users to get involved and help us maintain our tools and packages, even if it is as simple as reporting packages that do not always work or need updating: with such a wide range of GHC and package versions to co-ordinate, it is hard to keep up! Please contact us on IRC or email if you are interested!

For concrete tasks see our perpetual TODO list: https://github.com/gentoo-haskell/gentoo-haskell/blob/master/projects/doc/TODO.rst

3.4.4 Fedora Haskell SIG

| Report by: | Jens Petersen |

| Participants: | Lakshmi Narasimhan, Shakthi Kannan, Michel Salim, Ben Boeckel, and others |

| Status: | ongoing |

The Fedora Haskell SIG works on providing good Haskell support in the Fedora Project Linux distribution.

Fedora 18 will ship in December with ghc-7.4.1 and haskell-platform-2012.2.0.0, and version updates also to many other packages. New packages added since the release of Fedora 17 include cabal-rpm, happstack-server, hledger, and a bunch of libraries. Cabal-rpm has been revamped to replace the previously used cabal2spec packaging shell-script.

At the time of writing there are now 205 Haskell source packages in Fedora. The Fedora package version numbers listed on the Hackage website refer to the latest branched version of Fedora (currently 18).

Fedora 19 work is starting now with ghc-7.4.2, haskell-platform-2012.4 and plans finally to package up Yesod.

If you want to help with package reviews and Fedora Haskell packaging, please join us on Freenode IRC (#fedora-haskell) and on our low-traffic mailing list, or follow @fedorahaskell.

Further reading

- Homepage: http://fedoraproject.org/wiki/SIGs/Haskell

- Mailing-list: https://admin.fedoraproject.org/mailman/listinfo/haskell

- Package list: https://admin.fedoraproject.org/pkgdb/users/packages/haskell-sig

- Package changes: http://git.fedorahosted.org/cgit/haskell-sig.git/tree/packages/diffs/f17-f18.compare

4 Related Languages and Language Design

4.1 Agda

| Report by: | Nils Anders Danielsson |

| Participants: | Ulf Norell, Andreas Abel, and many others |

| Status: | actively developed |

Agda is a dependently typed functional programming language (developed using Haskell). A central feature of Agda is inductive families, i.e. GADTs which can be indexed by values and not just types. The language also supports coinductive types, parameterized modules, and mixfix operators, and comes with an interactive interface—the type checker can assist you in the development of your code.
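For Haskell programmers, the closest familiar approximation of an inductive family is a GADT indexed by a promoted data type. The sketch below shows length-indexed vectors in that style; the names `Nat`, `Vec`, `vhead` and `toList` are chosen for the illustration. Note the difference from Agda: here the index `n` is a type-level copy of `Nat` produced by the `DataKinds` extension, whereas in Agda the index would be an ordinary value.

```haskell
{-# LANGUAGE DataKinds, GADTs, KindSignatures #-}

import Data.Kind (Type)

-- Natural numbers, used (via promotion) as the index of Vec.
data Nat = Z | S Nat

-- A vector whose type records its length.
data Vec (n :: Nat) (a :: Type) where
  VNil  :: Vec 'Z a
  VCons :: a -> Vec n a -> Vec ('S n) a

-- Only non-empty vectors are accepted, so no case for VNil is needed.
vhead :: Vec ('S n) a -> a
vhead (VCons x _) = x

toList :: Vec n a -> [a]
toList VNil         = []
toList (VCons x xs) = x : toList xs

main :: IO ()
main = do
  let v = VCons 'a' (VCons 'b' VNil)
  print (vhead v)
  print (toList v)
```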

A lot of work remains in order for Agda to become a full-fledged programming language (good libraries, mature compilers, documentation, etc.), but already in its current state it can provide lots of fun as a platform for experiments in dependently typed programming.

In November Agda 2.3.2 was released, with the following changes (among others):

- Pattern synonyms (Stevan Andjelkovic and Adam Gundry).

- Modifications to the constraint solver (Andreas Abel).

- A LaTeX backend, with the aim to support both precise, Agda-style highlighting, and lhs2TeX-style alignment of code (Stevan Andjelkovic).

- The Emacs mode no longer depends on GHCi and haskell-mode (Peter Divianszky).

- The Emacs mode is more interactive: type-checking no longer blocks Emacs, and there is an option to highlight the expression that is currently being type-checked (Guilhem Moulin and Nils Anders Danielsson).

Further reading

The Agda Wiki: http://wiki.portal.chalmers.se/agda/

Disciple Core is an explicitly typed language based on System-F2, intended as an intermediate representation for a compiler. In addition to the polymorphism of System-F2 it supports region, effect and closure typing. Evaluation order is left-to-right call-by-value by default, but explicit lazy evaluation is also supported. The language includes a capability system to track whether objects are mutable or constant, and to ensure that computations that perform visible side effects are not suspended with lazy evaluation.

The Disciplined Disciple Compiler (DDC) is being rewritten to use the redesigned Disciple Core language. This new DDC is at a stage where it will parse and type-check core programs, and compile first-order functions over lists to executables via C or LLVM backends. There is also an interpreter that supports the full language.

What is new?

- Over the last month we’ve been working on a new core language fragment, Disciple Core Flow, to support work on array fusion for Data Parallel Haskell (DPH). We’re writing a GHC plugin that translates GHC core programs to Disciple Core Flow, performs array fusion, and translates back. We’re using Disciple Core Flow instead of GHC Core directly because it has a simple (and working) external core format, which we use to test the fusion transform.

Further reading

4.4 SugarHaskell

| Report by: | Sebastian Erdweg |

| Participants: | Tillmann Rendel, Felix Rieger, Klaus Ostermann |

| Status: | active |

SugarHaskell is a generic extension of Haskell that enables programmers to define and use flexible syntactic extensions of Haskell. SugarHaskell extensions are organized as regular libraries, which define an extended syntax and a transformation of the extended syntax into Haskell’s base syntax (or an extension thereof). To activate an extension, a SugarHaskell programmer simply imports the library that defines the extension; the extension is active in the remainder of the current file. Our Haskell Symposium paper [4] contains numerous examples, including arrow notation and, as illustrated in the following, idiom brackets:

    import Control.Applicative
    import Control.Applicative.IdiomBrackets

    instance Traversable Tree where
      traverse f Leaf = (| Leaf |)
      traverse f (Node l x r) =
        (| Node (traverse f l) (f x) (traverse f r) |)

The library `Control.Applicative.IdiomBrackets` provides a syntactic extension for programming with applicatives, using idiom brackets `(| ... |)`. Uses of idiom brackets are desugared in-place to produce plain Haskell code. Generally, the usage of syntactic extensions in a program is transparent to its clients.
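As a rough picture of what such a desugaring produces, the sketch below writes the same instance in plain Haskell, using the usual reading of idiom brackets in which `(| f a b |)` stands for `pure f <*> a <*> b`. That the SugarHaskell library emits exactly this code is an assumption. The `Tree` type, its `Functor` and `Foldable` instances, and the helper `positive` are not part of the example above and are supplied here only to make the program complete.

```haskell
-- Assumed definition of the tree type used in the example.
data Tree a = Leaf | Node (Tree a) a (Tree a)
  deriving (Eq, Show)

instance Functor Tree where
  fmap _ Leaf         = Leaf
  fmap f (Node l x r) = Node (fmap f l) (f x) (fmap f r)

instance Foldable Tree where
  foldr _ z Leaf         = z
  foldr f z (Node l x r) = foldr f (f x (foldr f z r)) l

-- Plain-Haskell counterpart of the idiom-bracket version.
instance Traversable Tree where
  traverse _ Leaf         = pure Leaf
  traverse f (Node l x r) =
    pure Node <*> traverse f l <*> f x <*> traverse f r

-- Hypothetical effectful function for the demonstration.
positive :: Int -> Maybe Int
positive x = if x > 0 then Just x else Nothing

main :: IO ()
main = do
  print (traverse positive (Node Leaf 1 (Node Leaf 2 Leaf)))
  print (traverse positive (Node Leaf 0 Leaf))
```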

SugarHaskell provides both a compiler and an Eclipse-based IDE. The SugarHaskell compiler is available as a Hackage package [2] and can be easily installed using cabal-install. Since our system is implemented in Java, the SugarHaskell package requires a preinstalled Java runtime. Moreover, we distribute the source code via github, and involvement of others is welcome. The SugarHaskell IDE is available as an Eclipse plugin and can be installed from our Eclipse update site [3]. The IDE provides some standard editor services such as code coloring or outlining for Haskell, and is also extensible itself to accommodate user-defined editor services for SugarHaskell extensions.

SugarHaskell is a research prototype that is under active development. We work both on the implementation and on the conceptual foundations of the system. The feedback cycle is short and any feedback is appreciated.

Further reading

- [1], http://sugarj.org

- [2], http://hackage.haskell.org/package/sugarhaskell

- [3], Eclipse update site: http://sugarj.org/update

- [4], Sebastian Erdweg, Felix Rieger, Tillmann Rendel, and Klaus Ostermann. Layout-sensitive Language Extensibility with SugarHaskell. In Haskell Symposium, pages 149–160. ACM, 2012.

5 Haskell and …

5.1 Haskell and Parallelism

5.1.1 Eden

| Report by: | Rita Loogen |

| Participants: | in Madrid: Yolanda Ortega-Mallén, Mercedes Hidalgo, Lidia Sanchez-Gil, Fernando Rubio, Alberto de la Encina; in Marburg: Mischa Dieterle, Thomas Horstmeyer, Oleg Lobachev, Rita Loogen; in Copenhagen: Jost Berthold |

| Status: | ongoing |

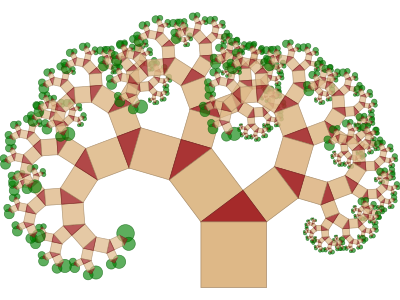

Eden extends Haskell with a small set of syntactic constructs for explicit process specification and creation. While providing enough control to implement parallel algorithms efficiently, it frees the programmer from the tedious task of managing low-level details by introducing automatic communication (via head-strict lazy lists), synchronization, and process handling.

Eden’s primitive constructs are process abstractions and process instantiations. The Eden logo consists of four λ turned in such a way that they form the Eden instantiation operator (#). Higher-level coordination is achieved by defining skeletons, ranging from a simple parallel map to sophisticated master-worker schemes. They have been used to parallelize a set of non-trivial programs.

Eden’s interface supports a simple definition of arbitrary communication topologies using Remote Data. A PA-monad enables the eager execution of user defined sequences of Parallel Actions in Eden.

Web Pages

Survey and standard reference

Rita Loogen, Yolanda Ortega-Mallén, and Ricardo Peña: Parallel Functional Programming in Eden, Journal of Functional Programming 15(3), 2005, pages 431–475.

Tutorial

Rita Loogen: Eden - Parallel Functional Programming in Haskell, in: V. Zsok, Z. Horvath, and R. Plasmeijer (Eds.): CEFP 2011, Springer LNCS 7241, 2012, pp. 142–206. (See also: http://www.mathematik.uni-marburg.de/~eden/?content=cefp)

Implementation

Eden is implemented by modifications to the Glasgow-Haskell Compiler (extending its runtime system to use multiple communicating instances). Apart from MPI or PVM in cluster environments, Eden supports a shared memory mode on multicore platforms, which uses multiple independent heaps but does not depend on any middleware. Building on this runtime support, the Haskell package edenmodules defines the language, and edenskels provides libraries of parallel skeletons.

The current stable release of the Eden compiler is based on GHC 7.4.2. Binary packages and source code are available on our web pages; the Eden libraries (Haskell-level) are also available via Hackage.

A newer variant based on GHC-7.6.1 (and matching Eden libraries) is available as source code via git repositories at http://james.mathematik.uni-marburg.de:8080/gitweb. We plan the next full release of Eden with the next (minor or major) GHC release.

Tools and libraries

The Eden trace viewer tool EdenTV provides a visualisation of Eden program runs on various levels. Activity profiles are produced for processing elements (machines), Eden processes and threads. In addition, message transfer can be shown between processes and machines. EdenTV is written in Haskell and is freely available on the Eden web pages and on Hackage.

The Eden skeleton library is under constant development. Currently it contains various skeletons for parallel maps, workpools, divide-and-conquer, topologies and many more. Take a look at the Eden pages.

Recent and Forthcoming Publications

- Mischa Dieterle, Thomas Horstmeyer, Jost Berthold, Rita Loogen: Iterating Skeletons — Structured Parallelism by Composition, Selected Papers of the Symposium on the Implementation and Application of Functional Languages (IFL 2012), LNCS, Springer 2013, to appear.

- M. KH. Aswad, P. W. Trinder, A. D. Al-Zain, G. J. Michaelson, J. Berthold: Comparing Low-Pain and No-Pain Multicore Haskells, revised and extended version of TFP 2009 paper, in Special Issue of Higher-Order and Symbolic Computation (HOSC), to appear.

- Thomas Horstmeyer and Rita Loogen: Graph-Based Communication in Eden, revised and extended version of TFP 2009 paper, in Special Issue of Higher-Order and Symbolic Computation (HOSC), to appear.

- Oleg Lobachev, Michael Guthe, Rita Loogen: Estimating parallel performance, Journal of Parallel and Distributed Computing, Vol 73, No. 6, June 2013, pp. 876–887.

Further reading

5.1.2 GpH — Glasgow Parallel Haskell

| Report by: | Hans-Wolfgang Loidl |

| Participants: | Phil Trinder, Patrick Maier, Mustafa Aswad, Malak Aljabri, Evgenij Belikov, Pantazis Deligianis, Robert Stewart, Prabhat Totoo (Heriot-Watt University); Kevin Hammond, Vladimir Janjic, Chris Brown (St Andrews University) |

| Status: | ongoing |

Status

A distributed-memory, GHC-based implementation of the parallel Haskell extension GpH and of a fundamentally revised version of the evaluation strategies abstraction is available in a prototype version. In current research an extended set of primitives, supporting hierarchical architectures of parallel machines, and extensions of the runtime-system for supporting these architectures are being developed.
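For readers unfamiliar with the GpH programming model, the following sketch shows its two basic coordination primitives, `par` (spark a computation for possible parallel evaluation) and `pseq` (sequence evaluation), as exported by `GHC.Conc` in the base library. It is an illustration of the primitive level only: it runs on a stock shared-memory GHC rather than the distributed-memory implementation described here, and it does not use the evaluation strategies library, which layers a composable abstraction on top of these primitives. The names `parMapSketch` and `fib` are invented for the example.

```haskell
import GHC.Conc (par, pseq)

-- A parallel map written directly with sparks: each element is
-- sparked with `par` while the rest of the list is being built.
parMapSketch :: (a -> b) -> [a] -> [b]
parMapSketch _ []       = []
parMapSketch f (x : xs) = y `par` (ys `pseq` (y : ys))
  where
    y  = f x
    ys = parMapSketch f xs

-- A deliberately naive function to give the sparks some work.
fib :: Int -> Integer
fib n
  | n < 2     = toInteger n
  | otherwise = fib (n - 1) + fib (n - 2)

main :: IO ()
main = print (sum (parMapSketch fib [20 .. 25]))
```

The result is the same as with an ordinary `map`; sparks only affect how the work may be scheduled, and they are evaluated in parallel only when the program is built with the threaded runtime and run on more than one capability.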

Main activities

We have been extending the set of primitives for parallelism in GpH, to provide enhanced control of data locality in GpH applications. Results from applications running on up to 256 cores of our Beowulf cluster demonstrate significant improvements in performance when using these extensions.

In the context of the SICSA MultiCore Challenge, we are comparing the performance of several parallel Haskell implementations (in GpH and Eden) with other functional implementations (F#, Scala and SAC) and with implementations produced by colleagues in a wide range of other parallel languages. The latest challenge application was the n-body problem. A summary of this effort is available on the following web page, and sources of several parallel versions will be uploaded shortly: http://www.macs.hw.ac.uk/sicsawiki/index.php/MultiCoreChallenge.

New work has been launched in the direction of inherently parallel data structures for Haskell and the use of such data structures in symbolic applications. This work aims to develop foundational building blocks for composing parallel Haskell applications, taking a data-centric point of view. Current work focuses on data structures such as append-trees to represent lists and quad-trees in an implementation of the n-body problem.

Another strand of development is the improvement of the GUM runtime-system to better deal with hierarchical and heterogeneous architectures, which are becoming increasingly important. We are revisiting basic resource policies, such as those for load distribution, and are exploring modifications that provide enhanced, adaptive behaviour for these target platforms.

GpH Applications

As part of the SCIEnce EU FP6 I3 project (026133) (April 2006 – December 2011) and the HPC-GAP project (October 2009 – September 2013) we use Eden, GpH and HdpH as middleware to provide access to computational Grids from Computer Algebra (CA) systems, in particular GAP. We have developed and released SymGrid-Par, a Haskell-side infrastructure for orchestrating heterogeneous computations across high-performance computational Grids. Based on this infrastructure we have developed a range of domain-specific parallel skeletons for parallelising representative symbolic computation applications. A Haskell-side interface to this infrastructure is available in the form of the Computer Algebra Shell CASH, which is downloadable from Hackage. We are currently extending SymGrid-Par with support for fault-tolerance, targeting massively parallel high-performance architectures.

Implementations

The latest GUM implementation of GpH is built on GHC 6.12, using either PVM or MPI as communications library. It implements a virtual shared memory abstraction over a collection of physically distributed machines. At the moment our main hardware platforms are Intel-based Beowulf clusters of multicores. We plan to connect several of these clusters into a wide-area, hierarchical, heterogeneous parallel architecture.

Further reading

http://www.macs.hw.ac.uk/~dsg/gph/

Contact

<gph at macs.hw.ac.uk>

5.1.3 Parallel GHC project

| Report by: | Duncan Coutts |

| Participants: | Duncan Coutts, Andres Löh, Mikolaj Konarski, Edsko de Vries |

| Status: | active |

Microsoft Research funded a 2-year project, which is now coming to an end, to promote the real-world use of parallel Haskell. The project involved industrial partners working on their own tasks using parallel Haskell, and consulting and engineering support from Well-Typed (→8.1). The overall goal has been to demonstrate successful serious use of parallel Haskell, and along the way to apply engineering effort to any problems with the tools that the organisations might run into. In addition we have put significant engineering work into a new implementation of Cloud Haskell.

The participating organisations are working on a diverse set of complex real world problems:

- Dragonfly (New Zealand): Hierarchical Bayesian Modeling

- Los Alamos National Laboratory (USA): high performance Monte Carlo algorithms to model the flow of radiation and other physical phenomena

- IIJ Innovation Institute Inc. (Japan): network servers handling a massive number of concurrent connections

- Telefonica I+D: processing large graphs representing social networks

As the project winds down, we will be publishing more details about the outcomes of these projects.

On the engineering side, the two main areas of focus in the project recently have been ThreadScope and Cloud Haskell.

ThreadScope. The latest release of ThreadScope (version 0.2.2) provides detailed statistics about heap and GC behaviour. It is much like the output that can be obtained by running your program with `+RTS -s`, but presented in a more friendly way and with the ability to see the same statistics for any period within the program, not just the entire program run. This work could be extended to show graphs of the heap size over time. Compared to GHC’s traditional heap profiling, this does not require recompiling in profiling mode and has very low overhead, but what is lost is the detailed breakdown of the heap by type, cost centre or retainer.

In addition there is a new feature to emit phase markers from user code and have these visualised in the ThreadScope timeline window.

These new features rely on the development version of GHC, and so will become generally available with GHC-7.8.
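
As a rough illustration of emitting phase markers from user code, the sketch below brackets two workloads with markers. It assumes GHC 7.8 or later, where Debug.Trace in base exports traceMarkerIO; the withPhase helper, the phase names, and the sumTo workload are made up for the example. Markers only reach the event log when the program is run with event logging enabled (for example with +RTS -l), and the resulting log can then be opened in ThreadScope.

```haskell
import Control.Exception (evaluate)
import Data.List (foldl')
import Debug.Trace (traceMarkerIO)

-- | Run an action between a "begin" and an "end" marker.
-- (Hypothetical helper, not part of any library.)
withPhase :: String -> IO a -> IO a
withPhase name action = do
  traceMarkerIO ("begin " ++ name)
  result <- action
  traceMarkerIO ("end " ++ name)
  return result

-- | A stand-in workload: the sum 1 + 2 + ... + n.
sumTo :: Int -> Int
sumTo n = foldl' (+) 0 [1 .. n]

-- | Two phases, each forced with 'evaluate' so that the work
-- really happens between its markers rather than lazily later.
runPhases :: IO Int
runPhases = do
  small <- withPhase "small sum" (evaluate (sumTo 1000))
  large <- withPhase "large sum" (evaluate (sumTo 100000))
  return (small + large)
```

Forcing the result inside the bracket matters: without evaluate, laziness could move the actual computation outside the marked region and the timeline would be misleading.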

Finally, there is an alpha release of an ambitious new feature to integrate data from Linux’s “perf” system into ThreadScope. The Linux “perf” system lets us see events in the OS such as system calls and other internal kernel trace points, and also collect detailed CPU performance counters. Our work has focused on capturing and transforming this data source, and integrating it with the existing RTS event tracing system, which we believe will enable many useful new visualisations. Our initial new visualisation in ThreadScope lets us see when system calls are occurring. We hope that this and other future work in this area will help developers who are trying to optimise the performance of applications like network servers.

Cloud Haskell. For about the last year we have been working on a new implementation of Cloud Haskell. This is the same idea for concurrent distributed programming in Haskell that Simon Peyton Jones has been telling everyone about, but it’s a new implementation designed to be robust and flexible.

The summary about the new implementation is that it exists, it works, it’s on hackage, and we think it is now ready for serious experiments.

Compared to the previous prototype:

- it is much faster;

- it can run on multiple kinds of network;

- it has backends to support different environments (like cluster or cloud);

- it has a new system for dealing with node disconnect and reconnect;

- it has a more precisely defined semantics;

- it supports composable, polymorphic serialisable closures;

- and internally the code is better structured and easier to work with.

By the time you read this, we will have also released a backend for the Windows Azure cloud platform. Backends for other environments should be relatively straightforward to develop.

Further details including papers, videos and blog posts are on the Cloud Haskell homepage.

Further reading

- Parallel GHC project homepage: http://www.haskell.org/haskellwiki/Parallel_GHC_Project

- Cloud Haskell homepage: http://www.haskell.org/haskellwiki/Cloud_Haskell

- ThreadScope homepage: http://www.haskell.org/haskellwiki/ThreadScope

5.2 Haskell and the Web

5.2.1 WAI

The Web Application Interface (WAI) is an interface between Haskell web applications and Haskell web servers. By targeting the WAI, a web framework or web application gets access to multiple deployment platforms. Platforms in use include CGI, the Warp web server, and desktop webkit.

WAI has mostly been stable since the last HCAR, with the exception of a newly added field to represent the request body length. This avoids repeatedly doing a costly integer parse, and correctly handles the case of chunked bodies at the type level. WAI has also been updated to allow the newest version of the conduit (→7.1.1) package.

WAI is also a platform for re-using code between web applications and web frameworks through WAI middleware and WAI applications. WAI middleware can inspect and transform a request, for example by automatically gzipping a response or logging a request. The Yesod (→5.2.6) web framework provides the ability to embed arbitrary WAI applications as subsites, making them a part of a larger web application.
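
The application/middleware split can be sketched with a deliberately simplified model. The Request and Response records below are hypothetical stand-ins, far smaller than the real WAI types, and the real Application type also differs between WAI versions; only the shape of the idea (an application is a function from request to response, a middleware is a function from application to application) is meant to carry over.

```haskell
import Data.Char (toUpper)

-- Simplified, hypothetical stand-ins for the real WAI types.
data Request = Request { requestPath :: String }

data Response = Response { status :: Int, body :: String }
  deriving (Eq, Show)

-- An application turns a request into a response.
type Application = Request -> IO Response

-- A middleware wraps one application to produce another.
type Middleware = Application -> Application

-- | A tiny application that echoes the requested path.
helloApp :: Application
helloApp req = return (Response 200 ("hello from " ++ requestPath req))

-- | Middleware that transforms the response (here: upper-cases the body).
shout :: Middleware
shout app req = do
  Response code text <- app req
  return (Response code (map toUpper text))

-- | Middleware that inspects the request and only forwards one path.
onlyPath :: String -> Middleware
onlyPath path app req
  | requestPath req == path = app req
  | otherwise               = return (Response 404 "not found")
```

Because middleware is ordinary function composition, onlyPath "/hi" (shout helloApp) is itself an application, and any server that can run an application can run the wrapped one unchanged.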

By targeting WAI, every web framework can share WAI code instead of wasting effort re-implementing the same functionality. Some new WAI-based web frameworks take a completely different approach to web development, such as webwire (FRP) and dingo (GUI). The Scotty web framework also continues to be developed, and provides a lighter-weight alternative to Yesod. Other frameworks, whether existing or newcomers, are welcome to take advantage of the existing WAI architecture to focus on the more innovative features of web development.

WAI applications can send a response themselves. For example, wai-app-static is used by Yesod to serve static files. However, one does not need to use a web framework, but can simply build a web application using the WAI interface alone. The Hoogle web service targets WAI directly.

The WAI standard has proven itself capable for different users and there are no outstanding plans for changes or improvements.

Further reading

5.2.2 Warp

Warp is a high-performance, easy-to-deploy HTTP server backend for WAI (→5.2.1). Since the last HCAR, Warp has switched from enumerators to conduits (→7.1.1) and added SSL support and websockets integration.

Due to the combined use of ByteStrings, blaze-builder, conduit, and GHC’s improved I/O manager, WAI+Warp has consistently proven to be Haskell’s most performant web deployment option.

Most users of WAI (and Yesod) deploy their applications on Warp.

“Warp: A Haskell Web Server” by Michael Snoyman was published in the May/June 2011 issue of IEEE Internet Computing:

5.2.3 Holumbus Search Engine Framework

| Report by: | Uwe Schmidt |

| Participants: | Timo B. Kranz, Sebastian Gauck, Stefan Schmidt |

| Status: | first release |

Description

The Holumbus framework consists of a set of modules and tools for creating fast, flexible, and highly customizable search engines with Haskell. The framework consists of two main parts. The first part is the indexer, which extracts the data of a given type of documents, e.g., documents of a web site, and stores it in an appropriate index. The second part is the search engine for querying the index.
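
The indexer/search split can be illustrated with a minimal inverted index. This is a sketch of the general technique using Data.Map and Data.Set from the containers package, not Holumbus code; the names DocId, Index, tokenize, indexDocs and search are all made up for the example.

```haskell
import Data.Char (isAlphaNum, toLower)
import qualified Data.Map.Strict as Map
import qualified Data.Set as Set

type DocId = Int

-- | Inverted index: each word maps to the documents containing it.
type Index = Map.Map String (Set.Set DocId)

-- | Lower-case the text and split it on anything non-alphanumeric.
tokenize :: String -> [String]
tokenize = words . map (\c -> if isAlphaNum c then toLower c else ' ')

-- | The "indexer": extract words from documents and store them.
indexDocs :: [(DocId, String)] -> Index
indexDocs docs =
  Map.fromListWith Set.union
    [ (w, Set.singleton d) | (d, txt) <- docs, w <- tokenize txt ]

-- | The "search engine": documents containing every query word.
search :: Index -> String -> [DocId]
search ix query =
  case map lookupWord (tokenize query) of
    []   -> []
    sets -> Set.toAscList (foldr1 Set.intersection sets)
  where
    lookupWord w = Map.findWithDefault Set.empty w ix
```

Building the index once and querying it many times is the point of the split: search never looks at the original documents again.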

An instance of the Holumbus framework is the Haskell API search engine Hayoo! (http://holumbus.fh-wedel.de/hayoo/).

The framework supports distributed computations for building indexes and searching indexes. This is done with a MapReduce-like framework. The MapReduce framework is independent of the index- and search-components, so it can be used to develop distributed systems with Haskell.
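
For readers unfamiliar with the MapReduce pattern, here is a minimal single-machine sketch. It shows only the map, group-by-key and reduce phases; it does not distribute any work and does not use the Holumbus MapReduce API. The function names mapReduce and wordCount are chosen for the example.

```haskell
import qualified Data.Map.Strict as Map

-- | Map every input to key/value pairs, group the values by key
-- (preserving their order of appearance), then reduce each group.
mapReduce
  :: Ord k
  => (a -> [(k, v)])   -- ^ map phase
  -> (k -> [v] -> r)   -- ^ reduce phase
  -> [a]
  -> Map.Map k r
mapReduce mapper reducer inputs = Map.mapWithKey reducer grouped
  where
    -- fromListWith applies its function as (f new old), so
    -- flip (++) appends new values after the ones seen earlier.
    grouped =
      Map.fromListWith (flip (++))
        [ (k, [v]) | x <- inputs, (k, v) <- mapper x ]

-- | The classic example: count word occurrences across lines.
wordCount :: [String] -> Map.Map String Int
wordCount =
  mapReduce (\line -> [ (w, 1) | w <- words line ])
            (\_ counts -> sum counts)
```

In a distributed setting the map calls and the per-key reduce calls are independent of each other, which is what makes the pattern easy to spread over many machines.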

The framework is now separated into four packages, all available on Hackage.

- The Holumbus Search Engine

- The Holumbus Distribution Library

- The Holumbus Storage System

- The Holumbus MapReduce Framework

The search engine package includes the indexer and search modules; the MapReduce package bundles the distributed MapReduce system. The latter is based on two other packages, which may be useful on their own: the Distribution Library, with a message-passing communication layer, and a distributed storage system.

Features

- Highly configurable crawler module for flexible indexing of structured data

- Customizable index structure for an effective search

- Find-as-you-type search

- Suggestions

- Fuzzy queries

- Customizable result ranking

- Index structure designed for distributed search

- Git repository containing the current development version of all packages under https://github.com/fortytools/holumbus

- Distributed building of search indexes

Current Work

Currently there are activities to optimize the index structures of the framework. In the past there have been problems with the space requirements during indexing. The data structures and evaluation strategies have been optimized to prevent space leaks. A second index structure working with cryptographic keys for document identifiers is under construction. This will further simplify partial indexing and merging of indexes.

There is a small project extracting the sources of the data structure used for the index to build a separate package. The search tree used in Holumbus is a space optimised version of a radix tree, which enables fast prefix and fuzzy search.
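
To show why a prefix tree suits find-as-you-type search, here is a small sketch of a plain character trie with prefix lookup. It is an assumption-laden simplification: the Holumbus structure is a space-optimised radix tree, whereas this version stores one character per node and makes no attempt at compression or fuzzy matching. All names are invented for the example.

```haskell
import qualified Data.Map.Strict as Map

-- | One node per character; 'isWord' marks the end of a stored word.
data Trie = Trie
  { isWord   :: Bool
  , children :: Map.Map Char Trie
  }

emptyTrie :: Trie
emptyTrie = Trie False Map.empty

-- | Insert a word, creating nodes along its path as needed.
insertWord :: String -> Trie -> Trie
insertWord []       t = t { isWord = True }
insertWord (c : cs) t =
  t { children = Map.insert c (insertWord cs child) (children t) }
  where
    child = Map.findWithDefault emptyTrie c (children t)

-- | All stored words below a node, in lexicographic order.
allWords :: Trie -> [String]
allWords t =
  [ "" | isWord t ]
    ++ [ c : w | (c, sub) <- Map.toAscList (children t), w <- allWords sub ]

-- | All stored words that start with the given prefix.
withPrefix :: String -> Trie -> [String]
withPrefix prefix = go prefix
  where
    go []       t = map (prefix ++) (allWords t)
    go (c : cs) t = maybe [] (go cs) (Map.lookup c (children t))
```

A prefix query walks one node per typed character and then enumerates a single subtree, so its cost depends on the prefix length and the number of matches rather than on the size of the whole index.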

The second project, a specialized search engine for the FH-Wedel web site, has been finished (http://w3w.fh-wedel.de/). The new aspect in this application is a specialized free text search for appointments, deadlines, announcements, meetings and other dates.

The Hayoo! and the FH-Wedel search engine have been adapted to run on top of the Snap framework (→5.2.7).

Further reading

The Holumbus web page (http://holumbus.fh-wedel.de/) includes downloads, Git web interface, current status, requirements, and documentation. Timo Kranz’s master’s thesis describing the Holumbus index structure and the search engine is available at http://holumbus.fh-wedel.de/branches/develop/doc/thesis-searching.pdf. Sebastian Gauck’s thesis dealing with the crawler component is available at http://holumbus.fh-wedel.de/src/doc/thesis-indexing.pdf. The thesis of Stefan Schmidt describing the Holumbus MapReduce is available via http://holumbus.fh-wedel.de/src/doc/thesis-mapreduce.pdf.

5.2.4 Happstack

Happstack is a fast, modern framework for creating web applications. Happstack is well suited for MVC and RESTful development practices. We aim to leverage the unique characteristics of Haskell to create a highly-scalable, robust, and expressive web framework.

Happstack pioneered type-safe Haskell web programming, with the creation of technologies including web-routes (type-safe URLs) and acid-state (native Haskell database system). We also extended the concepts behind formlets, a type-safe form generation and processing library, to allow the separation of the presentation and validation layers.

Some of Happstack’s unique advantages include:

- a large collection of flexible, modular, and well documented libraries which allow the developer to choose the solution that best fits their needs for databases, templating, routing, etc.

- the most flexible and powerful system for defining type-safe URLs.

- a type-safe form generation and validation library which allows the separation of validation and presentation without sacrificing type-safety

- a powerful, compile-time HTML templating system, which allows the use of XML syntax
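
The idea behind type-safe URLs can be sketched without any library: routes are values of an ordinary data type, and URLs are only ever produced from, or parsed into, that type. The code below is an illustrative assumption and does not use the web-routes API; Sitemap, toPath, fromPath and splitPath are names invented for the example.

```haskell
import Data.Char (isDigit)
import Data.List (intercalate)

-- | Every page of a (hypothetical) site is a constructor.
data Sitemap
  = Home
  | Article Int
  | UserProfile String
  deriving (Eq, Show)

-- | Render a route as a URL path. Links built this way cannot
-- point at a route that does not exist.
toPath :: Sitemap -> String
toPath route = "/" ++ intercalate "/" (segments route)
  where
    segments Home               = []
    segments (Article n)        = ["article", show n]
    segments (UserProfile name) = ["user", name]

-- | Split a path on '/', dropping empty segments.
splitPath :: String -> [String]
splitPath s =
  case break (== '/') s of
    (seg, [])       -> [ seg | not (null seg) ]
    (seg, _ : rest) -> [ seg | not (null seg) ] ++ splitPath rest

-- | Parse an incoming path back into a route, if it is valid.
fromPath :: String -> Maybe Sitemap
fromPath path =
  case splitPath path of
    []                -> Just Home
    ["article", n]
      | not (null n), all isDigit n -> Just (Article (read n))
    ["user", name]    -> Just (UserProfile name)
    _                 -> Nothing
```

If a constructor is renamed or given another argument, every place that builds a link to it stops compiling, which is how broken internal links become compile-time errors. The sketch only round-trips non-negative article numbers and user names without slashes.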

A recent addition to the Happstack family is the happstack-foundation library. It combines what we believe to be the best choices into a nicely integrated solution. happstack-foundation uses:

- happstack-server for low-level HTTP functionality

- acid-state for type-safe database functionality

- web-routes for type-safe URL routing

- reform for type-safe form generation and processing

- HSP for compile-time, XML-based HTML templates

- JMacro for compile-time Javascript generation and syntax checking

Future plans

Happstack is the oldest, actively developed Haskell web framework. We are continually studying and applying new ideas to keep Happstack fresh. By the time the next release is complete, we expect very little of the original code will remain. If you have not looked at Happstack in a while, we encourage you to come take a fresh look at what we have done.

Some of the projects we are currently working on include:

- a fast pipes-based HTTP and websockets backend with a high level of evidence for correctness

- a dynamic plugin loading system

- a more expressive system for weakly typed URL routing combinators

- a new system for processing form data which allows fine grained enforcement of RAM and disk quotas and avoids the use of temporary files

- a major refactoring of HSP (fewer packages, migration to Text/Builder, a QuasiQuoter, and more).

One focus of Happstack development is to create independent libraries that can be easily reused. For example, the core web-routes and reform libraries are in no way Happstack specific and can be used with other Haskell web frameworks. Additionally, libraries that used to be bundled with Happstack, such as IxSet, SafeCopy, and acid-state, are now independent libraries. The new backend will also be available as an independent library.

When possible, we prefer to contribute to existing libraries rather than reinvent the wheel. For example, our preferred templating library, HSP, was created by and is still maintained by Niklas Broberg. However, a significant portion of HSP development in recent years has been fueled by the Happstack team.

We are also working directly with the Fay team to bring improved type safety to client-side web programming. In addition to the new happstack-fay integration library, we are also contributing directly to Fay itself.

For more information check out the happstack.com website — especially the “Happstack Philosophy” and “Happstack 8 Roadmap”.

Further reading

5.2.5 Mighttpd2 — Yet another Web Server

| Report by: | Kazu Yamamoto |

| Status: | open source, actively developed |

Mighttpd (called mighty) version 2 is a simple but practical Web server in Haskell. It is now in use on Mew.org, serving static files and CGI (mailman and contents search) and acting as a reverse proxy for back-end Yesod applications.

Mighttpd is based on Warp, providing performance on par with nginx. You can use the mightyctl command to reload configuration files dynamically and shut down Mighttpd gracefully.

You can install Mighttpd 2 (mighttpd2) from HackageDB.

Further reading

5.2.6 Yesod

| Report by: | Michael Snoyman |

| Participants: | Greg Weber, Luite Stegeman, Felipe Lessa |

| Status: | stable |

Yesod is a traditional MVC RESTful framework. By applying Haskell’s strengths to this paradigm, Yesod helps users create highly scalable web applications.

Performance scalability comes from the amazing GHC compiler and runtime. GHC provides fast code and built-in evented asynchronous IO.

But Yesod is even more focused on scalable development. The key to achieving this is applying Haskell’s type-safety to an otherwise traditional MVC REST web framework.

Of course, type safety guards against typos and against passing the wrong type to a function. But Yesod cranks this up a notch to guarantee that common web application errors won’t occur.

- declarative routing with type-safe urls — say goodbye to broken links

- no XSS attacks — form submissions are automatically sanitized

- database safety through the Persistent library (→7.6.2) — no SQL injection and queries are always valid

- valid template variables with proper template insertion — variables are known at compile time and treated differently according to their type using the Shakespearean templating system.
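
The XSS guarantee rests on a simple typing trick, which the following sketch illustrates in isolation. It is not Yesod or Shakespeare code; Html, text, tag and greeting are names invented here. The assumption is that the Html constructor would be hidden behind a module boundary, so that the only way to turn an untrusted String into Html is through the escaping function.

```haskell
-- | Markup that is already safe to send to a browser.
-- In a real library the constructor would not be exported.
newtype Html = Html { renderHtml :: String }

-- | Replace the characters that are special in HTML.
escape :: String -> String
escape = concatMap escapeChar
  where
    escapeChar '&'  = "&amp;"
    escapeChar '<'  = "&lt;"
    escapeChar '>'  = "&gt;"
    escapeChar '"'  = "&quot;"
    escapeChar '\'' = "&#39;"
    escapeChar c    = [c]

-- | The only route from an untrusted string to 'Html'.
text :: String -> Html
text = Html . escape

-- | Wrap existing markup in an element; the name is trusted.
tag :: String -> Html -> Html
tag name (Html inner) =
  Html ("<" ++ name ++ ">" ++ inner ++ "</" ++ name ++ ">")

-- | User input is a String, so it must pass through 'text'.
greeting :: String -> Html
greeting userName = tag "p" (text ("Hello, " ++ userName))
```

Passing a raw String where Html is expected is a type error, so forgetting to escape cannot happen silently.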

When type safety conflicts with programmer productivity, Yesod is not afraid to use Haskell’s most advanced features of Template Haskell and quasi-quoting to provide easier development for its users. In particular, these are used for declarative routing, declarative schemas, and compile-time templates.

MVC stands for model-view-controller. The preferred library for models is Persistent (→7.6.2). Views can be handled by the Shakespeare family of compile-time template languages. This includes Hamlet, which takes the tedium out of HTML. Both of these libraries are optional, and you can use any Haskell alternative. Controllers are invoked through declarative routing and can return different representations of a resource (html, json, etc).

Yesod is broken up into many smaller projects and leverages Wai (→5.2.1) to communicate with the server. This means that many of the powerful features of Yesod can be used in different web development stacks that use WAI such as Scotty.

The new 1.2 release of Yesod introduces a number of simplifications, especially to the subsite handling. Most applications should be able to upgrade easily. Some of the notable features are:

- Much more powerful multi-representation support via the selectRep/provideRep API.

- More efficient session handling.

- All Handler functions live in a typeclass, providing you with auto-lifting.

- Type-based caching of responses via the cached function.

- More sensible subsite handling, switch to HandlerT/WidgetT transformers.

- Simplified dispatch system, including a lighter-weight Yesod.

- Simplified streaming data mechanism, for both database and non-database responses.

- Completely overhauled yesod-test, making it easier to use and providing cleaner integration with hspec.

- yesod-auth’s email plugin now supports logging in via username in addition to email address.

- Refactored persistent module structure for clarity and ease-of-use.

- Easy asset combining for static javascript and css files

- Faster shakespeare template reloading and support for TypeScript templates.

Since the 1.0 release, Yesod has maintained a high level of API stability, and we intend to continue this tradition. The 1.2 release introduces a lot of potential code breakage, but most of the required updates should be very straightforward. Future directions for Yesod are now largely driven by community input and patches. We’ve been making progress on the goal of easier client-side interaction, and have high-level interaction with languages like Fay, TypeScript, and CoffeeScript.

The Yesod site (http://www.yesodweb.com/) is a great place for information. It has code examples, screencasts, the Yesod blog and — most importantly — a book on Yesod.

To see an example site with source code available, you can view the Haskellers (→1.2) source code (https://github.com/snoyberg/haskellers).

Further reading

5.2.7 Snap Framework

| Report by: | Doug Beardsley |

| Participants: | Gregory Collins, Shu-yu Guo, James Sanders, Carl Howells, Shane O’Brien, Ozgun Ataman, Chris Smith, Jurrien Stutterheim, Gabriel Gonzalez, and others |

| Status: | active development |

The Snap Framework is a web application framework built from the ground up for speed, reliability, and ease of use. The project’s goal is to be a cohesive high-level platform for web development that leverages the power and expressiveness of Haskell to make building websites quick and easy.

The Snap Framework continues to have a lot of activity since the last HCAR. We released Snap 0.10 which included a major redesign of the Heist template system with a huge performance improvement. That was followed by 0.11 as we continued to develop a higher level API on top of the new compiled Heist functionality that was introduced in 0.10.

The Snap team also released a new package called io-streams that provides a streaming I/O solution focused on simplicity and ease of use. The io-streams library includes a comprehensive test suite with 100% code coverage. The io-streams release was accompanied by a package providing OpenSSL support and a third-party HTTP client library called http-streams.

All in all, we are very happy with the continued growth of the Snap ecosystem. Going forward, we are working on a new Snap server built on io-streams. Also, several of the core Snap developers have full-time employment working with Snap in production systems and are continuing to develop new higher-level libraries and tools for commercial Haskell deployments. Join the team in the #snapframework IRC channel on Freenode to keep up with all the latest developments.

Further reading

- io-streams release announcement: http://snapframework.com/blog/2013/03/05/announcing-io-streams

- Snaplet Directory: http://snapframework.com/snaplets

- http://snapframework.com

5.2.8 Sunroof

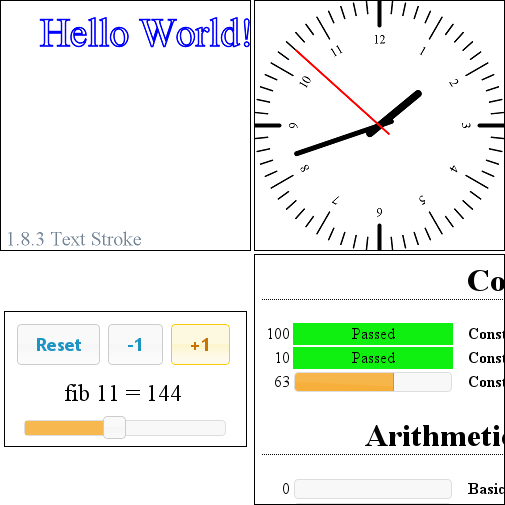

Sunroof is a Domain Specific Language (DSL) for generating JavaScript. It is built on top of the JS-monad, which, like the Haskell IO-monad, allows read and write access to external resources, but specifically JavaScript resources. As such, Sunroof is primarily a feature-rich foreign function API to the browser’s JavaScript engine, and all the browser-specific functionality, like HTML-based rendering, event handling, and drawing to the HTML5 canvas.

Furthermore, Sunroof offers two threading models for building on top of JavaScript, atomic and blocking threads. This allows full access to JavaScript APIs, but using Haskell concurrency abstractions, like MVars and Channels. In combination with the push mechanism Kansas-Comet, Sunroof offers a great platform to build interactive web applications, giving the ability to interleave Haskell and JavaScript computations with each other as needed.

It has successfully been used to write smaller applications. These applications range from 2D rendering using the HTML5 canvas element, over small GUIs, up to executing the QuickCheck tests of Sunroof and displaying the results in a neat fashion. The development has been active over the past 6 months and there is a drafted paper submitted to TFP 2013.
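
The basic idea of generating JavaScript from Haskell values can be shown with a toy expression language. This sketch is not Sunroof's API and leaves out the JS-monad, threading and the browser bindings entirely; JSExpr, render and assign are hypothetical names. It only shows how a Num instance lets ordinary Haskell arithmetic build a JavaScript expression.

```haskell
import Data.List (intercalate)

-- | A tiny abstract syntax for JavaScript expressions.
data JSExpr
  = Lit Integer
  | Var String
  | BinOp String JSExpr JSExpr
  | Call String [JSExpr]

-- | Haskell arithmetic on 'JSExpr' builds syntax instead of computing.
instance Num JSExpr where
  (+)         = BinOp "+"
  (-)         = BinOp "-"
  (*)         = BinOp "*"
  negate      = BinOp "-" (Lit 0)
  abs e       = Call "Math.abs" [e]
  signum e    = Call "Math.sign" [e]
  fromInteger = Lit

-- | Pretty-print an expression as JavaScript source,
-- fully parenthesised so that precedence never matters.
render :: JSExpr -> String
render (Lit n)        = show n
render (Var v)        = v
render (BinOp op a b) = "(" ++ render a ++ " " ++ op ++ " " ++ render b ++ ")"
render (Call f args)  = f ++ "(" ++ intercalate ", " (map render args) ++ ")"

-- | A single variable declaration statement.
assign :: String -> JSExpr -> String
assign name e = "var " ++ name ++ " = " ++ render e ++ ";"
```

For instance, assign "y" (Var "x" * 2 + 1) yields the string var y = ((x * 2) + 1); which a browser could then evaluate.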

Further reading

- Homepage: http://www.ittc.ku.edu/csdl/fpg/software/sunroof.html

- Tutorial: https://github.com/ku-fpg/sunroof-compiler/wiki/Tutorial

- Main Repository: https://github.com/ku-fpg/sunroof-compiler

5.3 Haskell and Compiler Writing

5.3.1 MateVM

MateVM is a method-based Java Just-In-Time Compiler. That is, it compiles a method to native code on demand (i.e. on the first invocation of a method). We use existing libraries:

- hs-java: for processing Java class files according to The Java Virtual Machine Specification.

- harpy: enables runtime code generation for i686 machines in Haskell, in a domain specific language style.

In the current state we are able to execute simple Java programs. The compiler eliminates the JavaVM stack via abstract interpretation, does a liveness analysis, linear scan register allocation and finally code emission. The architecture enables easy addition of further optimization passes on an intermediate representation.
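
As an illustration of the liveness analysis step, the sketch below computes live variables for straight-line code only. It is not taken from MateVM: a real compiler works on a control-flow graph and iterates to a fixed point, while this version handles a single basic block in one backward pass. The Instr type and the variable names are assumptions made for the example.

```haskell
import qualified Data.Set as Set

-- | An instruction, reduced to the variables it writes and reads.
data Instr = Instr
  { defs :: [String]
  , uses :: [String]
  }

-- | Live-in set of one instruction, given its live-out set:
-- liveIn = uses + (liveOut - defs).
liveIn :: Instr -> Set.Set String -> Set.Set String
liveIn i liveOut =
  Set.fromList (uses i)
    `Set.union` (liveOut `Set.difference` Set.fromList (defs i))

-- | Live-in sets for every instruction of a basic block, computed
-- backwards from the variables live at the end of the block.
liveness :: Set.Set String -> [Instr] -> [Set.Set String]
liveness liveAtEnd = init . scanr liveIn liveAtEnd

-- | Example block:
--     a = 1
--     b = a + 2
--     c = a + b
--     return c
exampleBlock :: [Instr]
exampleBlock =
  [ Instr ["a"] []
  , Instr ["b"] ["a"]
  , Instr ["c"] ["a", "b"]
  , Instr []    ["c"]
  ]
```

The resulting sets tell a linear scan register allocator how long each variable must stay in a register: in the example, a is live across the second and third instructions, and nothing is live before the first.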

Future plans are to add an interpreter to gather profile information for the compiler and to do more aggressive optimizations (e.g. method inlining or stack allocation), using the interpreter as a fallback path via deoptimization if an assumption is violated.

Apart from that, many features are missing for a full JavaVM; most notable are class loaders, floating point, and threads. We would like to use GNU Classpath as base library some day. Other hot topics are Hoopl and Garbage Collection.

If you are interested in this project, do not hesitate to join us on IRC (#MateVM @ OFTC) or contact us on Github.

Further reading

5.3.2 CoCoCo

| Report by: | Marcos Viera |

| Participants: | Doaitse Swierstra, Arthur Baars, Arie Middelkoop, Atze Dijkstra, Wouter Swierstra |

| Status: | experimental |

CoCoCo (Compositional Compiler Construction) is a set of libraries and tools in the form of a collection of embedded domain specific languages (EDSL) in Haskell for constructing extensible compilers, where compilers can be composed out of separately compiled and statically type checked language-definition fragments.

Our approach builds on:

- the introduction of a naming structure which makes it possible to represent mutually dependent structures and the possibility to inspect and manipulate such structures in a type-safe way

- the description of typed grammar fragments as first class Haskell values, and the typed Left-Corner Transform to remove left-recursion

- the possibility to construct a self-analysing, error correcting parser on the fly

- the possibility to deal with attribute grammars as first class Haskell values, which can be transformed, composed and finally evaluated.

As a case study we have implemented an Oberon0 compiler, which is available as a Hackage package:

Its implementation is described in a technical report: Viera, M., Swierstra, S.D.: Compositional Compilers Construction: Oberon0. UU-CS 2012-016, Institute of Information and Computing Science (October 2012).

Related Libraries

- murder: The murder library is an EDSL for grammar fragments as first-class values. It provides combinators to define and extend grammars, and produce compilers out of them. http://hackage.haskell.org/package/murder

- AspectAG: Library of strongly typed Attribute Grammars implemented using type-level programming. http://hackage.haskell.org/package/AspectAG

- TTTAS: Library for Typed Transformations of Typed Abstract Syntax. http://hackage.haskell.org/package/TTTAS

- uulib: Fast Parser Combinators and Pretty Printing Combinators. http://hackage.haskell.org/package/uulib

- uu-parsinglib: New version of the Utrecht University parser combinator library, which provides online, error correction, annotation free, applicative style parser combinators. http://hackage.haskell.org/package/uu-parsinglib

Further reading

5.3.3 UUAG

| Report by: | Jeroen Bransen |

| Participants: | ST Group of Utrecht University |

| Status: | stable, maintained |

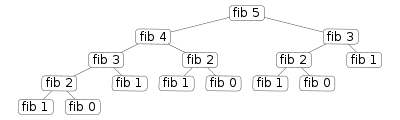

UUAG is the Utrecht University Attribute Grammar system. It is a preprocessor for Haskell that makes it easy to write catamorphisms, i.e., functions that do to any data type what foldr does to lists. Tree walks are defined using the intuitive concepts of inherited and synthesized attributes, while keeping the full expressive power of Haskell. The generated tree walks are efficient in both space and time.

An AG program is a collection of rules, which are pure Haskell functions between attributes. Idiomatic tree computations are neatly expressed in terms of copy, default, and collection rules. Attributes themselves can masquerade as subtrees and be analyzed accordingly (higher-order attribute). The order in which to visit the tree is derived automatically from the attribute computations. The tree walk is a single traversal from the perspective of the programmer.
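
For readers new to attribute grammars, the following hand-written sketch shows what such a tree walk looks like in plain Haskell; it is the kind of code a preprocessor like UUAG saves one from writing, not UUAG syntax or UUAG output. The Tree type and the attribute names are chosen for the example. A synthesized attribute flows upwards as the result of the fold, and an inherited attribute flows downwards by letting the fold return a function of it.

```haskell
-- | A small binary tree with integers at the leaves.
data Tree
  = Leaf Int
  | Node Tree Tree

-- | The catamorphism for 'Tree': it does to trees what foldr
-- does to lists, replacing each constructor by a function.
foldTree :: (Int -> r) -> (r -> r -> r) -> Tree -> r
foldTree leaf node = go
  where
    go (Leaf n)   = leaf n
    go (Node l r) = node (go l) (go r)

-- | Synthesized attribute: the sum of all leaves, computed bottom-up.
sumTree :: Tree -> Int
sumTree = foldTree id (+)

-- | Inherited attribute: the depth is passed down from the root.
-- The fold's result type is a function from the inherited depth
-- to the synthesized list of leaf depths (left to right).
leafDepths :: Tree -> [Int]
leafDepths t = foldTree leaf node t 0
  where
    leaf _ depth     = [depth]
    node l r depth   = l (depth + 1) ++ r (depth + 1)
```

With more attributes this encoding quickly becomes tedious to maintain by hand, since every new attribute changes the result type of the fold.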

Nonterminals (data types), productions (data constructors), attributes, and rules for attributes can be specified separately, and are woven and ordered automatically. These aspect-oriented programming features make AGs convenient to use in large projects.

The system is in use by a variety of large and small projects, such as the Utrecht Haskell Compiler UHC (→3.3), the editor Proxima for structured documents (http://www.haskell.org/communities/05-2010/html/report.html#sect6.4.5), the Helium compiler (http://www.haskell.org/communities/05-2009/html/report.html#sect2.3), the Generic Haskell compiler, UUAG itself, and many master student projects. The current version is 0.9.42.3 (April 2013), is extensively tested, and is available on Hackage. There is also a Cabal plugin for easy use of AG files in Haskell projects.

Some of the recent changes to the UUAG system are:

- OCaml support. We have added OCaml code generation such that UUAG can also be used in OCaml projects.

- Improved build system. We have improved the building procedure to make sure that the UUAGC can be built both from source and from the included generated Haskell sources, without the need for an external bootstrap program.

- First-class AGs. We provide a translation from UUAG to AspectAG (http://www.haskell.org/communities/11-2011/html/report.html#sect5.4.2). AspectAG is a library of strongly typed Attribute Grammars implemented using type-level programming. With this extension, we can write the main part of an AG conveniently with UUAG, and use AspectAG for (dynamic) extensions. Our goal is to have an extensible version of the UHC.

- Ordered evaluation. We have implemented a variant of Kennedy and Warren (1976) for ordered AGs. For any absolutely non-circular AG, this algorithm finds a static evaluation order, which solves some of the problems we had with an earlier approach for ordered AGs. A static evaluation order allows the generated code to be strict, which is important to reduce the memory usage when dealing with large ASTs. The generated code is purely functional, does not require type annotations for local attributes, and the Haskell compiler proves that the static evaluation order is correct.

We are currently working on the following enhancements:

- Incremental evaluation. We are currently also running a Ph.D. project that investigates incremental evaluation of AGs. In this ongoing work we hope to improve the UUAG compiler by adding support for incremental evaluation, for example by statically generating different evaluation orders based on changes in the input.

Further reading

5.3.4 LQPL — A Quantum Programming Language Compiler and Emulator

| Report by: | Brett G. Giles |

| Participants: | Dr. J.R.B. Cockett and Rajika Kumarasiri |

| Status: | v 0.9.0 experimental released in July 2012 |

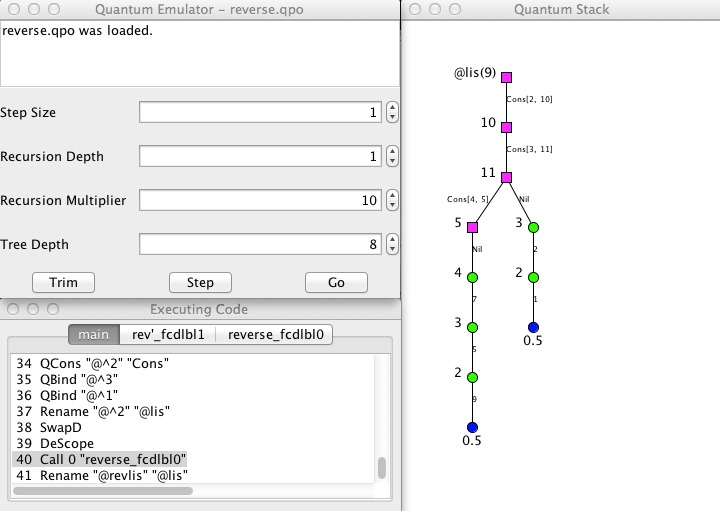

LQPL (Linear Quantum Programming Language) is a functional quantum programming language inspired by Peter Selinger’s paper “Towards a Quantum Programming Language”.

The LQPL system consists of a compiler, a GUI based front end and an emulator. Compiled programs are loaded to the emulator by the front end. LQPL incorporates a simple module / include system (more like C’s include than Haskell’s import), predefined unitary transforms, quantum control and classical control, algebraic data types, and operations on purely classical data.

The largest difference since the previous release of the package is that LQPL is now split into separate components. These consist of:

- The compiler (written in Haskell) — available at the command line and via a TCP/IP interface.

- The emulator (written in Haskell) — available as a server via a TCP/IP interface.

- The front end (Java/Swing) — with version 0.9, the front end was rewritten as a Java/Swing application, which connects to both the compiler and the emulator via TCP/IP. A text based / command line interface is being considered.

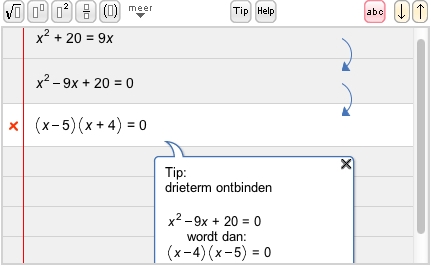

A screenshot of the new interface (showing a probabilistic list) is included below.

Quantum programming allows us to provide a fair coin toss, as shown in the code example below.

```
toss ::( ; c:Coin) =
{ q = |0>; Had q;
  measure q of
    |0> => {c = Heads}
    |1> => {c = Tails}
}
```
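
To see why the coin toss above is fair, the arithmetic can be worked through in a few lines of Haskell. This is a classical calculation of the measurement probabilities, not LQPL and not the LQPL emulator; it assumes real amplitudes (general qubits need complex ones), and the names Qubit, ketZero, hadamard, probabilities and toss are invented here. The random number is passed in explicitly to keep the sketch pure.

```haskell
-- | A qubit as the (real) amplitudes of |0> and |1>.
type Qubit = (Double, Double)

data Coin = Heads | Tails
  deriving (Eq, Show)

-- | The state |0>.
ketZero :: Qubit
ketZero = (1, 0)

-- | The Hadamard transform ("Had" in the LQPL program).
hadamard :: Qubit -> Qubit
hadamard (a, b) = ((a + b) / sqrt 2, (a - b) / sqrt 2)

-- | Probabilities of measuring |0> and |1>: squared amplitudes.
probabilities :: Qubit -> (Double, Double)
probabilities (a, b) = (a * a, b * b)

-- | Simulate the measurement, given a uniform sample from [0, 1).
toss :: Double -> Coin
toss sample
  | sample < pZero = Heads
  | otherwise      = Tails
  where
    (pZero, _) = probabilities (hadamard ketZero)
```

Applying hadamard to ketZero gives both amplitudes the value 1/sqrt 2, so each outcome has probability 1/2 up to floating-point rounding.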

Separation into modules was a preparatory step for improving the performance of the emulator and adding optimization features to the language.

Further reading

Documentation and executable downloads may be found at http://pll.cpsc.ucalgary.ca/lqpl/index.html. The source code, along with a wiki and bug tracker, is available at https://bitbucket.org/BrettGilesUofC/lqpl.

6 Development Tools

6.1 Environments

6.1.1 EclipseFP

| Report by: | JP Moresmau |

| Participants: | building on code from B. Scott Michel, Alejandro Serrano, Thiago Arrais, Leif Frenzel, Thomas ten Cate, Martijn Schrage, Adam Foltzer and others |

| Status: | stable, maintained, and actively developed |

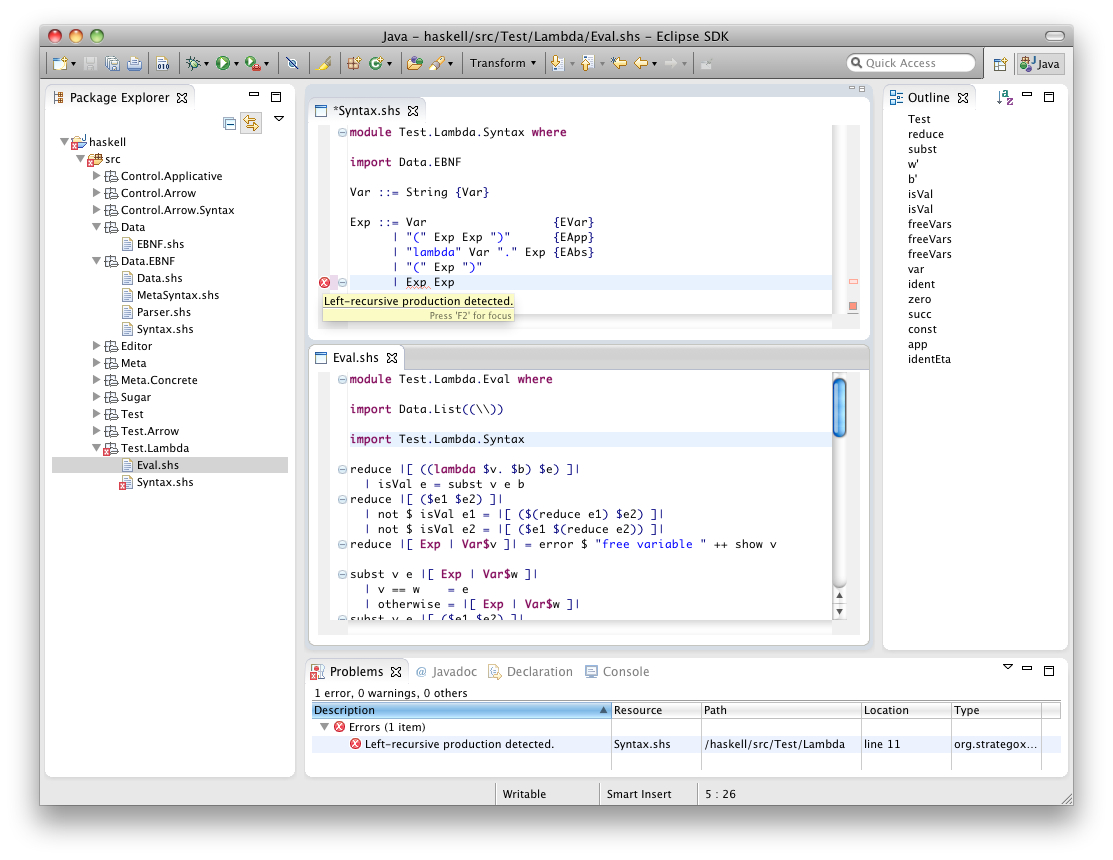

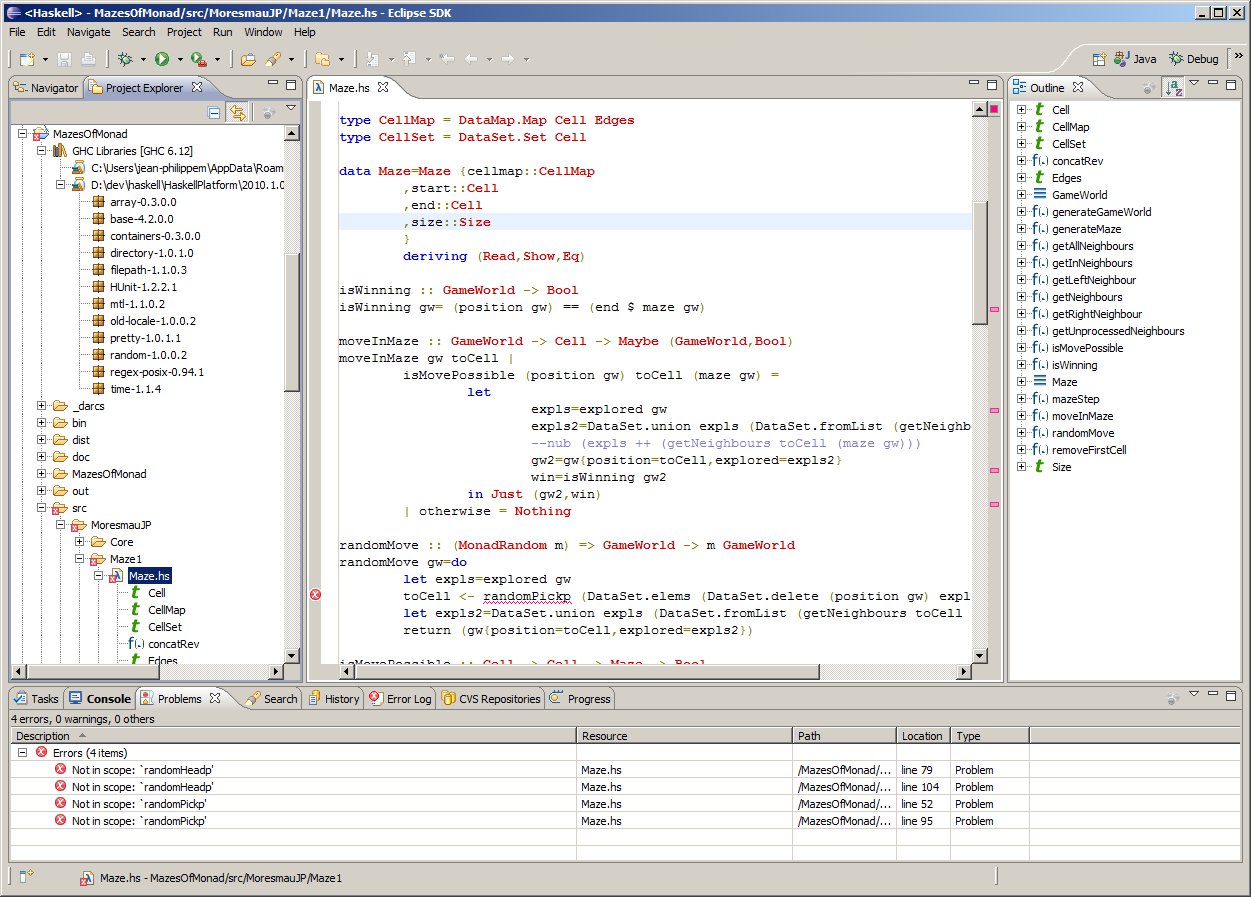

EclipseFP is a set of Eclipse plugins to allow working on Haskell code projects. Its goal is to offer a fully featured Haskell IDE in a platform developers coming from other languages may already be familiar with.

It features Cabal integration (.cabal file editor, uses Cabal settings for compilation, allows the user to install Cabal packages from within the IDE), and GHC integration. Compilation is done via the GHC API, syntax coloring uses the GHC Lexer. Other standard Eclipse features like code outline, folding, and quick fixes for common errors are also provided. HLint suggestions can be applied in one click, and imports can be organized automatically. EclipseFP also allows launching GHCi sessions on any module including extensive debugging facilities: the management of breakpoints and the evaluation of variables and expressions uses the Eclipse debugging framework, and requires no knowledge of GHCi syntax.

It uses the BuildWrapper Haskell tool to bridge between the Java code for Eclipse and the Haskell APIs. It also provides a full package and module browser to navigate the Haskell packages installed on your system, integrated with Hackage. EclipseFP integrates with Haskell test frameworks, most notably HTF, to provide UI feedback on test failures. It can also use cabal-dev to provide sandboxing and project dependencies inside an Eclipse workspace.

The source code is fully open source (Eclipse License) on github and anyone can contribute. Current version is 2.5.2, released in March 2013, and more versions with additional features are planned and actively worked on. Feedback on what is needed is welcome! The website has information on downloading binary releases and getting a copy of the source code. Support and bug tracking is handled through Sourceforge forums and github issues.

Further reading

6.1.2 ghc-mod — Happy Haskell Programming

| Report by: | Kazu Yamamoto |

| Status: | open source, actively developed |

ghc-mod is a backend command to enrich Haskell programming in editors including Emacs and Vim. The ghc-mod package on Hackage includes the ghc-mod command and an Emacs front-end.

Emacs front-end provides the following features:

- Completion: You can complete the names of keywords, modules, classes, functions, types, language extensions, etc.

- Code template: You can insert a code template according to the position of the cursor. For instance, “module Foo where” is inserted at the beginning of a buffer.

- Syntax check: Code lines with error messages are automatically highlighted thanks to flymake. You can display the error message of the current line in another window. hlint can be used instead of GHC to check Haskell syntax.

- Document browsing: You can browse the module documentation for the current line either locally or on Hackage.

- Expression type: You can display the type/information of the expression under the cursor.

There are two Vim plugins:

- ghcmod-vim

- syntastic

Here are new features:

- ghc-mod now analyses library dependencies from a cabal file.

- The “check” subcommand is now faster, unless Template Haskell is used.

- The “debug” subcommand is provided.

- The “browse” subcommand displays more information on functions etc. if the “-d” option is specified.

Further reading

Heat is an interactive development environment (IDE) for learning and teaching Haskell. Heat was designed for novice students learning the functional programming language Haskell. Heat provides a small number of supporting features and is easy to use. Heat is distributed as a single, portable Java jar-file and works on top of GHCi.

Version 5.05, with small improvements and bug-fixes, was released at the end of April 2013.

Heat provides the following features:

- Editor for a single module with syntax-highlighting and matching brackets.

- Shows the status of compilation: non-compiled; compiled with or without error.

- Interpreter console that highlights the prompt and error messages.

- If compilation yields an error, then the relevant source line is highlighted and no further expression can be evaluated in the console until the source has been changed and successfully recompiled.

- A tree structure provides a program summary, giving definitions of types and types of functions.

- Automatic checking of either Boolean or QuickCheck properties of a program; results shown in summary.
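As a small illustration of the last item, the module below contains the two kinds of property mentioned there, written using only the Prelude. Everything in it is an assumption made for this sketch: the exercise function `insertSorted`, the helper `isSorted`, and the `prop_` naming prefix (borrowed from the common QuickCheck convention) are not taken from Heat's documentation. The second property has the shape QuickCheck expects, a function returning `Bool`, but it is checked here on a few hand-picked inputs so that the example has no library dependencies.

```haskell
module Main where

-- Hypothetical student exercise: insert a value into a sorted list.
insertSorted :: Int -> [Int] -> [Int]
insertSorted x [] = [x]
insertSorted x (y:ys)
  | x <= y    = x : y : ys
  | otherwise = y : insertSorted x ys

-- Helper (also hypothetical): is the list in non-decreasing order?
isSorted :: [Int] -> Bool
isSorted xs = and (zipWith (<=) xs (drop 1 xs))

-- A Boolean property: a plain value of type Bool.
prop_insertExample :: Bool
prop_insertExample = insertSorted 3 [1, 2, 4, 5] == [1, 2, 3, 4, 5]

-- A QuickCheck-style property: a function from arbitrary inputs to Bool.
-- It holds trivially when the input list is not sorted to begin with.
prop_insertKeepsSorted :: Int -> [Int] -> Bool
prop_insertKeepsSorted x xs =
  not (isSorted xs) || isSorted (insertSorted x xs)

-- Hand-picked inputs standing in for randomly generated test cases.
sampleInputs :: [(Int, [Int])]
sampleInputs = [(0, []), (3, [1, 2, 4]), (9, [1, 2]), (2, [5, 1])]

main :: IO ()
main = do
  print prop_insertExample
  print (all (uncurry prop_insertKeepsSorted) sampleInputs)
```

With QuickCheck installed, the second property could instead be passed to `quickCheck`, which generates the inputs at random.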

Further reading

6.2 Code Management

Darcs is a distributed revision control system written in Haskell. In Darcs, every copy of your source code is a full repository, which allows for full operation in a disconnected environment, and also allows anyone with read access to a Darcs repository to easily create their own branch and modify it with the full power of Darcs’ revision control. Darcs is based on an underlying theory of patches, which allows for safe reordering and merging of patches even in complex scenarios. For all its power, Darcs remains a very easy-to-use tool for everyday use because it follows the principle of keeping simple things simple.

Our most recent release, Darcs 2.8.4 (with GHC 7.6 support), was in February 2013. Some key changes in Darcs 2.8 include a faster and more readable darcs annotate, a darcs obliterate -O which can be used to conveniently “stash” patches, and hunk editing for the darcs revert command.

Our work on the next Darcs release continues. In our sights are the new darcs rebase command (for merging and amending patches that would be hard to do with patch theory alone), the patch index optimisation (for faster local lookups on repositories with long histories), and the packs optimisation (for faster darcs get).

To accompany this work are some very interesting lines of development being contributed by Sebastian Fischer on behalf of factis research. The work is aimed at improving the patch management experience for teams of Darcs users, first with a mechanism to track the history of patches (who pulled them into the repository, or removed them, when) and second with possible commands that would allow patches to be easily split apart and merged together. We’re very grateful to factis for funding this development work and look forward to using it ourselves.

Meanwhile, the Darcs hub at http://hub.darcs.net continues to grow in robustness and usage (at the time of this writing, 254 accounts, 294 repos). The Darcs hub is based on work by Simon Michael improving the original Darcsden by Alex Suraci, resulting in a 1.0 release earlier last year. Feedback and help pushing forward this new Darcs hosting option will be greatly appreciated!

Darcs is free software licensed under the GNU GPL (version 2 or greater). Darcs is a proud member of the Software Freedom Conservancy, a US tax-exempt 501(c)(3) organization. We accept donations at http://darcs.net/donations.html.

Further reading

DarcsWatch is a tool to track the state of Darcs (→6.2.1) patches that have been submitted to some project, usually by using the darcs send command. It allows both submitters and project maintainers to get an overview of patches that have been submitted but not yet applied.

DarcsWatch continues to be used by the xmonad project, the Darcs project itself, and a few developers. At the time of writing (April 2013), it was tracking 39 repositories and 4579 patches submitted by 244 users.

Further reading

6.2.3 cab — A Maintenance Command of Haskell Cabal Packages

| Report by: | Kazu Yamamoto |

| Status: | open source, actively developed |

cab is a MacPorts-like maintenance command for Haskell cabal packages. Some parts of this program are wrappers around ghc-pkg, cabal, and cabal-dev.

If you are regularly confused by inconsistencies between ghc-pkg and cabal, or if you want a way to check all outdated packages or to remove outdated packages recursively, this command helps you.

cab now provides the benchmark option (“-b”) for the “conf” subcommand.

Further reading

6.3 Deployment

Background

Cabal is the standard packaging system for Haskell software. It specifies a standard way in which Haskell libraries and applications can be packaged so that it is easy for consumers to use them, or re-package them, regardless of the Haskell implementation or installation platform.

Hackage is a distribution point for Cabal packages. It is an online archive of Cabal packages which can be used via the website and client-side software such as cabal-install. Hackage enables users to find, browse and download Cabal packages, plus view their API documentation.

cabal-install is the command line interface for the Cabal and Hackage system. It provides a command line program cabal which has sub-commands for installing and managing Haskell packages.

Recent progress

The Cabal packaging system has always faced growing pains. We have been through several cycles in which we faced chronic problems and made major improvements that bought us a year or two of breathing space, while package authors and users became ever more ambitious and started to bump up against the limits again. In the last few years we have gone from a situation where 10 dependencies might be considered a lot to one where the major web frameworks have 100+ dependencies, and we are again facing chronic problems.

The Cabal/Hackage maintainers and contributors have been pursuing a number of projects to address these problems:

The IHG sponsored Well-Typed (→8.1) to work on cabal-install, resulting in a new package dependency constraint solver. This was incorporated into the cabal-install-0.14 release in the spring and is now in the latest Haskell Platform release. The new dependency solver does a much better job of finding install plans. In addition, the cabal-install tool now warns when installing new packages would break existing packages, which is a useful partial solution to the problem of breaking packages.

We had two Google Summer of Code projects on Cabal this year, focusing on solutions to other aspects of our current problems. The first is a project by Mikhail Glushenkov (and supervised by Johan Tibell) to incorporate sandboxing into cabal-install. In this context sandboxing means that we can have independent sets of installed packages for different projects. This goes a long way towards alleviating the problem of different projects needing incompatible versions of common dependencies. There are several existing tools, most notably cabal-dev, that provide some sandboxing facility. Mikhail’s project was to take some of the experience from these existing tools (most of which are implemented as wrappers around the cabal-install program) and to implement the same general idea, but properly integrated into cabal-install itself. We expect the results of this project will be incorporated into a cabal-install release within the next few months.

The other Google Summer of Code project this year, by Philipp Schuster (and supervised by Andres Löh), is also aimed at the same problem: that of different packages needing inconsistent versions of the same common dependencies, or equivalently the current problem that installing new packages can break existing installed packages. The solution is to take ideas from the Nix package manager for a persistent non-destructive package store. In particular it lifts an obscure-sounding but critical limitation: that of not being able to install multiple instances of the same version of a package, built against different versions of their dependencies. This is a big long-term project. We have been making steps towards it for several years now. Philipp’s project has made another big step, but there’s still more work before it is ready to incorporate into ghc, ghc-pkg and cabal.
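To make the limitation concrete, here is a minimal sketch of a non-destructive store in which an installed instance is identified by its name, its version and the exact instances it was built against. All types and functions (`Instance`, `instanceId`, `register`) are hypothetical names invented for this illustration and do not reflect the data model of Cabal, ghc-pkg or Philipp's project; the package names and version numbers are only examples, and the identifier is a rendered string where a real store would more likely use a hash.

```haskell
module Main where

import Data.List (intercalate, sort)

-- Hypothetical model, for illustration only.
type PackageName = String
type Version     = [Int]
type InstanceId  = String

-- An installed instance records exactly which instances it was built against.
data Instance = Instance
  { instName    :: PackageName
  , instVersion :: Version
  , instDeps    :: [InstanceId]
  } deriving (Eq, Show)

-- The identifier covers name, version and dependencies, so the same
-- package version built against different dependencies gets a different id.
instanceId :: Instance -> InstanceId
instanceId (Instance name version deps) =
  name ++ "-" ++ intercalate "." (map show version)
       ++ "[" ++ intercalate "," (sort deps) ++ "]"

type Store = [(InstanceId, Instance)]

-- Non-destructive registration: an existing entry is never overwritten.
register :: Instance -> Store -> Store
register inst store
  | any ((== iid) . fst) store = store
  | otherwise                  = (iid, inst) : store
  where
    iid = instanceId inst

-- Two versions of one dependency, and the same version of a second
-- package built once against each of them.
exampleStore :: Store
exampleStore = foldr register [] [parsecB, parsecA, textNew, textOld]
  where
    textOld = Instance "text" [0, 11, 2] []
    textNew = Instance "text" [0, 11, 3] []
    parsecA = Instance "parsec" [3, 1, 3] [instanceId textOld]
    parsecB = Instance "parsec" [3, 1, 3] [instanceId textNew]

main :: IO ()
main = do
  mapM_ (putStrLn . fst) exampleStore
  -- Number of coexisting instances of the same parsec version.
  print (length [() | (_, i) <- exampleStore, instName i == "parsec"])
```

In a store keyed only by name and version, registering the second `parsec` instance would replace the first, which is how installing a new package can break ones that are already installed.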

Looking forward

Johan Tibell and Bryan O’Sullivan have volunteered as new release managers for Cabal. Bryan moved all the tickets from our previous trac instance into github, allowing us to move all the code to github. Johan managed the latest release and has been helping with managing the inflow of patches. Our hope is that these changes will increase the number of contributions and give us more maintainer time for reviewing and integrating those contributions. Initial indications are positive. Now is a good time to get involved.

The IHG is currently sponsoring Well-Typed to work on getting the new Hackage server ready for switchover, and helping to make the switchover actually happen. We have recruited a few volunteer administrators for the new site. The remaining work consists of mundane but important tasks like making sure all the old data can be imported, and making sure the data backup system is comprehensive. Initially the new site will have just a few extra features compared to the old one. Once we get past the deployment hurdle we hope to start getting more contributions for new features. The code is structured so that features can be developed relatively independently, and we intend to follow Cabal and move the code to github.

We would like to encourage people considering contributing to take a look at the bug tracker on github, take part in discussions on tickets and pull requests, or submit their own. The bug tracker is reasonably well maintained and it should be relatively clear to new contributors what is in need of attention and which tasks are considered relatively easy. For more in-depth discussion there is also the cabal-devel mailing list.

Further reading

- Cabal homepage: http://www.haskell.org/cabal

- Hackage package collection: http://hackage.haskell.org/

- Bug tracker: https://github.com/haskell/cabal/

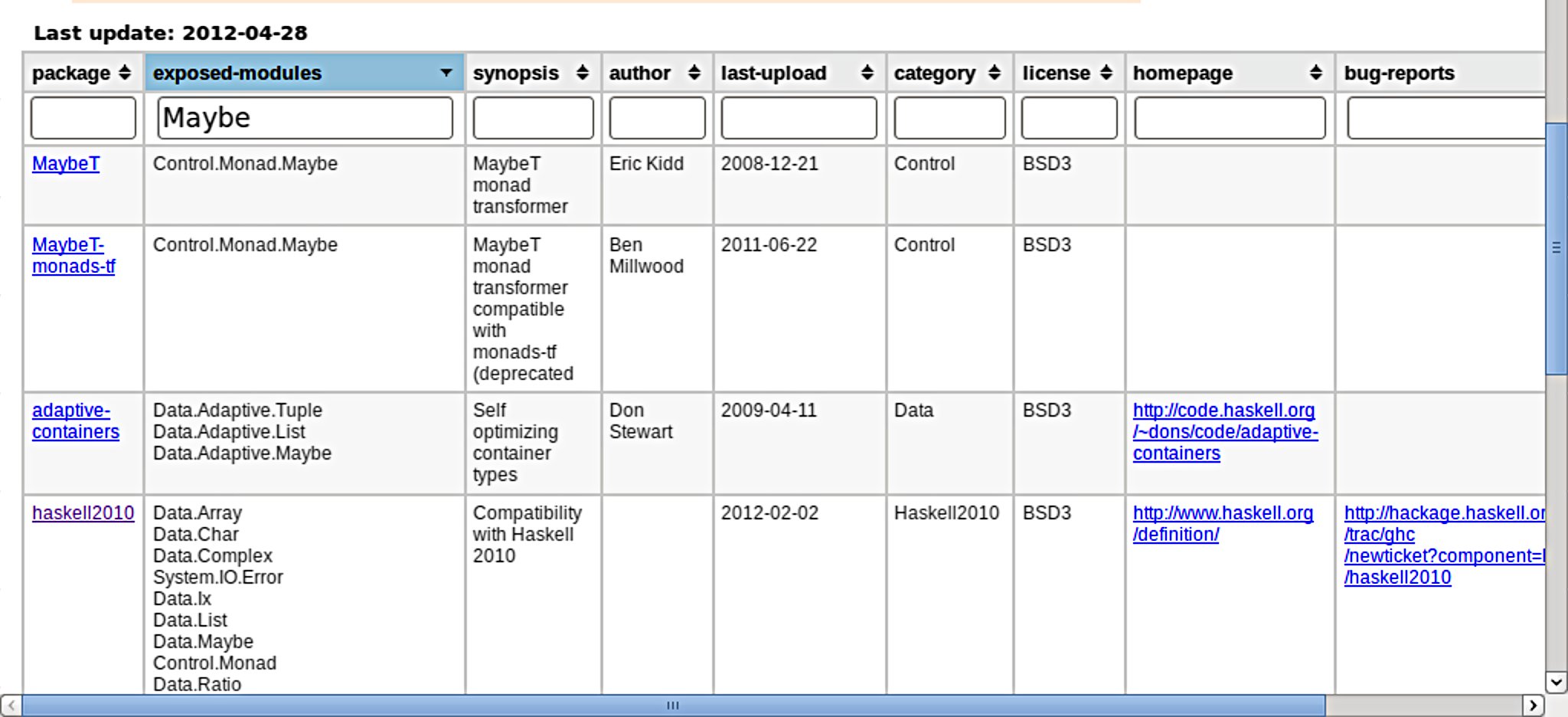

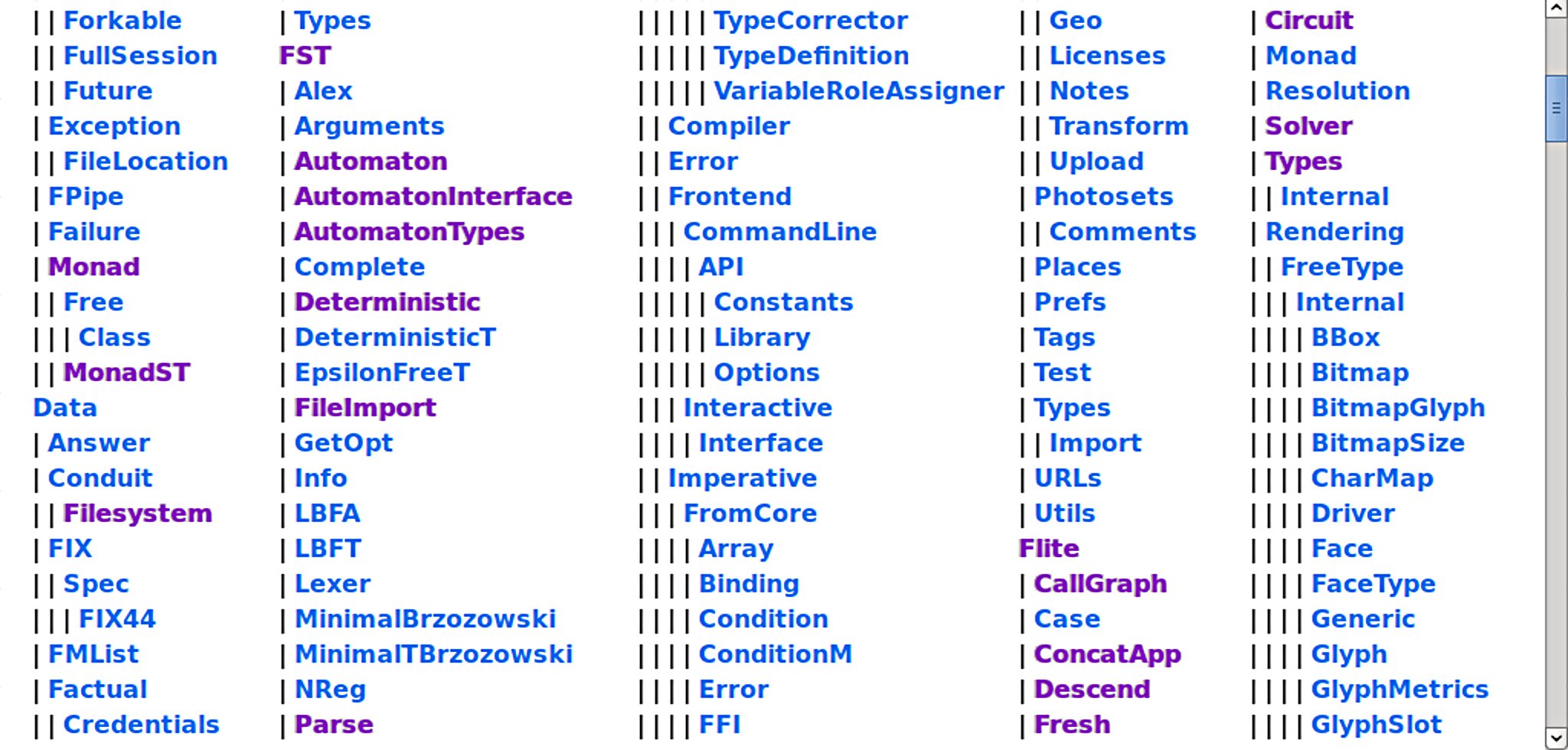

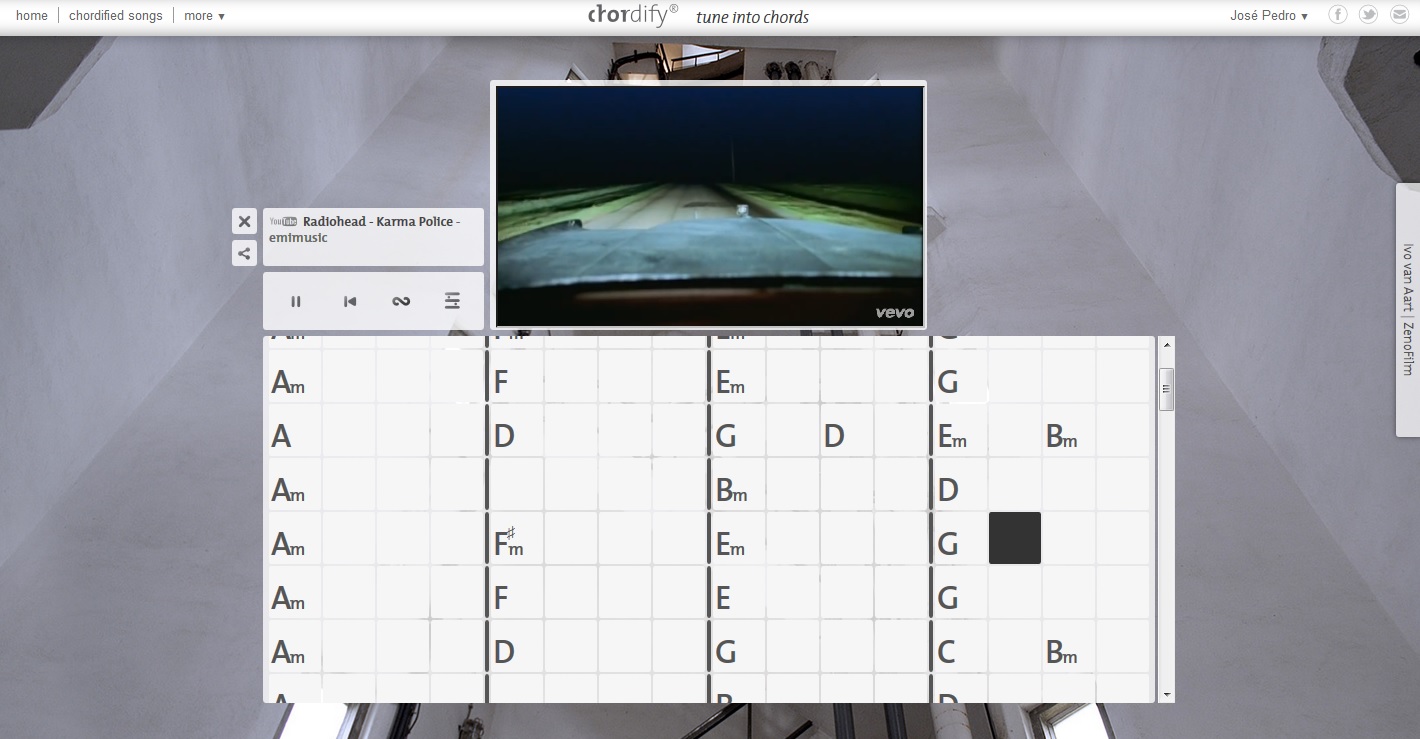

Portackage (http://fremissant.net/portackage) is a web interface to all of http://hackage.haskell.org, which at the time of writing includes some 4000 packages exposing over 17000 modules. There are package and module views, as seen in the screenshots.

The package view includes links to the package, homepage, and bug tracker when available. Each name in the module tree view links to the Haddock API page. Control-hovering will show the fully-qualified name in a tooltip.

Portackage is only a few days old; imminent further work includes:

- Tree branches will be collapsed by default.

- Cookies (as well as a server DB) will maintain persistent state of which nodes you have open. This information carries value, both in the cost of reconstructing it manually and as a personal mnemonic: if nodes were collapsed again, you would forget where things were instead of having them right there, filtered out.

- A flat list of modules with the filtering text input field would be good, but the full list of modules is too large for the present naive JavaScript.

The code itself is mostly Haskell, but is still too green to expose on Hackage.

6.4 Others