Index

Haskell Communities and Activities Report

Thirty Second Edition – May 2017

Mihai Maruseac (ed.)

Chris Allen

Christopher Anand

Moritz Angermann

Francesco Ariis

Heinrich Apfelmus

Emil Axelsson

Gershom Bazerman

Doug Beardsley

Ingo Blechschmidt

Emanuel Borsboom

Jan Bracker

Jeroen Bransen

Joachim Breitner

Björn Buckwalter

Erik de Castro Lopo

Manuel M. T. Chakravarty

Olaf Chitil

Alberto Gomez Corona

Nils Dallmeyer

Tobias Dammers

Kei Davis

Dimitri DeFigueiredo

Richard Eisenberg

Tom Ellis

Maarten Faddegon

Dennis Felsing

Olle Fredriksson

Phil Freeman

PALI Gabor Janos

Ben Gamari

Michael Georgoulopoulos

Andrew Gill

Mikhail Glushenkov

Mark Grebe

Adam Gundry

Jurriaan Hage

Bastiaan Heeren

Sylvain Henry

Joey Hess

Guillaume Hoffmann

Graham Hutton

Nicu Ionita

Patrik Jansson

Anton Kholomiov

Harendra Kumar

Oleg Kiselyov

Rob Leslie

Ben Lippmeier

Andres Löh

Rita Loogen

Tim Matthews

Gilberto Melfe

Simon Michael

Andrey Mokhov

Dino Morelli

Antonio Nikishaev

Wisnu Adi Nurcahyo

Ulf Norell

Ivan Perez

Jens Petersen

Bryan Richter

Herbert Valerio Riedel

Sibi Prabakaran

Alexey Radkov

Michael Schröder

Jeremy Shaw

Christian Höner zu Siederdissen

Jeremy Singer

Gideon Sireling

Michael Snoyman

David Sorokin

Lennart Spitzner

Yuriy Syrovetskiy

Jonathan Thaler

Henk-Jan van Tuyl

Michael Walker

Ingo Wechsung

Li-yao Xia

Kazu Yamamoto

Yuji Yamamoto

Brent Yorgey

Marco Zocca

Stack Builders

Preface

This is the 32nd edition of the Haskell Communities and Activities Report. It

comes shortly after the 31st edition because that one was delayed until

December. It is my intention to keep the next editions on schedule.

This report has 125 entries, 67 of which were touched between December and

today. Out of these, 16 are new. As usual, fresh entries – either completely

new or old entries which have been revived after a short temporarily

disappearance – are formatted using a blue background, while updated entries

have a header with a blue background.

Since my goal is to keep only entries which are under active development

(defined as receiving an update in a 3-editions sliding window), contributions

from 2015 and before have been completely removed. However, they can be

resurfaced in the next edition, should a new update be sent for them. For the

30th edition, for example, we had around 20 new entries which resurfaced. We

hope to see more entries revived and updated in the next edition.

A call for new HCAR entries and updates to existing ones will be issued on the

Haskell mailing lists in late September/early October.

Work on a new, modern HCAR pipeline continues to develop. Once that is done,

the next edition will look different, allowing for more expressivity. Details

will follow on the usual communication channels, once they become available.

Now enjoy the current report and see what other Haskellers have been up to lately.

Any feedback is very welcome, as always.

Mihai Maruseac, Leap Year Technologies, US

<hcar at haskell.org>

1 Community

1.1 Haskell’ — Haskell 2020

Haskell’ is an ongoing process to produce revisions to the Haskell

standard, incorporating mature language extensions and well-understood

modifications to the language. New revisions of the language are

expected once per year.

The goal of the Haskell Language committee together with the Core Libraries

Committee is to work towards a new Haskell 2020 Language Report. The Haskell

Prime Process relies on everyone in the community to help by

contributing proposals which the committee will then evaluate and, if

suitable, help formalise for inclusion. Everyone interested in participating

is also invited to join the haskell-prime mailing list.

Four years (or rather ~3.5 years) from now may seem like a long

time. However, given the magnitude of the task at hand, to discuss,

formalise, and implement proposed extensions (taking into account the recently

enacted three-release-policy) to the Haskell Report, the process shouldn’t

be rushed. Consequently, this may even turn out to be a tight schedule after

all. However, it’s not excluded there may be an interim revision of the

Haskell Report before 2020.

Based on this schedule, GHC 8.8 (likely to be released early 2020) would be

the first GHC release to feature Haskell 2020 compliance. Prior GHC releases

may be able to provide varying degree of conformance to drafts of the upcoming

Haskell 2020 Report.

The Haskell Language 2020 committee starts out with 20 members which

contribute a diversified skill-set. These initial members also represent the

Haskell community from the perspective of practitioners, implementers,

educators, and researchers.

The Haskell 2020 committee is a language committee; it will focus its efforts

on specifying the Haskell language itself. Responsibility for the libraries

laid out in the Report is left to the Core Libraries Committee (CLC).

Incidentally, the CLC still has an available seat; if you would like to

contribute to the Haskell 2020 Core Libraries you are encouraged to apply for

this opening.

Haskellers is a site designed to promote Haskell as a language for use in the

real world by being a central meeting place for the myriad talented Haskell

developers out there. It allows users to create profiles complete with skill

sets and packages authored and gives employers a central place to find Haskell

professionals.

Haskellers is a web site in maintenance mode. No new features are being added,

though the site remains active with many new accounts and job postings

continuing. If you have specific feature requests, feel free to send them in

(especially with pull requests!).

Haskellers remains a site intended for all members of the Haskell community,

from professionals with 15 years experience to people just getting into the

language.

Further reading

http://www.haskellers.com/

2 Books, Articles, Tutorials

2.1 Oleg’s Mini Tutorials and Assorted Small Projects

The collection of various Haskell mini tutorials and assorted small projects

(http://okmij.org/ftp/Haskell/) has received a three-part addition:

Implementing Explicit and Finding Implicit Sharing in Embedded

DSLs

The web page collects the materials for the tutorial on sharing and common

subexpression elimination in embedded domain-specific language compilers. The

tutorial was presented at DSL 2011: IFIP Working Conference on Domain-Specific

Languages (6-8 September 2011, Bordeaux, France). The material includes the

extensively commented Haskell source code, with solutions to selected

exercises.

As the running example, the tutorial uses a DSL of arithmetic expressions with

the strong flavor of hardware description languages. In fact, the output of

the DSL compilation is essentially a netlist (the list of primitive gates and

connections among them). The tutorial reviews several ways to introduce

sharing, so important in hardware and so difficult to represent in functional

programming. The DSL approach advocated in the tutorial is probably the best

compromise, hiding the essentially imperative nature of sharing.

Read the

tutorial online.

Explicit sharing across several expressions

We demonstrate how to add explicit sharing – a let-like form – to a

DSL without introducing large notational inconvenience and, specifically,

without re-writing the code in a monadic style. Recall that in pure functional

code sharing is not observable: it is an imperative feature. The challenge of

explicit sharing in an embedded DSL was posed on Haskell-Cafe, back in 2008.

Read the

tutorial online.

Historical note on hash consing

Hash-consing reminds one of lisp (where it has been used widely). However,

hash-consing has been invented before lisp! Hash-consing – as well as hash

functions, investigation of their properties and the algorithm for compiling

arithmetic expressions (still used, e.g., in OCaml compiler) – were first

described in the paper by A.P.Ershov published in 1958. Although published in

Russian, the English translation appeared in Communications of the ACM later

that year: the unheard of publishing speed for those times.

Read

the tutorial online.

The School of Haskell has been available since early 2013. It’s main two

functions are to be an education resource for anyone looking to learn Haskell

and as a sharing resources for anyone who has built a valuable tutorial. The

School of Haskell contains tutorials, courses, and articles created by both

the Haskell community and the developers at FP Complete. Courses are available

for all levels of developers.

Since the last HCAR, School of Haskell has been open sourced, and is available

from its own domain name (schoolofhaskell.com). In addition, the

underlying engine powering interactive code snippets, ide-backend,

has also been released as open source.

Currently 3150 tutorials have been created and 441 have been officially

published. Some of the most visited tutorials are Text Manipulation,

Attoparsec, Learning Haskell at the SOH, Introduction to

Haskell - Haskell Basics, and A Little Lens Starter Tutorial. Over the

past year the School of Haskell has averaged about 16k visitors a month.

All Haskell programmers are encouraged to visit the School of Haskell and to

contribute their ideas and projects. This is another opportunity to showcase

the virtues of Haskell and the sophistication and high level thinking of the

Haskell community.

Further reading

https://www.schoolofhaskell.com/

2.3 Haskell Programming from first principles, a book forall

Haskell Programming is a book that aims to get people from the barest basics

to being well-grounded in enough intermediate Haskell concepts that they can

self-learn what would be typically required to use Haskell in production or to

begin investigating the theory and design of Haskell independently. We’re

writing this book because many have found learning Haskell to be difficult,

but it doesn’t have to be. What particularly contributes to the good results

we’ve been getting has been an aggressive focus on effective pedagogy and

extensive testing with reviewers as well as feedback from readers. My coauthor

Julie Moronuki is a linguist who’d never programmed before learning Haskell

and authoring the book with me.

Haskell Programming is currently content complete and is approximately 1,200 pages

long in the v0.12.0 release. The book is available for sale during the early

access, which includes the 1.0 release of the book in PDF. We’re still editing the material. We expect to release the final version of the book this winter.

Further reading

Learning Haskell is a new Haskell tutorial that integrates text and

screencasts to combine in-depth explanations with the hands-on experience of

live coding. It is aimed at people who are new to Haskell and functional

programming. Learning Haskell does not assume previous programming

expertise, but it is structured such that an experienced programmer who is new

to functional programming will also find it engaging.

Learning Haskell combines perfectly with the Haskell for Mac programming

environment, but it also includes instructions on working with a conventional

command-line Haskell installation. It is a free resource that should benefit

anyone who wants to learn Haskell.

Learning Haskell is still work in progress with eight chapters already

available. The current material covers all the basics, including higher-order

functions and algebraic data types. Learning Haskell

is approachable and fun – it includes topics such as illustrating various

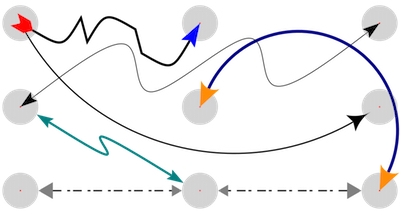

recursive structures using fractal graphics, such as this fractal tree.

Further chapters will be made available as we complete them.

Further reading

2.5 Programming in Haskell - 2nd Edition

Overview

Haskell is a purely functional language that allows programmers to rapidly

develop software that is clear, concise and correct. This book is aimed at a

broad spectrum of readers who are interested in learning the language,

including professional programmers, university students and high-school

students. However, no programming experience is required or assumed, and all

concepts are explained from first principles with the aid of carefully chosen

examples and exercises. Most of the material in the book should be accessible

to anyone over the age of around sixteen with a reasonable aptitude for

scientific ideas.

Structure

The book is divided into two parts. Part I introduces the basic concepts of

pure programming in Haskell and is structured around the core features of the

language, such as types, functions, list comprehensions, recursion and

higher-order functions. Part II covers impure programming and a range of more

advanced topics, such as monads, parsing, foldable types, lazy evaluation and

reasoning about programs. The book contains many extended programming

examples, and each chapter includes suggestions for further reading and a

series of exercises. The appendices provide solutions to selected exercises,

and a summary of some of the most commonly used definitions from the Haskell

standard prelude.

What’s New

The book is an extensively revised and expanded version of the first edition.

It has been extended with new chapters that cover more advanced aspects of

Haskell, new examples and exercises to further reinforce the concepts being

introduced, and solutions to selected exercises. The remaining material has

been completely reworked in response to changes in the language and feedback

from readers. The new edition uses the Glasgow Haskell Compiler (GHC), and is

fully compatible with the latest version of the language, including recent

changes concerning applicative, monadic, foldable and traversable types.

Further reading

http://www.cs.nott.ac.uk/~pszgmh/pih.html

2.6 Stack Builders Tutorials

At Stack Builders, we consider it our mission not only to develop robust and

reliable applications for clients, but to help the industry as a whole by

lowering the barrier to entry for technology that we consider important. We

hope that you enjoy our tutorials – we’re sure you’ll find them useful. Any

suggestions for future publications, don’t hesitate to contact us.

Further reading

The School of Computing Science at the University of Glasgow partnered with

the FutureLearn platform to deliver a six week massive open online course

(MOOC) entitled Functional Programming in Haskell. The course goes

through the basics of the Haskell language, using short videos, an online

REPL, multiple choice quizzes and articles.

The first run of the course completed on 30 Oct 2016. Over 6000 people signed

up for the course, 50%of whom actively engaged with the materials. Around

800 students completed the full course. The most engaging aspect of the

activity was the comradely atmosphere in the discussion forums.

The course will run again, probably in Sep–Oct 2017. Visit

our site

to register your interest.

We hope to refine the learning materials, based on the first run of the

course. We also intend to write up our experiences as a scholarly report.

Further reading

https://www.futurelearn.com/courses/functional-programming-haskell/1

3 Implementations

3.1 The Glasgow Haskell Compiler

By the time of publication GHC will be nearing release of its 8.2.1 release.

While GHC 8.0 was a feature-oriented release, the past year of GHC development

has been focused on stabilization and consolidation of existing features.

This has included an effort to reduce compilation times, improve code

generation, and place the levity polymorphism story introduced in GHC 8.0 on

a stable theoretical footing.

While priorities for the 8.4 release have not yet been discussed, they will very

likely include further focus on compilation time reduction, improved error

messages, and additional improvements to code generation.

3.1.1 Major changes in GHC 8.2

While the emphasis of 8.2 is on performance, stability, and consolidation, it

also includes a number of new features.

Libraries, source language, and type system

- Indexed Typeable representations. While GHC has long supported

runtime type reflection through the Typeable typeclass, its current

incarnation requires careful use, providing little in the way of type-safety.

For this reason the implementation of types like Data.Dynamic must be

implemented in terms of unsafeCoerce with no compiler verification.

GHC 8.2 will address this by introducing indexed type representations,

leveraging the type-checker to verify many programs using type reflection.

This allows facilities like Data.Dynamic to be implemented in a fully type-safe

manner. See the paper (https://research.microsoft.com/en-us/um/people/simonpj/papers/haskell-dynamic/)

for a description of the proposed interface and the Wiki (https://ghc.haskell.org/trac/ghc/wiki/Typeable/BenGamari) for

current implementation status.

- Backpack. Backpack has merged with GHC, Cabal and

cabal-install, allowing you to write libraries which are parametrized

by signatures, letting users decide how to instantiate them at a later point in

time. If you want to just play around with the signature language, there is a

new major mode ghc –backpack; at the Cabal syntax level, there are two

new fields signatures and mixins which permit you to define parametrized

packages, and instantiate them in a flexible way. More details are on the

Backpack wiki page (https://ghc.haskell.org/trac/wiki/Backpack).

- Levity polymorphism. GHC 8.0 reworked GHC’s kind system

introducing the notion of levity to describe a type’s runtime

representation. However, the ability to write functions which were

polymorphic over levity was purposefully withheld.

While GHC’s current compilation model doesn’t allow arbitrary levity

polymorphism, GHC 8.2 enables certain classes of polymorphism which were

either disallowed or broken in 8.0. See the paper for details

(https://www.microsoft.com/en-us/research/publication/levity-polymorphism/).

- deriving strategies. GHC now provides the programmer with a

precise mechanism to distinguish between the three ways to derive typeclass

instances: the usual way, the GeneralizedNewtypeDeriving way, and the

DeriveAnyClass way. See the DerivingStrategies Wiki page for

more details

(https://ghc.haskell.org/trac/wiki/DerivingStrategies).

- New classes in base. The Bifoldable, and

Bitraversable typeclasses are now included in the base

library.

- Unboxed sums. GHC 8.2 has a new language extension,

UnboxedSums, that enables unboxed representation for non-recursive sum

types. GHC 8.2 doesn’t use unboxed sums automatically, but the extension comes

with new syntax, so users can manually unpack sums. More details can be found in

the Wiki page

(https://ghc.haskell.org/trac/ghc/wiki/UnpackedSumTypes).

Runtime system

- Compact regions. This runtime system feature allows a

referentially “closed” set of heap objects to be collected into a

“compact region,” allowing cheaper garbage collection, sharing of

heap-objects between processes, and the possibility of inexpensive

serialization. See the paper

(http://ezyang.com/papers/ezyang15-cnf.pdf) for details.

- Better profiling support. The cost-center profiler now better

integrates with the GHC event-log. Heap profile samples can now be dumped to the

event log, allowing heap behavior to be more easily correlated with other

program events. Moreover, the cost center stack output (e.g. .prof

files) can now be produced in a machine-readable JSON format for easier

integration with external tooling.

- More robust DWARF output. GHC 8.2 will be the first release

with reliable support for DWARF debugging information. A number of bugs

leading to incorrect debug information for foreign calls have been fixed,

meaning it should now be safe to enable debugging information in production

builds.

With stable DWARF support comes a number of opportunities for new

performance analysis and debugging tools (e.g. statistical profiling, cheap

execution stacks). As GHC’s debugging information improves, we expect to see

tooling developed to support these applications. See the DWARF status page on

the GHC Wiki (https://ghc.haskell.org/trac/ghc/wiki/DWARF/Status)

for further information.

- Better support for NUMA platforms. Machines with non-uniform

memory access costs are becoming more and more common as core counts continue to

rise. The runtime system is now better equipped to efficiently run on such

systems.

- Experimental changes to the scheduler that enable the number of

threads used for garbage collection to be lower than the -N setting.

- Support for StaticPointers in GHCi. At long last

programs making use of the StaticPointers language extension will have

first-class bytecode interpreter support, allowing such programs to be loaded

into GHCi.

- Reduced CPU usage at idle. A long-standing regression resulting

in unnecessary wake-ups in an otherwise idle program was fixed. This should

lower CPU utilization and improve power consumption for some programs.

Miscellaneous

- Compiler Determinism. GHC 8.0.2 is the first release of GHC

which produces deterministic interface files. This helps consumers like Nix and

caching build systems, and presents new opportunities for compile-time

improvements. See the Wiki

(https://ghc.haskell.org/trac/ghc/wiki/DeterministicBuilds) for

details.

3.1.1.1 Development updates and acknowledgments

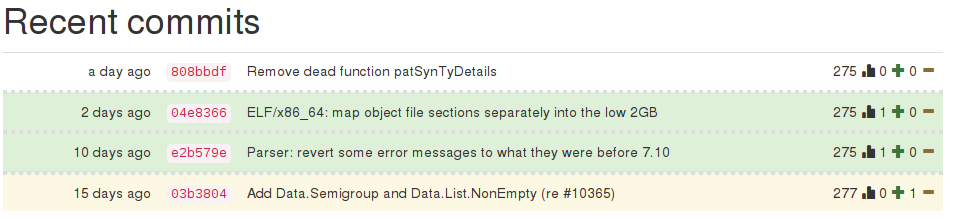

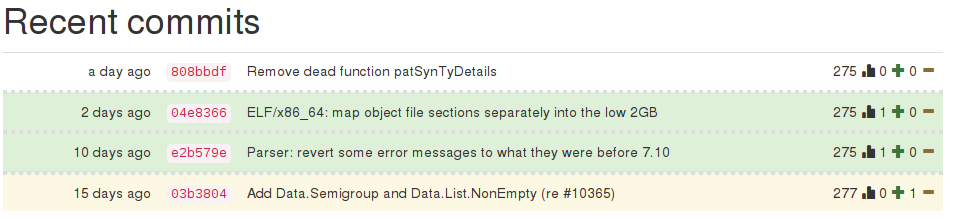

Since the 8.0 release nearly a year ago, GHC’s development community has

continued to grow. Over the last year we have seen sixty new

developers submit patches, many of whom have become regular contributors. These

include new generations of researchers, as well as, increasingly, regular

Haskell users from industry and elsewhere. GHC has had an exciting few months...

Moritz Angermann has been hard at work improving cross-compilation and bringing

remoting support to the -fexternal-interpreter feature introduced in GHC

8.0. He has also contributed a number of patches improving support for iOS and

Android targets and has been pondering how to enable Template Haskell during

cross-compilation.

Ryan Scott and Matthew Pickering have been fixing bugs all over the compiler, in

addition to their respective work on deriving strategies and the new

COMPLETE pragma mentioned above.

Tamar Christina has been hard at work as our resident Windows expert. In

addition to introducing split sections support, reworking dynamic linking,

fixing toolchain breakage due to the recent Windows 10 Creator’s Update, and

maintaining GHC packaging for the Chocolatey package manager, he is also an

invaluable source of knowledge.

Andrey Mokhov and Jose Calderon have been continuing work on Hadrian, GHC’s new

build system built on Shake. Hadrian is already very usable, lacking only in

support for a some non-standard build configurations, building documentation,

and producing binary and source distributions. These would be great, fairly

self-contained projects for any interested developer with a Haskell

experience. Contact Andrey if any of these sound intriguing to you.

Michal Terepeta has been performing a long-overdue grooming of GHC’s

nofib benchmark suite. This suite is one of the primary windows into

the performance of the compiler, yet has fallen into somewhat of a state of

disrepair. Michal has been fixing broken tests, refactoring the build system,

and generally making nofib a nicer place. In addition, he has been

gradually cleaning up native code generator, revisiting GHC’s use of the

hoopl library. Also working in the native code generator is Thomas

Jakway, who has been working on improving the register allocator’s treatment of

loops.

Another contributor working on runtime performance is Luke Maurer, who has

contributed a rework of GHC’s treatment of join points, one of the major

features of 8.2. Join points are an essential optimization which allows GHC to

eliminate closure allocation for some types of calls. Prior to GHC 8.2 the join

points optimization was fragile due to the lack of a sound theoretical footing.

With Luke’s work, GHC is much better at preserving join point opportunities,

leading to improved code generation in many performance critical settings. More

details can be found in his paper

(https://www.microsoft.com/en-us/research/publication/compiling-without-continuations).

As always, this is just a fraction of the contributions which we’ve seen in the

past months. GHC improves through the efforts of everyone who offers

patches, bug reports, code review, and discussion. If you have contributed any

of these in the past year, thank you!

GHC HQ has also taken a number of steps in the past months to try to improve

contributor workflow. Our new proposal

process (https://github.com/ghc-proposals/ghc-proposals)

officially began in December and has been extremely active ever since: the

ghc-proposals repository now

has over fifty pull requests and has facilitated hundreds of messages of great

discussion. Thanks to our everyone who has participated! GHC also now accepts

GitHub pull requests for small changes to the user guide and library documents,

which several contributors have made use of this. We hope that this channel will

make it easier for users to contribute and perhaps feel compelled to pick up

larger tasks in the future.

As always, if you are interested in contributing to any facet of GHC,

be the runtime system, type-checker, documentation, simplifier, or anything in

between, please come speak to us either on IRC (#ghc on

irc.freeenode.net) or ghc-devs@haskell.org. Happy Haskelling!

Further reading

Helium is a compiler that supports a substantial subset of Haskell 98 (but, e.g.,

n+k patterns are missing). Type classes are restricted to a number of

built-in type classes and all instances are derived. The advantage of Helium is

that it generates novice friendly error feedback, including domain

specific type error diagnosis by means of specialized type rules.

Helium and its associated packages are available from Hackage.

Install it by running cabal install helium. You should also

cabal install lvmrun on which it dynamically depends for running

the compiled code.

Currently Helium is at version 1.8.1. The major change with respect to 1.8

is that Helium is again well-integrated with the Hint programming environment

that Arie Middelkoop wrote in Java. The jar-file for Hint can be found on

the Helium website, which is located at http://www.cs.uu.nl/wiki/Helium.

This website also explains in detail what Helium is about, what it offers,

and what we plan to do in the near and far future.

A student has added parsing and static checking for type class and instance

definitions to the language, but type inferencing and code generating still

need to be added. Completing support for type classes is the second thing

on our agenda, the first thing being making updates to the documentation

of the workings of Helium on the website.

Frege is a Haskell dialect for the Java Virtual Machine (JVM). It covers

essentially Haskell 2010, though there are some mostly insubstantial

differences. Several GHC language extensions are supported, most prominently

higher rank types.

As Frege wants to be a practical JVM language, interoperability with

existing Java code is essential. To achieve this, it is not enough to have a

foreign function interface as defined by Haskell 2010. We must also have the

means to inform the compiler about existing data types (i.e. Java classes and

interfaces). We have thus replaced the FFI by a so called native

interface which is tailored for the purpose.

The compiler, standard library and associated tools like Eclipse IDE plugin,

REPL (interpreter) and several build tools are in a usable state, and

development is actively ongoing. The compiler is self hosting and has no

dependencies except for the JDK.

In the growing, but still small community, a consensus developed last summer

that existing differences to Haskell shall be eliminated. Ideally, Haskell

source code could be ported by just compiling it with the Frege compiler. Thus,

the ultimate goal is for Frege to become the Haskell implementation on

the JVM.

Already, in the last months, some of the most offending differences have

been removed: lambda syntax, instance/class context syntax, recognition

of |True| and |False| as boolean literals, lexical syntax for variables

and layout-mode issues. Frege now also supports code without module

headers.

Frege is available under the BSD-3 license at the GitHub project page. A ready

to run JAR file can be downloaded or retrieved through JVM-typical build tools

like Maven, Gradle or Leiningen.

All new users and contributors are welcome!

Currently, we have a new version of code generation in alpha status.

This will be the base for future interoperability with Java 8 and above.

In April, a community member submitted his masters thesis about

implementation of a STM library for Frege.

Further reading

https://github.com/Frege/frege

3.4 Specific Platforms

The Fedora Haskell SIG works to provide good Haskell support in the Fedora

Project Linux distribution.

For the coming Fedora 26 release ghc has been updated to 8.0.2 and all

the Haskell packages have been rebuilt and most updated to LTS 8.2

(except pandoc and hledger, due to missing dependencies). For current

releases there is a ghc-8.0.2 Fedora Copr repo available for Fedora

and EPEL 7, and also a Fedora Copr repo for stack. We use the

cabal-rpm packaging tool to create and update Haskell packages, and

improved fedora-haskell/fedora-haskell-tools to build them.

If you are interested in Fedora Haskell packaging, please join our

mailing-list and the Freenode #fedora-haskell channel.

You can also follow @fedorahaskell for occasional updates.

Further reading

3.4.2 Debian Haskell Group

The Debian Haskell Group aims to provide an optimal Haskell experience

to users of the Debian GNU/Linux distribution and derived distributions

such as Ubuntu. We try to follow the Haskell Platform versions for the

core package and package a wide range of other useful libraries and

programs. At the time of writing, we maintain 995 source packages.

A system of virtual package names and dependencies, based on the ABI

hashes, guarantees that a system upgrade will leave all installed

libraries usable. Most libraries are also optionally available with

profiling enabled and the documentation packages register with the

system-wide index.

The current stable Debian release (“jessie”) provides the Haskell Platform

2013.2.0.0 and GHC 7.6.3, with GHC 7.10.3 being available via

“jessie-backports”. In Debian unstable and testing (the soon-to-be released release “stretch”) we ship GHC 8.0.1.

Debian users benefit from the Haskell ecosystem on 17 architecture/kernel

combinations, including the non-Linux-ports KFreeBSD and Hurd.

Further reading

http://wiki.debian.org/Haskell

3.5 Related Languages and Language Design

Agda is a dependently typed functional programming language (developed

using Haskell). A central feature of Agda is inductive families,

i.e., GADTs which can be indexed by values and not just types.

The language also supports coinductive types, parameterized modules,

and mixfix operators, and comes with an interactive

interface—the type checker can assist you in the development of your

code.

A lot of work remains in order for Agda to become a full-fledged

programming language (good libraries, mature compilers, documentation,

etc.), but already in its current state it can provide lots of value as a

platform for research and experiments in dependently typed programming.

Some highlights from the past six months:

- Agda 2.5.2 was released in December 2016.

- The Agda documentation at

http://agda.readthedocs.org/en/stable/ is being continuously improved.

- Experimental support for homotopy type theory has been added to the

developement branch by Andrea Vezzosi.

Release of Agda 2.5.3 is planned for summer 2017.

Further reading

The Agda Wiki: http://wiki.portal.chalmers.se/agda/

The Disciplined Disciple Compiler (DDC) is a research compiler used to

investigate program transformation in the presence of computational effects.

It compiles a family of strict functional core languages and supports region

and effect typing. This extra information provides a handle on the operational

behaviour of code that isn’t available in other languages. Programs can be

written in either a pure/functional or effectful/imperative style, and one of

our goals is to provide both styles coherently in the same language.

What is new?

DDC is in an experimental, pre-alpha state, though parts of it do work. In

September this year we released DDC 0.4.3, with the following new features:

- Completed desugaring of pattern alternatives.

- Better type inference for higher ranked types, which allows explicit

dictonaries for Functor, Applicative, Monad and friends to be written

easily.

- Automatic insertion of run and box casts is now more well baked.

- Automatic interrogation of LLVM compiler version and generation of

matching LLVM assembly syntax.

- Added code generation for partial applications of data constructors.

- Added support for simple type synonyms.

Further reading

http://disciple.ouroborus.net

4 Libraries, Tools, Applications, Projects

4.1 Language Extensions and Related Projects

I am working on an ambitious update to GHC that will bring full dependent

types to the language. In GHC 8, the Core language and type inference have

already been updated according to the description in our ICFP’13 paper [1].

Accordingly, all type-level constructs are simultaneously kind-level

constructs, as there is no distinction between types and kinds. Specifically,

GADTs and type families are promotable to kinds. At this point, I conjecture

that any construct writable in those other dependently-typed languages will be

expressible in Haskell through the use of singletons.

Building on this prior work, I have written my dissertation on incorporating

proper dependent types in Haskell [2]. I have yet to have the time to start

genuine work on the implementation, but I plan to do so starting summer 2017.

Here is a sneak preview of what will be possible with dependent types,

although much more is possible, too!

data Vec :: * -> Integer -> * ^^ where

Nil :: Vec a 0

(:::) :: a -> Vec a n -> Vec a (1 !+ n)

replicate :: pi n. forall a. a -> Vec a n

replicate ^^ @0 _ = Nil

replicate x = x ::: replicate x

Of course, the design here (especially for the proper dependent types) is

preliminary, and input is encouraged.

Further reading

The generics-sop (“sop” is for “sum of products”) package is a

library for datatype-generic programming in Haskell, in the spirit of GHC’s

built-in DeriveGeneric construct and the generic-deriving

package.

Datatypes are represented using a structurally isomorphic representation that

can be used to define functions that work automatically for a large class of

datatypes (comparisons, traversals, translations, and more). In contrast with

the previously existing libraries, generics-sop does not use the full

power of current GHC type system extensions to model datatypes as an n-ary sum

(choice) between the constructors, and the arguments of each constructor as an

n-ary product (sequence, i.e., heterogeneous lists). The library comes with

several powerful combinators that work on n-ary sums and products, allowing to

define generic functions in a very concise and compositional style.

The current release is 0.2.0.0.

A new talk from ZuriHack 2016 is available on Youtube. The most interesting

upcoming feature is probably type-level metadata, making use of the fact that

GHC 8 now offers type-level metadata for the built-in generics. While the

feature is in principle implemented, there are still a few open questions

about what representation would be most convenient to work with in practice.

Help or opinions are welcome!

Further reading

The supermonad package provides a unified way to represent different monadic

notions. In other words, it provides a way to use standard and generalized

monads (with additional indices or constraints) with each other without having

to manually disambiguate which notion is referred to in every computation. To

achieve this, the library represents monads as a set of two type classes that

are general enough to allow instances for all of the different notions and

then aids constraint checking through a GHC plugin to ensure that everything

type checks properly. Due to the plugin the library can only be used with GHC.

If you are interested in using the library, we have a few examples of

different size in the repository to show how it can be utilized. The generated

Haddock documentation also has full coverage and can be seen on the libraries

Hackage page.

The project had its first release shortly before ICFP and the Haskell

Symposium 2016. We are currently working on providing the same kind of support

for applicative functors and arrows, so that generalizations of these notions

can be used as freely as the different notions of monads.

If you are interested in contributing, found a bug or have a suggestion to

improve the project we are happy to hear from you in person, by email or over

the projects bug tracker on GitHub.

Further reading

4.2 Build Tools and Related Projects

Background

Cabal is the standard packaging system for Haskell software. It specifies a

standard way in which Haskell libraries and applications can be packaged so

that it is easy for consumers to use them, or re-package them, regardless of

the Haskell implementation or installation platform.

cabal-install is the command line interface for the Cabal and Hackage

system. It provides a command line program cabal which has

sub-commands for installing and managing Haskell packages.

Recent Progress

We’ve recently produced

new

point releases of Cabal/cabal-install from the 1.24

branch. Among other things, Cabal 1.24.2.0 includes a

fix necessary to

make soon-to-be-released GHC 8.0.2 work on macOS Sierra.

Almost 1500 commits were made to the master branch by

53

different contributors since the 1.24 release. Among the highlights are:

-

Convenience,

or internal libraries – named libraries that are only intended

for use inside the package. A common use case is sharing code

between the test suite and the benchmark suite without exposing it

to the users of the package.

- Support for

foreign

libraries, which are Haskell libraries intended to be used by

foreign languages like C. Foreign libraries only work with GHC 7.8

and later.

- Initial support for building Backpack packages. Backpack is an

exciting new project adding an ML-style module system to Haskell,

but on the package level. See

here

and here

for a more thorough introduction to Backpack.

- ./Setup configure now accepts an argument

specifying

the component to be configured. This is mainly an internal

change, but it means that cabal-install can now perform

component-level parallel builds (among other things).

- A lot of improvements in the new-build feature

(a.k.a. nix-style local builds). Git HEAD version of

cabal-install is now recommended if you use

new-build. For an introduction to new-build, see

this

chapter of the manual.

- Special support for the Nix package manager in

cabal-install. See

here

for more details.

- cabal upload now uploads a package candidate by

default. Use cabal upload --publish to upload a final

version. cabal upload --check has been removed in favour

of package candidates.

-

An

--index-state flag for requesting a specific version

of the package index.

- New cabal reconfigure command, which re-runs

configure with most recently used flags.

-

New

autogen-modules field for modules built automatically

(like Paths_PACKAGENAME).

-

New

version range operator >=, which is equivalent to

>= intersected with an automatically-inferred major version

bound. For example, >= 2.0.3 is equivalent to >=

2.0.3 &&< 2.1.

-

An

--allow-older flag, dual to --allow-newer.

- New Parsec-based parser for .cabal files

has been merged,

but not enabled by default yet.

- The manual has

been converted to reST/Sphinx format, improved and expanded.

-

Hackage

Security has been enabled by default.

- A lot of bug fixes and performance improvements.

Looking Forward

The next Cabal/cabal-install versions will be released either

in early 2017, or simultaneously with GHC 8.2 (April/May 2017). Our

main focus at this stage is getting the new-build feature to

the state where it can be enabled by default, but there are many other

areas of Cabal that need work.

We would like to encourage people considering contributing to take a

look at the bug

tracker on GitHub and the

Wiki,

take part in discussions on tickets and pull requests, or submit their

own. The bug tracker is reasonably well maintained and it should be

relatively clear to new contributors what is in need of attention and

which tasks are considered relatively easy. For more in-depth

discussion there is also the

cabal-devel

mailing list.

Further reading

4.2.2 The Stack build tool

Stack is a modern, cross-platform build tool for Haskell code. It is intended

for Haskellers both new and experienced.

Stack handles the management of your toolchain (including GHC - the Glasgow

Haskell Compiler - and, for Windows users, MSYS), building and registering

libraries, building build tool dependencies, and more. While it can use

existing tools on your system, Stack has the capacity to be your one-stop shop

for all Haskell tooling you need.

The primary design point is reproducible builds. If you run stack

build today, you should get the same result running stack build

tomorrow. There are some cases that can break that rule (changes in your

operating system configuration, for example), but, overall, Stack follows this

design philosophy closely. To make this a simple process, Stack uses curated

package sets called snapshots.

Stack has also been designed from the ground up to be user friendly, with an

intuitive, discoverable command line interface.

Since its first release in June 2015, many people are using it as their

primary Haskell build tool, both commercially and as hobbyists. New features

and refinements are continually being added, with regular new releases.

Binaries and installers/packages are available for common operating systems to

make it easy to get started. Download it at http://haskellstack.org/.

Further reading

http://haskellstack.org/

4.2.3 Stackage: the Library Dependency Solution

Stackage began in November 2012 with the mission of making it possible to

build stable, vetted sets of packages. The overall goal was to make the Cabal

experience better. Two years into the project, a lot of progress has been made

and now it includes both Stackage and the Stackage Server. To date, there are

over 1900 packages available in Stackage. The official site is

https://www.stackage.org.

The Stackage project consists of many different components, linked to from the

Stackage Github repository https://github.com/fpco/stackage#readme.

These include:

- Stackage Nightly, a daily build of the Stackage package set

- LTS Haskell, which provides major-version compatibility for a package

set over a longer period of time

- Stackage Server, which runs on stackage.org and provides browsable docs,

reverse dependencies, and other metadata on packages

- Stackage Curator, a tool for running the various builds

The Stackage package set has first-class support in the Stack build tool

(→4.2.2). There is also support for cabal-install via cabal.config files,

e.g. https://www.stackage.org/lts/cabal.config.

There are dozens of individual maintainers for packages in Stackage. Overall

Stackage curation is handled by the “Stackage curator” team, which consists

of Michael Snoyman, Adam Bergmark, Dan Burton, Jens Petersen, and Luke Murphy.

Stackage provides a well-tested set of packages for end users to develop on, a

rigorous continuous-integration system for the package ecosystem, some basic

guidelines to package authors on minimal package compatibility, and even a

testing ground for new versions of GHC. Stackage has helped encourage package

authors to keep compatibility with a wider range of dependencies as well,

benefiting not just Stackage users, but Haskell developers in general.

If you’ve written some code that you’re actively maintaining, don’t hesitate

to get it in Stackage. You’ll be widening the potential audience of users for

your code by getting your package into Stackage, and you’ll get some helpful

feedback from the automated builds so that users can more reliably build your

code.

Since the last HCAR, we have moved Stackage Nightly to GHC 8.0.2, as

well as released LTS 8 based on that same GHC release. We continue to

make releases of LTS 6 and 7 concurrently, which are based on GHC

7.10.3 and 8.0.1, respectively.

A browser plugin (currently supported for Firefox/Google Chrome) to

automatically redirect Haddock documentation on Hackage to

corresponding Stackage pages, when the request is via search engines

like Google/Bing etc. For the case where the package hasn’t been added

yet to Stackage, no redirect will be made and the Hackage

documentation will be available. This plugin also tries to guess when

the user would want to go to a Hackage page instead of the Stackage

one and tries to do the right thing there.

Compared to the previous version, stackgo now always takes you to the

latest lts page of the package.

Further reading

This is a utility to install Haskell programs on a system using stack.

Although stack does have an install command, it only copies binaries.

Sometimes more is needed, other files and some directory structure. hsinstall

tries to install the binaries, the LICENSE file and also the resources

directory if it finds one.

Installations can be performed in one of two directory structures. FHS, or the

Filesystem Hierarchy Standard (most UNIX-like systems) and what I call

“bundle” which is a portable directory for the app and all of its files.

They look like this:

There are two parts to hsinstall that are intended to work together. The first

part is a Haskell shell script, util/install.hs. Take a copy of this

script and check it into a project you’re working on. This will be your

installation script. Running the script with the –help switch will

explain the options. Near the top of the script are default values for these

options that should be tuned to what your project needs.

The other part of hsinstall is a library. The install script will try to

install a resources directory if it finds one. the HSInstall library can

then be used in your code to locate the resources at runtime.

Note that you only need the library if your software has data files it needs

to locate at runtime in the installation directories. Many programs don’t have

this requirement and can ignore the library altogether.

Source code is available on darcshub, Hackage and Stackage

Further reading

4.2.6 cab — A Maintenance Command of Haskell Cabal Packages

cab is a MacPorts-like maintenance command of Haskell cabal packages.

Some parts of this program are a wrapper to ghc-pkg and cabal.

If you are always confused due to inconsistency of ghc-pkg and

cabal, or if you want a way to check all outdated packages, or if you want a

way to remove outdated packages recursively, this command helps you.

Since the last HCAR, Cabal 2.0 was supported thanks to Ryan Scott.

Further reading

http://www.mew.org/~kazu/proj/cab/en/

A Yesod scaffolding site with Postgres backend. It provides a JSON API backend

as a separate subsite. The primary purpose of this repository is to use Yesod

as a API server backend and do the frontend development using a tool like

React or Angular. The current code includes a basic example using React and

Babel which is bundled finally by webpack and added in the handler

getHomeR in a type safe manner.

The future work is to integrate it as part of yesod-scaffold and make it as

part of stack template.

Further reading

4.3 Repository Management

Octohat is a comprehensively test-covered Haskell library that wraps GitHub’s

API. While we have used it successfully in an open-source project to

automate

granting access control to servers, it is in very early development, and it

only covers a small portion of GitHub’s API.

Octohat is available on

Hackage, and the source

code can be found on GitHub.

We have already received some contributions from the community for Octohat,

and we are looking forward to more contributions in the future.

Further reading

Darcs is a distributed revision control system written in Haskell. In Darcs,

every copy of your source code is a full repository, which allows for full

operation in a disconnected environment, and also allows anyone with read

access to a Darcs repository to easily create their own branch and modify it

with the full power of Darcs’ revision control. Darcs is based on an

underlying theory of patches, which allows for safe reordering and merging of

patches even in complex scenarios. For all its power, Darcs remains a very

easy to use tool for every day use because it follows the principle of keeping

simple things simple.

In April 2016, we released Darcs 2.12, with minors update in September 2016

and January 2017 to fix compiling with GHC 8 and other minor bugs.

This new major release includes the new darcs

show dependencies command (for exporting the patch dependencies graph of a

repository to the Graphviz format), improvements for Git import, and

improvements to darcs whatsnew to facilitate support of Darcs by

third-party version control front ends like Meld and Diffuse.

SFC and donationsDarcs is free software licensed under the GNU GPL (version 2 or greater).

Darcs is a proud member of the Software Freedom Conservancy, a US tax-exempt

501(c)(3) organization. We accept donations at

http://darcs.net/donations.html.

Further reading

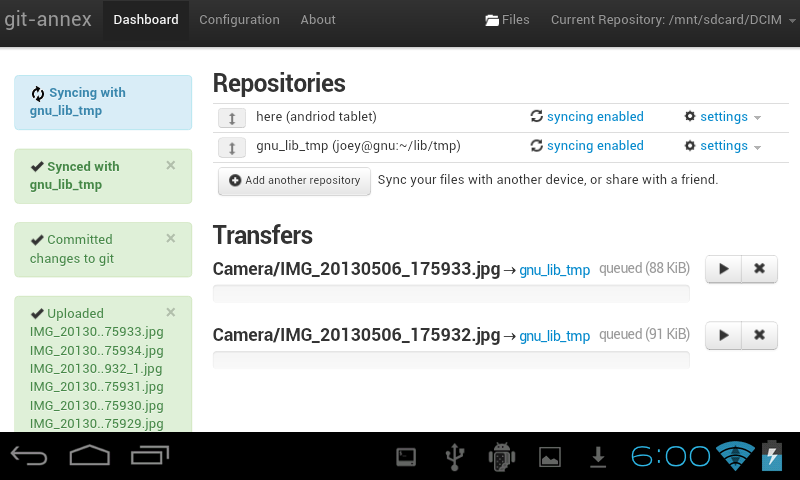

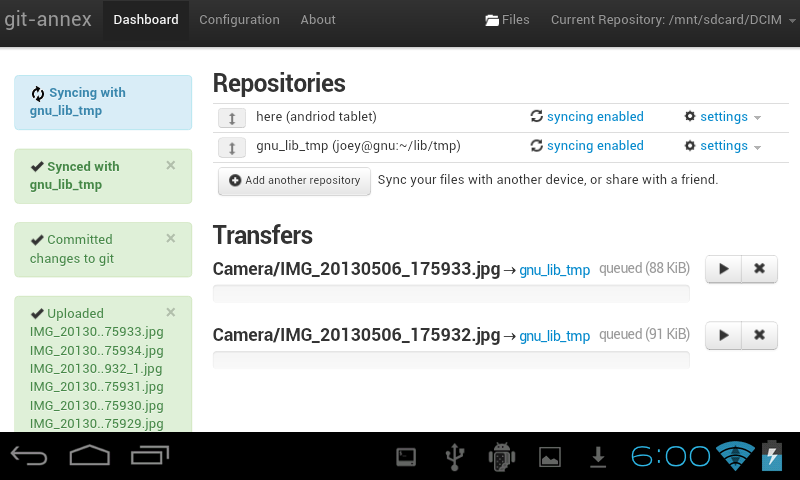

git-annex allows managing files with git, without checking the file contents

into git. While that may seem paradoxical, it is useful when dealing with

files larger than git can currently easily handle, whether due to limitations

in memory, time, or disk space.

As well as integrating with the git command-line tools, git-annex includes a

graphical app which can be used to keep a folder synchronized between

computers. This is implemented as a local webapp using yesod and warp.

git-annex runs on Linux, OSX and other Unixes, and has been ported to Windows.

There is also an incomplete but somewhat usable port to Android.

Five years into its development, git-annex has a wide user community. It is

being used by organizations for purposes as varied as keeping remote Brazilian

communities in touch and managing Neurological imaging data. It is available

in a number of Linux distributions, in OSX Homebrew, and is one of the most

downloaded utilities on Hackage. It was my first Haskell program.

At this point, my goals for git-annex are to continue to improve its

foundations, while at the same time keeping up with the constant flood of

suggestions from its user community, which range from adding support for

storing files on more cloud storage platforms (around 20 are already

supported), to improving its usability for new and non technically inclined

users, to scaling better to support Big Data, to improving its support for

creating metadata driven views of files in a git repository.

At some point I’d also like to split off any one of a half-dozen

general-purpose Haskell libraries that have grown up inside the git-annex

source tree.

Further reading

http://git-annex.branchable.com/

4.3.4 openssh-github-keys (Stack Builders)

It is common to control access to a Linux server by changing public keys

listed in the authorized_keys file. Instead of modifying this file

to grant and revoke access, a relatively new feature of OpenSSH allows the

accepted public keys to be pulled from standard output of a command.

This package acts as a bridge between the OpenSSH daemon and GitHub so that

you can manage access to servers by simply changing a GitHub Team, instead of

manually modifying the authorized_keys file. This package uses the

Octohat wrapper library for

the GitHub API which we released.

openssh-github-keys is still experimental, but we are using it on a couple of

internal servers for testing purposes. It is available on

Hackage and

contributions and bug reports are welcome in the

GitHub

repository.

While we don’t have immediate plans to put openssh-github-keys into heavier

production use, we are interested in seeing if community members and system

administrators find it useful for managing server access.

Further reading

https://github.com/stackbuilders/openssh-github-keys

4.4 Debugging and Profiling

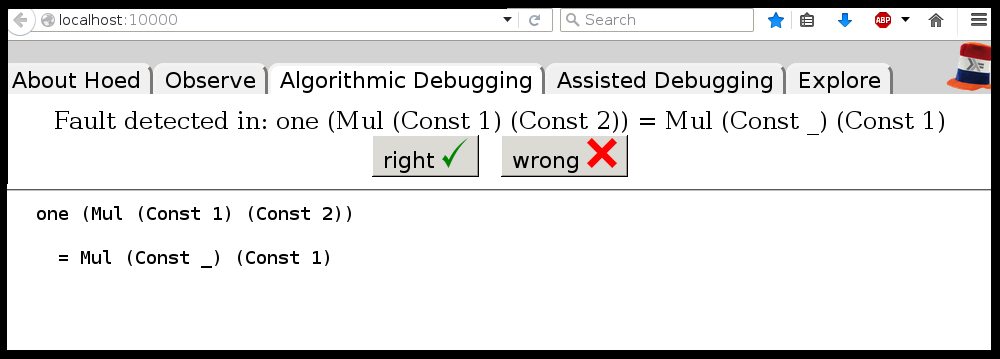

4.4.1 Hoed – The Lightweight Algorithmic Debugger for Haskell

Hoed is a lightweight algorithmic debugger that is practical to use for

real-world programs because it works with any Haskell run-time system and does

not require trusted libraries to be transformed.

To locate a defect with Hoed you annotate suspected functions and compile as

usual. Then you run your program, information about the annotated functions is

collected. Finally you connect to a debugging session using a webbrowser.

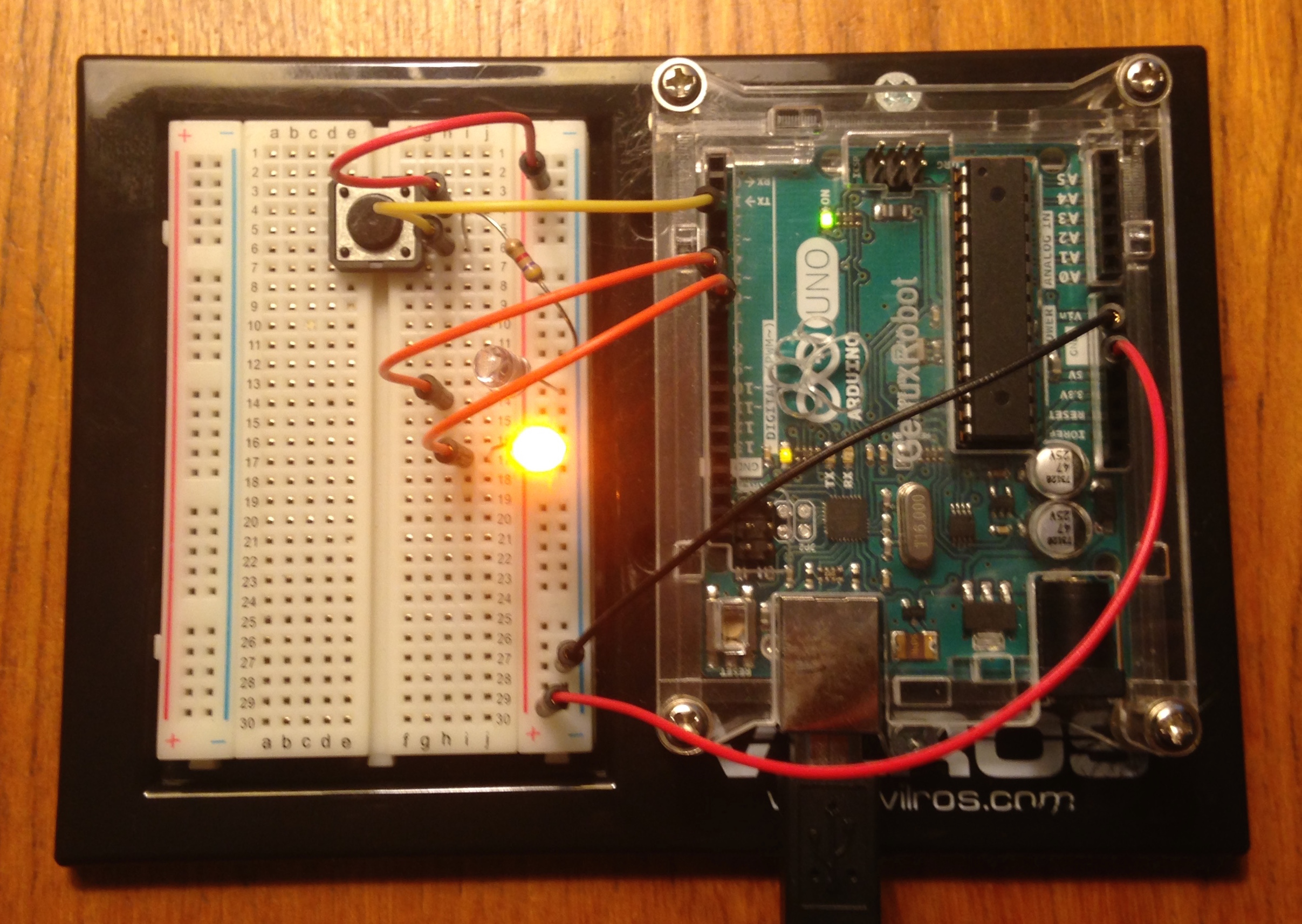

4.4.1.1 Using Hoed

Let us consider the following program, a defective implementation of a parity

function with a test property.

isOdd :: Int -> Bool

isOdd n = isEven (plusOne n)

isEven :: Int -> Bool

isEven n = mod2 n == 0

plusOne :: Int -> Int

plusOne n = n + 1

mod2 :: Int -> Int

mod2 n = div n 2

prop_isOdd :: Int -> Bool

prop_isOdd x = isOdd (2*x+1)

main :: IO ()

main = printO (prop_isOdd 1)

main :: IO ()

main = quickcheck prop_isOdd

Using the property-based test tool QuickCheck we find the counter example 1

for our property.

./MyProgram

*** Failed! Falsifiable (after 1 test): 1

Hoed can help us determine which function is defective. We annotate the

functions |isOdd|, |isEven|, |plusOne| and |mod2| as follows:

import Debug.Hoed.Pure

isOdd :: Int -> Bool

isOdd = observe "isOdd" isOdd'

isOdd' n = isEven (plusOne n)

isEven :: Int -> Bool

isEven = observe "isEven" isEven'

isEven' n = mod2 n == 0

plusOne :: Int -> Int

plusOne = observe "plusOne" plusOne'

plusOne' n = n + 1

mod2 :: Int -> Int

mod2 = observe "mod2" mod2'

mod2' n = div n 2

prop_isOdd :: Int -> Bool

prop_isOdd x = isOdd (2*x+1)

main :: IO ()

main = printO (prop_isOdd 1)

And run our program:

./MyProgram

False

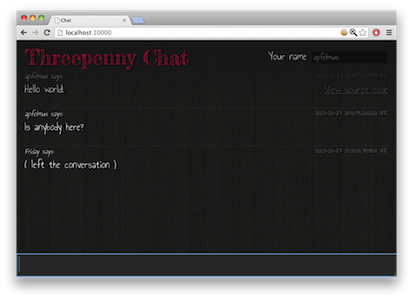

Listening on http://127.0.0.1:10000/

Now you can use your webbrowser to interact with Hoed.

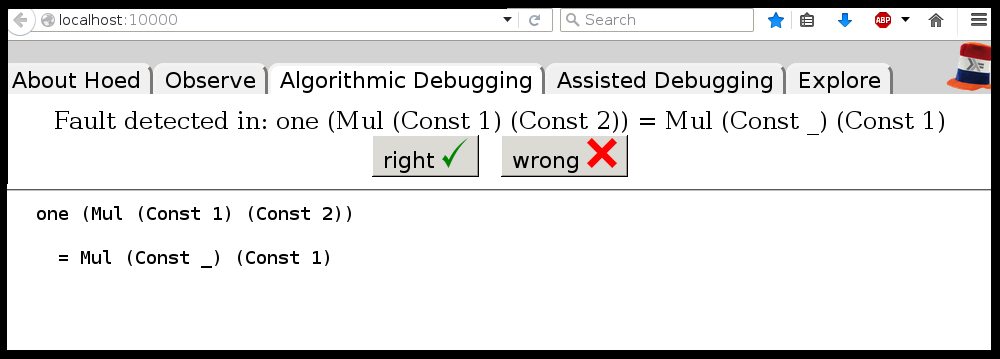

There is a classic algorithmic debugging interface in which you are shown

computation statements, these are function applications and their result, and

are asked to judge if these are correct. After judging enough computation

statements the algorithmic debugger tells you where the defect is in your

code.

In the explore mode, you can also freely browse the tree of computation

statements to get a better understanding of your program. The observe mode is

inspired by HOOD and gives a list of computation statements. Using regular

expressions this list can be searched. Algorithmic debugging normally starts

at the top of the tree, e.g. the application of isOdd to

(2*x+1) in the program above, using explore or observe mode a

different starting point can be chosen.

To reduce the number of questions the programmer has to answer, we added a new

mode Assisted Algorithmic Debugging in version 0.3.5 of Hoed. In this mode

(QuickCheck) properties already present in program code for property-based

testing can be used to automatically judge computation statements

Further reading

The library ghc-heap-view provides means to inspect the GHC’s heap and analyze

the actual layout of Haskell objects in memory. This allows you to investigate

memory consumption, sharing and lazy evaluation.

This means that the actual layout of Haskell objects in memory can be

analyzed. You can investigate sharing as well as lazy evaluation using

ghc-heap-view.

The package also provides the GHCi command :printHeap, which is

similar to the debuggers’ :print command but is able to show more

closures and their sharing behaviour:

> let x = cycle [True, False]

> :printHeap x

_bco

> head x

True

> :printHeap x

let x1 = True : _thunk x1 [False]

in x1

> take 3 x

[True,False,True]

> :printHeap x

let x1 = True : False : x1

in x1

The graphical tool ghc-vis (→4.4.3) builds on ghc-heap-view.

Since version 0.5.6, ghc-heap-view supports GHC 8.

Further reading

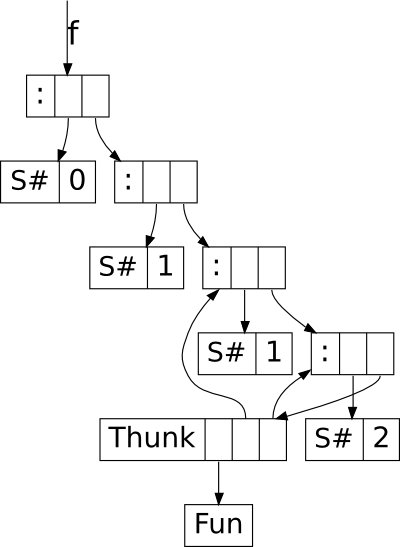

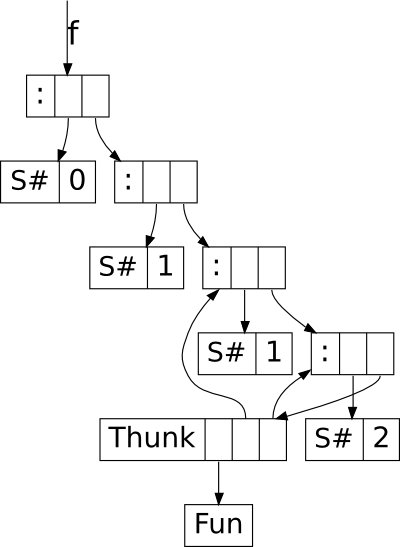

The tool ghc-vis visualizes live Haskell data structures in GHCi. Since it

does not force the evaluation of the values under inspection it is possible to

see Haskell’s lazy evaluation and sharing in action while you interact with

the data.

Ghc-vis supports two styles: A linear rendering similar to GHCi’s

:print, and a graph-based view where closures in memory are nodes and

pointers between them are edges. In the following GHCi session a partially

evaluated list of fibonacci numbers is visualized:

> let f = 0 : 1 : zipWith (+) f (tail f)

> f !! 2

> :view f

At this point the visualization can be used interactively: To evaluate a

thunk, simply click on it and immediately see the effects. You can even

evaluate thunks which are normally not reachable by regular Haskell code.

Ghc-vis can also be used as a library and in combination with GHCi’s debugger.

Further reading

http://felsin9.de/nnis/ghc-vis

4.4.4 Hat — the Haskell Tracer

Hat is a source-level tracer for Haskell. Hat gives access to detailed,

otherwise invisible information about a computation.

Hat helps locating errors in programs. Furthermore, it is useful for

understanding how a (correct) program works, especially for teaching and

program maintenance. Hat is not a time or space profiler. Hat can be used for

programs that terminate normally, that terminate with an error message or that

terminate when interrupted by the programmer.

You trace a program with Hat by following these steps:

- With hat-trans translate all the source modules of your Haskell

program into tracing versions. Compile and link (including the Hat library)

these tracing versions with ghc as normal.

- Run the program. It does exactly the same as the original program except

for additionally writing a trace to file.

- After the program has terminated, view the trace with a tool. Hat comes

with several tools for selectively viewing fragments of the trace in

different ways: hat-observe for Hood-like observations,

hat-trail for exploring a computation backwards,

hat-explore for freely stepping through a computation,

hat-detect for algorithmic debugging, …

Hat is distributed as a package on Hackage that contains all Hat tools and

tracing versions of standard libraries. Hat works with the Glasgow Haskell

compiler for Haskell programs that are written in Haskell 98 plus a few

language extensions such as multi-parameter type classes and functional

dependencies. Note that all modules of a traced program have to be

transformed, including trusted libraries (transformed in trusted mode). For

portability all viewing tools have a textual interface; however, many tools

require an ANSI terminal and thus run on Unix / Linux / OS X, but not on

Windows.

In the longer term we intend to transfer the lightweight tracing technology

that we use in Hoed (→4.4.1) also to Hat.

Further reading

4.5 Development Tools and Editors

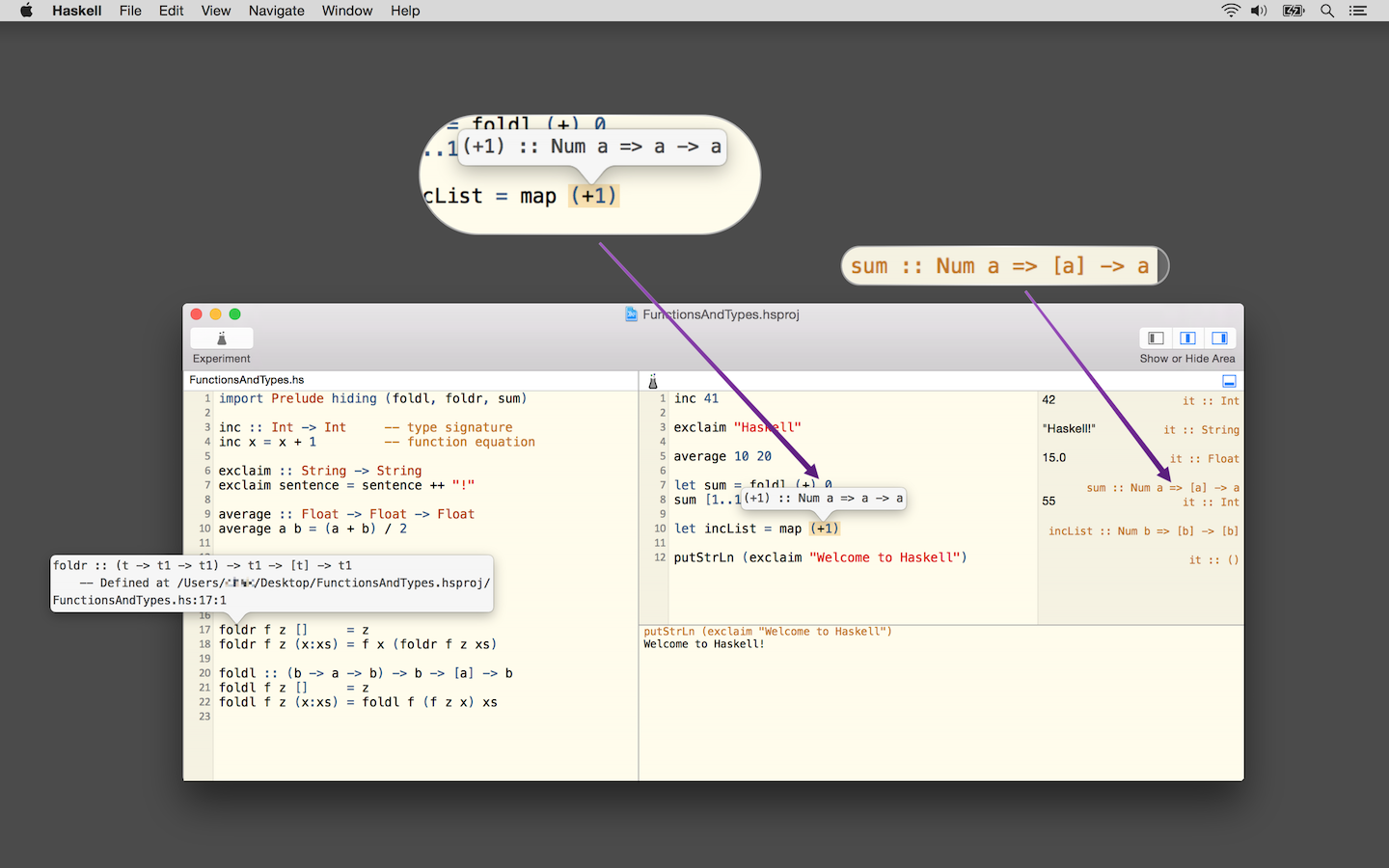

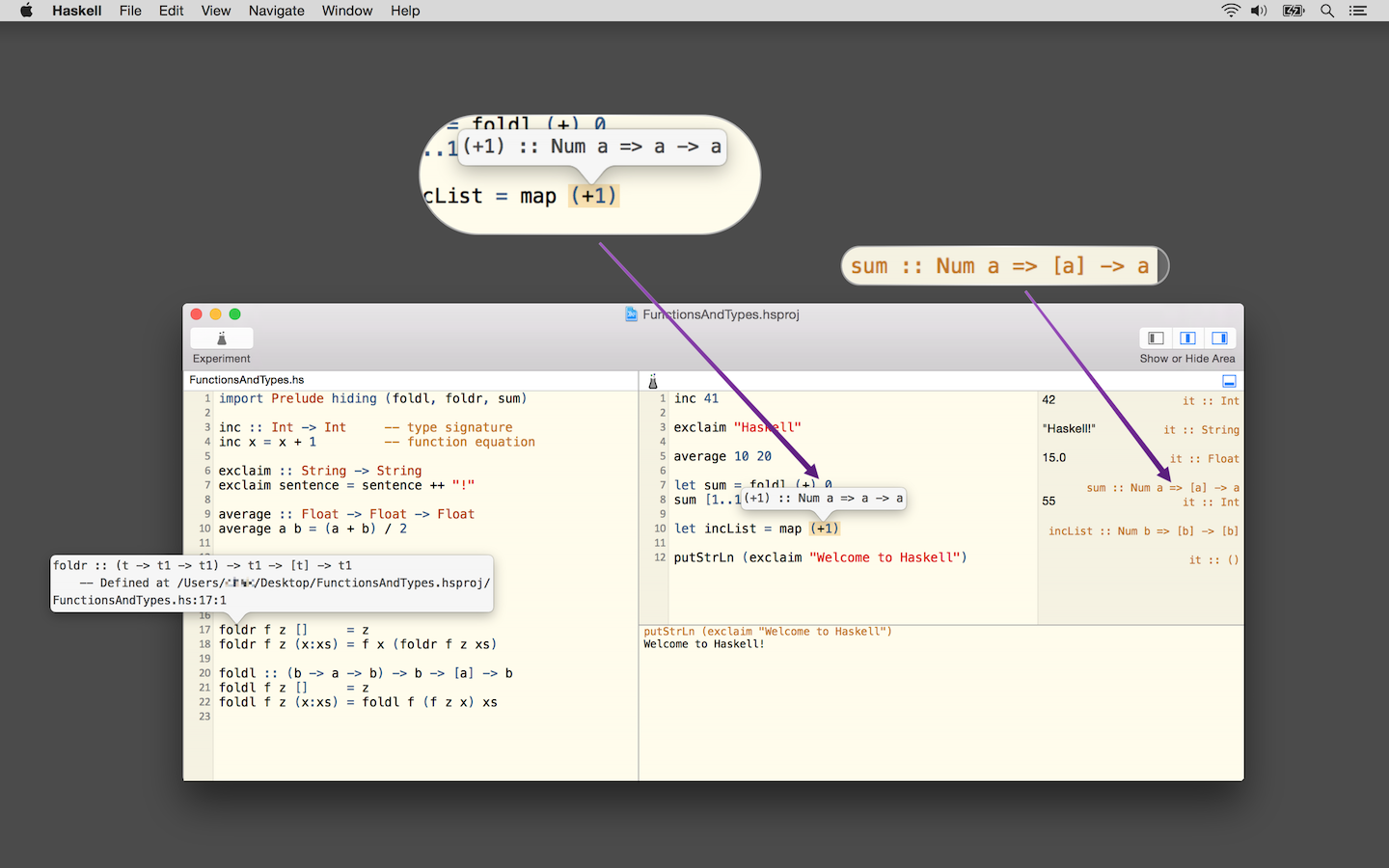

Haskell for Mac is an easy-to-use, innovative programming environment and

learning platform for Haskell on OS X. It includes its own Haskell

distribution and requires no further set up. It features interactive Haskell

playgrounds to explore and experiment with code. Playground code is not only

type-checked, but also executed while you type, which leads to a fast turn

around during debugging or experimenting with new code.

Integrated environment. Haskell for Mac integrates everything needed

to start writing Haskell code, including an editor with syntax highlighting

and smart identifier completion. Haskell for Mac creates Haskell projects

based on standard Cabal specifications for compatibility with the rest of the

Haskell ecosystem. It includes the Glasgow Haskell Compiler (GHC) and over 200

of the most popular packages of LTS Haskell package sets. Matching command

line tools and extra packages can be installed, too.

Type directed development. Haskell for Mac uses GHC’s support for

deferred type errors so that you can still execute playground code in the face

of type errors. This is convenient during refactoring to test changes, while

some code still hasn’t been adapted to new signatures. Moreover, you can use

type holes to stub out missing pieces of code, while still being able to run

code. The system will also report the types expected for holes and the types

of the available bindings.

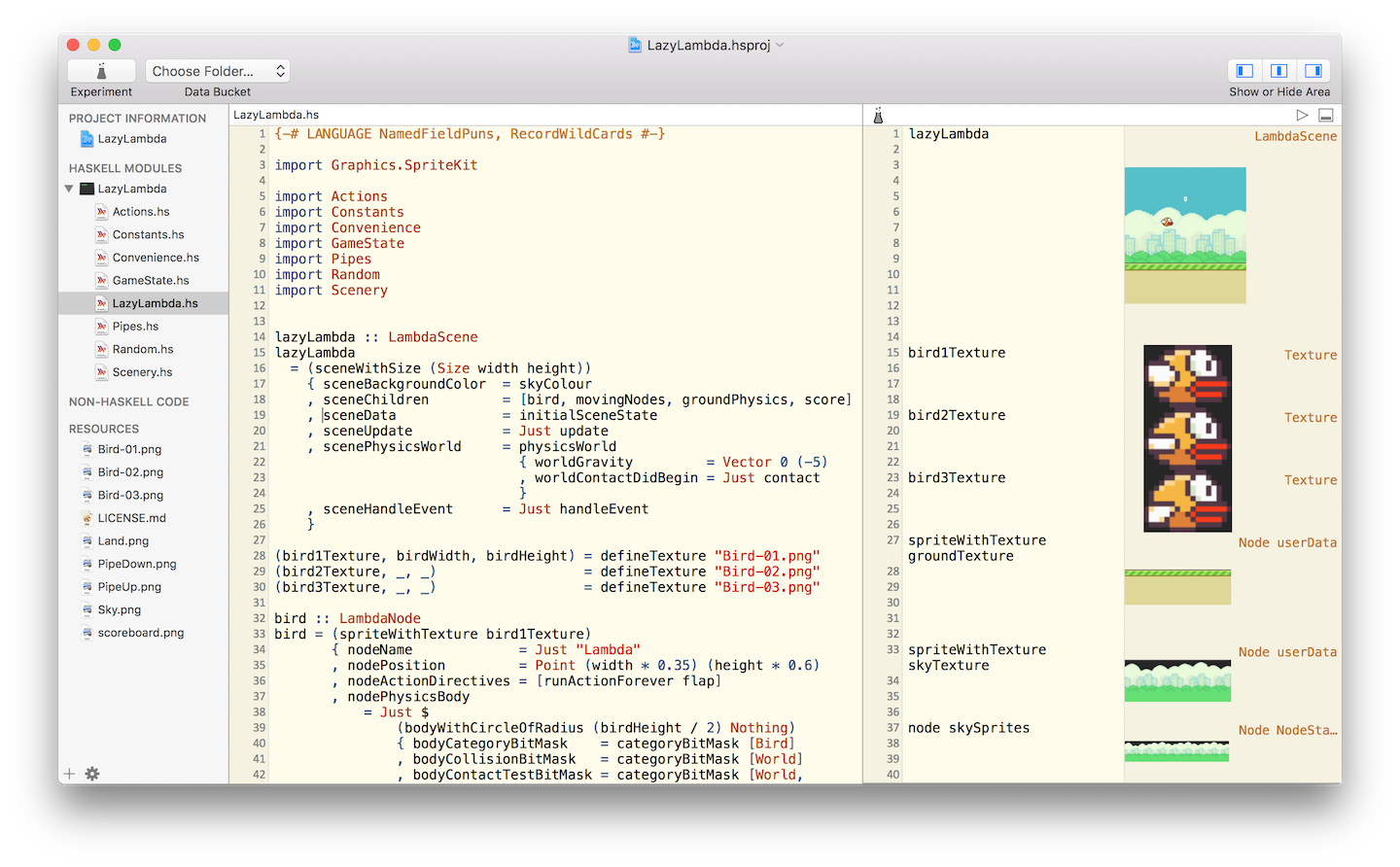

Interactive HTML, graphics &games. Haskell for Mac comes with

support for web programming, network programming, graphics programming,

animations, and much more. Interactively generate web pages, charts,

animations, or even games (with the OS X SpriteKit support). Graphics are also

live and change as you modify the program code.

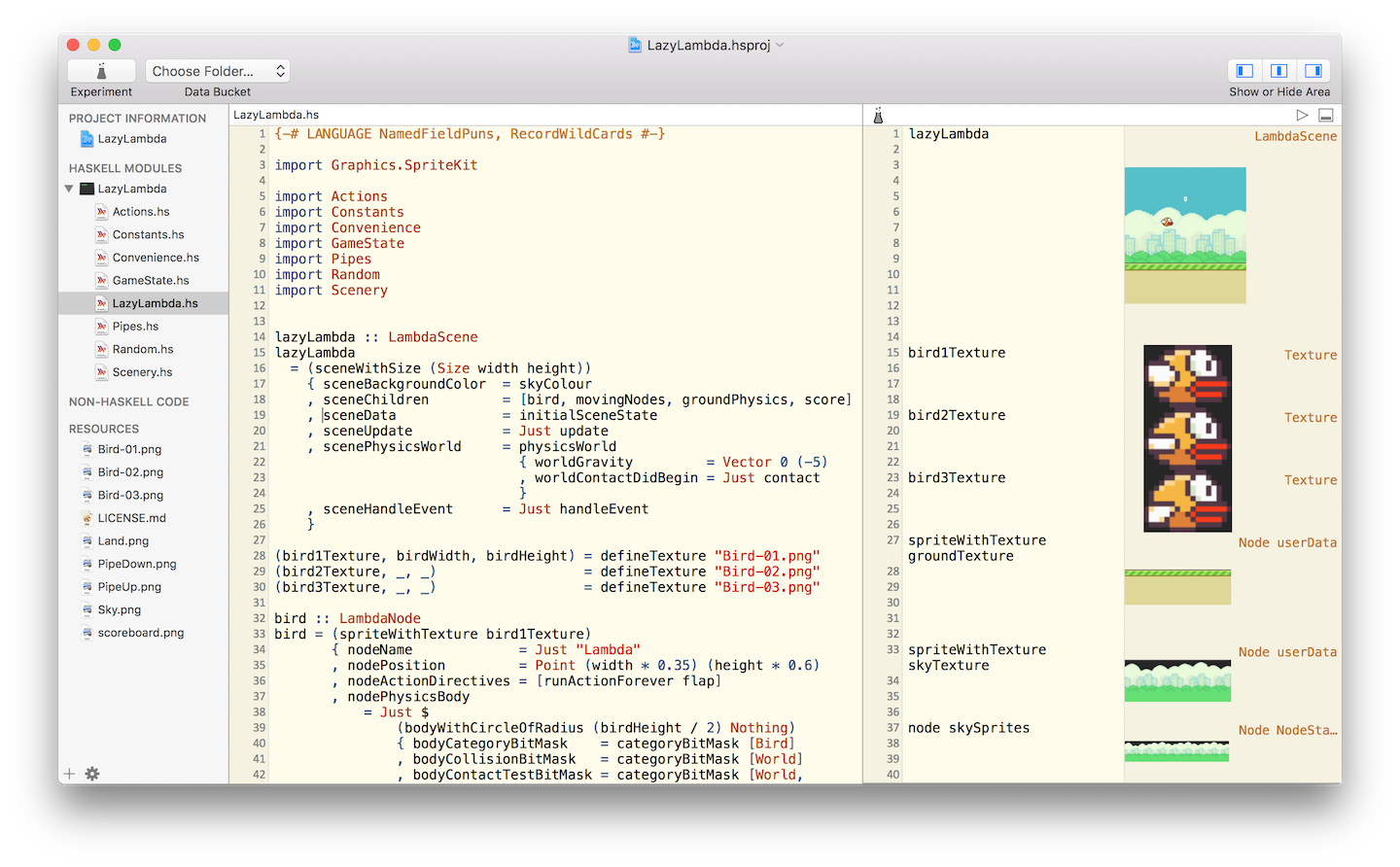

The screenshot below is from the development of a Flappy Bird clone in

Haskell. Watch the Haskell for Mac developer live code Flappy Bird in Haskell

in 20min at the end of the Compose :: Melbourne 2016 keynote at

https://speakerdeck.com/mchakravarty/playing-with-graphics-and-animations-in-haskell.

You can find more information about writing games in Haskell in this blog

post:

http://blog.haskellformac.com/blog/writing-games-in-haskell-with-spritekit.

Haskell for Mac has recently gained auto-completion of identifiers, taking

into account the current module’s imports. It now also features a graphical

package installer for LTS Haskell and support for GHC 8. Moreover, a new type

class, @Presentable@, enables custom rendering of user-defined data types

using images, HTML, and even animations.

Haskell for Mac is available for purchase from the Mac App Store. Just search

for "Haskell", or visit our website for a direct link. We are always available

for questions or feedback at support@haskellformac.com.

Further reading

The Haskell for Mac website: http://haskellformac.com

4.5.2 haskell-ide-engine, a project for unifying IDE functionality

haskell-ide-engine is a backend for driving the sort of features

programmers expect out of IDE environments. haskell-ide-engine is a

project to unify tooling efforts into something different text editors, and

indeed IDEs as well, could use to avoid duplication of effort.

There is basic support for getting type information and refactoring, more

features including type errors, linting and reformatting are planned. People

who are familiar with a particular part of the chain can focus their efforts

there, knowing that the other parts will be handled by other components of the

backend. Integration for Emacs and Leksah is available and should support the

current features of the backend. Work has started on a Language Server

Protocol transport, for use in VS Code. haskell-ide-engine also has a

REST API with Swagger UI. Inspiration is being taken from the work the Idris

community has done toward an interactive editing environment as well.

Help is very much needed and wanted so if this is a problem that interests

you, please pitch in! This is not a project just for a small inner circle.

Anyone who wants to will be added to the project on github, address your

request to @alanz.

Further reading

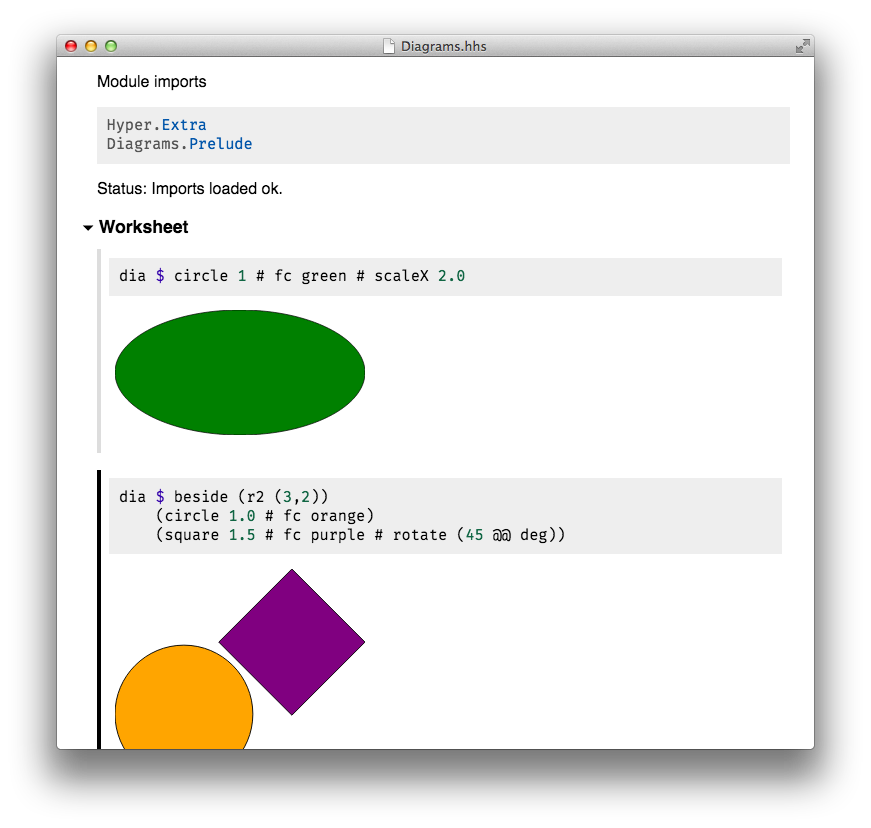

4.5.3 HyperHaskell – The strongly hyped Haskell interpreter

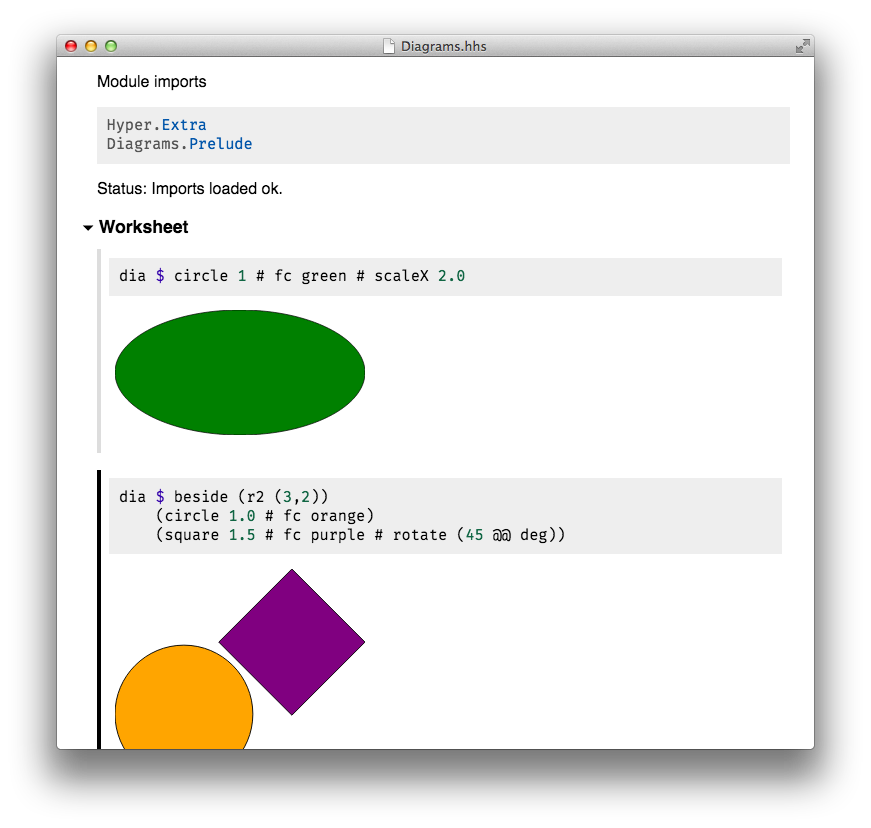

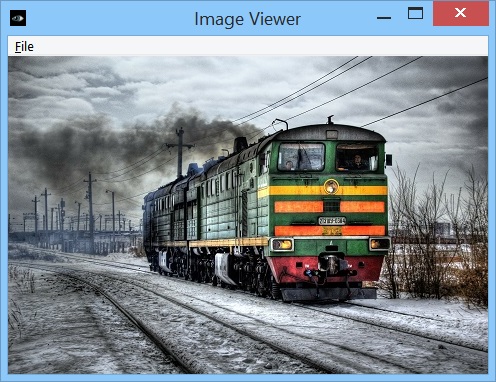

HyperHaskell is a graphical Haskell interpreter, not unlike GHCi, but

hopefully more awesome. You use worksheets to enter expressions and evaluate

them. Results are displayed graphically using HTML.

HyperHaskell is intended to be easy to install. It is

cross-platform and should run on Linux, Mac and Windows. Internally, it uses

the GHC API to interpret Haskell programs, and the graphical front-end is

built on the Electron framework. HyperHaskell is open source.

HyperHaskell’s main attraction is a Display class that

supersedes the good old Show class. The result looks like this:

Current status

HyperHaskell is currently Level alpha. Compared to the

previous report, no new release has been made, but basic features are working.

A new cell type, the text cell, has been implemented, but not yet

released.

I am looking for help in setting up binary releases on the Windows platform!

Future development

Programming a computer usually involves writing a program text in a particular

language, a “verbal” activity. But computers can also be instructed by

gestures, say, a mouse click, which is a “nonverbal” activity. The long term

goal of HyperHaskell is to blur the lines between programming

“verbally” and “nonverbally” in Haskell. This begins with an interpreter

that has graphical representations for values, but also includes editing a

program text while it’s running (“live coding”) and interactive

representations of values (e.g. “tangible values”). This territory is still

largely uncharted from a purely functional perspective, probably due to a lack

of easily installed graphical facilities. It is my hope that

HyperHaskell may provide a common ground for exploration and

experimentation in this direction, in particular by offering the

Display class which may, perhaps one day, replace our good old

Show class.

A simple form of live coding is planned for Level beta.

Further reading

4.6 Formal Systems and Reasoners

4.6.1 The Incredible Proof Machine

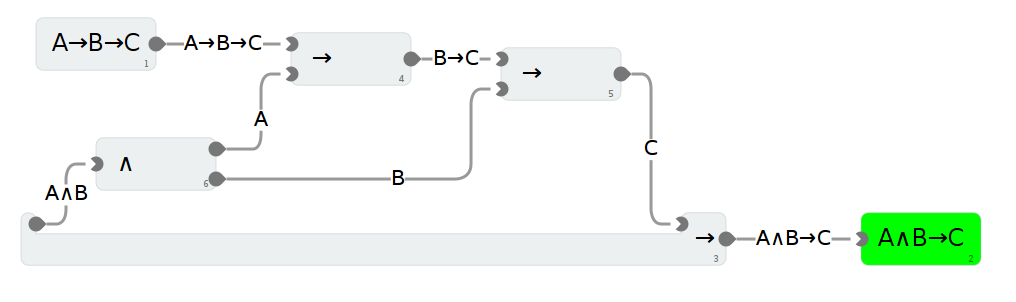

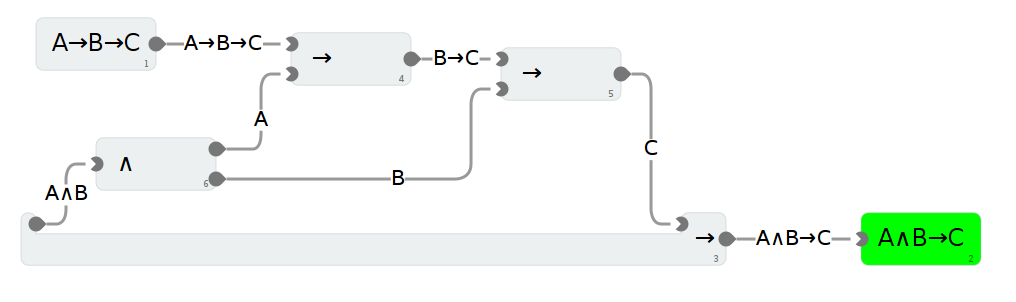

The Incredible Proof Machine is a visual interactive theorem prover: Create

proofs of theorems in propositional, predicate or other, custom defined logics

simply by placing blocks on a canvas and connecting them. You can think of it

as Simulink mangled by the Curry-Howard isomorphism.

It is also an addictive and puzzling game, I have been told.

The Incredible Proof Machine runs completely in your browser. While the UI is

(unfortunately) boring standard JavaScript code with a spagetthi flavor, all

the logical heavy lifting is done with Haskell, and compiled using GHCJS.

Further reading

Exference is a tool aimed at supporting developers writing Haskell code by

generating expressions from a type, e.g.

Input:

(Show b) => (a -> b) -> [a] -> [String]

Output:

\ f1 -> fmap (show . f1)

Input:

(Monad m, Monad n)

=> ([a] -> b -> c) -> m [n a] -> m (n b)

-> m (n c)

Output:

\ f1 -> liftA2 (\ hs i ->

liftA2 (\ n os -> f1 os n) i (sequenceA hs))

The algorithm does a proof search specialized to the Haskell type system. In

contrast to Djinn, the well known tool with the same general purpose,

Exference supports a larger subset of the Haskell type system - most

prominently type classes. The cost of this feature is that Exference makes no

promise regarding termination (because the problem becomes an undecidable one;

a draft of a proof can be found in the pdf below). Of course the

implementation applies a time-out.

There are two primary use-cases for Exference:

- In combination with typed holes: The programmer can insert typed holes

into the source code, retrieve the expected type from ghc and forward this

type to Exference. If a solution, i.e. an expression, is found and if it has

the right semantics, it can be used to fill the typed hole.

- As a type-class-aware search engine. For example, Exference is able to

answer queries such as |Int -> Float|, where the common search engines like

hoogle or hayoo are not of much use.

The current implementation is functional and works well. The most important

aspect that still could use improvement is the performance, but it would

probably take a slightly improved approach for the core algorithm (and thus a

major rewrite of this project) to make significant gains.

The project is actively maintained; apart from occasional bug-fixing and

general maintenance/refactoring there are no major new features planned

currently.

Try it out by on IRC(freenode): exferenceBot is in #haskell and #exference.

Further reading

4.7 Education

4.7.1 Holmes, Plagiarism Detection for Haskell

Holmes is a tool for detecting plagiarism in Haskell programs. A prototype

implementation was made by Brian Vermeer under supervision of Jurriaan Hage,

in order to determine which heuristics work well. This implementation could

deal only with Helium programs. We found that a token stream based comparison

and Moss style fingerprinting work well enough, if you remove template code

and dead code before the comparison. Since we compute the control flow graphs

anyway, we decided to also keep some form of similarity checking of

control-flow graphs (particularly, to be able to deal with certain

refactorings).

In November 2010, Gerben Verburg started to reimplement Holmes keeping only

the heuristics we figured were useful, basing that implementation on

haskell-src-exts. A large scale empirical validation has been made,

and the results are good. We have found quite a bit of plagiarism in a

collection of about 2200 submissions, including a substantial number in which

refactoring was used to mask the plagiarism. A paper has been written, which

has been presented at CSERC’13, and should become available in the ACM Digital

Library.

The tool will be made available through Hackage at some point, but before that

happens it can already be obtained on request from Jurriaan Hage.

Contact

<J.Hage at uu.nl>

4.7.2 Interactive Domain Reasoners

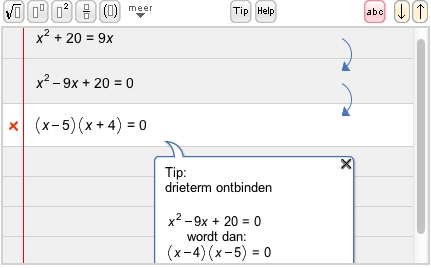

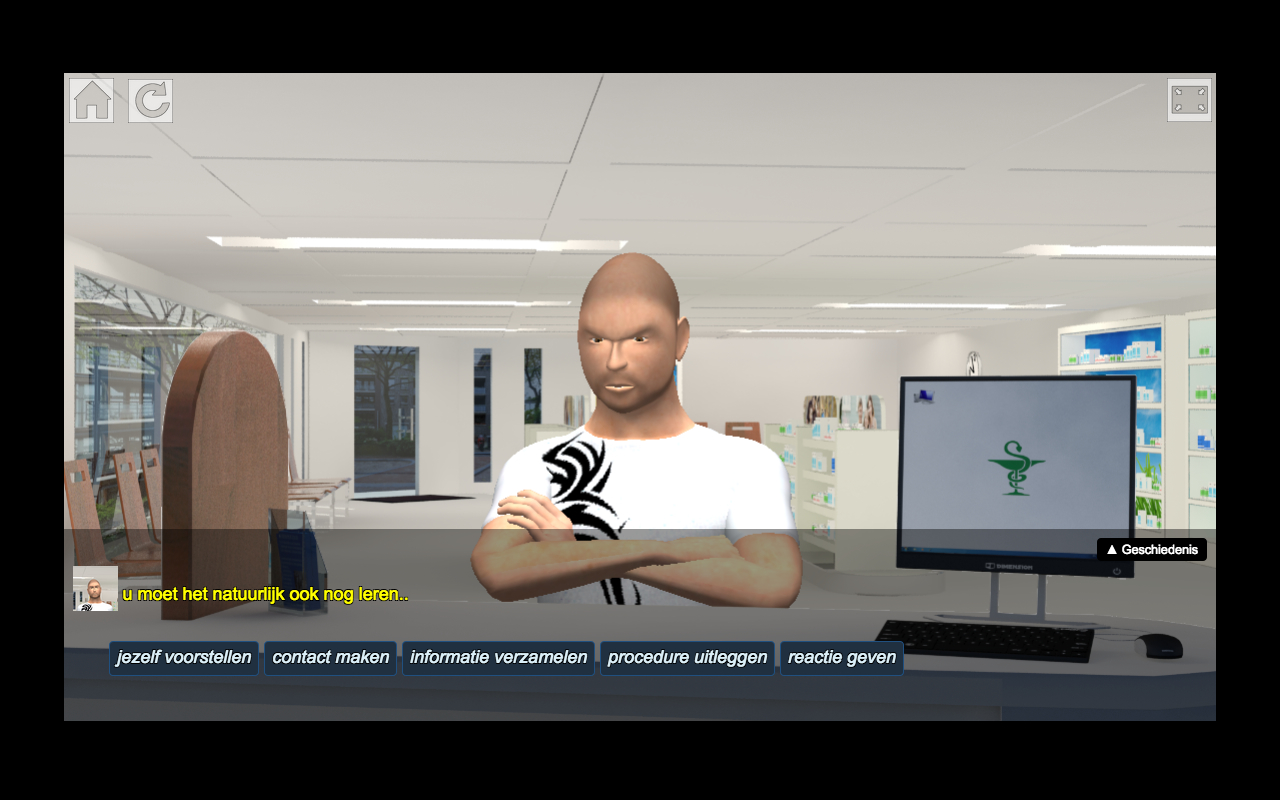

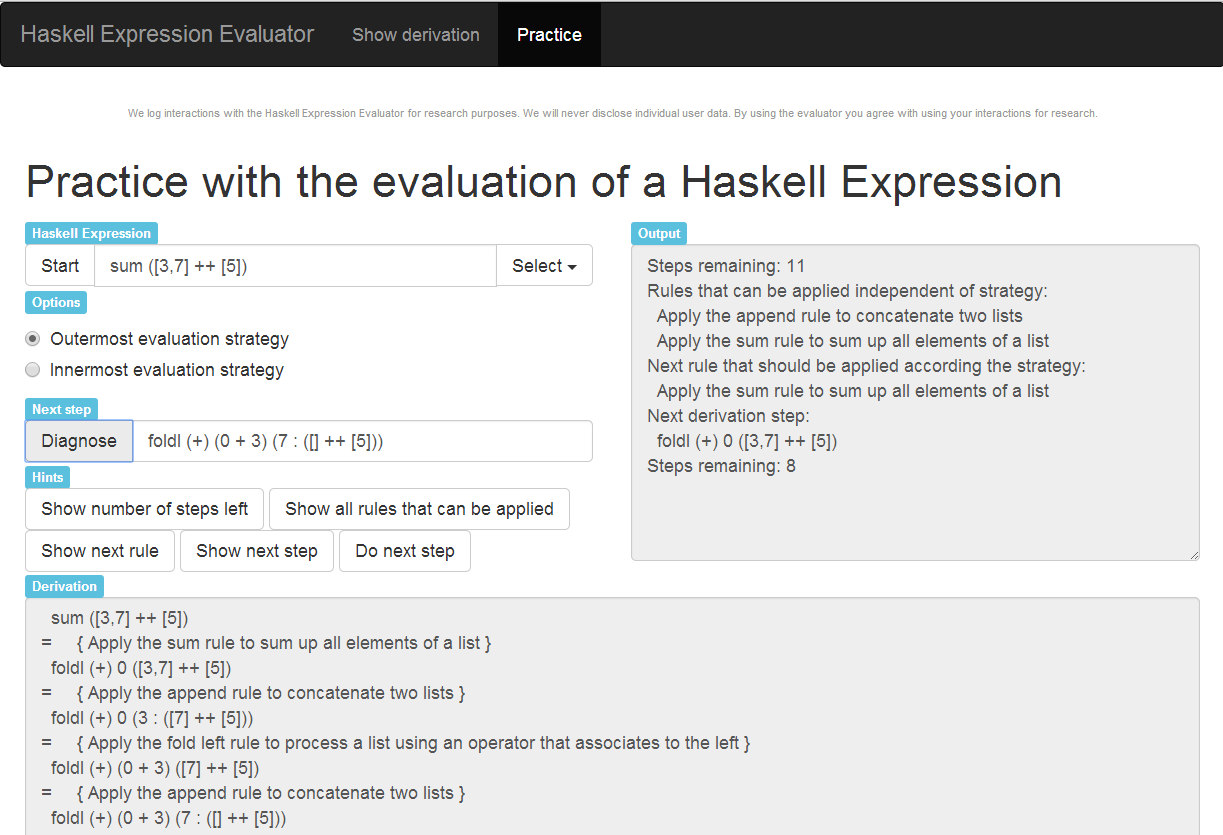

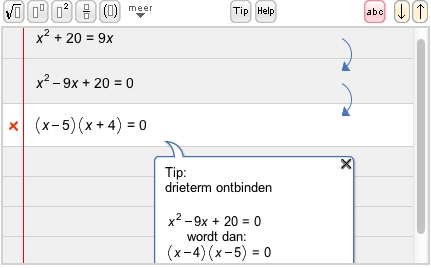

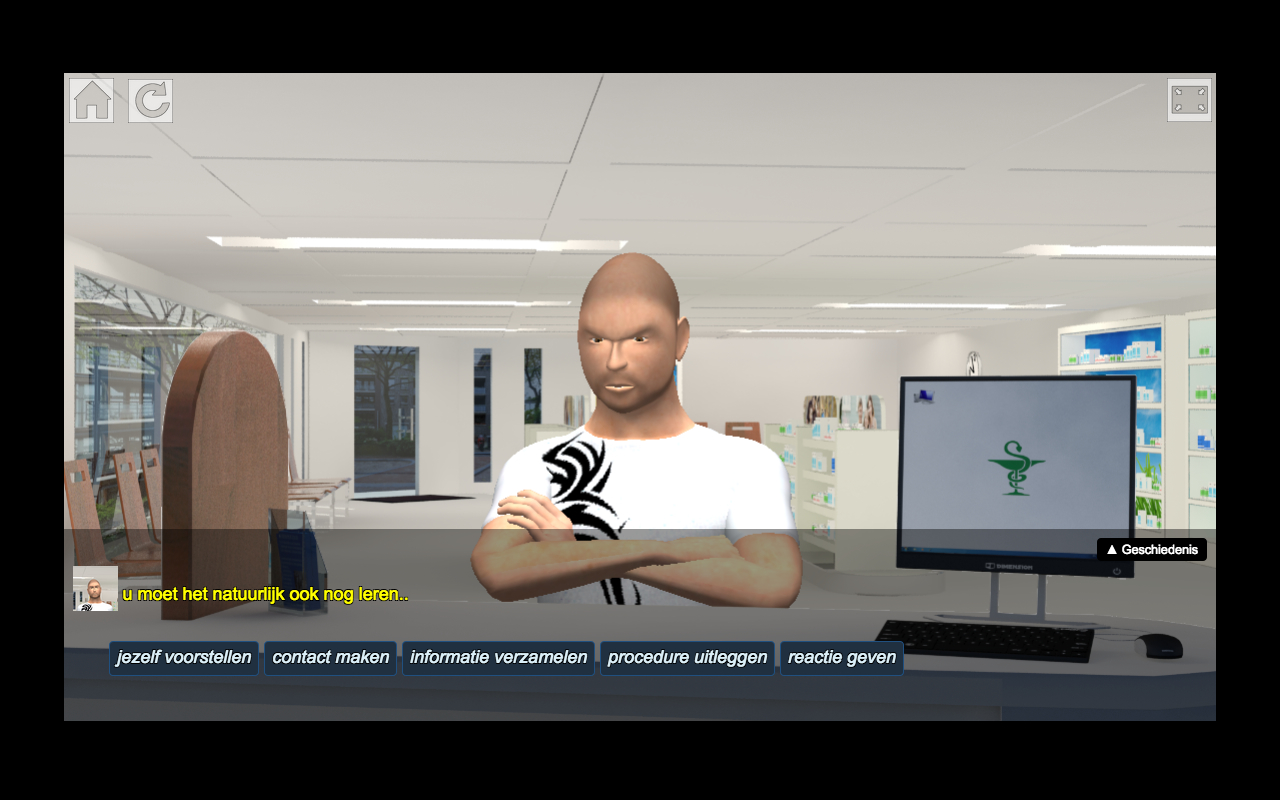

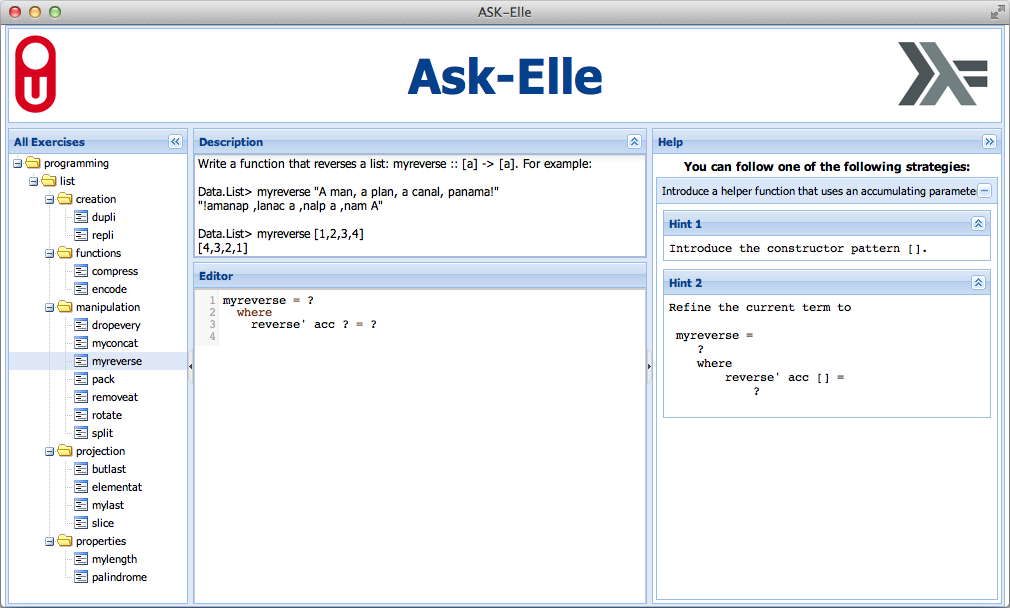

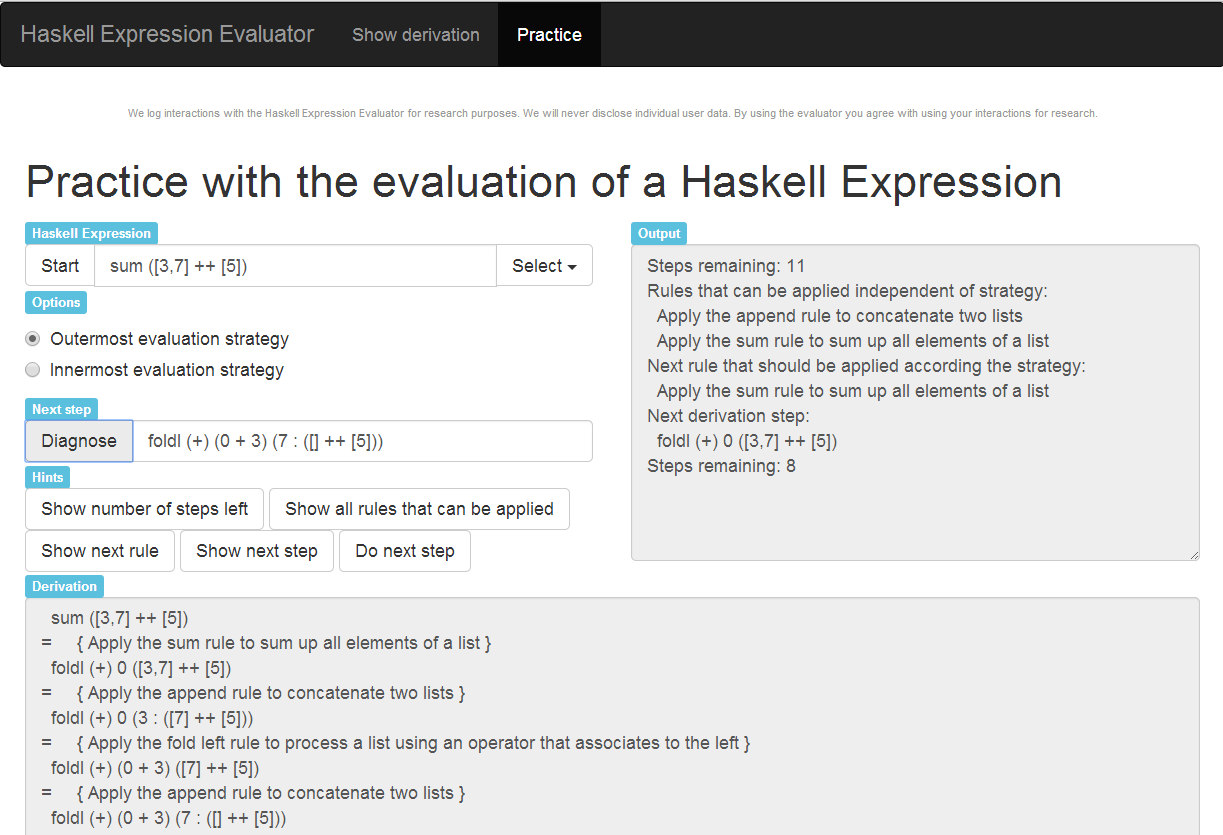

Ideas (Interactive Domain-specific Exercise Assistants) is a joint

research project between the Open University of

the Netherlands and Utrecht University. The project’s goal is to use software

and compiler technology to build state-of-the-art components for intelligent

tutoring systems (ITS), learning environments, and applied games. The

‘ideas’ software package

provides a generic framework for constructing the expert knowledge module

(also known as a domain reasoner) for an ITS or learning environment. Domain

knowledge is offered as a set of feedback services that are used by external

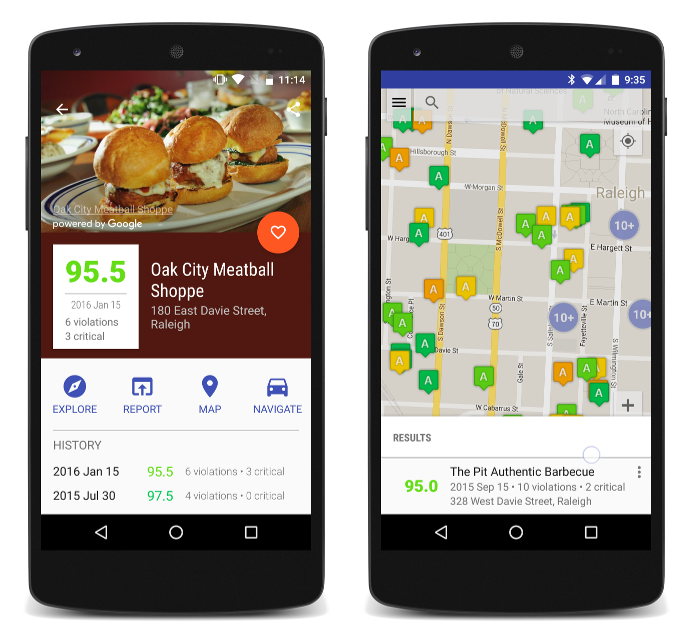

tools such as the digital mathematical environment (first/left screenshot) and

the Math-Bridge system. We have developed several domain reasoners based on

this framework, including reasoners for mathematics, linear algebra,

statistics, propositional logic, for learning Haskell (the Ask-Elle

programming tutor) and evaluating Haskell expressions, and for practicing

communication skills (the serious game

Communicate!,

second/right screenshot).

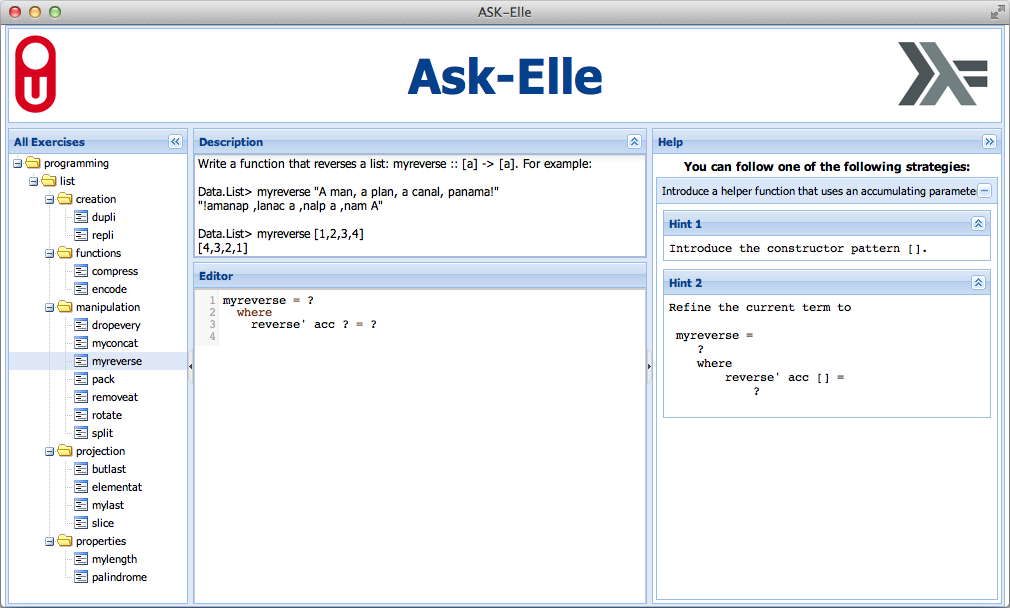

We have continued working on the domain reasoners that are used by our

programming tutors. The Ask-Elle

functional programming tutor lets you practice introductory functional

programming exercises in Haskell. We have extended this tutor with QuickCheck

properties for testing the correctness of student programs, and for the

generation of counterexamples. We have analysed the usage of the tutor to find

out how many student submissions are correctly diagnosed as right or wrong.

We have just started with the Advise-Me project

(Automatic Diagnostics with Intermediate Steps in Mathematics Education),

which is a Strategic Partnership in EU’s Erasmus+ programme. In this project

we develop innovative technology for calculating detailed diagnostics in

mathematics education, for domains such as ‘Numbers’ and ‘Relationships’. The

technology is offered as an open, reusable set of feedback and assessment

services. The diagnostic information is calculated automatically based on the

analysis of intermediate steps.

We are continuing our research in various directions. We are investigating

feedback generation for axiomatic proofs

for propositional logic. We also want to add student models to our framework

and use these to make the tutors more adaptive, and develop authoring tools to

simplify the creation of domain reasoners.

The library for developing domain reasoners with feedback services is

available as a Cabal source

package. We have written a tutorial on

how to make your own domain reasoner with this library. The

domain reasoner for

mathematics and logic has been released as a separate package.

Further reading

- Bastiaan Heeren, Johan Jeuring, and Alex Gerdes.

Specifying

Rewrite Strategies for Interactive Exercises. Mathematics in Computer

Science, 3(3):349–370, 2010.

- Bastiaan Heeren and Johan Jeuring.

Feedback services for

stepwise exercises. Science of Computer Programming, Special Issue on