Haskell Communities and Activities Report

Nineteenth edition – November 2010

Janis Voigtländer (ed.)

Andreas Abel

Robin Adams

Iain Alexander

Krasimir Angelov

Heinrich Apfelmus

Jim Apple

Dmitry Astapov

Christiaan Baaij

Justin Bailey

Alexander Bau

Doug Beardsley

Jean-Philippe Bernardy

Tobias Bexelius

Annette Bieniusa

Mario Blazevic

Anthonin Bonnefoy

Edwin Brady

Gwern Branwen

Joachim Breitner

Erik de Castro Lopo

Roman Cheplyaka

Olaf Chitil

Duncan Coutts

Simon Cranshaw

Nils Anders Danielsson

Dominique Devriese

Daniel Diaz

Larry Diehl

Atze Dijkstra

Jonas Duregard

Marc Fontaine

Patai Gergely

Brett G. Giles

Andy Gill

George Giorgidze

Dmitry Golubovsky

Carlos Gomez

Matthew Gruen

Torsten Grust

Jurriaan Hage

Sönke Hahn

Bastiaan Heeren

Judah Jacobson

Jeroen Janssen

David Himmelstrup

Guillaume Hoffmann

Martin Hofmann

Jasper Van der Jeugt

Farid Karimipour

Oleg Kiselyov

Lennart Kolmodin

Michal Konecny

Eric Kow

Ben Lippmeier

Andres Löh

Tom Lokhorst

Rita Loogen

Ian Lynagh

John MacFarlane

Christian Maeder

José Pedro Magalhães

Ketil Malde

Vivian McPhail

Arie Middelkoop

Ivan Lazar Miljenovic

Neil Mitchell

Dino Morelli

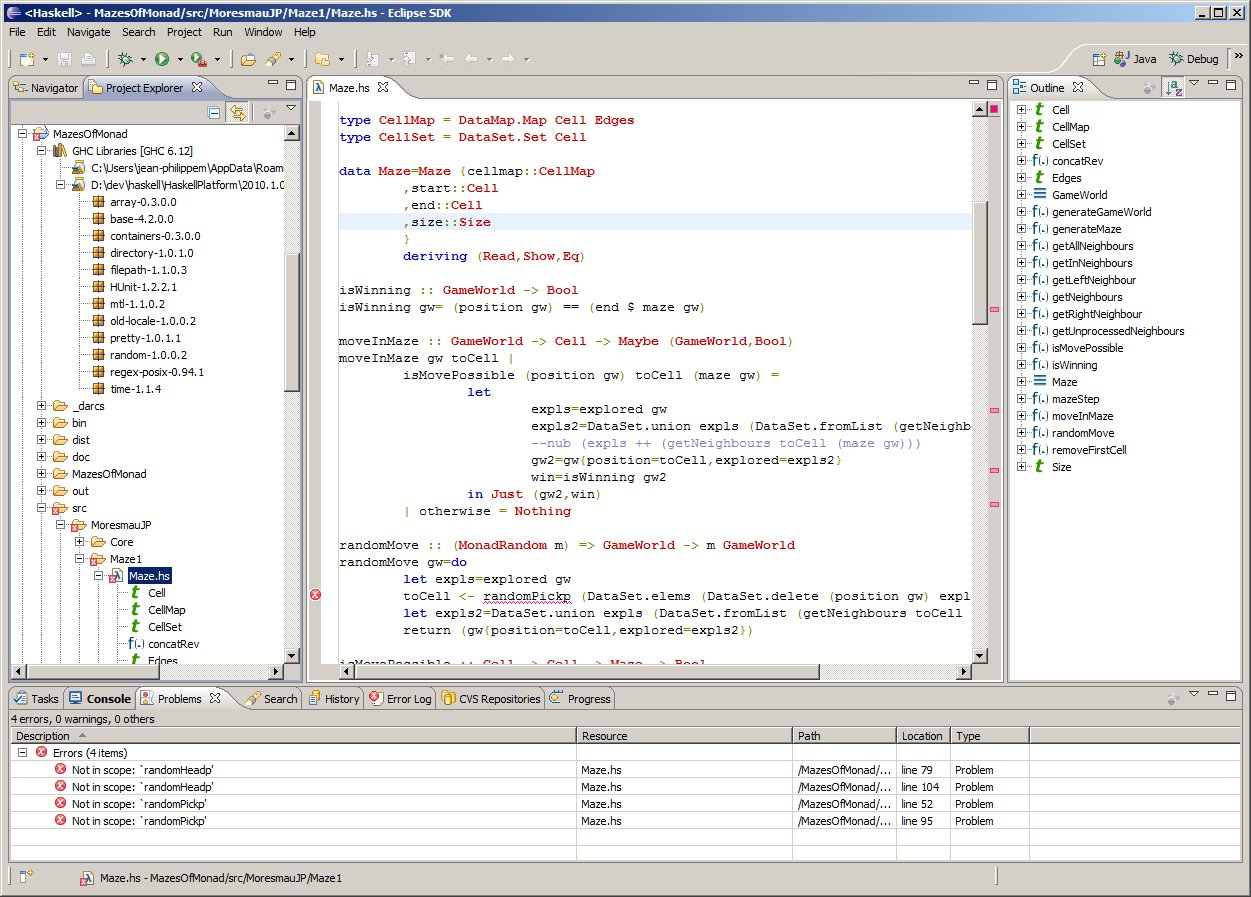

JP Moresmau

Matthew Naylor

Victor Nazarov

Jürgen Nicklisch-Franken

Rishiyur Nikhil

Thomas van Noort

Johan Nordlander

Miguel Pagano

Jens Petersen

Simon Peyton Jones

Bernie Pope

Matthias Reisner

Alberto Ruiz

David Sabel

Antti Salonen

Ingo Sander

Uwe Schmidt

Martijn Schrage

Tom Schrijvers

Jeremy Shaw

Marco Silva

Axel Simon

Michael Snoyman

Will Sonnex

Martijn van Steenbergen

Martin Sulzmann

Doaitse Swierstra

Henning Thielemann

Simon Thompson

Thomas Tuegel

Marcos Viera

Janis Voigtländer

Jan Vornberger

David Waern

Gregory D. Weber

Stefan Wehr

Mark Wotton

Kazu Yamamoto

Brent Yorgey

Preface

This is the 19th edition of the Haskell Communities and Activities Report.

As usual, fresh entries are formatted using a blue background, while updated entries have a header with a blue background.

Entries for which I received a liveness ping, but which have seen no essential update for a while, have been replaced with online pointers to previous versions.

Other entries on which no new activity has been reported for a year or longer have been dropped completely.

Please do revive such entries next time if you do have news on them.

I have restructured the report a bit.

Any feedback on that, or on my further attempts to improve the generation of the html version of the report, or in fact on anything else, would be very welcome.

A call for new entries and updates to existing ones will be issued on the usual mailing lists in April.

Now enjoy the current report and see what other Haskellers have been up to lately.

Janis Voigtländer, University of Bonn, Germany, <hcar at haskell.org>

1 Community

In the beginning of October, Haskellers was launched. It is a site designed to promote Haskell as a language for use in the real world by being a central meeting place for the myriad talented Haskell developers out there. It allows users to create profiles complete with skill sets and packages authored and gives employers a central place to find Haskell professionals.

Though the site is still in its infancy, the response has been staggering. Within a week of its launch, we are now sitting at over 200 active accounts. We are still planning on lots of new features: we may be adding social networking functionality, job postings, user polls, and much more. If you have any ideas, please let me know. And if you are at all involved in the Haskell community, be sure to create a profile.

Further reading

http://www.haskellers.com/

The goal of the Haskell Wikibook project is to build a community textbook about Haskell that is at once free (as in freedom and in beer), gentle, and comprehensive. We think that the many marvelous ideas of lazy functional programming can and thus should be accessible to everyone in a central place. In particular, the Wikibook aims to answer all those conceptual questions that are frequently asked on the Haskell mailing lists.

Everyone, including you, dear reader, is invited to contribute, be it by spotting mistakes and asking for clarifications or by ruthlessly rewriting existing material and penning new chapters.

Thanks to user Duplode, a major reorganization of the introductory chapters is in progress.

Further reading

http://en.wikibooks.org/wiki/Haskell

2 Articles/Tutorials

There are plenty of academic papers about Haskell and plenty of informative pages on the HaskellWiki. Unfortunately, there is not much between the two extremes. That is where The Monad.Reader tries to fit in: more formal than a Wiki page, but more casual than a journal article.

There are plenty of interesting ideas that maybe do not warrant an academic publication, but that does not mean these ideas are not worth writing about! Communicating ideas to a wide audience is much more important than concealing them in some esoteric journal. Even if it has all been done before in the Journal of Impossibly Complicated Theoretical Stuff, explaining a neat idea about “warm fuzzy things” to the rest of us can still be plain fun.

The Monad.Reader is also a great place to write about a tool or application that deserves more attention. Most programmers do not enjoy writing manuals; writing a tutorial for The Monad.Reader, however, is an excellent way to put your code in the limelight and reach hundreds of potential users.

Since the last HCAR there has been one new issue, featuring an article on combinators for automata, an iteratee tutorial, and an exploration of priority queue implementations. The next issue will be published in November.

Further reading

http://themonadreader.wordpress.com/

2.2 Oleg’s Mini Tutorials and Assorted Small Projects

The collection of various Haskell mini tutorials and assorted small projects (http://okmij.org/ftp/Haskell/) has received two additions:

Eliminating Existentials

The web page demonstrates various ways of eliminating explicit existential quantification in data types, replacing such data types with isomorphic simple, first-order types. Although such a replacement is of most use in languages like SML without direct support for existentials, the technique may simplify Haskell programs as well, reducing their reliance on non-standard extensions. The web page uses Haskell extensively to explain the technique and to demonstrate its correctness, by writing isomorphisms between existentials and simple-type representations.

The most interesting case was the elimination of a translucent existential, which exposed part of its structure, being a list. The type of the elements of the list is opaque. Eliminating such an existential required nested data types.

The web page discusses several ways of collecting values of different types in the same list, stressing open unions implemented with and without existentials. Implicitly heterogeneous lists without existentials offer no value abstraction, let alone type abstraction. On the upside, these open unions support a projection operation, or safe downcast.

http://okmij.org/ftp/Computation/Existentials.html
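One way to picture such an elimination is the following minimal sketch. It is not taken from the web page: the `Counter` type, its plain counterpart `Counter'`, and all function names are hypothetical, chosen only to illustrate how an existential package of hidden state plus observers might be replaced by a lazily built first-order type that records the observations themselves.

```haskell
{-# LANGUAGE ExistentialQuantification #-}

-- An existential package: a hidden state type s, together with the
-- only operations that can advance or observe it.
data Counter = forall s. MkCounter s (s -> s) (s -> Int)

-- An existential-free counterpart (hypothetical): a counter is its
-- current reading plus the counter obtained after one step.
data Counter' = MkCounter' { reading :: Int, step :: Counter' }

-- One direction of the correspondence: apply the observers up front.
-- Laziness keeps the infinite unfolding from being computed eagerly.
toPlain :: Counter -> Counter'
toPlain (MkCounter s next get) = go s
  where
    go st = MkCounter' (get st) (go (next st))

-- The other direction: the plain value serves as its own hidden state.
fromPlain :: Counter' -> Counter
fromPlain c = MkCounter c step reading

-- Observe the first n readings of a plain counter.
readings :: Int -> Counter' -> [Int]
readings n = map reading . take n . iterate step
```

For instance, `readings 3 (toPlain (MkCounter (0 :: Int) (+ 2) (* 10)))` evaluates to `[0,20,40]`. The two conversions are inverse only up to what the observers can see, which is all a client of the existential could distinguish anyway.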

Type-class overloaded functions: second-order typeclass programming with backtracking

We describe functions polymorphic over classes of types. Each instance of such a (2-polymorphic) function uses ordinary 1-polymorphic methods to generically process values of many types, the members of that 2-instance type class. The typeclass constraints are thus manipulated as first-class entities. We also show how to write typeclass instances with back-tracking: if one instance does not apply, the typechecker will choose the ‘next’ instance, in the precise meaning of ‘next’.

We show a method to describe classes of types in a concise way: instead of the exhaustive enumeration of class members, we use unions, class differences, and unrestricted comprehension. These classes of types may be either closed or open (extensible). After the classes are defined, we can write arbitrarily many functions overloaded over these type classes. An instance of our function for a specific type class may use polymorphic functions to generically process all members of that type class. Our functions are hence second-order polymorphic.

http://okmij.org/ftp/Haskell/types.html#poly2

The “Haskell Cheat Sheet” covers the syntax, keywords, and other language elements of Haskell 98. Beginning to intermediate Haskell programmers should find it useful; it can even serve as a memory aid for experts.

The cheat sheet can be downloaded directly from http://cheatsheet.codeslower.com or installed using cabal (cabal install cheatsheet). Spanish and Japanese translations of the cheat sheet can be found on the web site as well.

Further reading

http://cheatsheet.codeslower.com

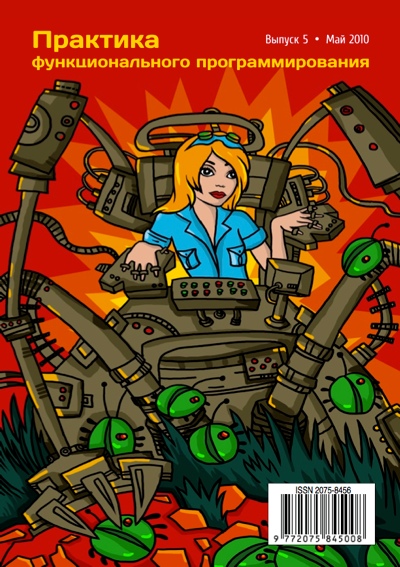

2.4 Practice of Functional Programming

“Practice of Functional Programming” is a Russian electronic magazine promoting functional programming. The magazine features articles that cover both theoretical and practical aspects of the craft. Most of the already published material is directly related to Haskell.

The magazine attempts to keep a bi-monthly release schedule, with Issue #7 slated for release early in 2011.

Full contents of current and past issues are available in PDF from the official site of the magazine free of charge.

Articles are in Russian, with English annotations.

Further reading

http://fprog.ru/ for issues ##1–6

3 Implementations

Background

The Haskell Platform (HP) is the name of the “blessed” set of libraries and tools on which to build further Haskell libraries and applications. It takes a core selection of packages from the more than 2500 on Hackage (→6.7.1). It is intended to provide a comprehensive, stable, and quality tested base for Haskell projects to work from.

Historically, GHC shipped with a collection of packages under the name extralibs. Since GHC 6.12 the task of shipping an entire platform has been transferred to the Haskell Platform.

Recent progress

During the summer we had the second major release of the platform. This is the 2010.2.0.x release series. While there were no new packages included in this major release, there have been a few significant upgrades including QuickCheck version 2, the latest versions of the ‘regex-*’ packages and of course GHC 6.12.x.

Looking forward

Major releases take place on a 6 month cycle. The next major release will be in January 2011 and — barring any major problems — will include GHC 7.0.x.

This is the first round where we have started to use the new procedure for adding packages. There were two proposals: one to add the ‘text’ package and another for a major update to the ‘mtl’ library. At the time of writing the final decision has not been made on whether these proposals will be accepted for this round.

For the following major release, we would like to invite package authors to propose new packages. We also invite the rest of the community to take part in the review process on the libraries mailing list libraries@haskell.org. The procedure involves writing a package proposal and discussing it on the mailing list with the aim of reaching a consensus. Details of the procedure are on the development wiki.

Further reading

http://haskell.org/haskellwiki/Haskell_Platform

3.2 The Glasgow Haskell Compiler

GHC is humming along. We are currently deep into the release cycle for GHC 7.0. We have finally bumped the major version number, because GHC 7.0 has quite a bit of new stuff:

- As long promised, Simon PJ and Dimitrios have spent a good chunk of the summer doing a complete rewrite of the constraint solver in the type inference engine. Because of GHC’s myriad type-system extensions, especially GADTs and type families, the old engine had begun to resemble the final stages of a game of Jenga. It was a delicately-balanced pile of blocks that lived in constant danger of complete collapse, and had become extremely difficult to modify (or even to understand). The new inference engine is much more modular and robust; it is described in detail in our paper [OutsideIn]. A blog post describes some consequential changes to let generalisation [LetGen].

As a result we have closed dozens of open type inference bugs, especially related to GADTs and type families.

- There is a new, robust implementation of INLINE pragmas that behaves much more intuitively. GHC now captures the original RHS of an INLINE function, and keeps it more-or-less pristine, ready to inline at call sites. Separately, the original RHS is optimised in the usual way. Suppose you say

{-# INLINE f #-}

f x = ...blah...

g1 y = f y + 1

g2 ys = map f ys

Here, f will be inlined into g1 as you would expect, but obviously not into g2 (since it is not applied to anything). However f’s right hand side will be optimised (separately from the copy retained for inlining) so that the call from g2 runs optimised code.

There is a raft of other small changes to the optimisation pipeline too. The net effect can be dramatic: Bryan O’Sullivan reports some five-fold (!) improvements in his text-equality functions, and concludes "The difference between 6.12 and 7 is so dramatic, there’s a strong temptation for me to say ’wait for 7!’ to people who report weaker than desired performance."

- David Terei implemented a new back end for GHC using LLVM. In certain situations using the LLVM backend can give fairly substantial performance improvements to your code, particularly if you are using the Vector libraries, DPH or making heavy use of fusion. In the general case it should give as good performance or slightly better than GHC’s native code generator and C backend. You can use it through the -fllvm compiler flag. More details of the backend can be found in David’s and Manuel Chakravarty’s Haskell Symposium paper [Llvm].

- Bryan O’Sullivan and Johan Tibell implemented a new, highly-concurrent I/O manager. GHC now supports over a hundred thousand open I/O connections. The new I/O manager defines a separate backend per operating system, using the most efficient system calls for that particular operating system (e.g., epoll on Linux). This means that GHC can now be used to implement servers that make use of, e.g., HTTP long polling, where the server needs to handle a large number of open idle connections.

- In joint work with Phil Trinder and his colleagues at Heriot-Watt, Simon M designed and implemented a new parallel strategies library, described in their 2010 Haskell Symposium paper [Seq].

- As reported in the previous status update, the runtime system has undergone substantial changes to the implementation of lazy evaluation in parallel, particularly in the way that threads block and wake up again. Certain benchmarks show significant improvements, and some cases of wildly unpredictable behaviour when using large numbers of threads are now much more consistent.

- The API for asynchronous exceptions has had a redesign. Previously the combinators block and unblock were used to prevent asynchronous exceptions from striking during critical sections, but these had some serious disadvantages, particularly a lack of modularity where a library function could unblock asynchronous exceptions despite a prevailing block. The new API closes this loophole, and also changes the terminology: preventing asynchronous exceptions is now called "masking", and the new combinator is mask. See the documentation for the new API in Control.Exception for more details.
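The modularity point of the new masking API is easiest to see in a resource-handling helper. The following is a minimal sketch of a bracket-style function written with `mask` from `Control.Exception`; the names `withResource` and `releaseCount` are hypothetical and exist only for this illustration. The function passed to `mask` receives a `restore` action that returns to the masking state of the caller rather than unconditionally unmasking, so the helper still behaves when it is itself called inside a masked region.

```haskell
import Control.Exception (mask, onException)
import Data.IORef (modifyIORef', newIORef, readIORef)

-- | A hand-rolled variant of 'bracket' (hypothetical name).
-- Asynchronous exceptions are masked while acquiring and releasing;
-- 'restore' runs the body in the masking state of the caller.
withResource :: IO a -> (a -> IO ()) -> (a -> IO b) -> IO b
withResource acquire release use =
  mask $ \restore -> do
    r <- acquire
    -- If the body throws, release the resource and rethrow.
    result <- restore (use r) `onException` release r
    -- Normal exit: release exactly once.
    release r
    return result

-- | Hypothetical demo: count how often the release action runs.
releaseCount :: IO Int
releaseCount = do
  released <- newIORef (0 :: Int)
  _ <- withResource (return "handle")
                    (\_ -> modifyIORef' released (+ 1))
                    (\h -> return (length h))
  readIORef released
```

Running `releaseCount` in GHCi returns 1. In real code the library function `bracket` is the better choice; the sketch only spells out what the masking combinator contributes.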

We are fortunate to have a growing team of people willing to roll up their sleeves and help us with GHC. Amongst those who have got involved recently are:

- Daniel Fischer, who worked on improving the performance of the numeric libraries.

- Milan Straka, for great work improving the performance of the widely-used containers package [Containers].

- Greg Wright is leading a strike team to make GHC work better on Macs, and has fixed the RTS linker so that GHCi will now work in 64-bit mode on OS X.

- Evan Laforge, who has taken on some of the long-standing issues with the Mac installer.

- Sam Anklesaria implemented full import syntax for GHCi, and rebindable syntax for conditionals.

- PHO, who improved the OS X support.

- Sergei Trofimovich, who has fixed GHC on some less common Linux platforms.

- Marco Tulio Gontijo e Silva, who has been working on the RTS.

- Matthias Kilian, who has been working on *BSD support.

- Dave Peixotto, who has improved the PAPI support.

- Edward Z. Yang, who has implemented interruptible FFI calls.

- Reiner Pope, who added view patterns to Template Haskell.

- Gabor Pali, who added thread affinity support for FreeBSD.

- Bas van Dijk has been improving the exceptions API.

At GHC HQ we are having way too much fun; if you wait for us to do something you have to wait a long time. So do not wait; join in!

Language developments, especially types

GHC continues to act as an incubator for interesting new language developments.

Here is a selection that we know about:

- José Pedro Magalhães is implementing the derivable type classes mechanism (→6.4.1) described in his 2010 Haskell Symposium paper [Derivable]. I plan for this to replace GHC’s current derivable-type-class mechanism, which has a poor power-to-weight ratio and is little used.

- Stephanie Weirich and Steve Zdancewic had a great sabbatical year at Cambridge. One of the things we worked on, with Brent Yorgey who came as an intern, was to close the embarrassing hole in the type system concerning newtype deriving (see Trac bug #1496). I have delayed fixing until I could figure out a Decent Solution, but now we know; see our 2011 POPL paper [Newtype]. Brent is working on some infrastructural changes to GHC’s Core language, and then we will be ready to tackle the main issue.

- Next after that is a mechanism for promoting types to become kinds, and data constructors to become types, so that you can do typed functional programming at the type level. Conor McBride’s SHE prototype is the inspiration here [SHE]. Currently it is, embarrassingly, essentially untyped.

- Template Haskell seems to be increasingly widely used. Simon PJ has written a proposal for a raft of improvements, which we plan to implement in the new year [TemplateHaskell].

- Iavor Diatchki plans to add numeric types, so that you can have a type like Bus 8, and do simple arithmetic at the type level. You can encode this stuff, but it is easier to use and more powerful to do it directly.

- David Mazieres at Stanford wants to implement Safe Haskell, a flag for GHC that will guarantee that your program does not use unsafePerformIO, foreign calls, RULES, and other stuff.
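To give a feel for the kind of encoding that built-in numeric types (the "Bus 8" item above) would replace, here is a minimal sketch of a width-indexed `Bus` type using Peano-style type-level numbers and a type class. All names (`Zero`, `Succ`, `Width`, `mkBus`, `Two`) are hypothetical, and this is not Iavor Diatchki's design; it only illustrates why writing `Bus 8` directly would be more convenient than spelling out eight applications of `Succ`.

```haskell
{-# LANGUAGE ScopedTypeVariables #-}

-- Type-level Peano numbers: these types have no values.
data Zero
data Succ n

-- Recover the number encoded in a type as an ordinary Int.
-- The argument is only a proxy; its value is never inspected.
class Width n where
  width :: proxy n -> Int

instance Width Zero where
  width _ = 0

instance Width n => Width (Succ n) where
  width _ = 1 + width (Nothing :: Maybe n)

-- A bus whose width is tracked in its type (phantom parameter n).
newtype Bus n = Bus [Bool]
  deriving (Eq, Show)

type Two = Succ (Succ Zero)

-- Smart constructor: pad with False or truncate to the type's width.
mkBus :: forall n. Width n => [Bool] -> Bus n
mkBus bits = Bus (take w (bits ++ repeat False))
  where
    w = width (Nothing :: Maybe n)
```

With these definitions, `mkBus [True] :: Bus Two` yields `Bus [True,False]`. Arithmetic on widths would need further classes or type families on top of this, which is the boilerplate that direct support is meant to remove.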

7.0 also has support for the Haskell 2010 standard, and the libraries that it specifies.

Packages and the runtime system

- Simon Marlow is working on a new garbage collector that is designed to improve scaling of parallel programs beyond small numbers of cores, by allowing each processor core to collect its own local heap independently of the other cores. Some encouraging preliminary results were reported in a blog post. Work on this continues; the complexity of the system and the number of interacting design choices means that achieving an implementation that works well in a broad variety of situations is proving to be quite a challenge.

- The "new back end" is still under construction. This is a rewrite of the part of GHC that turns STG syntax into C--, i.e., the bit between the Core optimisation passes and the native code generator. The rewrite is based on [Hoopl], a data-flow optimisation framework. Ultimately this rewrite should enable better code generation. The new code generator is already in GHC, but turned off by default; you get it with the flag -fuse-new-codegen. Do not expect to get better code with this flag yet!

The Parallel Haskell Project

Microsoft Research is funding a 2-year project to develop the real-world use of parallel Haskell. The project has recently kicked off with four industrial partners, with consulting and engineering support from Well-Typed (→10.1). Each organisation is working on its own particular project making use of parallel Haskell. The overall goal is to demonstrate successful serious use of parallel Haskell, and along the way to apply engineering effort to any problems with the tools that the organisations might run into.

We will shortly be announcing more details about the partner organisations and their projects. For the most part the projects are scientific and focus on single-node SMP systems, though one of the partners is working on network servers and another partner is very interested in clusters. In collaboration with Bernie Pope, the first tangible results from the project will be a new MPI binding (→5.1.2), which will appear on hackage shortly.

Progress on the project will be reported to the community. Since there are now multiple groups in the community that are working on parallelism, the plan is to establish a parallel Haskell website and mailing list to provide visibility into the various efforts and to encourage collaboration.

Data Parallel Haskell

Since the last report, we have continued to improve support for nested parallel divide-and-conquer algorithms. We started with QuickHull and are now working on an implementation of the Barnes-Hut n-body algorithm. The latter is not only significantly more complex, but also requires the vectorisation of recursive tree data-structures, going well beyond the capabilities of conventional parallel-array languages. In time for the stable branch of GHC 7.0, we replaced the old, per-core sequential array infrastructure (which was part of the sub-package dph-prim-seq) by the vector package — vector started its life as a next-generation spin off of dph-prim-seq, but now enjoys significant popularity independent of DPH.

The new handling of INLINE pragmas as well as other changes to the Simplifier improved the stability of DPH optimisations (and in particular, array stream fusion) substantially. However, the current candidate for GHC 7.0.1 still contains some performance regressions that affect the DPH and Repa libraries and to avoid holding up the 7.0.1 release, we decided to push fixing these regressions to GHC 7.0.2. More precisely, we are planning a release of DPH and Repa that is suitable for use with GHC 7.0 for the end of the year, to coincide with the release of GHC 7.0.2. From GHC 7.0 onwards, the library component of DPH will be shipped separately from GHC itself and will be available to download and install from Hackage as for other libraries.

To catch DPH performance regressions more quickly in the future, Ben Lippmeier implemented a performance regression testsuite that we run nightly on the HEAD. The results can be enjoyed on the GHC developer mailing list.

Sadly, Roman Leshchinskiy has given up his full-time engagement with DPH to advance the use of Haskell in the financial industry. We are looking forward to collaborating remotely with him.

Installers

The GHC installers have also received some attention for this release.

The Windows installer includes a much more up-to-date copy of the MinGW system, which in particular fixes a couple of issues on Windows 7. Thanks to Claus Reinke, the installer also allows more control over the registry associations etc.

Meanwhile, the Mac OS X installer has received some attention from Evan Laforge. Most notably, it is now possible to install different versions of GHC side-by-side.

Bibliography

- [Containers] "The performance of the Haskell containers package", Straka, Haskell Symposium 2010.

- [Derivable] "A generic deriving mechanism for Haskell", Magalhães, Dijkstra, Jeuring and Löh, Haskell Symposium 2010.

- [LetGen] "Let generalisation in GHC 7.0", Peyton Jones, blog post Sept 2010.

- [Newtype] "Generative Type Abstraction and Type-level Computation", Weirich, Zdancewic, Vytiniotis, and Peyton Jones, POPL 2011.

- [Llvm] "An LLVM Backend for GHC", Terei and Chakravarty, Haskell Symposium 2010.

- [OutsideIn] "Modular type inference with local assumptions: OutsideIn(X)", Dimitrios Vytiniotis, Simon Peyton Jones, Tom Schrijvers, and Martin Sulzmann, Draft.

- [Seq] "Seq no more", Marlow, Maier, Trinder, Loidl, and Aswad, Haskell Symposium 2010.

- [SHE] "The Strathclyde Haskell Enhancement", Conor McBride, 2010.

- [TemplateHaskell] "New directions for Template Haskell", Peyton Jones, blog post October 2010.

- [Hoopl] "Hoopl: A Modular, Reusable Library for Dataflow Analysis and Transformation".

LHC is a backend for the Glorious Glasgow Haskell Compiler (→3.2), adding low-level, whole-program optimization to the system. It is based on Urban Boquist’s GRIN language, and using GHC as a frontend, we get most of its great extensions and features.

Essentially, LHC uses the GHC API to convert programs to external core format — it then parses the external core, and links all the necessary modules together into a whole program for optimization. We currently have our own base library (heavily and graciously taken from GHC). This base library is similar to GHC’s (module-names and all), and it is compiled by LHC into external core and the package is stored for when it is needed. This also means that if you can output GHC’s external core format, then you can use LHC as a backend.

The short-term goal is to make LHC faster, easier to use, and more complete in its coverage of Haskell 98.

Further reading

3.5 UHC, Utrecht Haskell Compiler

What is new?

UHC is the Utrecht Haskell Compiler, supporting almost all Haskell98 features and most of Haskell2010, plus experimental extensions.

Recently version 1.1.0 was released, featuring generic deriving (→6.4.1), a new configurable garbage collector, initial support for building with UHC via cabal, and many bug fixes.

We plan the next release to offer a Javascript backend.

Furthermore we hope to add optimizations to eliminate some of the obvious inefficiencies.

As part of this work the intent is also to better integrate the work done on whole program analysis.

UHC Blog

Recently a UHC blog has been started.

The intent is to scribble about the internals of UHC and issues arising out of the implementation.

What do we currently do and/or has recently been completed?

As part of the UHC project, the following (student) projects and other activities are underway (in arbitrary order):

- Jeroen Bransen (PhD): “Incremental Global Analysis” (starting up).

- Jan Rochel (PhD): “Realising Optimal Sharing”, based on work by Vincent van Oostrum and Clemens Grabmayer.

- Arie Middelkoop (PhD): type system formalization and automatic generation from type rules.

- Tom Lokhorst: type based static analyses (recently completed, available with proper configuration).

- Jeroen Leeuwestein: incrementalization of whole program analysis.

- Atze van der Ploeg: lazy closures (recently completed).

- Paul van der Ende: garbage collection & LLVM (recently completed, available with proper configuration).

- Jeroen Fokker: GRIN backend, whole program analysis.

- Calin Juravle: base libraries (completed up to Haskell98, integrated).

- Levin Fritz: base libraries for Java backend (completed, integrated).

- Andres Löh: Cabal support (completed initial support, integrated).

- José Pedro Magalhães: generic deriving (→6.4.1) (completed, integrated, presented at Haskell Symposium).

- Doaitse Swierstra: parser combinator library.

- Atze Dijkstra: overall architecture, type system, bytecode interpreter + java + javascript backend, garbage collector.

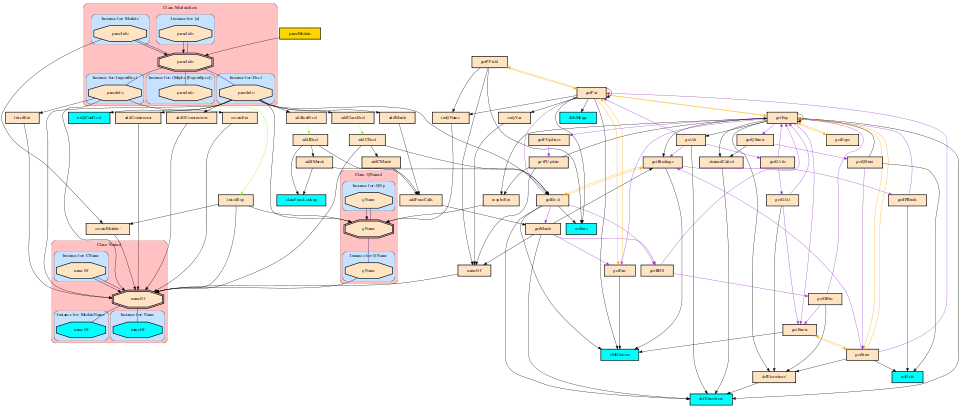

Background

UHC actually is a series of compilers of which the last is UHC, plus infrastructure for facilitating experimentation and extension. The distinguishing features for dealing with the complexity of the compiler and for experimentation are (1) its stepwise organisation as a series of increasingly complex standalone compilers, the use of DSLs and tools for its (2) aspectwise organisation (called Shuffle) and (3) tree-oriented programming (Attribute Grammars, by way of the Utrecht University Attribute Grammar (UUAG) system (→5.4.1)).

Further reading

3.6 Exchanging Sources between Clean and Haskell

In a Haskell’10 paper we describe how we facilitate the exchange of sources between Clean (→4.4) and Haskell.

We use the existing Clean compiler as starting point, and implement a double-edged front end for this compiler: it supports both standard Clean 2.1 and (currently a large part of) standard Haskell 98. Moreover, it allows both languages to seamlessly use many of each other’s language features that were alien to each other before.

For instance, Haskell can now use uniqueness typing anywhere, and Clean can use newtypes efficiently.

This has given birth to two new dialects of Clean and Haskell, dubbed Clean* and Haskell*.

Measurements of the performance of the new compiler indicate that it is on par with the flagship Haskell compiler GHC.

Future plans

Although the most important features of Haskell 98 have been implemented, the list of remaining issues is still rather long since some features took much more work than expected. Also, to enable the practical reuse of Haskell libraries, we have to implement some of GHC’s extensions, such as generalised algebraic datatypes and type families. This is challenging, not only in terms of the programming effort, but more because of the consequences it will have on features such as uniqueness typing. We plan to use this double-edged front end as an implementation laboratory to investigate these avenues.

Further reading

- John van Groningen, Thomas van Noort, Peter Achten, Pieter Koopman, and Rinus Plasmeijer. Exchanging sources between Clean and Haskell: A double-edged front end for the Clean compiler. In Jeremy Gibbons, editor, Proceedings of the Haskell Symposium, Haskell ’10, Baltimore, MD, USA, pages 49–60. ACM Press, 2010.

- The front end is under active development; current releases are available via http://wiki.clean.cs.ru.nl/Download_Clean.

The Reduceron is a graph-reduction processor implemented on an FPGA. Over the past 18 months, work on the Reduceron has led to a factor of five speed-up. This has been achieved through a range of design improvements spanning architectural, machine, and compiler-level issues. See our ICFP’10 paper for details.

Work on the Reduceron continues. We have taken a step towards parallel reduction in the form of primitive redex speculation. We have developed a static analysis and transformation (currently limited to first-order programs) that predicts and increases run-time occurrences of primitive redexes, allowing a simpler and faster machine design. Early results look good, and we hope to extend the technique to higher-order programs.

Experiments in verification, both at the compiler level and the bytecode level, are also underway.

Looking ahead, we aim eventually to have multiple Reducerons running in parallel. We are also interested in increasing the amount of memory available to the Reduceron, and in technology advances that may enable faster clocking frequencies.

Two main by-products have emerged from the work. First, York Lava, now available from Hackage, is the HDL we use. It is very similar to Chalmers Lava (→11.6), but supports a greater variety of primitive components, behavioral description, number-parameterized types, and a first attempt at a Lava prelude. Second, F-lite is our subset of Haskell, with its own lightweight toolset and experimental supercompiler (http://haskell.org/communities/11-2009/html/report.html#sect4.1.4).

Further reading

3.8 Specific Platforms

3.8.1 Debian Haskell Group

The Debian Haskell Group aims to provide an optimal Haskell experience

to users of the Debian GNU/Linux distribution and derived distributions

such as Ubuntu. We try to follow the Haskell Platform versions for the

core package and package a wide range of other useful libraries and

programs. In total, we maintain 202 source packages.

A system of virtual package names and dependencies, based on the ABI

hashes, guarantees that a system upgrade will leave all installed

libraries usable. Most libraries are also optionally available with

profiling data, and the documentation packages register with the

system-wide index.

Currently, we are in the process of releasing the next version of

Debian, squeeze, so the updating rate has slowed. Once this is done, we

will bring our versions up to date. This will also require some work to

rename the packages from libghc6- to libghc-, as the next version of GHC

has a new major version number.

Further reading

http://wiki.debian.org/Haskell

3.8.2 Haskell in Gentoo Linux

Gentoo Linux currently officially supports GHC 6.10.4, including the

latest Haskell Platform (→3.1) for x86, amd64, sparc,

and ppc64. For previous GHC versions we also have binaries available

for alpha, hppa and ia64.

The full list of packages available through the official repository

can be viewed at

http://packages.gentoo.org/category/dev-haskell?full_cat.

The GHC architecture/version matrix is available at

http://packages.gentoo.org/package/dev-lang/ghc.

Please report problems in the normal Gentoo bug tracker

at bugs.gentoo.org.

We have also recently started an official Gentoo Haskell blog, where we

tell our users what we are doing:

http://gentoohaskell.wordpress.com/.

There is also an overlay which contains more than 300 extra unofficial

and testing packages. Thanks to the Haskell developers using Cabal and

Hackage (→6.7.1), we have been able to write a tool called

“hackport” (initiated by Henning Günther) to generate Gentoo

packages with minimal user intervention. Notable packages in the

overlay include the latest version of the Haskell Platform, the

latest 6.12.2 release of GHC, and popular Haskell packages

such as pandoc (→9.2.3) and gitit (→5.2.5).

More information about the Gentoo Haskell Overlay can be found at

http://haskell.org/haskellwiki/Gentoo. Using Darcs (→6.5.1),

it is easy to keep up to date, to submit new packages, and to fix any

problems in existing packages. It is also available via the Gentoo

overlay manager “layman”. If you choose to use the overlay, then

any problems should be reported on IRC (#gentoo-haskell on

freenode), where we coordinate development, or via email

<haskell at gentoo.org> (as we have more people with the ability to

fix the overlay packages that are contactable in the IRC channel than

via the bug tracker).

Through recent efforts we have developed a tool called

“haskell-updater”

http://www.haskell.org/haskellwiki/Gentoo#haskell-updater

(initiated by Ivan Lazar Miljenovic). It replaces the

old ghc-updater script for rebuilding packages when a new

version of GHC is installed. The new tool is not only written in Haskell

but also rebuilds broken packages. “haskell-updater” is still

in active development to further refine and add to its features and

capabilities.

As always we are more than happy for (and in fact encourage) Gentoo

users to get involved and help us maintain our tools and packages,

even if it is as simple as reporting packages that do not always work

or need updating: with such a wide range of GHC and package versions

to co-ordinate, it is hard to keep up! Please contact us on IRC or

email if you are interested!

The Fedora Haskell SIG is an effort to provide good support for Haskell in Fedora.

Fedora 14 is shipping on 2nd November with ghc-6.12.3, haskell-platform-2010.2.0.0, and darcs-2.4.4. Library doc subpackages have been merged into their devel subpackages. Most of the new core gtk2hs packages have been packaged and xmobar was also added. There are currently 72 Haskell-related source packages in Fedora, and more than 60 new packages in the review queue.

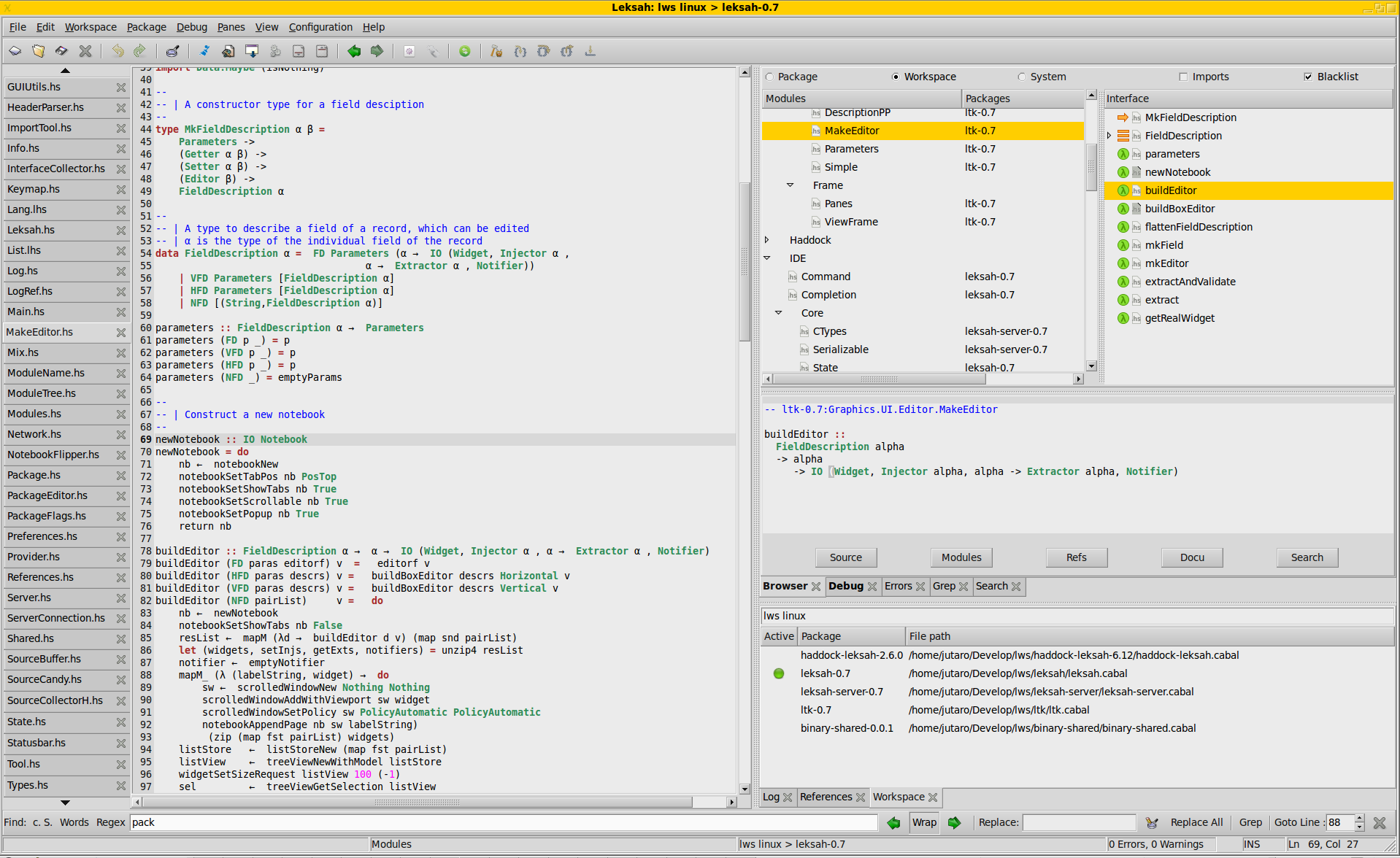

In Fedora 15 we are hoping to ship ghc 7 and to use ghc package hash metadata in our binary rpms. Also more packages are planned: e.g., pandoc and leksah.

Contributions to Fedora Haskell are welcome: join us on #fedora-haskell on Freenode IRC and our mailing-list.

Further reading

4 Related Languages

Agda is a dependently typed functional programming language (developed

using Haskell). A central feature of Agda is inductive families, i.e.

GADTs which can be indexed by values and not just types. The

language also supports coinductive types, parameterized modules, and

mixfix operators, and comes with an interactive interface—the

type checker can assist you in the development of your code.
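
To give Haskell readers a rough feel for inductive families, the following sketch uses a GHC GADT indexed by type-level naturals. It is only an approximation: in Agda the index would be an ordinary value, whereas here the names `Z`, `S`, `Vec`, `vhead`, and `toList` are illustrative choices that encode the length at the type level.

```haskell
{-# LANGUAGE GADTs #-}

-- Type-level natural numbers (no values; used only as indices).
data Z
data S n

-- A list whose length is tracked in its type.
data Vec n a where
  Nil  :: Vec Z a
  Cons :: a -> Vec n a -> Vec (S n) a

-- Total head function: the index (S n) rules out the empty vector,
-- so no case for Nil is needed.
vhead :: Vec (S n) a -> a
vhead (Cons x _) = x

-- Forget the length index and return an ordinary list.
toList :: Vec n a -> [a]
toList Nil         = []
toList (Cons x xs) = x : toList xs
```

With these definitions, an expression such as `vhead Nil` is rejected by the type checker rather than failing at run time.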

A lot of work remains in order for Agda to become a full-fledged

programming language (good libraries, mature compilers, documentation,

etc.), but already in its current state it can provide lots of fun as

a platform for experiments in dependently typed programming.

In September version 2.2.8 was released, with these new features:

- Pattern matching for records.

- Proof-irrelevant function types.

- Reflection.

- Users can define new forms of binding syntax.

Further reading

The Agda Wiki: http://wiki.portal.chalmers.se/agda/

MiniAgda is a tiny dependently-typed programming language in the style

of Agda (→4.1). It serves as a laboratory to test potential additions to the

language and type system of Agda. MiniAgda’s termination checker is a

fusion of sized types and size-change termination and supports

coinduction. Equality incorporates eta-expansion at record and

singleton types. Function arguments can be declared as static; such

arguments are discarded during equality checking and compilation.

Currently, I am developing a source translation from MiniAgda to

Haskell as prototype for an Agda to Haskell compiler. In the long

run, I plan to evolve MiniAgda into a core language for Agda with

termination certificates.

MiniAgda is available as Haskell source code and compiles with GHC

6.12.x.

Further reading

http://www2.tcs.ifi.lmu.de/abel/miniagda/

Idris is an experimental language with full dependent types.

Dependent types allow types to be predicated on values, meaning that

some aspects of a program’s behavior can be specified precisely in

the type. The language is closely related to Epigram and Agda (→4.1).

It is available from http://www.idris-lang.org, and there is a

tutorial at http://www.idris-lang.org/tutorial.

Idris aims to provide a platform for realistic programming with

dependent types. By realistic, we mean the ability to interact with

the outside world and use primitive types and operations, to make a

dependently typed language suitable for systems programming. This

includes networking, file handling, concurrency, etc.

Idris emphasizes programming over theorem proving, but nevertheless

integrates with an interactive theorem prover. It is compiled, via C,

and uses the Boehm-Demers-Weiser garbage collector.

One goal of the project is to show that Idris, and dependently typed

programming in general, can be efficient enough for the development of

real world verified software. To this end, Idris is currently being used

to develop a library for verified network protocol implementation,

with example applications.

Further reading

http://www.idris-lang.org/

Clean is a general purpose, state-of-the-art, pure and lazy functional

programming language designed for making real-world applications.

Here is a short list of notable features:

- Clean is a lazy, pure, and higher-order functional programming language with

explicit graph-rewriting semantics.

- Although Clean is by default a lazy language, one can smoothly turn it into

a strict language to obtain optimal time/space behavior: functions can be

defined lazy as well as (partially) strict in their arguments; any (recursive)

data structure can be defined lazy as well as (partially) strict in any of its

arguments.

- Clean is a strongly typed language based on an extension of the well-known

Milner/Hindley/Mycroft type inferencing/checking scheme including the common

higher-order types, polymorphic types, abstract types, algebraic types, type

synonyms, and existentially quantified types.

- Clean has pattern matching, guards, list comprehensions, array

comprehensions and a lay-out sensitive mode.

- Clean supports type classes and type constructor classes to make overloaded

use of functions and operators possible.

- The uniqueness typing system in Clean makes it possible to develop efficient

applications. In particular, it allows a refined control over the single-threaded

use of objects which can influence the time and space behavior of

programs. Uniqueness typing can also be used to incorporate destructive

updates of objects within a pure functional framework. It allows destructive

transformation of state information and enables efficient interfacing to the

nonfunctional world (to C but also to I/O systems like X-Windows) offering

direct access to file systems and operating systems.

- Clean offers records and (destructively updateable) arrays and files.

- The Clean type system supports dynamic typing, allowing values of arbitrary

types to be wrapped in a uniform package and unwrapped via a type annotation at

run time. Using dynamics, code and data can be exchanged between Clean applications

in a flexible and type-safe way.

- Clean provides a built-in mechanism for generic functions.

- There is a Clean IDE and there are many libraries available offering

additional functionality.

Future plans

Please see the entry on exchanging sources between Clean and Haskell (→3.6)

for the future plans.

Further reading

http://wiki.clean.cs.ru.nl/

Timber is a general programming language derived from Haskell, with the specific aim of supporting development of complex event-driven systems. It allows programs to be conveniently structured in terms of objects and reactions, and the real-time behavior of reactions can furthermore be precisely controlled via platform-independent timing constraints. This property makes Timber particularly suited to both the specification and the implementation of real-time embedded systems. An implementation of Timber is available as a command-line compiler tool, currently targeting POSIX-based systems only.

Timber shares most of Haskell’s syntax but introduces new primitive constructs for defining classes of reactive objects and their methods. These constructs live in the Cmd monad, which is a replacement of Haskell’s top-level monad offering mutable encapsulated state, implicit concurrency with automatic mutual exclusion, synchronous as well as asynchronous communication, and deadline-based scheduling. In addition, the Timber type system supports nominal subtyping between records as well as datatypes, in the style of its precursor O’Haskell.

A particularly notable difference between Haskell and Timber is that Timber uses a strict evaluation order. This choice has primarily been motivated by a desire to facilitate more predictable execution times, but it also brings Timber closer to the efficiency of traditional execution models. Still, Timber retains the purely functional characteristic of Haskell, and also supports construction of recursive structures of arbitrary type in a declarative way.

The Timber compiler is currently undergoing a major reimplementation of its front-end, an effort triggered by increasing needs to significantly improve error messages as well as to sharpen up the documentation of the language syntax and its scoping rules. The new compiler, tentatively called version 2, will also include a newly developed Javascript back-end, HTML5 and OpenGL bindings, as well as bare-metal ARM7 support. A minor bug-fix release announced in the previous HCAR has been postponed and will be merged into the release of version 2. The latest release of the Timber compiler system still dates back to May 2009 (version 1.0.3).

Other active projects include interfacing the compiler to memory and execution-time analysis tools, extending it with a supercompilation pass, and building an interpreting debugger on the basis of the new compiler front-end.

Further reading

http://timber-lang.org

Disciple is a dialect of Haskell that uses strict evaluation as the default and supports destructive update of arbitrary data. Many Haskell programs are also Disciple programs, or will run with minor changes. In addition, Disciple includes region, effect, and closure typing, and this extra information provides a handle on the operational behaviour of code that is not available in other languages. Our target applications are the ones that you always find yourself writing C programs for, because existing functional languages are too slow, use too much memory, or do not let you update the data that you need to.

Our compiler (DDC) is still in the “research prototype” stage, meaning that it will compile programs if you are nice to it, but expect compiler panics and missing features. You will get panics due to ungraceful handling of errors in the source code, but valid programs should compile ok. The test suite includes a few thousand-line graphical demos, like a ray-tracer and an n-body collision simulation, so it is definitely hackable.

We have spent a good slab of time this year cleaning up the internals and getting proper regression testing build bots online. We now support OSX/x86, Linux/{x86, x86-64, PPC}, FreeBSD/x86, and Cygwin/x86 so you should be able to get DDC running on your own system without trouble. Other than that, we have been stabilising the existing implementation and fixing bugs. The plan for the coming year is to complete support for type classes and dictionary passing, and to extend the type system so that it can “auto-freeze” data structures that have been created using destructive update but will be treated as constant from then on. We are also working on an LLVM port which will provide faster code in the long term without having to rely on the existing via-C backend.

Disciple programs can be written in either a pure/functional or effectful/imperative style, and one of our main goals is to provide both styles coherently in the same language. The two styles can be mixed safely. For example: when using laziness, the type system guarantees that computations with visible side effects are not suspended. The fact that we have region, effect, and closure typing available means we can also support more fine-grained notions of ST-monad style effect encapsulation, with the added benefit that the encapsulation/masking is handled seamlessly by the type system. If this sounds interesting to you then drop us a line!

Further reading

http://trac.haskell.org/ddc

5 Haskell and …

5.1 Haskell and Parallelism

TwilightSTM is an extended Software Transactional Memory system.

It safely augments the STM monad with non-reversible actions and

allows introspection and modification of a transaction’s state.

TwilightSTM splits the code of a transaction into a (functional) atomic

phase, which behaves as in GHC’s implementation, and an (imperative)

twilight phase. Code in the twilight phase executes before the

decision about a transaction’s fate (restart or commit) is made and

can affect its outcome based on the actual state of the execution

environment.

The Twilight API has operations to detect

and repair read inconsistencies as well as operations to overwrite

previously written variables.

It also permits the safe embedding of I/O operations with the

guarantee that each I/O operation is executed only once. In contrast to

other implementations

of irrevocable transactions, twilight code may run concurrently with

other transactions including their twilight code in a safe

way. However, the programmer is obliged to prevent deadlocks and race

conditions when integrating I/O operations that participate in locking

schemes.

A prototype implementation is available on Hackage (http://hackage.haskell.org/package/twilight-stm).

We are currently working on the composability of Twilight monads and are applying TwilightSTM to different use cases.

Further reading

http://proglang.informatik.uni-freiburg.de/projects/twilight/

MPI, the Message Passing Interface, is a popular communications protocol

for distributed parallel computing (http://www.mpi-forum.org/). It is widely

used in high performance scientific computing, and is designed to scale up from

small multi-core personal computers to massively parallel supercomputers.

MPI applications

consist of independent computing processes which share information by message passing

communication. It supports both point-to-point and collective communication operators,

and manages many of the mundane aspects of message delivery. There are several

high-quality implementations of MPI available which adhere to the standard API

specification (the latest version of which is 2.2). The MPI specification defines

interfaces for C, C++, and Fortran, and bindings are available for many other

programming languages. As the name suggests, Haskell-MPI provides a Haskell interface

to MPI, and thus facilitates distributed parallel programming in Haskell. It is implemented

on top of the C API via Haskell’s foreign function interface. Haskell-MPI provides

three different ways to access MPI’s functionality:

- A direct binding to the C interface.

- A convenient interface for sending arbitrary serializable Haskell data values as messages.

- A high-performance interface for working with (possibly mutable) arrays of storable

Haskell data types.

We do not currently provide exhaustive coverage of all the functions and types defined by MPI

2.2, although we do provide bindings to the most commonly used parts. In the future we plan

to extend coverage based on the needs of projects which use the library.

We are in the final stages of preparing the first release of Haskell-MPI. We will

publish the code on Hackage once the user documentation is complete.

We have run various simple latency and bandwidth tests using up to 512 Intel x86-64 cores, and

for the high-performance interface, the results are within acceptable bounds of those

achieved by C.

Haskell-MPI is designed to work with any compliant implementation of MPI, and we

have successfully tested it with both OpenMPI (http://www.open-mpi.org/) and

MPICH2 (http://www.mcs.anl.gov/research/projects/mpich2/).

Further reading

http://github.com/bjpop/haskell-mpi

Eden extends Haskell with a small set of syntactic constructs for

explicit process specification and creation. While providing

enough control to implement parallel algorithms efficiently, it

frees the programmer from the tedious task of managing low-level

details by introducing automatic communication (via head-strict

lazy lists), synchronization, and process handling.

Eden’s main constructs are process abstractions and process

instantiations. The function process :: (a -> b) ->

Process a b embeds a function of type (a -> b) into a

process abstraction of type Process a b which,

when instantiated, will be executed in parallel. Process

instantiation is expressed by the predefined infix operator

( # ) :: Process a b -> a -> b. Higher-level

coordination is achieved by defining skeletons, ranging

from a simple parallel map to sophisticated replicated-worker

schemes. They have been used to parallelize a set of non-trivial

benchmark programs.
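
The two signatures above can be tried out in plain GHC with a sequential stand-in. This is only a sketch under a strong assumption: real Eden executes each instantiated process in parallel, while the mock below just applies the wrapped function. The name `parMapSketch` is hypothetical and is not taken from the Eden skeleton library.

```haskell
-- Sequential stand-in for Eden's process abstraction.
-- Assumption: real Eden evaluates an instantiated process in parallel;
-- this mock only keeps the types and applies the function directly.
newtype Process a b = Process (a -> b)

-- Embed a function into a process abstraction.
process :: (a -> b) -> Process a b
process = Process

-- Process instantiation: feed an input to a process abstraction.
(#) :: Process a b -> a -> b
(Process f) # x = f x

-- A simple map skeleton: one process instantiation per list element.
parMapSketch :: (a -> b) -> [a] -> [b]
parMapSketch f xs = [process f # x | x <- xs]
```

In this mock, `parMapSketch (* 2) [1, 2, 3]` behaves exactly like `map (* 2) [1, 2, 3]`; the point is only to show how a skeleton is assembled from `process` and `( # )`.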

Survey and standard reference

Rita Loogen, Yolanda Ortega-Mallén, and Ricardo Peña:

Parallel Functional Programming in Eden, Journal of

Functional Programming 15(3), 2005, pages 431–475.

Implementation

We happily announce that a new release of the Eden compiler based on GHC 6.12.3 is available on our relaunched web pages, see

http://www.mathematik.uni-marburg.de/~eden

New features are the support of 64-Bit architectures and an extended version of the new GHC EventLog format for parallel program traces. These traces can be visualized using the Eden trace viewer tool EdenTV. The new version of this tool has been written in Haskell and is also freely available on the Eden web pages.

The Eden skeleton library is currently being revised and cabalized. A development snapshot is available

on the Eden pages.

Recent and Forthcoming Publications

- Lidia Sanchez-Gil, Mercedes Hidalgo-Herrero, Yolanda Ortega-Mallen: On the relation of call-by-need and call-by-name in a natural semantics setting, In Preproceedings of the 22nd Symposium on Implementation and Application of Functional Languages (IFL 2010), Technical Report UU-CS-2010-020, Department of Information and Computing Sciences, Utrecht University, 2010.

- Jost Berthold: Orthogonal Haskell Data Serialisation, In Preproceedings of the 22nd Symposium on Implementation and Application of Functional Languages (IFL 2010), Technical Report UU-CS-2010-020, Department of Information and Computing Sciences, Utrecht University, 2010.

- Oleg Lobachev and Rita Loogen:

Estimating Parallel Performance, a Skeleton-based Approach,

in HLPP’10: Workshop on High-level Parallel Programming, ACM Press, 2010, 25–34.

- Oleg Lobachev, Rita Loogen: Implementing Data Parallel Rational Multiple-Residue Arithmetic in Eden, in CASC’10:

Computer Algebra in Scientific Computing, Springer LNCS 6244, 2010, 178–193.

- Mischa Dieterle, Jost Berthold, Rita Loogen: A Skeleton for Distributed Work Pools in Eden, in FLOPS’10: Functional and Logic Programming, Springer LNCS 6009, 2010, 337–353.

- Thomas Horstmeyer, Rita Loogen:

Grace — Graph-based Communication in Eden,

Trends in Functional Programming, Volume 10, Intellect 2010, 1–16.

- Mustafa Aswad, Phil Trinder, Abdallah Al Zain, Greg Michaelson, Jost Berthold:

Low Pain vs No Pain Multi-core Haskells, Trends in Functional Programming,

Volume 10, Intellect 2010, 49–64.

- Lidia Sanchez-Gil, Mercedes Hidalgo-Herrero, Yolanda Ortega-Mallen:

An Operational Semantics for Distributed Lazy Evaluation,

Trends in Functional Programming, Volume 10, Intellect 2010, 65–80.

Further reading

http://www.mathematik.uni-marburg.de/~eden

5.2 Haskell and the Web

5.2.1 GHCJS: Haskell to Javascript compiler

GHCJS currently is a GHC back-end which produces Javascript code.

Modern Javascript environments are becoming more and more advanced.

TraceMonkey and V8 engines allow very fast Javascript execution.

It is possible, for instance, to create an in-browser hardware emulator:

an emulated CPU’s instructions are compiled down to Javascript functions, and

Javascript instructions are compiled to the native host CPU’s instructions

by Javascript JIT-compilers (http://weblogs.mozillazine.org/roc/archives/2010/11/implementing_a.html).

The idea to bring the power of the Haskell language to the world of

AJAX-applications is not new. It has been proposed many times in

Haskell-café. The success of Google’s GWT was uncomfortable to

watch, when our beloved language lacked such a feature.

The first implementation I know of is Dmitry Golubovsky’s

YHC back-end (http://www.haskell.org/haskellwiki/Yhc/Javascript).

The second one was my GHC backend hs2js (http://vir.mskhug.ru/).

There were differences between the two projects. Dmitry had tried to

provide a Haskell environment to develop everything in Haskell. He had developed

an automated conversion tool to generate Haskell-bindings from DOM IDL

specifications provided by the W3C. My aim was more modest: I thought that

we could use Haskell to implement complex logic. The ability to use

Parsec in a browser was asked for several times in Haskell-café. With the latter

approach we can extend existing Javascript-applications with

algorithms implemented in Haskell. UHC (→3.5) started to implement a

Javascript-backend recently (http://utrechthaskellcompiler.wordpress.com/2010/10/18/haskell-to-javascript-backend/), but I have not looked at it yet.

GHCJS is a fresh rewrite of hs2js that was started in August 2010.

It is currently a standalone tool that uses GHC as a library and produces a

.js-file for each Haskell-module. Javascript code can load any

Haskell-module and evaluate any exported Haskell-value. Some examples

that are available with the GHCJS package show some simple Haskell programs

like generation of a sequence of prime-numbers. Each Haskell module

is currently a standalone Javascript file. When a value of some module

is needed, the module is loaded dynamically.

The code is available at the GHCJS github page

(see below) under the terms of the BSD3 license.

It was tested with GHC 6.12.

There are many tasks awaiting completion with GHCJS:

- A faster and more robust module loader: the loader currently loses a lot of time on 404 errors, trying to access modules

in the wrong package directory. I plan to use GHC’s package abstraction.

A package will be a Web-server’s directory and Javascript’s namespace.

Every module will be unambiguously associated with one package.

It will become possible to load a module with one unambiguous HTTP-request.

This change will shorten the loading time of Haskell programs.

- Make it work in all major browsers: there are some minor problems with Internet Explorer,

but it should be trivial to fix them.

- FFI support: FFI support should make the whole thing generally usable. FFI-exports

should generate easily-callable Javascript functions that will type-check

their arguments to make a combination of dynamically-typed Javascript

and statically-typed Haskell seamless. FFI-imports will allow the

implementation of DOM-manipulation in Haskell programs.

Further reading

https://github.com/sviperll/ghcjs

The Hawk system is a web framework for Haskell. It is comparable in functionality and architecture with Ruby on Rails

and other web frameworks. Its architecture follows the MVC pattern.

It consists of a simple relational database mapper for persistent storage of data

and a template system for the view component. This template system has two

interesting features: First, the templates are valid XHTML documents. The parts

where data has to be filled in are marked with Hawk specific elements and attributes.

These parts are in a different namespace, so they do not destroy the XHTML structure.

The second interesting feature is that the templates contain type descriptions for

the values to be filled in. This type information enables a static type check whether

the models and views fit together.

A first application of the Hawk framework is a customizable search for Hayoo! (→5.2.4).

But the framework is independent of the Holumbus search engine.

It will be applicable for the development of arbitrary web applications.

Hawk was developed by Björn Peemöller and Stefan Roggensack. Currently, Alexander Treptow is applying, testing, and extending the

framework.

The Web Application Interface (WAI) is an interface between web applications and web servers. By targeting the WAI, a web application can get access to multiple servers; and through WAI, a server can support web applications never intended to run on it.

In designing this package, performance was the first priority: there should be no performance overhead for using the WAI. As such, an enumerator interface was selected for the response body, a handle-like interface, called a source, for the request body, and bytestrings are used throughout. Another design decision was to keep the interface as general as possible by excluding variables which are not universal to all web servers.

Since the last report, version 0.2.0 has been released, which replaces some of the special data types (request and response headers, for instance) with CIByteString, a case-insensitive bytestring which allows easy lookups. The ecosystem around WAI has also matured significantly: we have handlers for CGI, FastCGI, SCGI, development servers and the Snap standalone server (→5.2.10), and middleware for cleaning URLs, GZIP compression, and JSON-P. There is even a backend to convert your web applications into desktop applications via Webkit.
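
The idea behind a case-insensitive header name can be sketched as follows. This is not WAI's actual `CIByteString` definition: the type `CIString`, its field names, and `lookupHeader` are hypothetical, and `String` is used instead of bytestrings only to keep the example free of extra dependencies.

```haskell
import Data.Char (toLower)
import Data.Function (on)

-- Hypothetical stand-in for a case-insensitive header name.
-- Assumption: WAI's real type is based on bytestrings; String is
-- used here for brevity.
data CIString = CIString
  { ciOriginal :: String  -- name as received, kept for output
  , ciFolded   :: String  -- lower-cased copy, used for comparisons
  }

-- Build a case-insensitive name, folding the case once up front.
mkCI :: String -> CIString
mkCI s = CIString s (map toLower s)

-- Two names are equal when their folded forms are equal.
instance Eq CIString where
  (==) = (==) `on` ciFolded

type Headers = [(CIString, String)]

-- Look up a header regardless of the capitalisation used by the client.
lookupHeader :: String -> Headers -> Maybe String
lookupHeader name = lookup (mkCI name)
```

Folding the case once at construction time means that each later comparison works on the precomputed lower-cased copy, while the original spelling remains available for output.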

Hopefully, WAI can be one of many smaller packages which lead to collaboration in the Haskell web development community and development of a healthy ecosystem. There is an experimental Happstack WAI backend, and the Yesod Web Framework (→5.2.8) uses WAI exclusively.

Further reading

http://github.com/snoyberg/wai

5.2.4 Holumbus Search Engine Framework

Description

The Holumbus framework consists of a set of modules and tools

for creating fast, flexible, and highly customizable search engines with Haskell.

The framework consists of two main parts. The first part is the indexer, which extracts the data

of a given type of documents, e.g., documents of a web site, and stores it in an appropriate index.

The second part is the search engine for querying the index.

An instance of the Holumbus framework is the Haskell API search engine Hayoo!

(http://holumbus.fh-wedel.de/hayoo/). The web interface for Hayoo! is

implemented with the Janus web server, written in Haskell and based on HXT (→8.8.2).

The framework supports distributed computations for building indexes

and searching indexes. This is done with a MapReduce like framework.

The MapReduce framework is independent of the index- and

search-components, so it can be used to develop distributed systems

with Haskell.
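
For readers unfamiliar with the pattern, a sequential model of MapReduce fits in a few lines of Haskell. This sketch does not use the Holumbus API and involves no distribution; the names `mapReduce` and `wordCount` are illustrative assumptions that show only the map, group, and reduce phases.

```haskell
import qualified Data.Map as Map

-- Sequential model of the MapReduce pattern (no distribution involved).
mapReduce :: Ord k
          => (a -> [(k, v)])   -- map phase: emit key/value pairs per input
          -> (k -> [v] -> r)   -- reduce phase: combine all values of one key
          -> [a]
          -> Map.Map k r
mapReduce mapper reducer inputs = Map.mapWithKey reducer grouped
  where
    -- Group the emitted values by key, preserving emission order.
    grouped = Map.fromListWith (flip (++))
                [ (k, [v]) | x <- inputs, (k, v) <- mapper x ]

-- Example job: count word occurrences across a list of documents.
wordCount :: [String] -> Map.Map String Int
wordCount = mapReduce (\doc -> [ (w, 1) | w <- words doc ])
                      (\_ ones -> sum ones)
```

In a distributed setting, the map and reduce calls would run on different nodes and the grouping step would become a shuffle over the network; the types of the two user-supplied functions stay the same.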

The framework is now separated into four packages, all available on

Hackage.

- The Holumbus Search Engine

- The Holumbus Distribution Library

- The Holumbus Storage System

- The Holumbus MapReduce Framework

The search engine package includes the indexer and search modules;

the MapReduce package bundles the distributed MapReduce system.

This is based on two other packages, which may be useful on their own:

the Distribution Library, with a message passing communication layer,

and a distributed storage system.

Features

- Highly configurable crawler module for flexible indexing of structured data

- Customizable index structure for an effective search

- Find-as-you-type search

- Suggestions

- Fuzzy queries

- Customizable result ranking

- Index structure designed for distributed search

- Git repository containing the current development version of all packages under

http://holumbus.fh-wedel.de/src.git

- Distributed building of search indexes

Current Work

The data structures of the Holumbus indexes have been optimized

for space and time. There is a new and efficient prefix tree structure,

which further enables index updates.
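
To illustrate why a prefix tree suits both find-as-you-type search and incremental updates, here is a minimal character trie. It is a sketch only: the actual Holumbus structure is more compact and stores index data at the nodes, and the names `Trie`, `insertWord`, and `completions` are assumptions made for this example.

```haskell
import qualified Data.Map as Map

-- Minimal character trie; real prefix trees usually compress chains
-- of single-child nodes, which is omitted here.
data Trie = Trie
  { isWord   :: Bool                -- does a stored word end at this node?
  , children :: Map.Map Char Trie   -- one subtree per next character
  }

emptyTrie :: Trie
emptyTrie = Trie False Map.empty

-- Insert a word; only the nodes along its path are rebuilt,
-- so the trie can be updated incrementally.
insertWord :: String -> Trie -> Trie
insertWord []       t = t { isWord = True }
insertWord (c : cs) t =
  t { children = Map.insert c (insertWord cs child) (children t) }
  where
    child = Map.findWithDefault emptyTrie c (children t)

-- All stored words that start with the given prefix.
completions :: String -> Trie -> [String]
completions prefix = go prefix
  where
    go []       t = map (prefix ++) (wordsBelow t)
    go (c : cs) t = maybe [] (go cs) (Map.lookup c (children t))

-- All suffixes stored below a node, in key order.
wordsBelow :: Trie -> [String]
wordsBelow t =
  [ "" | isWord t ] ++
  [ c : rest | (c, child) <- Map.toList (children t), rest <- wordsBelow child ]
```

A prefix query walks down one path of the tree and then enumerates the subtree below it, so the cost depends on the prefix length and the number of matches rather than on the total number of stored words.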

The indexer and search modules are used

to support the Hayoo! engine for searching the Hackage package library

(http://holumbus.fh-wedel.de/hayoo/hayoo.html). Because of the fast-growing number

of packages on Hackage, the Hayoo! search engine will be extended with a package search.

Sebastian Reese has finished his work on applying

the MapReduce framework and on giving

tuning and configuration hints. Benchmarks for various small problems

and for generating search indexes have shown that the architecture

scales very well.

In a subproject of Holumbus, the so-called Hawk framework (→5.2.2),

Björn Peemöller and Stefan Roggensack have developed a web framework

for Haskell. Currently Alexander Treptow is applying, testing, and extending the

framework. A first application is a customizable search for Hayoo!

Further reading

The Holumbus web page

(http://holumbus.fh-wedel.de/)

includes downloads, Git web interface, current status, requirements,

and documentation.

Timo Hübel’s master’s thesis describing the Holumbus index structure and

the search engine is available at

http://holumbus.fh-wedel.de/branches/develop/doc/thesis-searching.pdf.

Sebastian Gauck’s thesis dealing with the crawler component is

available at

http://holumbus.fh-wedel.de/src/doc/thesis-indexing.pdf

The thesis of Stefan Schmidt describing the Holumbus MapReduce is

available via http://holumbus.fh-wedel.de/src/doc/thesis-mapreduce.pdf.

Gitit is a wiki built on Happstack (→5.2.6) and backed by a git, darcs, or mercurial

filestore. Pages and uploaded files can be modified either directly

via the VCS’s command-line tools or through the wiki’s web interface.

Pandoc (→9.2.3) is used for markup processing, so pages may be written in

(extended) markdown, reStructuredText, LaTeX, HTML, or literate Haskell,

and exported in thirteen different formats, including LaTeX, ConTeXt,

DocBook, RTF, OpenOffice ODT, MediaWiki markup, EPUB, and PDF.

Notable features of gitit include:

- Plugins: users can write their own dynamically loaded page transformations, which operate directly on the abstract syntax tree.

- Math support: LaTeX inline and display math is automatically converted to MathML, using the texmath library.

- Highlighting: Any git, darcs, or mercurial repository can be made a gitit wiki. Directories can be browsed, and source code files are automatically syntax-highlighted. Code snippets in wiki pages can also be highlighted.

- Library: Gitit now exports a library, Network.Gitit, that makes it easy to include a gitit wiki (or wikis) in any Happstack application.

- Literate Haskell: Pages can be written directly in literate Haskell.

Further reading

http://gitit.net (itself

a running demo of gitit)

Happstack is a web application framework focused on high-scalability,

rapid development, ease of deployment, and flexibility. The core

libraries provided by Happstack include:

- happstack-server, an HTTP server with a rich environment for routing requests, working with cookies, processing form data, handling file uploads, serving static content with sendfile(), and more. Applications can be run using the built-in HTTP backend, or by using other handlers such as FastCGI.

- happstack-state, also known as MACID, provides a NoSQL, RAM-cloud for distributed persistent state. Unlike limited key-value stores, MACID natively stores arbitrary Haskell data types. This allows the storage of user defined types as well as standard data structures including trees, graphs, and maps. Updates and queries are written using plain old Haskell functions. These features are provided without sacrificing the ACID properties.

- happstack-ixset provides a set data-type with the ability to index elements by multiple keys. It provides much of the same functionality as a table in a relational database. This includes the ability to search by one or more keys, search by range, update the value at a specified index, etc.

- happstack-data builds on the binary library to provide versioned data serialization and automatic migration of data from older versions to newer versions.
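
The idea behind a set indexed by multiple keys can be sketched with ordinary maps. The code below is only an illustration of the concept and does not use the happstack-ixset API; the types `User` and `UserSet` and all function names are hypothetical.

```haskell
import qualified Data.Map as M

data User = User { userId :: Int, userName :: String, userAge :: Int }
  deriving (Eq, Show)

-- A collection with two secondary indexes that are kept in sync on insert.
-- Hypothetical sketch; not the happstack-ixset representation.
data UserSet = UserSet
  { byName :: M.Map String [User]
  , byAge  :: M.Map Int [User]
  }

emptyUsers :: UserSet
emptyUsers = UserSet M.empty M.empty

insertUser :: User -> UserSet -> UserSet
insertUser u (UserSet names ages) =
  UserSet (M.insertWith (++) (userName u) [u] names)
          (M.insertWith (++) (userAge u)  [u] ages)

-- Search by one key.
withName :: String -> UserSet -> [User]
withName n = M.findWithDefault [] n . byName

-- Search by range (inclusive bounds), results in ascending age order.
ageBetween :: Int -> Int -> UserSet -> [User]
ageBetween lo hi s = concat (M.elems inRange)
  where
    (_, fromLo)  = M.split (lo - 1) (byAge s)  -- keeps keys >= lo
    (inRange, _) = M.split (hi + 1) fromLo     -- keeps keys <= hi
```

Each index answers one kind of query, much like an index on a column of a relational table. A real library would derive the indexes generically instead of hard-coding one field per key.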

Happstack also has integrated support for many web related libraries

including:

- templates: using HSP, Hamlet, HStringTemplate, BlazeHtml, and more.

- type-safe urls and routing: avoid bad links and namespace collisions using web-routes.

- form generation and validation: using formlets or digestive functors.

- databases: using HDBC, Takusen, HaskellDB, etc.
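
The principle behind type-safe URLs can be shown without any library: every valid location is a constructor of a data type, and URLs are only ever produced by rendering a value of that type. The sketch below is not the web-routes API; `Sitemap`, `toSegments`, `fromSegments`, and `renderUrl` are hypothetical names, and article ids are assumed to be non-negative.

```haskell
import Data.Char (isDigit)
import Data.List (intercalate)

-- Every valid location in the site is a constructor, so a link to a page
-- that does not exist is rejected by the compiler instead of the browser.
data Sitemap
  = Home
  | Article Int          -- assumed non-negative
  | UserProfile String
  deriving (Eq, Show)

-- Render a route as path segments.
toSegments :: Sitemap -> [String]
toSegments Home            = []
toSegments (Article n)     = ["article", show n]
toSegments (UserProfile u) = ["user", u]

-- Parse path segments back into a route; Nothing means "no such page".
fromSegments :: [String] -> Maybe Sitemap
fromSegments [] = Just Home
fromSegments ["article", n]
  | not (null n), all isDigit n = Just (Article (read n))
fromSegments ["user", u]
  | not (null u) = Just (UserProfile u)
fromSegments _ = Nothing

renderUrl :: Sitemap -> String
renderUrl route = "/" ++ intercalate "/" (toSegments route)
```

With these definitions, `renderUrl (Article 42)` yields `/article/42`, and adding a constructor to `Sitemap` makes the compiler point out every function that must handle the new route (when incomplete-pattern warnings are enabled).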

Future plans

Happstack 6 is nearly completed. Happstack 6 features many

improvements and performance enhancements to the happstack-server

library. It has been heavily refactored to make documentation browsing

easier. The haddock documentation has been greatly improved. And there

is now a detailed Happstack Crash Course which guides developers

through the libraries in a detailed and logical manner. It includes

many self-contained runnable demos.

Happstack 7 will include significant enhancements to the

happstack-state library including sharding and better tools for

examining and manipulating the contents of the data store. It will

also include a new implementation of happstack-ixset which is faster,

uses less memory, and has support for parallel traversals to take

advantage of multicore machines.

Happstack 8 will migrate to an iteratee-based HTTP backend for even

better performance and resource management. The tentative plan is to

use Hyena.

Further reading

5.2.7 Mighttpd — Yet another Web Server

Mighttpd (called mighty) is a simple but practical Web server in Haskell.

It is now running on Mew.org, providing basic web features and CGI (mailman and content search). Three packages are registered in HackageDB.

- c10k: Since GHC uses the select system call, a Haskell program compiled with GHC cannot handle more than 1,024 connections/files simultaneously. The c10k package uses the prefork technique to get rid of this barrier.

- webserver: The webserver package provides an HTTP parser, session management, redirection, CGI, and so on. This package is independent of back-end storage systems, so you can build a Web server on any storage system, including files, a key-value store DB, etc.

- mighttpd: This package provides a simple but practical web server based on files, using the c10k and webserver packages.

I am planning to implement FastCGI and WebSocket.

Further reading

http://www.mew.org/~kazu/proj/mighttpd/en/

Yesod is a web framework designed to play towards the strengths of the Haskell language to make web programming safer and more productive. It is fair to say that most web development today occurs in dynamic languages like PHP, Python, and Ruby, and we see the results: cross-site scripting attacks, applications that do not scale, and countless minor bugs entering production because they can only be detected at runtime.

Instead of providing a single monolithic package, Yesod is broken up into many smaller projects. This means that many of the powerful features of Yesod can be used in your own web development tool stack without issue. Packages for authentication, client-side encrypted session data, middlewares, web encodings, YAML, persistence, HTML templating and more are all fully available on Hackage, without any reliance on Yesod.

Yesod is currently on its 0.5 version. There are plans for some minor changes to take place in 0.6: mostly this involves extracting some functionality into separate packages for more flexibility in API changes. Assuming this change goes well, 0.6 will probably morph into a 1.0 release, indicating a fair level of API stability.

The Yesod documentation site (http://docs.yesodweb.com/) is a great place for information. It has code examples, screencasts, the Yesod blog and—most importantly—a book on Yesod. The book is not yet complete, but provides a very solid introduction to the main features, and it is constantly being revised and expanded.

Yesod is already powering some major sites, including Haskellers (→1.1). This not only shows that Yesod is ready for use today, but also gives some great examples of real-life Yesod code in the wild. If you are looking for type-safe, concise, RESTful web development, you should check out Yesod.

Further reading

http://docs.yesodweb.com/

Lemmachine is a RESTful web framework that makes it easy to get HTTP right by exposing users to overridable hooks with sane defaults. The main architecture is a copy of the Erlang-based Webmachine, whose documentation is currently the best reference (for hooks and general design).
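
The "overridable hooks with sane defaults" design can be sketched as a record of functions. The example is written in Haskell rather than Agda and is not Lemmachine's actual interface: the field names are loosely modelled on Webmachine's callbacks, the types `Request` and `Resource` are hypothetical, and the status resolution is reduced to four checks.

```haskell
-- Hypothetical request type; only the method is inspected below.
data Request = Request { method :: String, path :: String }

-- A resource is a record of hooks. Field names loosely follow Webmachine's
-- callbacks and are assumptions, not Lemmachine's actual API.
data Resource = Resource
  { serviceAvailable :: Request -> Bool
  , allowedMethods   :: Request -> [String]
  , isAuthorized     :: Request -> Bool
  , resourceExists   :: Request -> Bool
  }

-- Sane defaults: users override only the hooks they care about.
defaultResource :: Resource
defaultResource = Resource
  { serviceAvailable = const True
  , allowedMethods   = const ["GET", "HEAD"]
  , isAuthorized     = const True
  , resourceExists   = const True
  }

-- A much simplified status resolution: the first failing hook decides.
resolveStatus :: Resource -> Request -> Int
resolveStatus r req
  | not (serviceAvailable r req)              = 503
  | method req `notElem` allowedMethods r req = 405
  | not (isAuthorized r req)                  = 401
  | not (resourceExists r req)                = 404
  | otherwise                                 = 200

-- Overriding a single hook with record update syntax.
privateResource :: Resource
privateResource = defaultResource { isAuthorized = const False }
```

Here `resolveStatus defaultResource (Request "POST" "/")` gives 405 and `resolveStatus privateResource (Request "GET" "/")` gives 401. In a dependently typed setting such statements can be stated and proved once for all requests instead of being checked on sample inputs.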

Lemmachine stands out from the dynamically typed Webmachine by being written in dependently typed Agda (→4.1). The goal of the project is to show the advantages gained from compositional testing by taking advantage of proofs being inherently compositional. See http://github.com/larrytheliquid/Lemmachine/blob/master/src/Lemmachine/Default/Proofs.agda for examples of universally quantified proofs (tests over all possible input values) written against the default resource, which does not override any hooks.

When a user implements their own resource, they can write simple lemmas (“unit tests”) against the resource’s hooks, but then literally reuse those lemmas to write more complex proofs (“integration tests”). For examples see some reuse of lemmas in the proofs.

The big goal is to show that in service oriented architectures, proofs of individual middlewares can themselves be reused to write cross-service proofs (even higher level “integration tests”) for a consumer application that mounts those middlewares. See a post at http://vision-media.ca/resources/ruby/ruby-rack-middleware-tutorial for what is meant by middleware.

Another goal is for Lemmachine to come with proofs against the default resource (as it already does). Any hooks the user does not override can be given to the user for free by the framework! Anything that is overridden can generate proofs parameterized only by the extra information the user would need to provide. This would be a major boost in productivity compared to traditional languages whose libraries cannot come with tests for the user that have language-level semantics for real proposition reuse!

Lemmachine currently uses the Haskell Hack abstraction so it can run on several Haskell webservers. Because Agda compiles to Haskell and has an FFI, existing Haskell code can be integrated quite easily.

The project is still in development and rapidly changing. Lemmas and proofs exist for status resolution, and you can now run resources! The focus will now be on a gradual, direct translation of RFC 2616 sections into dependent type theory.

Further reading

http://github.com/larrytheliquid/Lemmachine

The Snap Framework is a web application framework built from the ground up for

speed, reliability, and ease of use. The project’s goal is to be a cohesive

high-level platform for web development that leverages the power and

expressiveness of Haskell to make building websites quick and easy.

The Snap Framework has been quite active since the last HCAR. Several

developers have joined the effort, the codebase has matured, and test coverage

has increased. Recent benchmarks using the upcoming GHC 7 show approximately

a 50% speed improvement over the benchmarks posted when we launched the project

back in May. These speed improvements come as a result of improvements to

both GHC and Snap.

The team is currently working on the upcoming 0.3 release which will include

a more flexible library interface and support for automatic recompilation of

apps amongst other things.

Further reading

http://snapframework.com

5.3 Haskell and Games

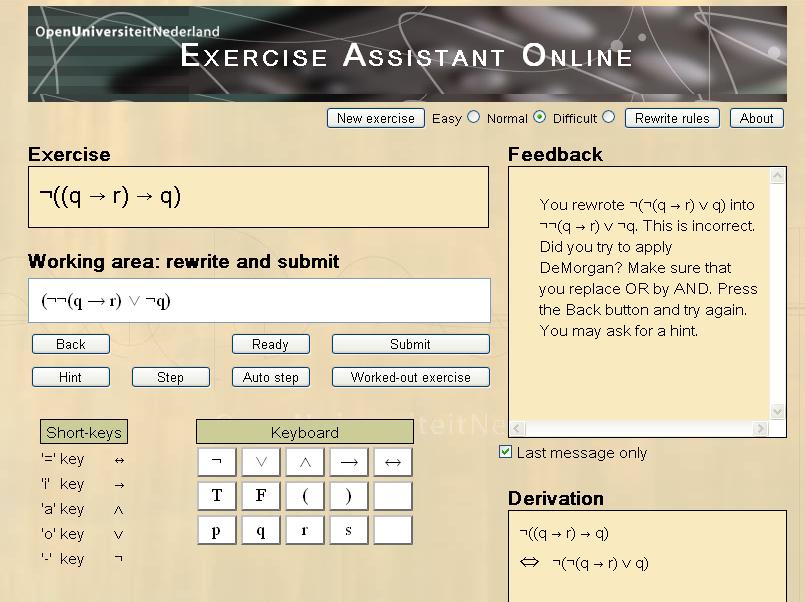

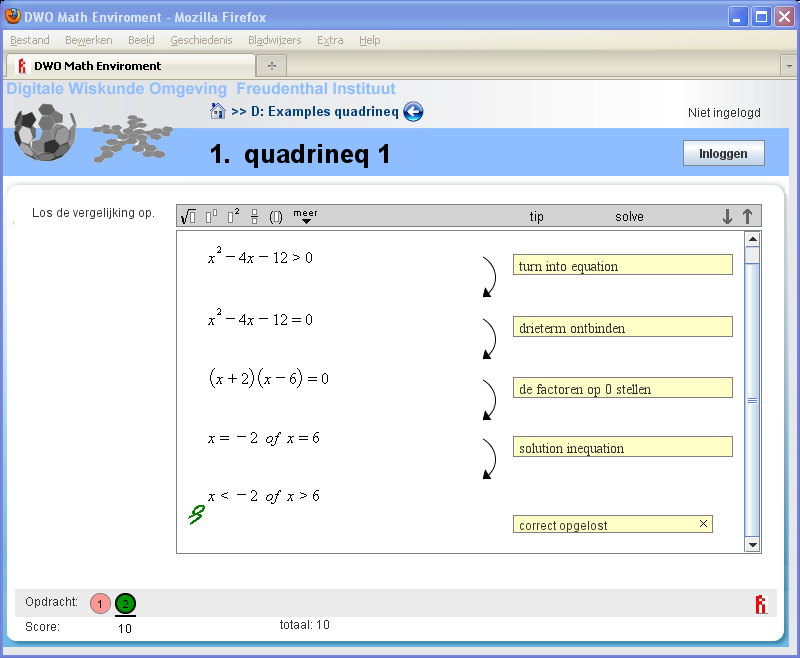

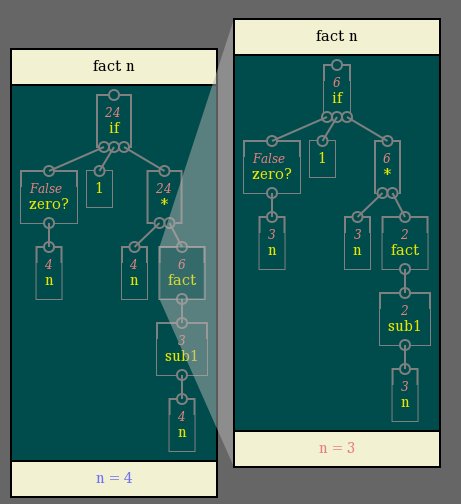

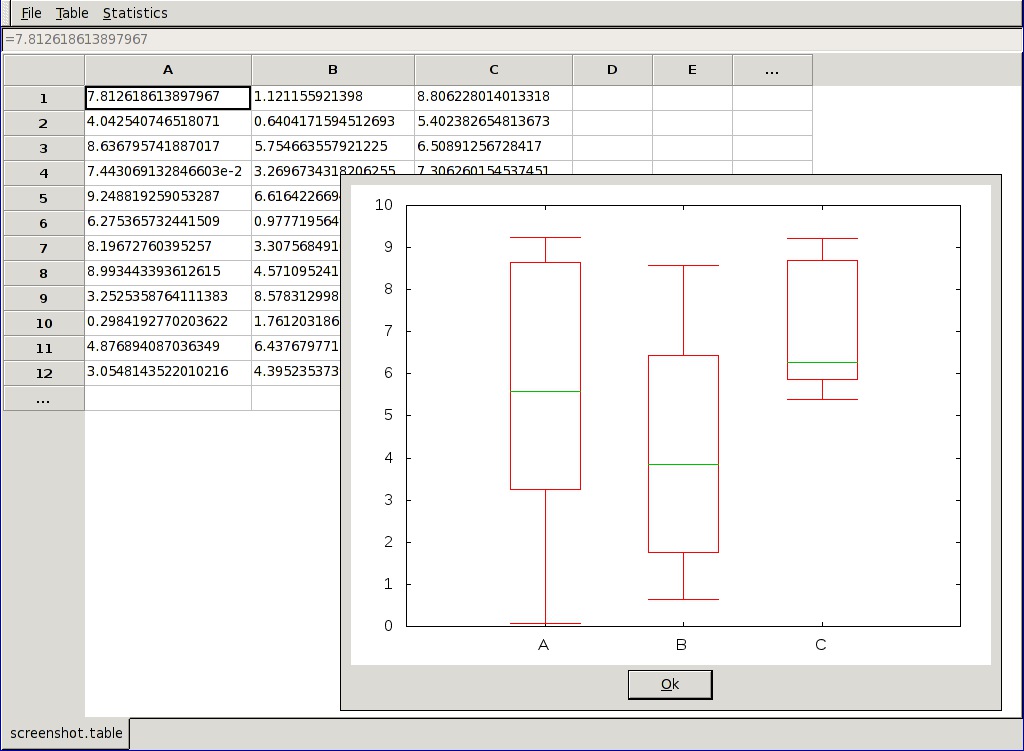

5.3.1 Nikki and the Robots