This is the 21st edition of the Haskell Communities and Activities Report.

As usual, fresh entries are formatted using a blue background, while updated entries have a header with a blue background.

Entries for which I received a liveness ping, but which have seen no essential update for a while, have been replaced with online pointers to previous versions.

Other entries on which no new activity has been reported for a year or longer have been dropped completely.

Please do revive such entries next time if you do have news on them.

A call for new entries and updates to existing ones will be issued on the usual mailing lists in April.

Now enjoy the current report and see what other Haskellers have been up to lately.

Any feedback is very welcome, as always.

TODO: Persistent library — Improving the DSH library is my current preference.

The principle of this library is KISS, and "don’t reinvent the wheel" by reusing existing state-of-the-art libraries.

For the example code listed above, please refer to https://github.com/lilac/ivy-example/

Recent developments

I have ported ivy-web from the wai backend to the snap-server backend, and also wrote a sample project corresponding to the Snap starter project.

Once everything works and I have the time, I will upload the code and bump the version to 0.2.

Further reading

rss2irc is an IRC bot that polls a single RSS or Atom feed and announces

new items to an IRC channel, with options for customizing output and

behavior. It aims to be an easy to use, dependable bot that does its job

and creates no problems.

rss2irc was published in 2008 by Don Stewart. Simon Michael took over

maintainership in 2009, with the goal of making a robust low-maintenance

bot to stimulate development in various free/open-source software

communities. It is currently used for several full-time bots including:

- hackagebot — announces new hackage releases in #haskell

- hledgerbot — announces hledger commits in #ledger

- zwikicommitbot — announces Zwiki commits in #zwiki

- squeaksobot — announces Squeak and Smalltalk-related Stack Overflow questions in #squeak

- squeakquorabot — announces Squeak/Smalltalk-related Quora questions in #squeak

- etoystrackerbot — announces new Etoys bugs in #etoys

- etoysupdatesbot — announces Etoys commits in #etoys

- planetzopebot — announces new planet.zope.org posts in #zope

The project is available under BSD license from its home page at

http://hackage.haskell.org/package/rss2irc.

Since the last report there has been a great deal of cleanup and enhancement, but no new release on Hackage yet due to an XML-related memory leak.

Further reading

http://hackage.haskell.org/package/rss2irc

5.3 Haskell and Games

FunGEn (Functional Game Engine) is a BSD-licensed cross-platform 2D

game engine implemented in and for Haskell, using OpenGL and GLUT. It

was created in 2002 by Andre Furtado, updated in 2008 by Simon Michael

and Miloslav Raus, and revived again in 2011, with a GHC 6.12-tested

0.3 release on Hackage, preliminary haddockification and a new home

repo.

FunGEn remains the quickest path to building cross-platform graphical

games in Haskell, due to its convenient game framework and

widely-available dependencies. It comes with several working examples

that are quite easy to read and build (pong, worms). In the last six

months there has been little activity and a new maintainer would be

welcome.

FunGEn-related discussions most often appear in the #haskell-game

channel on irc.freenode.net.

Further reading

http://darcsden.com/simon/fungen

5.3.2 Nikki and the Robots

Nikki and the Robots is a 2D platformer written in Haskell and produced by Joyride Laboratories. Nikki, the protagonist, walks and jumps around the levels wearing a cute ninja/cat costume. Nikki refrains from using any tools or weapons, with one exception: The Robots. These come in various types with different abilities and can be used by Nikki to solve puzzles, overcome obstacles, and complete the level tasks. The game features an integrated level editor.

We made our first binary release of Nikki and the Robots in April 2011.

Publishing

We are releasing the game and the level editor under an open source license (LGPL). The included graphics are published under a permissive Creative Commons license (cc-by-sa). We are also planning to create a server that will allow players to upload the levels they created and download levels from other players. We hope that a community of coders, level creators, and players will emerge around the game.

Simultaneously, we are working on episodes that we plan to sell via the game. These will include new graphics, more robots, a story line, other characters, and other surprises.

(Just to clarify: The licensing is very permissive. It allows others to create their own episodes and distribute them freely or sell them. This would be very welcome. If anybody is interested in this, we propose to join forces and sell all our episodes through one system.)

Technologies Used

- Qt for user input and rendering.

- OpenGL as an efficient rendering backend for Qt. Everything will remain 2D, though - we promise!

- Hipmunk, the Haskell bindings to the Chipmunk physics engine.

Getting Involved

The project is still in alpha stage, so there are some features that are not yet implemented. For some, we have a clear vision on how to implement them; for others, we do not. If you want to get involved, check out our darcs repo, our launchpad site, and do not hesitate to contact us.

Further reading

5.4 Haskell and Compiler Writing

UUAG is the Utrecht University Attribute Grammar system. It is a preprocessor for Haskell

that makes it easy to write catamorphisms, i.e., functions that do to any data type what

foldr does to lists. Tree walks are defined using the intuitive concepts of

inherited and synthesized attributes, while keeping the full expressive power

of Haskell. The generated tree walks are efficient in both space and time.
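To make the comparison with foldr concrete, here is a minimal hand-written sketch of the kind of tree walk that such a preprocessor saves you from writing. It is plain Haskell, not UUAG syntax or UUAG output, and the names Tree, foldTree, sumTree, and depths are hypothetical. The synthesized attribute is the value returned by the fold; the inherited attribute (the depth) is modelled by letting the fold return a function that takes it as an argument.

```haskell
-- A small binary tree with integers at the leaves (hypothetical example type).
data Tree = Leaf Int | Node Tree Tree
  deriving (Eq, Show)

-- The catamorphism for Tree: one argument per constructor, just as
-- foldr takes one argument per list constructor.
foldTree :: (Int -> r) -> (r -> r -> r) -> Tree -> r
foldTree leaf node = go
  where
    go (Leaf n)   = leaf n
    go (Node l r) = node (go l) (go r)

-- A purely synthesized attribute: the sum of all leaves flows upwards.
sumTree :: Tree -> Int
sumTree = foldTree id (+)

-- An inherited attribute (the depth, passed downwards) combined with a
-- synthesized one (the rebuilt tree, passed upwards). The fold result is
-- a function from the inherited attribute to the synthesized attribute.
depths :: Tree -> Tree
depths t = foldTree leaf node t 0
  where
    leaf _ d   = Leaf d
    node l r d = Node (l (d + 1)) (r (d + 1))
```

For the tree Node (Leaf 5) (Node (Leaf 6) (Leaf 7)), sumTree yields 18 and depths yields Node (Leaf 1) (Node (Leaf 2) (Leaf 2)).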

An AG program is a collection of rules, which are pure Haskell functions between attributes.

Idiomatic tree computations are neatly expressed in terms of copy, default, and collection rules.

Attributes themselves can masquerade as subtrees and be analyzed accordingly (higher-order attribute). The order in which to visit the tree is derived automatically from the attribute computations. The tree walk is a single traversal from the perspective of the programmer.

Nonterminals (data types), productions (data constructors), attributes, and rules for attributes can be specified separately, and are woven and ordered automatically. These aspect-oriented programming features make AGs convenient to use in large projects.

The system is in use by a variety of large and small projects, such as the Utrecht Haskell Compiler UHC (→3.3), the editor Proxima for structured documents (http://www.haskell.org/communities/05-2010/html/report.html#sect6.4.5), the Helium compiler (http://www.haskell.org/communities/05-2009/html/report.html#sect2.3), the Generic Haskell compiler, UUAG itself, and many master student projects.

The current version is 0.9.39 (October 2011), is extensively tested, and is available on Hackage.

Recently, we improved the Cabal support and ensured compatibility with GHC 7.

We are working on the following enhancements of the UUAG system:

- First-class AGs: We provide a translation from UUAG to AspectAG (→5.4.2). AspectAG is a library of strongly typed Attribute Grammars implemented using type-level programming. With this extension, we can write the main part of an AG conveniently with UUAG, and use AspectAG for (dynamic) extensions. Our goal is to have an extensible version of the UHC.

- Ordered evaluation: We have implemented a variant of Kennedy and Warren (1976) for ordered AGs. For any absolutely non-circular AGs, this algorithm finds a static evaluation order, which solves some of the problems we had with an earlier approach for ordered AGs. A static evaluation order allows the generated code to be strict, which is important to reduce the memory usage when dealing with large ASTs. The generated code is purely functional, does not require type annotations for local attributes, and the Haskell compiler proves that the static evaluation order is correct.

- Multi-core evaluation: Our algorithm for ordered AGs identifies statically which subcomputations of children of a production are independent and suitable for parallel evaluation. Together with the strict evaluation as mentioned above, which is important when evaluating in parallel, the generated code can automatically exploit multi-core CPUs. We are currently evaluating the effectiveness of this approach.

- Stepwise evaluation: In the recent past we worked on a stepwise evaluation scheme for AGs. Using this scheme, the evaluation of a node may yield user-defined progress reports, and the evaluation to the next report is considered to be an evaluation step. By asking nodes to yield reports, we can encode the parallel exploration of trees and encode breadth-first search strategies.

We are currently also running a Ph.D. project that investigates incremental evaluation.

Further reading

AspectAG is a library of strongly typed Attribute Grammars implemented using type-level programming.

Introduction

Attribute Grammars (AGs), a general-purpose formalism for describing recursive computations over data types,

avoid the trade-off which arises when building software incrementally:

should it be easy to add new data types and data type alternatives or to add new operations on existing data types?

However, AGs are usually implemented as a pre-processor,

leaving e.g. type checking to later processing phases and making interactive development,

proper error reporting and debugging difficult.

Embedding AG into Haskell as a combinator library solves these problems.

Previous attempts at embedding AGs as a domain-specific language were based on extensible records, thus exploiting Haskell’s type system to check the well-formedness of the AG, but fell short in compactness and in the ability to abstract over frequently occurring AG patterns.

Other attempts used a very generic mapping for which the AG well-formedness could not be statically checked.

We present a typed embedding of AG in Haskell satisfying all these requirements.

The key lies in using HList-like typed heterogeneous collections (extensible polymorphic records)

and expressing AG well-formedness conditions as type-level predicates (i.e., typeclass constraints).

By further type-level programming we can also express common programming patterns,

corresponding to the typical use cases of monads such as Reader, Writer, and State.

The paper presents a realistic example of type-class-based type-level programming in Haskell.

We have included support for local and higher-order attributes.

Furthermore, a translation from UUAG to AspectAG is added to UUAGC as an experimental feature.

Current Status

We have recently added a combinator agMacro to provide support for “attribute grammar macros”, a mechanism that makes it easy to define attribute computations in terms of already existing attribute computations.

Background

The approach taken in AspectAG was proposed by Marcos Viera,

Doaitse Swierstra, and Wouter Swierstra in the

ICFP 2009 paper

“Attribute Grammars Fly First-Class: How to do aspect oriented programming in Haskell”.

The Attribute Grammar Macros combinator is described in a technical report:

UU-CS-2011-028.

Further reading

http://www.cs.uu.nl/wiki/bin/view/Center/AspectAG

6 Development Tools

6.1 Environments

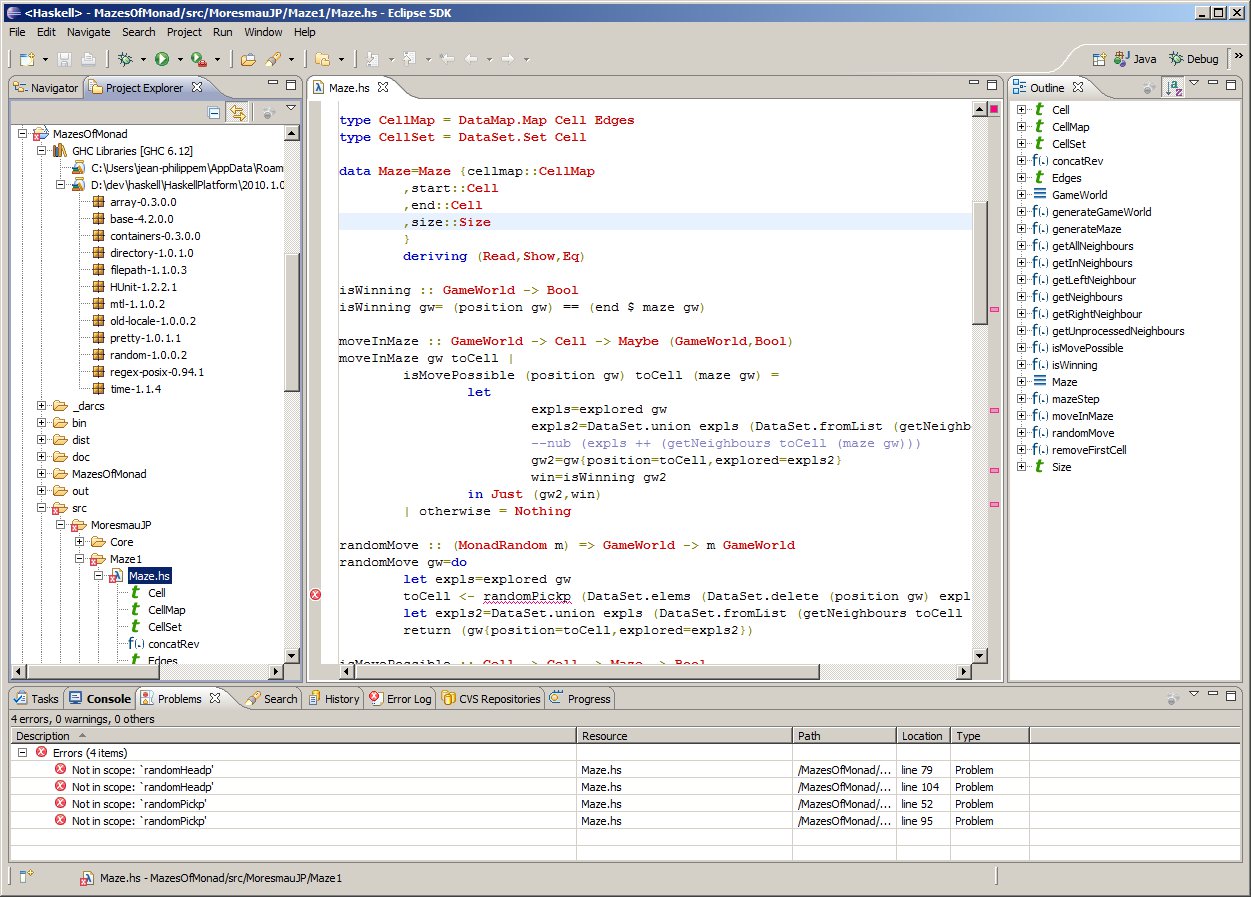

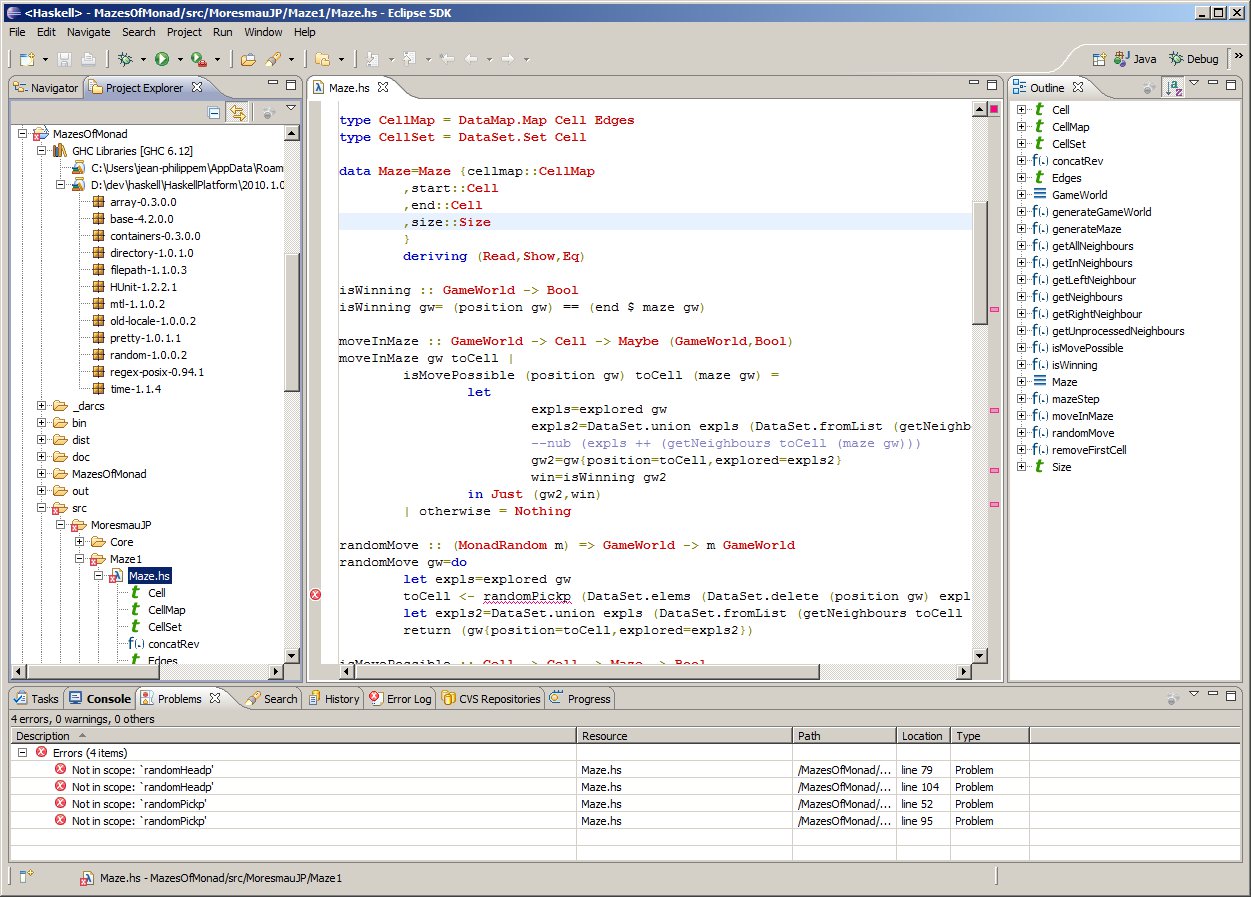

EclipseFP is a set of Eclipse plugins to allow working on Haskell code projects.

It features Cabal integration (.cabal file editor, uses Cabal settings for compilation), and GHC integration. Compilation is done via the GHC API, syntax coloring uses the GHC Lexer. Other standard Eclipse features like code outline, folding, and quick fixes for common errors are also provided. EclipseFP also allows launching GHCi sessions on any module including extensive debugging facilities. It uses Scion to bridge between the Java code for Eclipse and the Haskell APIs.

The source code is fully open source (Eclipse License) and anyone can contribute. The current version is 2.1.0, released in September 2011 and supporting GHC 6.12 and 7.0, and more versions with additional features are planned. Feedback on what is needed is welcome! The website has information on downloading binary releases and getting a copy of the source code. Support and bug tracking are handled through the Sourceforge forums.

Further reading

http://eclipsefp.sourceforge.net/

6.1.2 ghc-mod — Happy Haskell Programming on Emacs

ghc-mod is an enhancement of the Haskell mode on Emacs. It provides the following features:

- Completion: You can complete the names of keywords, modules, classes, functions, types, language extensions, etc.

- Code template: You can insert a code template according to the position of the cursor. For instance, “module Foo where” is inserted at the beginning of a buffer.

- Syntax check: Code lines with error messages are automatically highlighted thanks to flymake. You can display the error message of the current line in another window. hlint (→6.3.2) can be used instead of GHC to check Haskell syntax.

- Document browsing: You can browse the module document of the current line either locally or on Hackage.

- Function type: You can display the type/information of the function under the cursor. (new)

ghc-mod consists of code in Emacs Lisp and a sub-command in Haskell.

The Emacs code executes the sub-command to obtain information about

your Haskell environment. The sub-command makes use of the GHC API

for that purpose.

ghc-mod now supports “hs-source-dirs” in a cabal file and GHC 7.2.

Further reading

http://www.mew.org/~kazu/proj/ghc-mod/en/

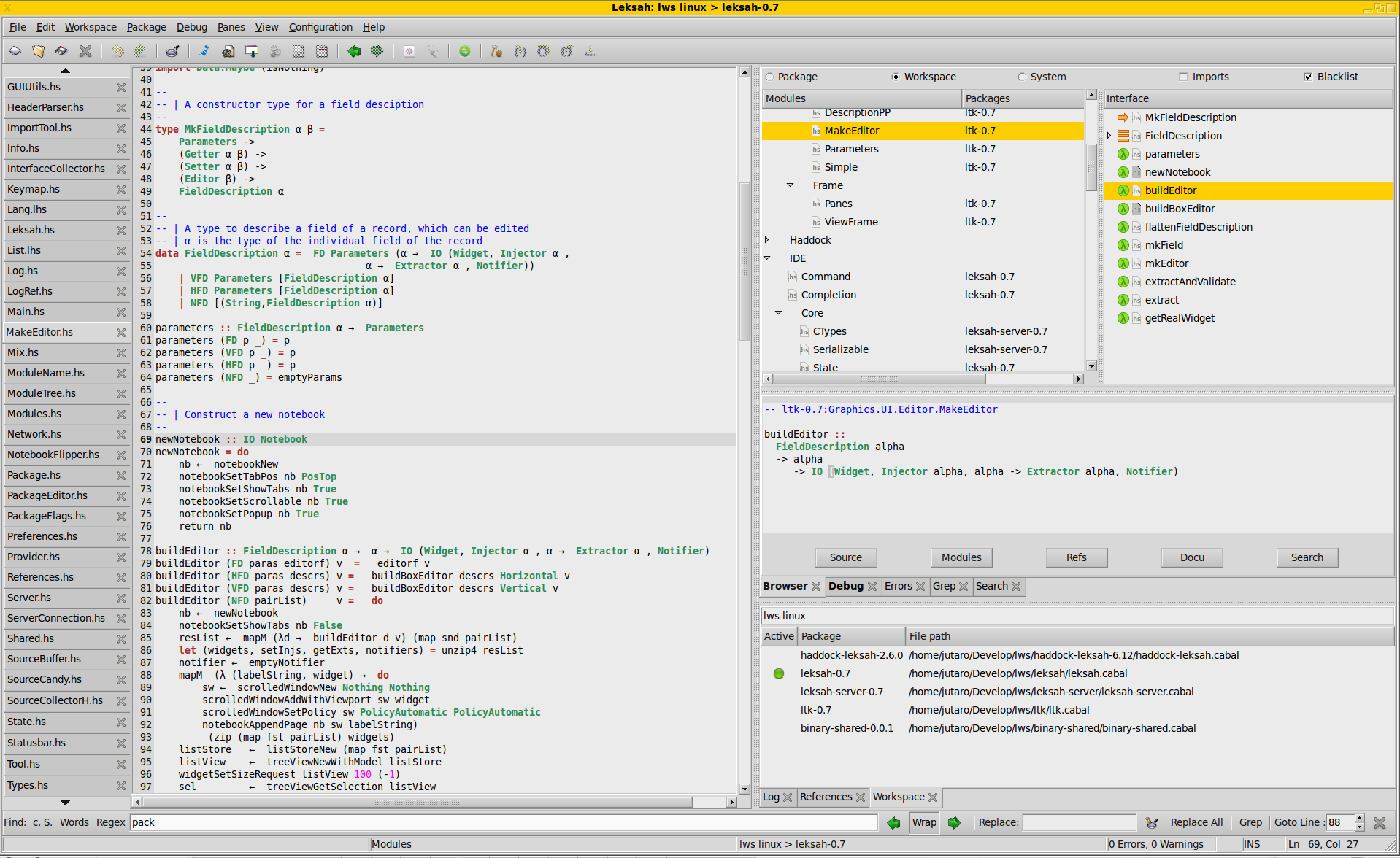

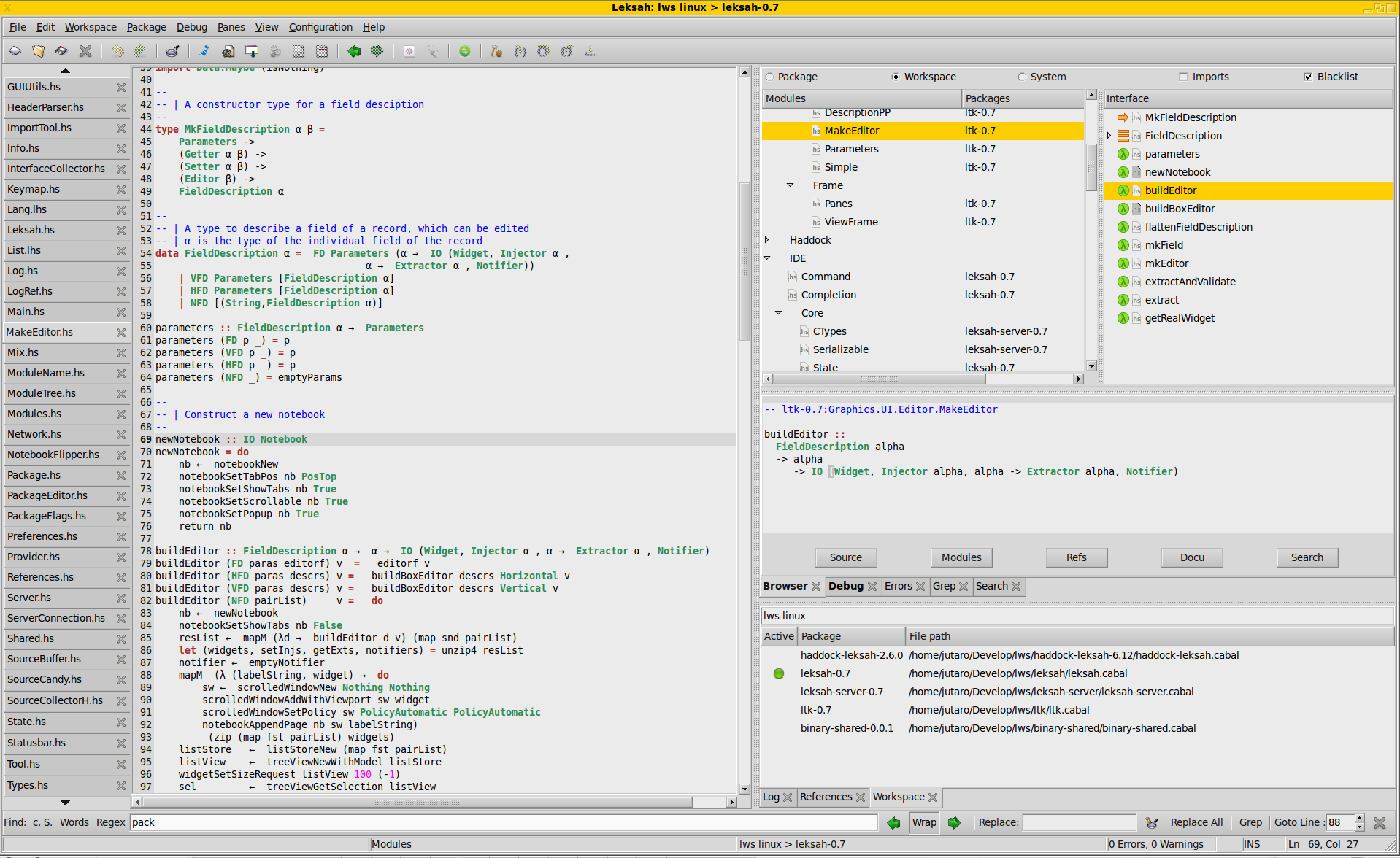

6.1.3 Leksah — The Haskell IDE in Haskell

Leksah is a Haskell IDE written in Haskell. It is still beta quality,

but we hope we can publish the 1.0 release this year.

The project has its focus on providing a practical tool for Haskell

development.

Leksah has already proved its usefulness in industrial projects.

We have had positive feedback and are pleased to see that a large number

of people are downloading Leksah and we hope you are finding it useful.

Leksah is at a critical point in its development, as it is difficult to bring a project of this size to success, considering we are just two developers who work on it in our rare spare time. If you can spare some time to work on part of the project, please get in touch by mailing the Leksah group or logging onto IRC #leksah. If there is something you do not like about Leksah, let us know and we can probably show you where to get started fixing it.

We believe that Leksah can contribute significantly to Haskell making its way from an academic language to a valuable tool in industry.

Further reading

http://leksah.org/

6.1.5 HaRe — The Haskell Refactorer

Refactorings are source-to-source program transformations which change program structure and organization, but not program functionality. Documented in catalogs and supported by tools, refactoring provides the means to adapt and improve the design of existing code, and has thus enabled the trend towards modern agile software development processes.

Our project, Refactoring Functional Programs, has as its major goal to build a tool to support refactorings in Haskell. The HaRe tool is now in its sixth major release. HaRe supports full Haskell 98, and is integrated with (X)Emacs and Vim. All the refactorings that HaRe supports, including renaming, scope change, generalization and a number of others, are module-aware, so that a change will be reflected in all the modules in a project, rather than just in the module where the change is initiated. The system also contains a set of data-oriented refactorings which together transform a concrete data type and associated uses of pattern matching into an abstract type and calls to assorted functions. The latest snapshots support the hierarchical modules extension, but only small parts of the hierarchical libraries, unfortunately.

In order to allow users to extend HaRe themselves,

HaRe includes an API for users to define their own program

transformations, together with Haddock documentation.

Please let us know if you are using the API.

Snapshots of HaRe are available from our webpage, as are related

presentations and publications from the group (including LDTA’05, TFP’05, SCAM’06, PEPM’08, PEPM’10, TFP’10, Huiqing’s PhD thesis and Chris’s PhD thesis).

The final report for the project appears there, too.

Recent developments

- HaRe 0.6, which is compatible with GHC-6.12.1, has been released; HaRe 0.6 is available on Hackage, and also downloadable from our project webpage.

- HaRe 0.6 comes with a number of new refactorings, including adding and removing fields and constructors to data-type definitions, folding and unfolding against as-patterns, merging and splitting function definitions, converting between let and where constructs, introducing pattern matching and generative folding.

- Support for automatic detection and semi-automatic elimination of duplicated code in Haskell programs is also available from HaRe 0.6.

- Support for a number of new refactorings for parallel Haskell has recently been added to HaRe. These include support to introduce simple divide and conquer parallelism, using the new Strategies module. The refactorings are designed to issue warnings to the user when ill-defined evaluation degrees are set, together with support for adding a threshold value.

Further reading

http://www.cs.kent.ac.uk/projects/refactor-fp/

6.2 Documentation

Haddock is a widely used documentation-generation tool for Haskell

library code. Haddock generates documentation by parsing and typechecking

Haskell source code directly and including documentation supplied by the

programmer in the form of specially-formatted comments in the source code

itself. Haddock has direct support in Cabal (→6.8.1), and is used to

generate the documentation for the hierarchical libraries that come with GHC,

Hugs, and nhc98

(http://www.haskell.org/ghc/docs/latest/html/libraries) as well as

the documentation on Hackage.

The latest release is version 2.9.4, released on October 3, 2011.

Recent changes:

- Support for GHC 7.2 and Alex 3.x

- New --qual flag for qualification of names

- Print doc coverage information to stdout

- Speed up generation of index

- Various bug fixes

Future plans

- Although Haddock understands many GHC language extensions, we would like it to understand all of them. Currently there are some constructs you cannot comment, like GADTs and associated type synonyms.

- Error messages are an area with room for improvement. We would like Haddock to include accurate line numbers in markup syntax errors.

- On the HTML rendering side we want to make more use of Javascript in order to make the viewing experience better. The frames-mode could be improved this way, for example.

- Finally, the long term plan is to split Haddock into one program that creates data from sources, and separate backend programs that use that data via the Haddock API. This will scale better, not requiring adding new backends to Haddock for every tool that needs its own format.

Further reading

Hoogle is an online Haskell API search engine. It searches the functions in the various libraries,

both by name and by type signature. When searching by name, the search just finds functions which

contain that name as a substring. However, when searching by types it attempts to find any functions

that might be appropriate, including argument reordering and missing arguments. The tool is written

in Haskell, and the source code is available online. Hoogle is available as a web interface, a

command line tool, and a lambdabot plugin.

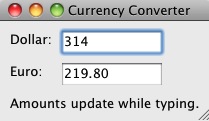

Hoogle has seen significant revisions in the last few months. Hoogle can now search all of Hackage (→6.8.1),

and has a brand new look and feel, including instant results as you type. Work continues improving

the performance and quality of the results.

Further reading

http://haskell.org/hoogle

This tool by Ralf Hinze and Andres Löh

is a preprocessor that transforms literate Haskell or Agda

code into LaTeX documents. The output is highly customizable

by means of formatting directives that are interpreted

by lhs2TeX. Other directives allow the selective inclusion

of program fragments, so that multiple versions of a program

and/or document can be produced from a common source.

The input is parsed using a liberal parser that can interpret

many languages with a Haskell-like syntax.

The program is stable and can take on large documents.

The current version is 1.17, so there has not been a new release

since the last report. Development repository and bug tracker

are on GitHub. There are still plans for a rewrite of lhs2TeX

with the goal of cleaning up the internals and making the functionality of

lhs2TeX available as a library.

Further reading

6.3 Testing and Analysis

shelltestrunner was first released in 2009, inspired by the test suite

in John Wiegley’s ledger project. It is a command-line tool for doing

repeatable functional testing of command-line programs or shell

commands. It reads simple declarative tests specifying a command, some

input, and the expected output, error output and exit status. Tests

can be run selectively, in parallel, with a timeout, in color, and/or

with differences highlighted.

In the last six months, shelltestrunner has had three releases (1.0,

1.1, 1.2) and acquired a home page. Projects using it include hledger,

yesod, berp, and eddie. shelltestrunner is free software released

under GPLv3+ from Hackage or http://joyful.com/shelltestrunner.

Further reading

http://joyful.com/repos/shelltestrunner

HLint is a tool that reads Haskell code and suggests changes to make it simpler.

For example, if you call maybe foo id it will suggest using fromMaybe foo instead.

HLint is compatible with almost all Haskell extensions, and can be easily extended with additional hints.

There have been numerous feature improvements since the last HCAR, including features to

detect duplicated code within a module. HLint can be tried online within hpaste.org.
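The suggestion mentioned above can be illustrated with a small before-and-after pair. This is only a sketch: the function names and the default value 8080 are hypothetical, and the exact wording of HLint's report is not reproduced here.

```haskell
import Data.Maybe (fromMaybe)

-- Before: the shape of code that the hint targets (maybe default id).
portBefore :: Maybe Int -> Int
portBefore = maybe 8080 id

-- After: the simpler equivalent using fromMaybe.
portAfter :: Maybe Int -> Int
portAfter = fromMaybe 8080
```

Both definitions return 8080 for Nothing and the wrapped value for Just, so the rewrite changes readability only, not behaviour.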

Further reading

http://community.haskell.org/~ndm/hlint/

This project was born during the 2009 Google Summer of Code under the

name “Improving space profiling experience”. The name

hp2any covers a set of tools and libraries to deal with heap

profiles of Haskell programs. At the present moment, the project

consists of three packages:

- hp2any-core: a library offering functions to read heap profiles during and after run, and to perform queries on them.

- hp2any-graph: an OpenGL-based live grapher that can show the memory usage of local and remote processes (the latter using a relay server included in the package), and a library exposing the graphing functionality to other applications.

- hp2any-manager: a GTK application that can display graphs of several heap profiles from earlier runs.

The project also aims at replacing hp2ps by reimplementing it

in Haskell and possibly adding new output formats. The manager

application shall be extended to display and compare the graphs in

more ways, to export them in other formats and also to support live

profiling right away instead of delegating that task to

hp2any-graph.

Recently, the hp2any project joined forces with

hp2pretty, which resulted in increased performance in the

core library.

Further reading

6.4 Optimization

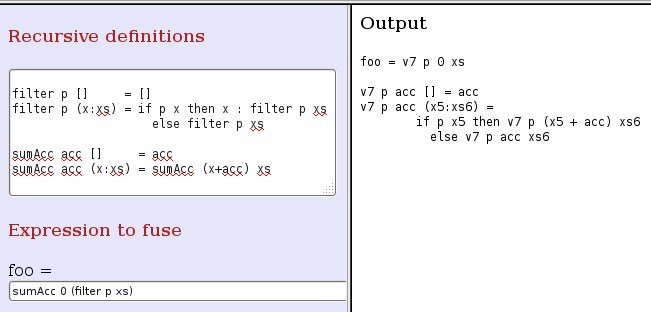

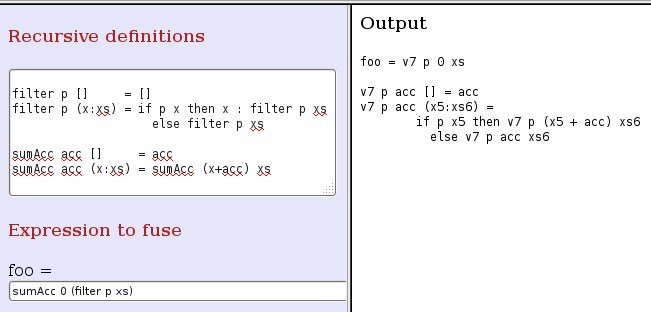

HFusion is an experimental tool for optimizing Haskell programs.

The tool performs source to source transformations by the application of a program

transformation technique called fusion. The aim of fusion is to reduce

memory management effort by eliminating the intermediate data structures produced

in function compositions.

It is based on an algebraic approach where functions are internally represented

in terms of a recursive program scheme known as hylomorphism.

We offer a web interface to test the technique on user-supplied recursive definitions and

HFusion is also available as a library on Hackage.

The last improvement to HFusion has been to accept as input an expression containing any number

of compositions, returning the expression which results from applying fusion to all of them.

Compositions which cannot be handled by HFusion are left unmodified.

In its current state, HFusion is able to fuse compositions of general recursive functions,

including primitive recursive functions (like dropWhile or insertions in binary search trees),

functions that make recursion over multiple arguments like zip, zipWith or equality predicates,

mutually recursive functions, and (with some limitations) functions with accumulators like foldl.

In general, HFusion is able to eliminate intermediate data structures of regular data types

(sum-of-product types plus different forms of generalized trees).
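As a rough illustration of what fusion achieves, the sketch below shows a composition and a hand-fused equivalent. It was written by hand for this purpose and is not output produced by HFusion; the function names are hypothetical.

```haskell
-- Unfused: map builds an intermediate list that sum then consumes.
sumSquares :: [Int] -> Int
sumSquares = sum . map (\x -> x * x)

-- Hand-fused: a single recursive traversal with no intermediate list.
sumSquaresFused :: [Int] -> Int
sumSquaresFused []       = 0
sumSquaresFused (x : xs) = x * x + sumSquaresFused xs
```

The two functions agree on every finite list; the fused version simply never allocates the list of squares.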

Further reading

6.4.2 Optimizing Generic Functions

Datatype-generic programming increases program reliability by reducing code

duplication and enhancing reusability and modularity. Several generic

programming libraries for Haskell have been developed in the past few years.

These libraries have been compared in detail with respect to expressiveness,

extensibility, typing issues, etc., but performance comparisons have been brief,

limited, and preliminary. It is widely believed that generic programs run slower

than hand-written code.

At Utrecht University we are looking into the performance of different generic

programming libraries and how to optimize them. We have confirmed that generic

programs, when compiled with the standard optimization flags of the Glasgow

Haskell Compiler (GHC), are substantially slower than their hand-written

counterparts.

However, we have also found that advanced optimization capabilities of GHC,

such as inline pragmas and rewrite rules, can be used to further optimize

generic functions, often achieving the same efficiency as hand-written code.

We are continuing our research in this topic and hope to provide more

information in the near future.
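For readers unfamiliar with the two GHC features named above, here is a minimal sketch of what they look like in source code. It is a generic illustration on a hypothetical list function, not one of the optimizations studied in this work. GHC only considers rewrite rules when optimization is enabled (for example with -O), and whether a given rule fires depends on inlining decisions.

```haskell
-- A hypothetical list map, kept out of line so the rule below can match it.
myMap :: (a -> b) -> [a] -> [b]
myMap _ []       = []
myMap f (x : xs) = f x : myMap f xs
{-# NOINLINE myMap #-}

-- Rewrite rule: two traversals are replaced by one.
{-# RULES
"myMap/myMap" forall f g xs. myMap f (myMap g xs) = myMap (f . g) xs
  #-}

-- A small wrapper that GHC is asked to inline at its call sites,
-- which exposes the nested myMap calls to the rule.
incrementAll :: [Int] -> [Int]
incrementAll = myMap (+ 1)
{-# INLINE incrementAll #-}

-- After inlining, this has the shape myMap f (myMap g xs).
pipeline :: [Int] -> [Int]
pipeline xs = myMap (* 2) (incrementAll xs)
```

The rule preserves meaning, so pipeline returns the same list whether or not the rule is applied.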

Further reading

http://dreixel.net/research/pdf/ogie.pdf

6.5 Boilerplate Removal

6.5.1 A Generic Deriving Mechanism for Haskell

Haskell’s deriving mechanism supports the automatic generation of instances for

a number of functions. The Haskell 98 Report only specifies how to generate

instances for the Eq, Ord, Enum, Bounded, Show, and Read classes. The

description of how to generate instances is largely informal. As a consequence,

the portability of instances across different compilers is not guaranteed.

Additionally, the generation of instances imposes restrictions on the shape of

datatypes, depending on the particular class to derive.

We have developed a new approach to Haskell’s deriving mechanism, which allows

users to specify how to derive arbitrary class instances using standard

datatype-generic programming techniques. Generic functions, including the

methods from six standard Haskell 98 derivable classes, can be specified

entirely within Haskell, making them more

lightweight and portable.

We have implemented our deriving mechanism together with many new derivable

classes in UHC (→3.3) and

GHC.

The implementation in GHC has a more convenient syntax; consider enumeration:

    class GEnum a where
      genum :: [a]
      default genum :: (Representable a, Enum' (Rep a)) => [a]
      genum = map to enum'

    instance (GEnum a) => GEnum (Maybe a)
    instance (GEnum a) => GEnum [a]

These instances are empty, and therefore use the (generic) default implementation. This is as convenient as writing deriving clauses, but allows defining more generic classes.

This implementation relies on the new functionality of default signatures, like in genum above, which are like standard default methods but allow for a different type signature.
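A self-contained variant of the enumeration example might look as follows. It is a sketch under some assumptions: it uses the class name Generic from the GHC.Generics module as shipped with GHC 7.2 and later (where the snippet above says Representable), and the helper class GEnum' and the type Colour are hypothetical names introduced here for illustration.

```haskell
{-# LANGUAGE DefaultSignatures, DeriveGeneric, FlexibleContexts, TypeOperators #-}

import GHC.Generics

-- Enumeration on generic representation types (hypothetical helper class).
class GEnum' f where
  genum' :: [f p]

-- A constructor without fields has exactly one value.
instance GEnum' U1 where
  genum' = [U1]

-- Sums: all values of the left alternative, then all of the right.
instance (GEnum' f, GEnum' g) => GEnum' (f :+: g) where
  genum' = map L1 genum' ++ map R1 genum'

-- Products: every combination of the two fields.
instance (GEnum' f, GEnum' g) => GEnum' (f :*: g) where
  genum' = [x :*: y | x <- genum', y <- genum']

-- Fields: enumerate the field type through the user-facing class.
instance GEnum a => GEnum' (K1 i a) where
  genum' = map K1 genum

-- Metadata wrappers are transparent.
instance GEnum' f => GEnum' (M1 i c f) where
  genum' = map M1 genum'

-- The user-facing class with a generic default implementation.
class GEnum a where
  genum :: [a]
  default genum :: (Generic a, GEnum' (Rep a)) => [a]
  genum = map to genum'

data Colour = Red | Green | Blue
  deriving (Eq, Show, Generic)

-- Empty instances pick up the generic default.
instance GEnum Colour
instance GEnum Bool
instance GEnum a => GEnum (Maybe a)
```

With these definitions, genum :: [Colour] evaluates to [Red,Green,Blue] and genum :: [Maybe Bool] to [Nothing,Just False,Just True].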

Further reading

http://www.haskell.org/haskellwiki/Generics

6.6 Code Management

Darcs is a distributed revision control system written in Haskell. In

Darcs, every copy of your source code is a full repository, which allows for

full operation in a disconnected environment, and also allows anyone with

read access to a Darcs repository to easily create their own branch and

modify it with the full power of Darcs’ revision control. Darcs is based on

an underlying theory of patches, which allows for safe reordering and

merging of patches even in complex scenarios. For all its power, Darcs

remains a very easy to use tool for every day use because it follows the

principle of keeping simple things simple.
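The idea of reordering patches can be sketched with a deliberately tiny model. This is a toy, not Darcs' actual patch representation or algorithm: the Patch type, apply, and commute are hypothetical, only line insertions are modelled, and in this model commutation always succeeds, whereas in Darcs it can fail when one patch depends on another.

```haskell
-- A toy patch: insert one line at a zero-based position.
data Patch = Insert Int String
  deriving (Eq, Show)

-- Apply a patch to a file represented as a list of lines.
apply :: Patch -> [String] -> [String]
apply (Insert i l) ls =
  let (before, after) = splitAt i ls
  in before ++ l : after

-- Given "p then q", return (q', p') such that "q' then p'" has the same
-- effect. Positions are adjusted to account for the other insertion.
commute :: (Patch, Patch) -> (Patch, Patch)
commute (Insert i a, Insert j b)
  | j <= i    = (Insert j b, Insert (i + 1) a)
  | otherwise = (Insert (j - 1) b, Insert i a)
```

For example, applying Insert 1 "x" and then Insert 3 "y" to the lines a, b, c gives a, x, b, y, c; commute turns the pair into Insert 2 "y" followed by Insert 1 "x", which produces the same file.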

Our most recent release, Darcs 2.5.2, was in March 2011. The Darcs

2.5.x line provides faster repository-local operations, and faster

record with long patch histories, among other bug fixes and features.

The most recent version adds compatibility with Haskell Platform

2011.2.0.0.

We are currently working on releasing Darcs 2.8, which will include Alexey Levan’s 2010 Google Summer of Code work on optimised darcs get (using the “optimize --http” command) and a few refinements to Adolfo Builes’ cache reliability work. The Darcs 2.8 release is planned to include a faster and more human-readable annotate command.

Meanwhile, we are happy to have been able to participate in the Google Summer of Code 2011 (as part of Haskell.org). We had two projects this year, one to develop a bidirectional bridge between Darcs and Git (and potentially other VCSs), and the other to do some new exploratory work on primitive patch types for a future Darcs 3. The bridge project will improve collaboration between Darcs and Git users, allowing each to contribute to projects hosted in the other’s VCS of choice. The primitive patches work will allow us to implement some ideas we have been discussing in the Darcs team in recent months, in particular, separation of file identifiers from file names and the separation of on-disk patch contents from their in-memory representation. Making a prototype implementation of these ideas will give us a better idea how feasible they are in practice and help us to identify the technical difficulties that may be lurking around the corner. Both projects were successful; see below for their respective wrap-ups and prototypes.

Darcs is free software licensed under the GNU GPL (version 2 or

greater). Darcs is a proud

member of the Software Freedom Conservancy, a US tax-exempt 501(c)(3)

organization. We accept donations at

http://darcs.net/donations.html.

Further reading

http://darcsden.com is a free Darcs (→6.6.1) repository

hosting service, similar to patch-tag.com or (in essence)

github. The darcsden software is also available (on darcsden)

so that anyone can set up a similar service. darcsden is available

under BSD license and was created by Alex Suraci.

Alex keeps the service running and fixes bugs, but is mostly focussed

on other projects. darcsden has a clean UI and codebase and is a

viable hosting option for smaller projects despite occasional

glitches.

The last Hackage release was in 2010. Other committers have been

submitting patches, and the darcsden software is close to becoming a

just-works installable darcs web ui for general use.

Further reading

http://darcsden.com

darcsum is an emacs add-on providing an efficient, pcl-cvs-like interface

for the Darcs revision control system (→6.6.1). It is especially useful for

reviewing and recording pending changes.

Simon Michael took over maintainership in 2010, and tried to make it more

robust with current Darcs. The tool remains slightly fragile, as it

depends on Darcs’ exact command-line output, and needs updating when that

changes. Dave Love has contributed a large number of cleanups.

darcsum is available under the GPL version 2 or later from

http://joyful.com/darcsum.

In the last six months darcsum acquired a home page, but there has

been little other activity. We are looking for a new maintainer for

this useful tool.

Further reading

http://joyful.com/darcsum/

6.6.6 cab — A Maintenance Command of Haskell Cabal Packages

cab is a MacPorts-like maintenance command for Haskell Cabal packages. Parts of the program are wrappers around ghc-pkg, cabal, and cabal-dev.

If you are always confused by inconsistencies between ghc-pkg and cabal, or if you want a way to check for all outdated packages or to remove outdated packages recursively, this command can help.

cab now supports GHC 7.2.

Further reading

http://www.mew.org/~kazu/proj/cab/en/

Hackage-Debian is a tool for creating a Debian repository with all, or

almost all, of the packages in Hackage. It is heavily based on the debian

package available at http://hackage.haskell.org/package/debian. It should build

a snapshot of the Hackage database and then track each new package added to

build it on demand. It is still under development, but the first release

should be announced soon.

A limitation of the first version being developed is that it only builds the

latest version of each library. So, if a library depends on an older version

of another library, it will not be built. This is the reason why it does not

build all packages, but almost all of them.

Also, the first version will only deal with libraries, but there are plans to

also build programs.

The darcs repository for both hackage-debian and the modified version of the

debian package that it uses are available at

http://marcot.eti.br/darcs/hackage-debian and http://marcot.eti.br/darcs/haskell-debian.

6.7 Interfacing to other Languages

6.8 Deployment

Background

Cabal is the standard packaging system for Haskell software. It specifies a standard way in which Haskell libraries and applications can be packaged so that it is easy for consumers to use them, or re-package them, regardless of the Haskell implementation or installation platform.

Hackage is a distribution point for Cabal packages. It is an online archive of Cabal packages which can be used via the website and client-side software such as cabal-install. Hackage enables users to find, browse and download Cabal packages, plus view their API documentation.

cabal-install is the command line interface for the Cabal and Hackage system. It provides a command line program cabal which has sub-commands for installing and managing Haskell packages.

Recent progress

We have had two successful Google Summer of Code projects on Cabal this year. Sam Anklesaria worked on a “cabal repl” feature to launch an interactive GHCi session with all the appropriate pre-processing and context from the project’s .cabal file. Mikhail Glushenkov worked on a feature so that “cabal install” can build independent packages in parallel (not to be confused with building modules within a package in parallel). The code from both projects is available and they are awaiting integration into the main Cabal repository, which we expect to happen over the course of the next few months.

The “cabal test” feature which was developed as a GSoC project last summer has matured significantly in the last 6 months, thanks to continuing effort from Thomas Tuegel and Johan Tibell. The basic test interface will be ready to use in the next release, and there has been some progress on the “detailed” test interface.

The IHG is currently sponsoring some work on cabal-install. The first fruit of this work is a new dependency solver for cabal-install which is now included in the development version. The new solver can find solutions in more cases and produces more detailed error messages when it cannot find a solution. In addition, it is better at avoiding, and warning about, breaking existing installed packages. We also expect it to be a better basis for other features in future. For more details see the presentation by Andres Löh.

http://haskell.org/haskellwiki/HaskellImplementorsWorkshop/2011/Loeh

The last 6 months have seen significant progress on the new hackage-server implementation with help from many new volunteers, in particular Max Bolingbroke, but also several other people who helped at hackathons and subsequently. The IHG funded Well-Typed to improve package mirroring so that continuous nearly-live mirroring is now possible. We are also grateful to factis research GmbH who have kindly donated a VM to help the hackage developers test the new server code. We expect to do live mirroring and public beta testing using this server during the next few months.

Looking forward

Users are increasingly relying on hackage and cabal-install and are increasingly frustrated by dependency problems. Solutions to the variety of problems do exist. It will however take sustained effort to solve them. The good news is that there is the realistic prospect of the new hackage-server being ready in the not too distant future with features to help monitor and encourage package quality, and the recent work on cabal-install should reduce the frustration level somewhat.

The last 6 months have seen a good upswing in the number of volunteers spending their time on cabal and hackage, so much so that a clear bottleneck is patch review and integration bandwidth. A similar issue is that many of the long standing bugs and feature requests require significant refactoring work which many volunteers feel reluctant or unable to do. Assistance in these areas would be very valuable indeed.

We would like to encourage people considering contributing to join the cabal-devel mailing list so that we can increase development discussion and improve collaboration. The bug tracker is reasonably well maintained and it should be relatively clear to new contributors what is in need of attention and which tasks are considered relatively easy.

Further reading

7 Libraries

7.1 Processing Haskell

7.2 Parsing and Transforming

Library for parsing and manipulating ePub files and OPF package data. An attempt has been made here to very thoroughly implement the OPF Package Document specification.

epub-metadata is available from Hackage, the Darcs repository below, and also in binary form for Arch Linux through the AUR.

See also epub-tools (→8.8.10).

Further reading

7.2.3 Utrecht Parser Combinator Library: uu-parsinglib

The previous extension for recognizing merging parsers has been generalized, so that any kind of applicative and monadic parsers can now be merged in an interleaved way. As an example, take the situation where many different programs write log entries into a log file, and where each log entry is uniquely identified by a transaction number (or process number) which can be used to distinguish them. E.g., assume that each transaction consists of an a, a b and a c action, and that a digit is used to identify the individual actions belonging to the same transaction; the individual transactions can now be recognized by the parser:

    pABC :: Grammar String
    pABC = (\ a d -> d:a) <$> pA <*> (pDigit' >>=
             \d -> pB *> mkGram (pSym d) *>
                   pC *> mkGram (pSym d)
           )

    run (pmMany(pABC)) "a2a1b1b2c2a3b3c1c3"

Result: ["2a","1a","3a"]
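To make the expected result concrete, here is a minimal sketch in plain Haskell that does not use uu-parsinglib at all. It only regroups the same log by transaction digit and checks that every transaction consists of an a, a b and a c, in that order. The helpers entries and transactions are hypothetical names introduced for this illustration, and the sketch says nothing about how the library performs the interleaving internally.

```haskell
import Data.List (nub)

-- Split a log such as "a2a1b1..." into (action, transaction id) pairs.
-- Returns Nothing if the input has an odd number of characters.
entries :: String -> Maybe [(Char, Char)]
entries (act : tid : rest) = ((act, tid) :) <$> entries rest
entries []                 = Just []
entries _                  = Nothing

-- Group the actions per transaction id (in order of first appearance)
-- and accept the log only if every transaction reads "abc".
-- Each accepted transaction is reported as its id followed by 'a',
-- mirroring the shape of the result shown above.
transactions :: String -> Maybe [String]
transactions input = do
  es <- entries input
  let ids         = nub (map snd es)
      actionsOf i = [act | (act, tid) <- es, tid == i]
  if all (\i -> actionsOf i == "abc") ids
    then Just [[i, 'a'] | i <- ids]
    else Nothing
```

For the input used above, transactions "a2a1b1b2c2a3b3c1c3" evaluates to Just ["2a","1a","3a"], while a log with actions out of order, such as "a1c1b1", yields Nothing.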

Furthermore, the library was provided with many more examples in two modules in the Demo directory.

Features

- Much simpler internals than the old library (http://haskell.org/communities/05-2009/html/report.html#sect5.5.8).

- Combinators for easily describing parsers which produce their results online, do not hang on to the input and provide excellent error messages. As such they are “surprise free” when used by people not fully aware of their internal workings.

- Parsers “correct” the input such that parsing can proceed when an erroneous input is encountered.

- The library provides the preferred applicative interface, plus a monadic interface for the cases where this is really needed (which is hardly ever).

- No need for try-like constructs, which make writing Parsec-based parsers tricky.

- Scanners can be switched dynamically, so several different languages can occur intertwined in a single input file.

- Parsers can be run in an interleaved way, thus generalizing the merging and permuting parsers into a single applicative interface. This makes it e.g. possible to deal with white space or comments in the input in a completely separate way, without having to think about this in the parser for the language at hand (provided of course that white space is not syntactically relevant).

Future plans

Since the part dealing with merging is relatively independent of the underlying parsing machinery we may split this off into a separate package. This will enable us also to make use of different parsing engines when combining parsers in a much more dynamic way. In such cases we want to avoid too many static analyses.

Future versions will contain a check for grammars being not left-recursive, thus taking away the only remaining source of surprises when using parser combinator libraries. This makes the library even better suited for use in teaching environments. Future versions of the library, using even more abstract interpretation, will make use of computed look-ahead information to speed up the parsing process further.

The old library in the uulib package stays stable, and can continue to be used. A few changes were needed in order to make it compile with GHC 7.2.

Contact

If you are interested in using the current version of the library in order

to provide feedback on the provided interface, contact <doaitse at swierstra.net>. There is a low volume, moderated mailing list which was moved to <parsing at lists.science.uu.nl> (see also http://www.cs.uu.nl/wiki/bin/view/HUT/ParserCombinators).

7.2.4 Regular Expression Matching with Partial Derivatives

We are still improving the performance of our

matching algorithms. The latest implementation can be downloaded

via hackage.

Further reading

regex-applicative aims to be an efficient and easy-to-use parsing combinator library for Haskell based on regular expressions.

Regular expressions have Perl-like (left-biased) semantics to satisfy most of

the daily regex needs, but also allow longest matching prefix search,

which is useful for lexical analysis.

For example, the following code finds filename extensions:

    import Text.Regex.Applicative

    getExtension :: String -> Maybe String
    getExtension str =
      str =~
        many anySym *>
        sym '.' *>
        many anySym
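As a point of comparison, the same behaviour can be sketched with list functions from the Prelude only. This assumes that the first many anySym above is greedy, so that the regular expression splits the string at its last dot; under that assumption the hand-written version below should agree with it. The name getExtensionPlain is hypothetical and not part of regex-applicative.

```haskell
-- Return everything after the last '.' in the string,
-- or Nothing if the string contains no '.' at all.
getExtensionPlain :: String -> Maybe String
getExtensionPlain str =
  case break (== '.') (reverse str) of
    -- revExt is the reversed text that follows the last dot.
    (revExt, _dot : _) -> Just (reverse revExt)
    -- No dot was found anywhere in the input.
    _                  -> Nothing
```

For instance, getExtensionPlain "report.tar.gz" gives Just "gz" and getExtensionPlain "README" gives Nothing. The regular-expression version states the same intent declaratively, which is the main attraction once patterns become more involved.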

More examples can be found on the wiki.

Further reading

7.3 Mathematical Objects

7.3.1 normaldistribution: Minimum Fuss Normally Distributed Random Values

Normaldistribution is a new package that lets you produce normally

distributed random values with a minimum of fuss. The API builds

upon, and is largely analogous to, that of the Haskell 98 Random

module (more recently System.Random). Usage can be as simple as:

sample <- normalIO. For more information and examples see

the package description on Hackage.
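For readers curious how uniform random numbers can be turned into normally distributed ones, the following is a minimal sketch of the classic Box-Muller transform as a pure function. It is an illustration of the general technique only: it does not use the normaldistribution API, and the names boxMuller and scaleNormal are hypothetical. The two uniform inputs would in practice come from a random number generator.

```haskell
-- Map two independent uniform samples, u1 in (0,1] and u2 in [0,1),
-- to two independent standard normal samples (mean 0, deviation 1).
boxMuller :: Double -> Double -> (Double, Double)
boxMuller u1 u2 = (r * cos theta, r * sin theta)
  where
    r     = sqrt (-2 * log u1)  -- radius derived from the first sample
    theta = 2 * pi * u2         -- angle derived from the second sample

-- Rescale a standard normal sample to a given mean and standard deviation.
scaleNormal :: Double -> Double -> Double -> Double
scaleNormal mean sd z = mean + sd * z
```

Keeping the transform pure means it can be tested without any source of randomness, and it can be combined with whichever generator a program already uses.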

Further reading

http://hackage.haskell.org/package/normaldistribution

7.3.2 dimensional: Statically Checked Physical Dimensions

Dimensional is a library providing data types for performing

arithmetic with physical quantities and units. Information about

the physical dimensions of the quantities/units is embedded in their

types, and the validity of operations is verified by the type checker

at compile time. The boxing and unboxing of numerical values as

quantities is done by multiplication and division with units. The

library is designed to, as far as is practical, enforce/encourage

best practices of unit usage within the framework of the SI. Example:

    d :: Fractional a => Time a -> Length a
    d t = a / _2 * t ^ pos2
      where a = 9.82 *~ (meter / second ^ pos2)
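The idea of carrying dimensions in types can be illustrated with a much simpler sketch based on phantom types. This is not how dimensional is implemented (it tracks integer exponents of the base dimensions at the type level); all names below, such as Quantity, Per, metres and per, are hypothetical and chosen only for this example.

```haskell
-- Phantom tags for dimensions; they have no values.
data Length
data Time
data Per a b  -- quotient of two dimensions, e.g. Per Length Time

-- A number tagged with its dimension. The tag exists only at compile time.
newtype Quantity dim = Quantity Double
  deriving (Show, Eq)

metres :: Double -> Quantity Length
metres = Quantity

seconds :: Double -> Quantity Time
seconds = Quantity

-- Addition is only defined for two quantities of the same dimension.
plus :: Quantity d -> Quantity d -> Quantity d
plus (Quantity x) (Quantity y) = Quantity (x + y)

-- Division records both dimensions in the result type.
per :: Quantity a -> Quantity b -> Quantity (Per a b)
per (Quantity x) (Quantity y) = Quantity (x / y)

speed :: Quantity (Per Length Time)
speed = metres 10 `per` seconds 4

-- Rejected by the type checker, since Length and Time differ:
-- bad = metres 10 `plus` seconds 4
```

Even this small version catches the addition of a length to a time at compile time. What it cannot do is recognise that, say, a speed multiplied by a time is a length again, which is where type-level arithmetic on exponents comes in.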

Ongoing experimental work includes:

The core library, dimensional, can be installed off Hackage using

cabal. The experimental packages can be cloned off of Github.

Dimensional relies on numtype for type-level integers (e.g., pos2 in the above example), ad for automatic differentiation,

and HList (→7.4.1) for type-level vector and matrix representations.

Further reading

7.3.3 AERN-Real and Friends

AERN stands for Approximating Exact Real Numbers.

We are developing a family of libraries that will provide:

- a reliable and fast arbitrary precision correctly rounded interval arithmetic, including both standard and inverted intervals with Kaucher arithmetic

- arbitrary precision arithmetic of interval polynomials and polynomial intervals to

  - automatically reduce overestimations in interval computations

  - efficiently support validated numerical integration

  - automatically decide many inequalities and interval inclusions with non-linear and elementary functions that occur in numerical theorem proving and specifically in the verification of numerical programs

- a type class hierarchy for validated and exact computation, featuring

  - standard mathematical structures such as posets and lattices extended to take account of rounding errors and partially decided relations such as equality

  - separate treatment of numerical order and interval refinement order

  - ability to increase computational effort to reduce the effect of rounding and partiality, converging to no rounding and total relations with infinite effort

  - extensive set of QuickCheck properties for each type class, enabling automatic checking of, e.g., algebraic properties such as associativity extended to take account of rounding

- a framework for distributed query-driven lazy dataflow exact numerical computation with tidy exact semantics based on Domain Theory
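Two of the notions in this list, partially decided relations and the distinction between numerical order and refinement order, can be illustrated with a toy interval type. This is a sketch under strong simplifying assumptions: it uses ordinary Double arithmetic without outward rounding, so unlike AERN it is not a validated arithmetic, and the names Interval, addI, lessI and refines are hypothetical rather than taken from the AERN libraries.

```haskell
-- A closed interval [lo, hi]; the sketch assumes lo <= hi.
data Interval = Interval Double Double
  deriving (Show, Eq)

-- Interval addition. NOTE: endpoints are rounded to nearest here,
-- so the result is not guaranteed to enclose the exact sum.
addI :: Interval -> Interval -> Interval
addI (Interval a b) (Interval c d) = Interval (a + c) (b + d)

-- Numerical order, partially decided: Just True if every point of the
-- first interval is below every point of the second, Just False if no
-- point of the first is below any point of the second, and Nothing
-- when the intervals overlap and the question cannot be settled.
lessI :: Interval -> Interval -> Maybe Bool
lessI (Interval a b) (Interval c d)
  | b < c     = Just True
  | a >= d    = Just False
  | otherwise = Nothing

-- Refinement order: the first interval carries at least as much
-- information as the second, i.e. it is contained in it.
refines :: Interval -> Interval -> Bool
refines (Interval a b) (Interval c d) = c <= a && b <= d
```

When lessI returns Nothing, a caller can recompute both arguments with more effort to obtain narrower intervals and then ask again, which is the effort-driven convergence described in the list above.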

There are stable older versions of the libraries

on Hackage but these lack the type classes described above.

We are currently in the process of redesigning and rewriting the libraries

from scratch.

Out of the newly designed code we recently released libraries

featuring

- the type classes for approximate real number operations

- correctly rounded real interval arithmetic with Double endpoints

A release of interval arithmetic with MPFR endpoints is planned as soon as

a solution is found for an easier installation of the hmpfr package.

(Currently one has to compile a ghc without gmp to use hmpfr.)

We have made progress on implementing polynomial intervals

with a core written in C but have suspended the development until

we finish a Haskell-only implementation of an arithmetic of interval polynomials

(i.e., polynomials with interval coefficients).

We are likely to use interval polynomials as endpoints for

polynomial intervals when the work on polynomial intervals is resumed.

The development files now include demos that apply interval polynomials

on validated simulation of selected ODE IVPs and hybrid systems.

All AERN development is open and we welcome contributions and

new developers.

Further reading

http://code.google.com/p/aern/

Paraiso is a domain-specific language (DSL) embedded in Haskell, aimed

at generating explicit solvers of partial differential equations

for accelerated and/or distributed computers. Equations for

fluids, plasma, general relativity, and many more fall into this

category. This is still a tiny domain for a computer scientist, but

large enough that an astrophysicist (as I am) might spend even his entire

life in it.

In Paraiso we can describe equation-solving algorithms in

mathematical, simple notation using builder monads. At the

moment it can generate programs for multicore CPUs as well as a single

GPU, and tune their performance via automated benchmarking and genetic

algorithms. The experiment is under way; the fluid simulator I am using

is 464 lines in Haskell. So far, Paraiso has tried more than

117’000 different implementations of this single algorithm, each being

about 10’000 lines of CUDA program. The best one found so far is 33.4

times faster than the initial guess, and twice as fast as the

hand-tuned implementation.

Anyone can get Paraiso from hackage

(http://hackage.haskell.org/package/Paraiso) or github

(https://github.com/nushio3/Paraiso).

The next big challenge is to make Paraiso generate distributed

computations.

Further reading

http://paraiso-lang.org/wiki/

7.4 Data Types and Data Structures

7.4.1 HList — A Library for Typed Heterogeneous Collections

HList is a comprehensive, general purpose Haskell library for typed

heterogeneous collections including extensible polymorphic records and

variants. HList is analogous to the standard list

library, providing a host of various construction, look-up, filtering,

and iteration primitives. In contrast to the regular lists, elements

of heterogeneous lists do not have to have the same type. HList lets

the user formulate statically checkable constraints: for example, no

two elements of a collection may have the same type (so the elements

can be unambiguously indexed by their type).
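To give a feel for what a typed heterogeneous list is, here is a minimal sketch using GADTs and promoted type-level lists. This is a different encoding from the one the HList library itself uses, which is based on type classes, and it relies on GHC extensions that are newer than the library version described here. The names hHead, hTail, hLength and example are chosen for this illustration only.

```haskell
{-# LANGUAGE DataKinds, GADTs, KindSignatures, TypeOperators #-}

import Data.Kind (Type)

-- A list whose type records the type of every element, in order.
data HList (ts :: [Type]) where
  HNil  :: HList '[]
  HCons :: t -> HList ts -> HList (t ': ts)

-- Taking the head of an empty list is a type error, not a runtime error.
hHead :: HList (t ': ts) -> t
hHead (HCons x _) = x

hTail :: HList (t ': ts) -> HList ts
hTail (HCons _ xs) = xs

-- Functions that ignore the element types work for any index list.
hLength :: HList ts -> Int
hLength HNil         = 0
hLength (HCons _ xs) = 1 + hLength xs

example :: HList '[Int, String, Bool]
example = HCons 42 (HCons "answer" (HCons True HNil))
```

Here hHead (hTail example) has type String, and an expression such as hHead HNil is rejected at compile time. Constraints like "no two elements have the same type" need further type-level machinery, which is what the library provides.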

An immediate application of HLists is the implementation of open,

extensible records with first-class, reusable, and compile-time only

labels. The dual application is extensible polymorphic variants (open

unions). HList contains several implementations of open records,

including records as sequences of field values, where the type of each

field is annotated with its phantom label. We and others have also used

HList for type-safe database access in Haskell. HList-based Records

form the basis of OOHaskell. The HList library relies on common

extensions of Haskell 2010. HList is being used in AspectAG

(→5.4.2), typed EDSL of attribute grammars, and in HaskellDB.

The October 2011 version of HList library has many changes, mainly related to

deprecating TypeCast (in favor of ~) and getting rid

of overlapping instances. The only use of OverlappingInstances is in

the implementation of the generic type equality predicate

TypeEq. We plan to remove even that remaining single

occurrence. The code works with GHC 7.0.4.

Future plans include the implementation of TypeEq without resorting to

overlapping instances (so, HList will be overlapping-free), and moving

towards type functions and expressive kinds.

Further reading

Persistent is a type-safe data store interface for Haskell.

Haskell has many different database bindings available. However, most

of these have little knowledge of a schema and therefore do not provide

useful static guarantees. They also force database-dependent interfaces and

data structures on the programmer.

There are Haskell specific data stores such as acid-state that get around these flaws.

They allow one to easily store any Haskell type and have type-safe interactions with data.

However, the use case is limited to in memory storage without replication,

and they aren’t designed to interface with other programming languages.

Persistent maintains much of the advantage of using native Haskell data types — you store and retrieve normal Haskell records, and your queries are also type-safe — they must match the schema. However, Persistent lets you persist your data to a battle tested database of your choice that is well optimized for your problem domain. Persistent is backend agnostic, and there are currently interfaces to Sqlite, Postgresql, and MongoDB.

Since the last report, Persistent has undergone an internal re-write and major API changes.

The MongoDB backend has been polished and works out of the box with the Yesod web framework.

Here is a quick example of the new Persistent query language:

selectList [ PersonFirstName ==. "Simon",

PersonLastName ==. "Jones"] []
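The reason such a query is type-safe can be shown with a small in-memory sketch. This is not Persistent's implementation: Persistent generates the field constructors from a schema definition and runs queries against a database backend, whereas the sketch below filters an ordinary list. The Person record, the Filter type and selectFrom are hypothetical; only the ==. operator name is borrowed from the example above.

```haskell
{-# LANGUAGE ExistentialQuantification #-}

data Person = Person
  { personFirstName :: String
  , personLastName  :: String
  , personAge       :: Int
  } deriving (Show, Eq)

-- A filter pairs a field accessor with the value the field must equal.
-- The field's type a is hidden, but accessor and value must agree on it.
data Filter record = forall a. Eq a => Filter (record -> a) a

infix 4 ==.
(==.) :: Eq a => (record -> a) -> a -> Filter record
(==.) = Filter

matches :: record -> Filter record -> Bool
matches r (Filter field expected) = field r == expected

-- Keep the rows that satisfy every filter in the list.
selectFrom :: [record] -> [Filter record] -> [record]
selectFrom rows filters = filter (\r -> all (matches r) filters) rows

-- Rejected by the type checker, because personAge is an Int field:
-- bad = personAge ==. "forty"
```

A filter list such as [personFirstName ==. "Simon", personLastName ==. "Jones"] can then be applied to any list of Person values, and a comparison against a value of the wrong type never reaches run time.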

Future plans

There are 3 main directions for Persistent:

- Improvements that work across all Persistent backends (example: better application-level joins)

- Better database-specific integration (example: better SQL joins)

- Adding more database backends

Most of Persistent development occurs within the Yesod (→5.2.6) community.

However, there is nothing specific to Yesod about it.

You can have a type-safe, productive way to store data,

even on a project that has nothing to do with web development.

Further reading

http://yesodweb.com/book/persistent

7.5 Generic and Type-Level Programming

Unbound is a domain-specific language and library for working with

binding structure. Implemented on top of the RepLib generic

programming framework, it automatically provides operations such as

alpha equivalence, capture-avoiding substitution, and free variable

calculation for user-defined data types, requiring only a tiny bit of

boilerplate on the part of the user. It features a simple yet rich

combinator language for binding specifications, including support for

pattern binding, type annotations, recursive binding, nested binding,

and multiple atom types.

Since the last HCAR, a new version of Unbound has been released,

adding support for several set-like binding strategies (where the

order of bound variables does not matter) and for GADTs which do

not use existential quantification.

Further reading

A library of flexible newtype wrappers which simplify the process of

selecting appropriate typeclass instances,

which is particularly useful for composed types.

Version 0.1.0 has been released on Hackage,

providing support for a more comprehensive range of typeclasses

when wrapping simple values,

and some documentation.

Work is still ongoing to flesh out the typeclass instances available

and improve the documentation.

7.5.3 Generic Programming at Utrecht University

One of the research themes investigated within the

Software Technology Center in the

Department of Information and Computing Sciences at

Utrecht University is generic programming. Over the

last 15 years, we have played a central role in the development of generic

programming techniques, languages, and libraries.

Currently we maintain a number of

generic programming libraries and applications. We report most of them in

this entry; emgm was reported on before (http://haskell.org/communities/05-2009/html/report.html#sect5.9.3), and

our

generic deriving mechanism

has its own entry (→6.5.1).

- multirec

This library represents datatypes uniformly and grants

access to sums (the choice between constructors), products (the sequence of

constructor arguments), and recursive positions. Families of mutually recursive

datatypes are supported. Functions such as map, fold,

show, and equality are provided as examples within the library. Using

the library functions on your own families of datatypes requires some

boilerplate code in order to instantiate the framework, but is facilitated by

the fact that multirec contains Template Haskell code that generates

these instantiations automatically.

The multirec library can also be used for type-indexed datatypes. As a

demonstration, the zipper library is

available on Hackage. With

this datatype-generic zipper, you can navigate values of several types.

The latest version

available on Hackage

includes limited support for datatype compositions; we are

still planning to extend the library with support for parameterized datatypes.

- regular

While multirec focuses on support for mutually recursive regular

datatypes, regular supports only single regular datatypes. The approach

used is similar to that of multirec, namely using type families to

encode the pattern functor of the datatype to represent generically. There have

been no major releases of the

regular or

regular-extras packages on Hackage since the last report. The current

versions provide a number of typical generic functions, but also some less

well-known but useful functions: deep seq, QuickCheck’s

arbitrary and coarbitrary, and binary’s get

and put.

- instant-generics

Using type families and type classes in a way similar to multirec and

regular, instant-generics is yet another approach to generic

programming, supporting a large variety of datatypes and allowing the definition

of type-indexed datatypes. It was first described by

Chakravarty et al.,

and forms the basis of one of our rewriting libraries. It is

available on Hackage.

- syb

Scrap Your Boilerplate (syb) has been supported by GHC since the 6.0

release. This library is based on combinators and a few primitives for type-safe

casting and processing constructor applications. It was

originally developed by Ralf Lämmel and Simon Peyton Jones. Since then,

many people have contributed with research relating to syb or its

applications.

Since syb has been separated from the base package, it can now

be updated independently of GHC. We have recently released

version 0.3 on Hackage, which

has some minor extensions and fixes.

- Annotations

We presented two applications of generic annotations at the Workshop

on Generic Programming 2010:

selections and

storage. In the

former we use annotations at every recursive position of a datatype to allow for

inserting position information automatically. This allows for informative

parsing error messages without the need for explicitly changing the datatype

to contain position information. In the latter we use the annotations as

pointers to locations in the heap, allowing for transparent and efficient

data structure persistency on disk.

- Rewriting

We also maintain two libraries for generic rewriting: a

simple, earlier

library based on regular, and the

guarded

rewriting library, based on instant-generics. The former allows for

rewriting only on regular datatypes, while the latter supports more datatypes

and also rewriting rules with preconditions.
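The notion of a pattern functor, mentioned above for regular and multirec, can be illustrated with a hand-written example. The libraries derive such representations through type families and build them from generic sum and product types; the sketch below instead writes one pattern functor by hand, so Fix, ExprF, cata and eval are illustrative names rather than library API.

```haskell
-- Fixed point of a functor: ties the recursive knot.
newtype Fix f = In (f (Fix f))

-- Pattern functor of a small expression type.
-- The parameter r marks the recursive positions.
data ExprF r
  = Lit Int
  | Add r r
  | Mul r r

instance Functor ExprF where
  fmap _ (Lit n)   = Lit n
  fmap f (Add a b) = Add (f a) (f b)
  fmap f (Mul a b) = Mul (f a) (f b)

type Expr = Fix ExprF

-- Generic fold, written once and usable with every pattern functor.
cata :: Functor f => (f a -> a) -> Fix f -> a
cata alg (In x) = alg (fmap (cata alg) x)

-- An evaluator only has to say what to do at a single layer.
eval :: Expr -> Int
eval = cata alg
  where
    alg (Lit n)   = n
    alg (Add a b) = a + b
    alg (Mul a b) = a * b
```

Because the recursion lives entirely in cata, other traversals such as pretty printing or size computation only need a different one-layer function, which is the kind of reuse the generic programming libraries provide for arbitrary regular datatypes.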

We also continue to look at benchmarking and improving the performance of

different libraries for generic programming (→6.4.2).

Further reading

http://www.cs.uu.nl/wiki/GenericProgramming

7.6 User Interfaces

Gtk2Hs is a set of Haskell bindings to many of the libraries included

in the Gtk+/Gnome platform. Gtk+ is an extensive and mature

multi-platform toolkit for creating graphical user interfaces.

GUIs written using Gtk2Hs use themes to resemble the native look on

Windows. Gtk is the toolkit used by Gnome, one of the two major GUI toolkits

on Linux. On Mac OS programs written using Gtk2Hs are run by Apple’s

X11 server but may also be linked against a native Aqua implementation

of Gtk.

Gtk2Hs features:

- Automatic memory management (unlike some other C/C++ GUI

libraries, Gtk+ provides proper support for garbage-collected languages)

- Unicode support

- High quality vector graphics using Cairo

- Extensive reference documentation

- An implementation of the “Haskell School of Expression” graphics

API

- Bindings to many other libraries that build on Gtk: gio, GConf,

GtkSourceView 2.0, glade, gstreamer, vte, webkit

In a heroic effort, Duncan Coutts has adjusted Gtk2Hs and its build system to

run with GHC 7.X compilers. A release 0.12.1 was the result of this effort

which, however, was only announced on the Gtk2Hs website. Since then a few

important bugs have been fixed, amongst them one relating to slow Cairo

drawing. These bug fixes are now in the current 0.12.2 release.

Further reading

7.7 Graphics

Assimp is a set of bindings to the Assimp Open Asset Import Library. This library can import many different types of 3D models for use in graphics. The full list of formats is available at the project website (linked below) and at the git repo for the project. Assimp is being developed alongside the Cologne ray tracer (→8.4.5) but could be useful in any 3D graphics project.

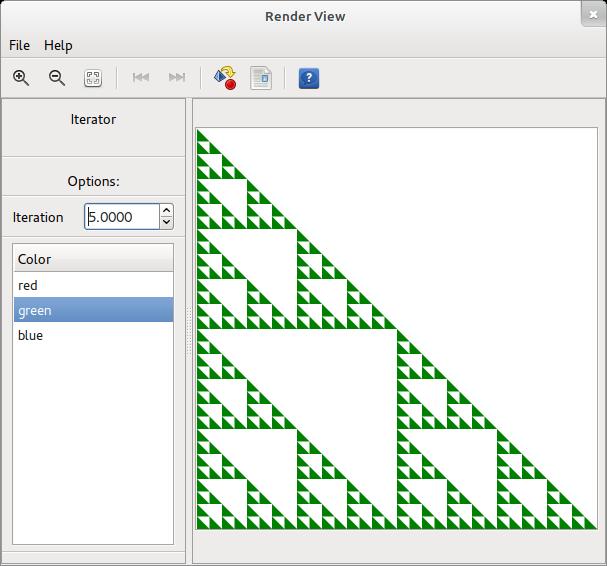

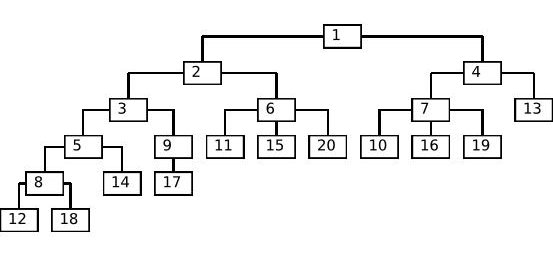

Further reading

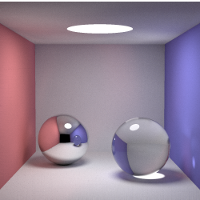

Craftwerk is a 2D vector graphic library. The motivation was to have a graphic library that is able to generate output which can be embedded into LaTeX as well as support for rendering with Cairo. Thus the library separates the graphic’s data structure from any context dependency and the aim is to support various drivers. Currently a driver for output with the TikZ package (http://sourceforge.net/projects/pgf/) for LaTeX is available. Using the additional craftwerk-cairo and craftwerk-gtk packages, direct rendering into PDF files or GTK widgets is possible. The craftwerk-gtk package also provides functions to generate simple user interfaces for interactive graphics.

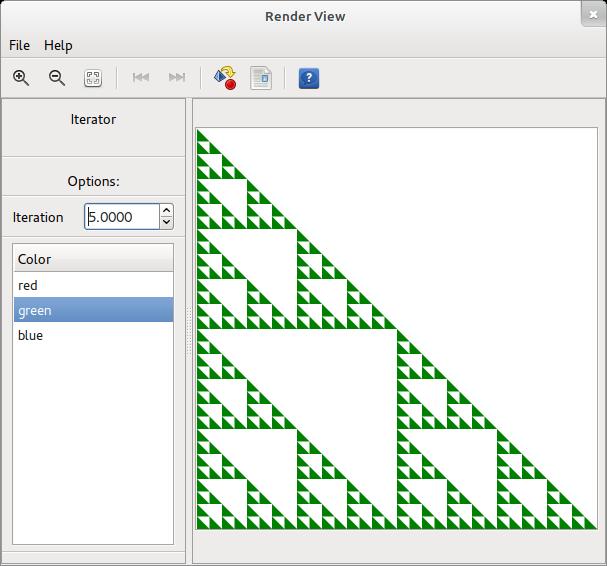

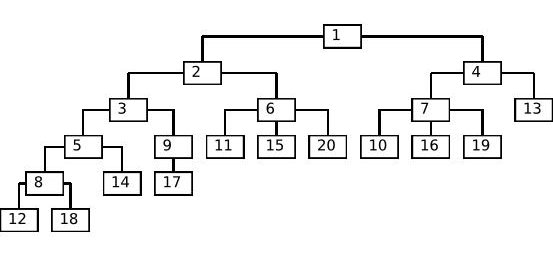

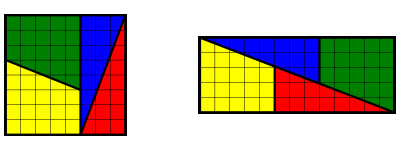

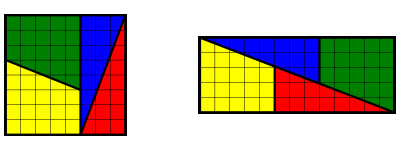

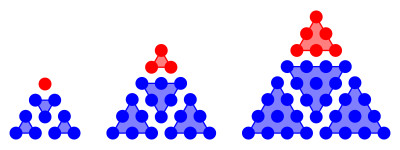

Above, two examples are shown.

In the first, you can see a screenshot of the GTK interface for interactive graphics showing a Sierpinski triangle, and the second is a simple example of a tree rendered with the Cairo driver. Graphics or figures can be created in a hierarchical fashion including the application of styles and decorations to subnodes. The current functionality includes almost the complete Cairo function set extended by arrow tips and a few primitives. The same function set is supported for TikZ output, and graphics generated with the two drivers match closely. Immediate development tasks are:

- Improvement of rendering speed in the Cairo driver.

- Better and unified text rendering capabilities.

- Refactoring of the UI module towards better usability.

Besides additional functionality, a long term goal is to support other drivers like Wumpus, Haha (ASCII rendering) or OpenGL. Craftwerk could also serve as an intermediate layer for libraries like plot or chart to enable LaTeX export. At the moment the library is still at a preliminary stage and the next step is a consolidation of a basic feature set. Any contributions or ideas are welcome and the latest code as well as experiments with other drivers are available on GitHub.

Further reading

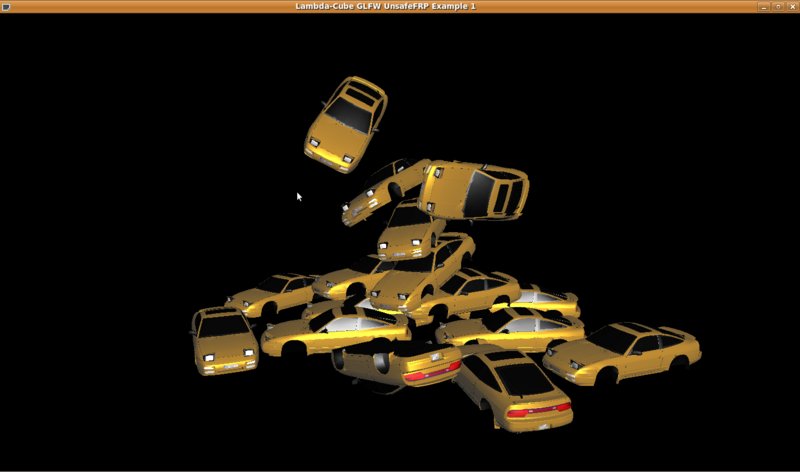

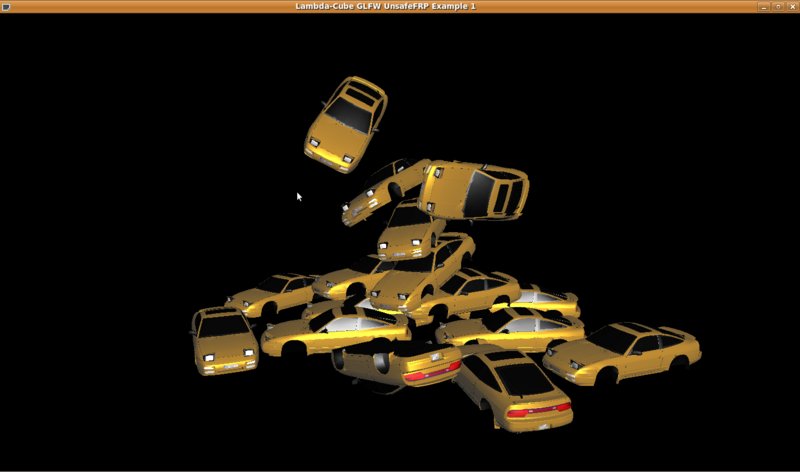

LambdaCube is a 3D rendering engine entirely written in Haskell.

The main goal of this project is to provide a modern and feature rich

graphical backend for various Haskell projects, and in the long run it

is intended to be a practical solution even for serious purposes. The

engine uses Ogre3D’s (http://www.ogre3d.org) mesh and material

file format, therefore it should be easy to find or create new content

for it. The code sits between the low-level C API (raw OpenGL, DirectX

or anything equivalent; the engine core is graphics backend agnostic)

and the application, and gives the user a high-level API to work with.

The most important features are the following:

- loading and displaying Ogre3D models

- resource management

- modular architecture

If your system has OpenGL and GLUT installed, the

lambdacube-examples package should work out of the box. The

engine is also integrated with the Bullet physics engine (→8.8.7), and you can

find a running example in the lambdacube-bullet package.

Since the last update, the current version of the library saw only a

few minor updates: fast serialisation (using its own binary format)

and various bugfixes. Behind the scenes, we are working on a

completely new version, which will provide a graphics-oriented

data-flow DSL (in the same spirit as GPipe). The goal is to allow the

description of complex effects without mutable variables.

In the meantime, we also built a fully functional Stunts example,

which is available as a separate package.

Everyone is invited to contribute! You can help the project by playing

around with the code, thinking about API design, finding bugs (well,

there are a lot of them anyway), creating more content to display, and

generally stress testing the library as much as possible by using it

in your own projects.

Further reading

The diagrams library provides an embedded domain-specific language for

declarative drawing. The overall vision is for diagrams to become a

viable alternative to DSLs like MetaPost or Asymptote, but with the

advantages of being declarative—describing what to draw, not

how to draw it—and embedded—putting the entire power of

Haskell (and Hackage) at the service of diagram creation.

Development on the library has proceeded apace since the last HCAR,

and the 0.4 release now features a comprehensive user manual as well

as support for a large collection of primitive shapes, many different

modes of composition, paths, cubic splines, images, text, arbitrary

monoidal annotations, named subdiagrams, and more.

There is plenty more work to be done; new contributors are

particularly welcome!

Future plans

Plans for the near future include a native SVG backend, improved font

support, arrowheads, and improvements to the handling of named

subdiagrams. Longer-term plans include support for animations, a

custom Gtk application for editing diagrams, and any other awesome

stuff we think of.

Further reading

ChalkBoard is a domain specific language for describing images.

The language is uncompromisingly functional

and encourages the use of modern functional idioms.

The novel contribution of ChalkBoard is that it uses off-the-shelf

graphics cards to speed up rendering of our functional description.

We always intended to use ChalkBoard to animate educational

videos, as well as for processing streaming videos.

ChalkBoard also has an animation language,

based around an applicative functor, Active.

It has been called Functional Reactive Programming,

without the reactive part!
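
As a rough illustration of "FRP without the reactive part", a time-varying
value can be modelled as a function of time and combined applicatively.
The sketch below is a toy model under that assumption: the representation,
the names at and time, and the Circle type are invented for illustration,
and ChalkBoard's actual Active type may well differ.

```haskell
-- Toy model only; not ChalkBoard's actual definition of Active.
newtype Active a = Active { at :: Double -> a }

instance Functor Active where
  fmap f (Active g) = Active (\t -> f (g t))

instance Applicative Active where
  pure x = Active (const x)
  Active f <*> Active g = Active (\t -> f t (g t))

-- | The current time itself, as a time-varying value.
time :: Active Double
time = Active id

-- | Hypothetical shape used only for this example.
data Circle = Circle { radius :: Double, centreX :: Double }
  deriving (Eq, Show)

-- | A circle whose radius and position both depend on time.
-- There is no event handling anywhere: only values indexed by time.
growingCircle :: Active Circle
growingCircle = Circle <$> fmap (* 2) time <*> fmap (+ 1) time
```

Sampling the animation is then just function application, for example
at growingCircle 3 yields a circle with radius 6 and centre 4.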

ChalkBoard has been released on hackage,

but is not actively being developed.

It would be nice to port the code to HTML5,

and we are happy to act as mentors for this effort.

Kevin Matlage graduated in May 2011 with an MS. His MS thesis was about the

design, implementation and applications of ChalkBoard. The thesis was awarded

the departmental Miller award, for best MS of the year.

Congratulations Kevin!

Further reading

http://www.ittc.ku.edu/csdl/fpg/Tools/ChalkBoard

7.8 Text and Markup Languages

Description

HaTeX is an implementation of LaTeX in Haskell, intended as

a helpful tool for generating LaTeX code.

Taken as a whole, it is composed of:

- The LaTeX syntax description.

- A renderer of LaTeX code.

- A set of combinators of LaTeX entities.

- A monadic implementation of combinators.

- Methods for a subset of LaTeX packages.

What is new?

The third version of HaTeX has just been released, and it is a completely

new implementation. Although a lot of code is still valid, the

internal representation of values has changed drastically. LaTeX code

is now represented as an Abstract Syntax Tree (AST), via

the LaTeX datatype.
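
To make the idea of an AST representation concrete, here is a minimal
sketch of a LaTeX-like syntax tree with a renderer. The type TeX, its
constructors, and the functions render and commandsUsed are hypothetical
names chosen for this example; they are not HaTeX's actual API.

```haskell
-- Toy syntax tree; constructor names are illustrative, not HaTeX's own.
data TeX
  = Raw String          -- plain text, emitted verbatim
  | Comm String [TeX]   -- \name{arg1}{arg2}...
  | Env String TeX      -- \begin{name} ... \end{name}
  | Seq [TeX]           -- several fragments in sequence
  deriving (Eq, Show)

-- | Turn the tree into LaTeX source text.
render :: TeX -> String
render (Raw s)          = s
render (Comm name args) =
  "\\" ++ name ++ concatMap (\a -> "{" ++ render a ++ "}") args
render (Env name body)  =
  "\\begin{" ++ name ++ "}" ++ render body ++ "\\end{" ++ name ++ "}"
render (Seq parts)      = concatMap render parts

-- | Because the document is a value, it can be inspected before
-- rendering, e.g. to list every command it uses.
commandsUsed :: TeX -> [String]
commandsUsed (Raw _)          = []
commandsUsed (Comm name args) = name : concatMap commandsUsed args
commandsUsed (Env _ body)     = commandsUsed body
commandsUsed (Seq parts)      = concatMap commandsUsed parts

example :: TeX
example = Env "document" (Seq [Comm "textbf" [Raw "Hello"], Raw ", world"])
```

The point of the design is that rendering is only one of several possible
traversals of the same tree.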

Future plans

A near-term plan is to analyze the final AST to find

possible errors in your LaTeX code and to warn you about them.

The code is already being adapted for this feature.

Other upcoming features are drawing trees from a tree data structure,

extending the AMS-LaTeX functionality

(currently too limited), and implementing a LaTeX code parser.

Contact

If you are in any way interested in this project, please

feel free to share any opinion or idea,

or to ask any question you have. A good place

to get in touch and stay tuned is the HaTeX mailing list:

hatex <at> projects.haskell.org

Of course, you can always mail the maintainer.

Further reading

7.8.2 Haskell XML Toolbox

Description

The Haskell XML Toolbox (HXT) is a collection of tools for processing XML with

Haskell. It is itself written purely in Haskell 98. The core component of the

Haskell XML Toolbox is a validating XML parser that almost fully

supports the Extensible Markup Language (XML) 1.0 (Second Edition).

There is a validator based on DTDs and a new more powerful one for

Relax NG schemas.

The Haskell XML Toolbox is based on the ideas of HaXml and HXML,

but introduces a more general approach for processing XML with Haskell.

The processing model is based on arrows. The arrow interface is more flexible

than the filter approach taken in the earlier HXT versions and in HaXml.

It is also safer; type checking of combinators becomes possible with the arrow

approach.
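
The idea of filters as list-returning functions wrapped in an arrow type
can be sketched with nothing but the base library. Everything below (the
Tree type, ListArrow, children, hasName, name) is a simplified stand-in
written for this example and is not HXT's actual API; it only assumes that
a filter maps one node to zero or more results.

```haskell
import Prelude hiding (id, (.))
import Control.Category (Category (..), (>>>))
import Control.Arrow (Arrow (..))

-- Toy document tree: an element name and its children.
data Tree = Node String [Tree] deriving (Eq, Show)

-- A filter from a to b that may produce any number of results.
newtype ListArrow a b = ListArrow { runListArrow :: a -> [b] }

instance Category ListArrow where
  id = ListArrow (\x -> [x])
  ListArrow g . ListArrow f = ListArrow (\x -> concatMap g (f x))

instance Arrow ListArrow where
  arr f = ListArrow (\x -> [f x])
  first (ListArrow f) = ListArrow (\(x, y) -> [(x', y) | x' <- f x])

-- | All direct children of a node.
children :: ListArrow Tree Tree
children = ListArrow (\(Node _ cs) -> cs)

-- | Keep a node only if it has the given element name.
hasName :: String -> ListArrow Tree Tree
hasName n = ListArrow (\t@(Node m _) -> [t | m == n])

-- | Extract the element name; note the result type is String, not Tree.
name :: ListArrow Tree String
name = arr (\(Node m _) -> m)

doc :: Tree
doc = Node "html" [Node "body" [Node "p" [], Node "div" [], Node "p" []]]

-- | Select the p elements two levels below the root.
paragraphs :: [Tree]
paragraphs = runListArrow (children >>> children >>> hasName "p") doc
```

Because input and output types are tracked separately, a composition such
as name >>> children is rejected by the type checker, whereas a filter
type with a single fixed node type cannot express that distinction.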

HXT is partitioned into a collection of smaller packages: The core

package is

hxt. It contains a validating XML parser, an HTML parser,

filters for manipulating XML/HTML, and so-called XML picklers for

converting XML to and from native Haskell data.

Basic functionality for character handling and decoding is

separated into the packages hxt-charproperties and

hxt-unicode. These packages may be generally useful even for non-XML projects.

HTTP access can be done with the help of the packages

hxt-http for native Haskell HTTP access and hxt-curl via a

libcurl binding. An alternative lazy, non-validating parser for XML and HTML can be

found in hxt-tagsoup.

The XPath interpreter is in package hxt-xpath, the XSLT part in

hxt-xslt

and the Relax NG validator in hxt-relaxng. For checking the XML

Schema Datatype definitions, also used with Relax NG, there is a

separate and generally useful regex package hxt-regex-xmlschema.

The old filter-based HXT approach, hxt-filter, is still

available,

but currently only with hxt-8. It has not (yet) been updated to the

hxt-9 major version.

Features

- Validating XML parser

- Very liberal HTML parser

- Lightweight lazy parser for XML/HTML based on Tagsoup (http://www.haskell.org/communities/05-2010/html/report.html#sect5.11.3)

- Binding to the expat parser via hexpat package

- Easy de-/serialization between native Haskell data and XML by pickler and pickler combinators

- XPath support

- Full Unicode support

- Support for XML namespaces

- Cabal package support for GHC

- HTTP access via Haskell bindings to libcurl and via Haskell HTTP package

- Tested with W3C XML validation suite

- Example programs

- Relax NG schema validator

- Lightweight regex library with full support of Unicode and XML Schema Datatype regular expression syntax

- An HXT Cookbook for using the toolbox and the arrow interface

- Basic XSLT support

- GitHub repository with current development versions of all packages (http://github.com/UweSchmidt/hxt)

Current Work

In October 2011 a project was started as part of a master's thesis

to build an XML validator based on XML Schema. Experience with developing

the Relax NG validator has shown that such a project can be done within such a limited

time. The XML picklers can be used to easily parse XML Schema and transform it

into an AST. So the core work consists of developing an appropriate abstract

syntax and normalizing, checking, and transforming this AST before validating XML documents.

Within this project, the XML data type library already in use with Relax NG is planned

to be completed. In the current version, time and date data types are not yet supported.

We expect to finish the work in March 2012.

Further reading

The Haskell XML Toolbox Web page

(http://www.fh-wedel.de/~si/HXmlToolbox/index.html)

includes links to downloads, documentation, and further information.

A getting started tutorial about HXT is available

in the Haskell Wiki (http://www.haskell.org/haskellwiki/HXT).

The conversion between XML and native Haskell data types is

described in another Wiki page

(http://www.haskell.org/haskellwiki/HXT/Conversion_of_Haskell_data_from/to_XML).

8 Applications and Projects

8.1 Education

8.1.1 Holmes, Plagiarism Detection for Haskell

Holmes is a tool for detecting plagiarism in Haskell programs.

A prototype implementation was made by Brian Vermeer under supervision

of Jurriaan Hage, in order to determine which heuristics work well.

This implementation could deal only with Helium programs.

We found that a token-stream-based comparison and Moss-style fingerprinting

work well enough, if you remove template code and dead code before

the comparison. Since we compute the control flow graphs anyway,

we decided to also keep some form of similarity checking of control-flow

graphs (particularly, to be able to deal with certain refactorings).
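
For readers unfamiliar with Moss-style fingerprinting, the following is a
minimal sketch of the general technique on a token stream: hash every run
of k tokens, keep the minimum hash of each window of w hashes, and compare
the resulting fingerprint sets. It is not Holmes's implementation; the
hash function, the parameters, the Jaccard similarity measure, and all
function names are assumptions made for this example.

```haskell
import Data.Char (ord)
import Data.List (foldl', intersect, nub, tails, union)

type Token = String

-- | All contiguous runs of exactly k elements.
kgrams :: Int -> [a] -> [[a]]
kgrams k xs = [g | t <- tails xs, let g = take k t, length g == k]

-- | A simple polynomial hash of a run of tokens (illustrative only).
hashGram :: [Token] -> Int
hashGram = foldl' step 7 . concatMap (++ " ")
  where
    step h c = (h * 31 + ord c) `mod` 1000003

-- | Winnowing: keep the smallest hash of every window of w hashes.
winnow :: Int -> [Int] -> [Int]
winnow w hs
  | null hs       = []
  | length hs < w = [minimum hs]
  | otherwise     = nub (map minimum (kgrams w hs))

-- | Fingerprints of a token stream for k-gram size k and window size w.
fingerprints :: Int -> Int -> [Token] -> [Int]
fingerprints k w = winnow w . map hashGram . kgrams k

-- | Jaccard similarity of two fingerprint sets, between 0 and 1.
similarity :: Int -> Int -> [Token] -> [Token] -> Double
similarity k w a b
  | null both = 0
  | otherwise = fromIntegral (length (fa `intersect` fb))
              / fromIntegral (length both)
  where
    fa   = fingerprints k w a
    fb   = fingerprints k w b
    both = fa `union` fb
```

In such a scheme, template code and dead code would be removed from the
token streams before fingerprints are computed, so that shared boilerplate
does not inflate the score.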

In November 2010, Gerben Verburg started to reimplement Holmes keeping only

the heuristics we figured were useful, basing that implementation

on haskell-src-exts.

A large-scale empirical validation has been carried out, and the results

are good. We have found quite a bit of plagiarism in a collection of about 2200

submissions, including a substantial number in which refactoring was

used to mask the plagiarism. A paper has been written, but is currently

unpublished.

The tool will not be made available

through Hackage, but will be available free of charge to lecturers on request.

Please contact J.Hage@uu.nl for more information.

We have also implemented a graph-based heuristic that computes

near graph-isomorphism and seems to work really well in comparing

control-flow graphs in an inexact fashion. However, it does not scale

well enough computationally to be included in the comparison, and is

not mature enough to deal with certain easy refactorings.

Future work includes a Hare-against-Holmes bash in which Hare users will do

their utmost to fool Holmes.

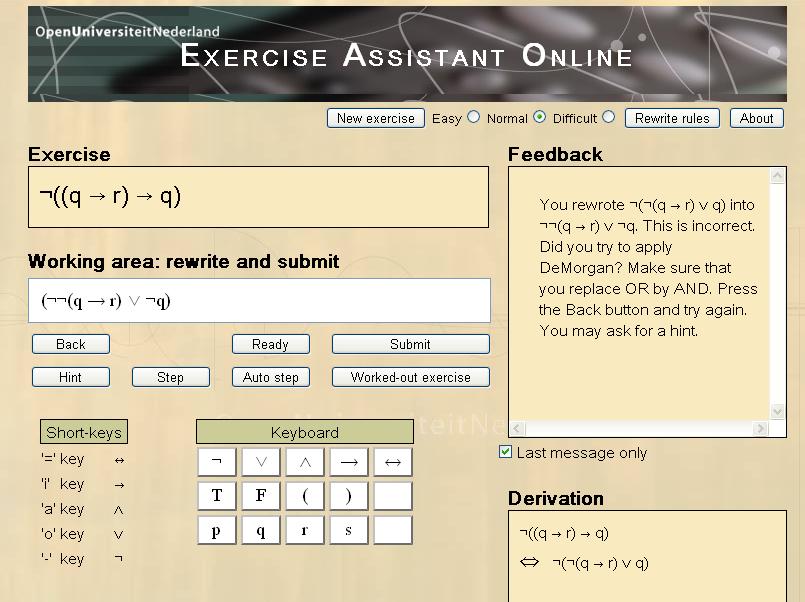

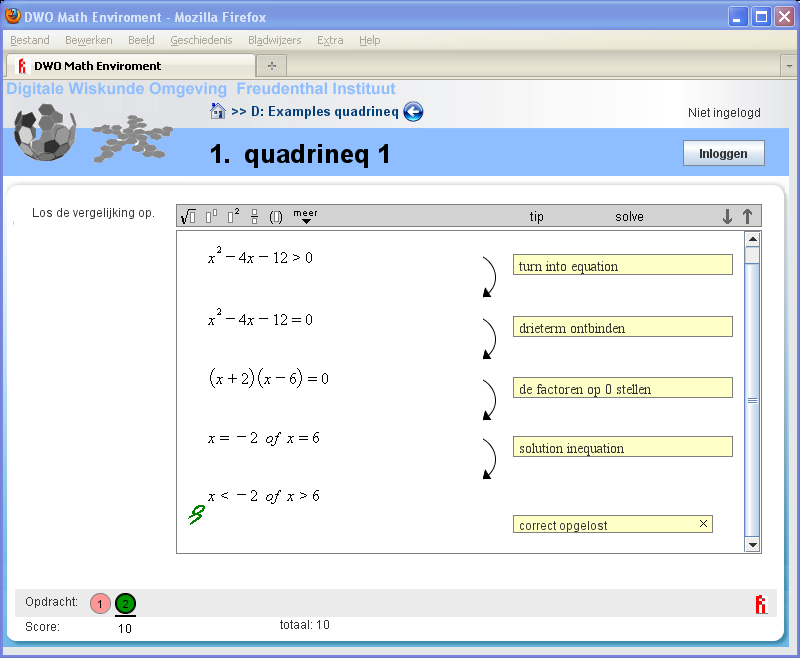

8.1.2 Interactive Domain Reasoners

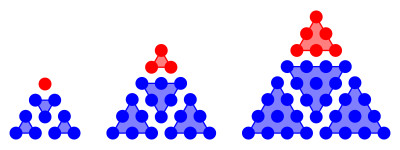

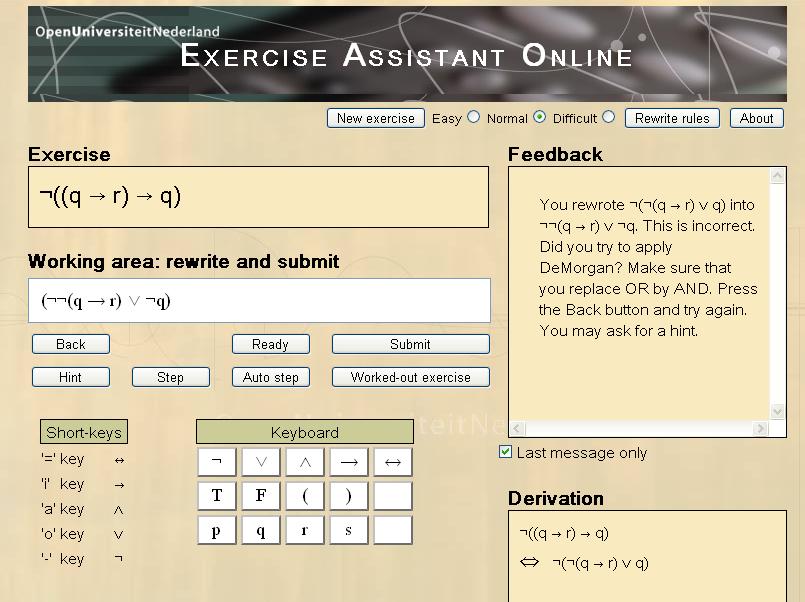

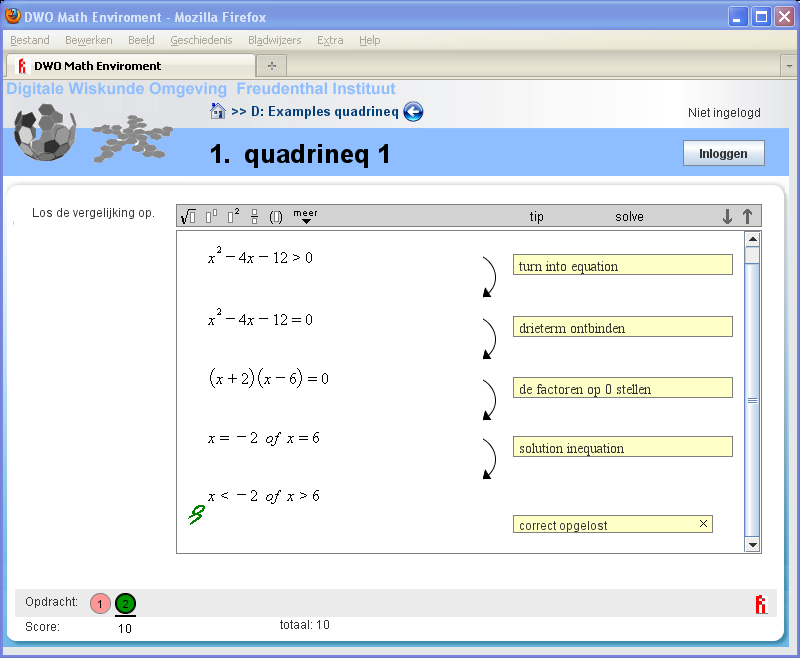

The Ideas project (at Open Universiteit Nederland and Universiteit

Utrecht) aims at developing interactive domain reasoners on various topics.

These reasoners assist students in solving exercises incrementally by checking

intermediate steps, providing feedback on how to continue, and detecting

common mistakes. The reasoners are based on a strategy language, from which

all feedback is derived automatically. The calculation of feedback is offered

as a set of web services, enabling external (mathematical) learning environments

to use our work. We currently have a binding with the Digital Mathematics

Environment (DWO) of the Freudenthal Institute, the ActiveMath learning

system (DFKI and Saarland University), and our own online exercise assistant

that supports rewriting logical expressions into disjunctive normal form.

We are adding support for more exercise types, mainly at the level of high

school mathematics. For example, our tool now covers simplifying expressions

with exponents, rational equations, and derivatives. We have investigated how

users can interleave solving different parts of exercises. Recently, we have

focused on designing a

functional programming tutor. This tool lets you practice

introductory functional programming exercises. We are investigating how we can